Doubao API’s

web_searchupgrades an LLM from static question answering to a verifiable web retrieval agent, helping solve stale knowledge, opaque sourcing, and hallucination issues. The core pipeline includes intent detection, information retrieval, knowledge fusion, and fact verification. Keywords: Doubao API,web_search, Agent.

Technical Specifications Snapshot

| Parameter | Description |

|---|---|

| Language | Python |

| Protocol | HTTPS / REST API |

| Model | doubao-seed-2-0-pro-260215 |

| Tool Capability | web_search |

| SDK / Dependencies | requests, volcenginesdkarkruntime |

| Source Format | Reworked from a CSDN article |

| Star Count | Not provided in the original content |

web_search Gives LLMs Verifiable Information Retrieval Capabilities

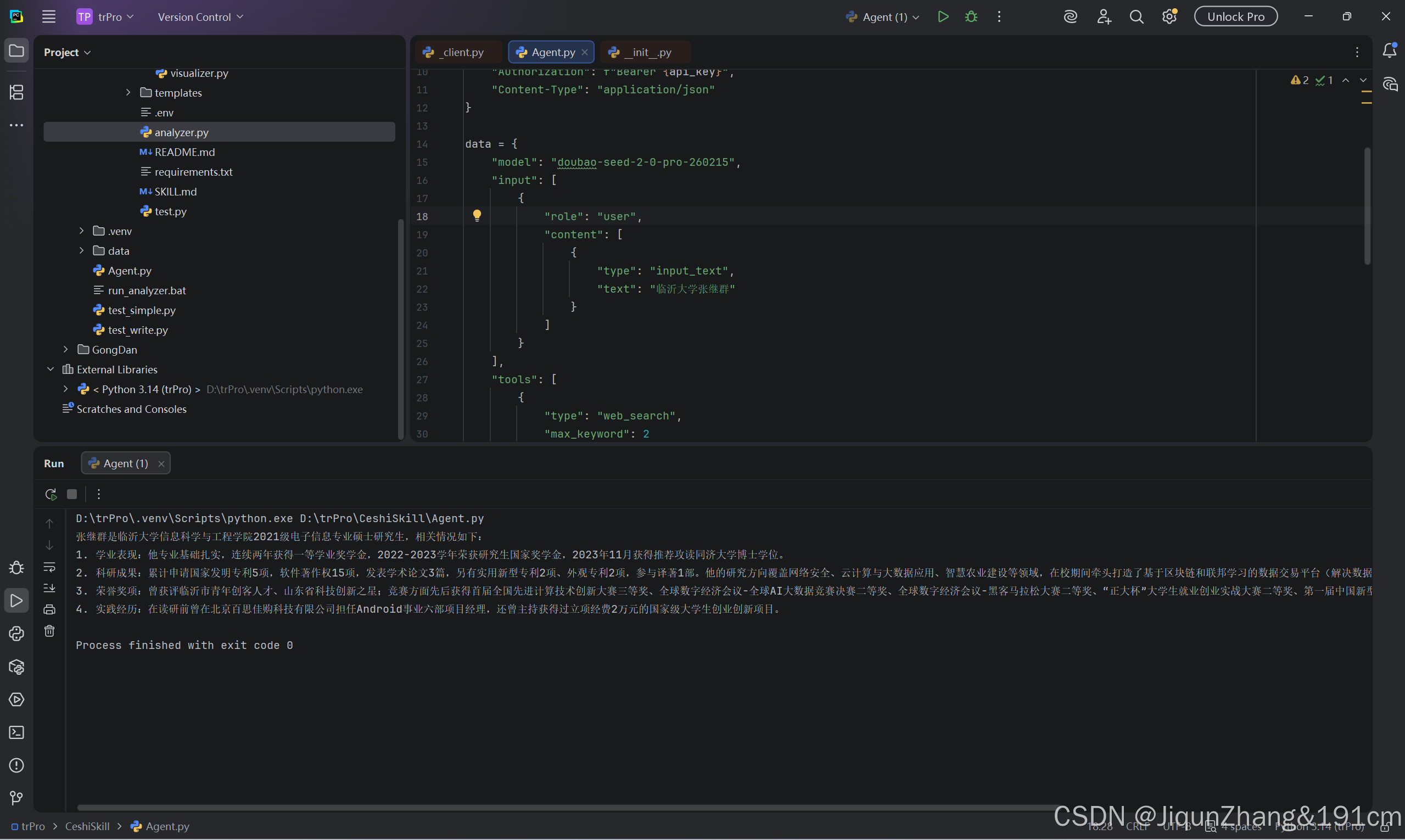

The core point of the original article is straightforward: when you call the Doubao API, whether you enable web_search directly determines the factual density and real-time relevance of the model’s answer.

Without it, the model can only answer from parametric memory learned during training. For time-sensitive questions, open-domain topics, or long-tail factual queries, this often leads to answers that sound plausible but are actually inaccurate.

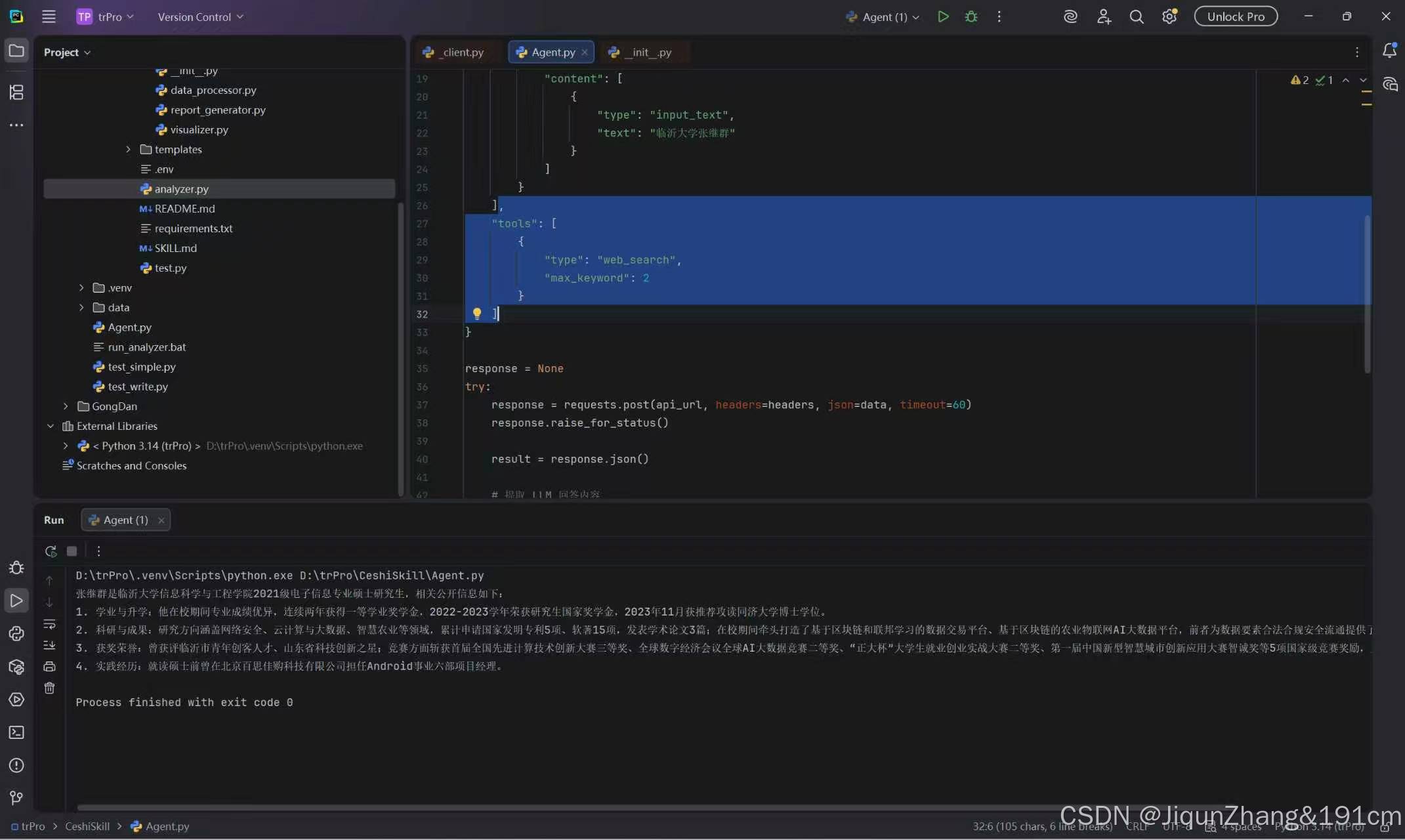

Results with web_search Are Closer to True Retrieval-Augmented Generation

AI Visual Insight: This image compares answer quality after enabling web retrieval. In practice, answers typically include clearer source signals, fresher facts, and summaries that align more closely with the user’s question. This shows that the model has moved from pure generation to retrieval-augmented generation.

AI Visual Insight: This image compares answer quality after enabling web retrieval. In practice, answers typically include clearer source signals, fresher facts, and summaries that align more closely with the user’s question. This shows that the model has moved from pure generation to retrieval-augmented generation.

The emphasis is not merely that the system can “search the web,” but that the model can reorganize answers based on external information. That step determines whether the Agent is truly connected to the open internet.

Without web_search, Closed-World Generation Becomes More Likely

AI Visual Insight: This image shows the typical response pattern when web retrieval is not enabled. Technically, answers often have opaque sourcing, generalized details, and missing time-sensitive information, indicating that the model is mainly continuing text from internal parameters rather than performing real-world information retrieval.

AI Visual Insight: This image shows the typical response pattern when web retrieval is not enabled. Technically, answers often have opaque sourcing, generalized details, and missing time-sensitive information, indicating that the model is mainly continuing text from internal parameters rather than performing real-world information retrieval.

This is also why many developers overestimate model capability: fluent language does not guarantee trustworthy information. The real value of web_search is that it upgrades a model from “able to say something” to “able to check before answering.”

The Core web_search Workflow Is More Than Simple Web Search

The original article outlines a classic Agent loop: intent detection, information retrieval, knowledge fusion, and fact verification. This pipeline is worth abstracting directly into an engineering implementation model.

The First Step Is Intent Detection and Query Rewriting

User questions are usually not suitable to send directly to a search engine. The model should first identify the question type, then convert natural language into a keyword combination that works better for retrieval.

query = "How does the Doubao API implement web retrieval?"

# Rewrite the user question into a search-friendly form

search_query = f"Doubao API web_search usage {query}" # Convert the original question into a retrieval-friendly expression

print(search_query)This snippet shows the minimum implementation for rewriting a natural language question into a retrieval query.

The Second Step Requires Source Filtering and Key Evidence Extraction

Effective web retrieval does not mean fetching an entire HTML page and feeding it back to the model. It means filtering for trustworthy sources first, then extracting evidence snippets that are highly relevant to the question.

sources = [

{"title": "Official Documentation", "domain": "volcengine.com", "snippet": "Supports web_search tool invocation"},

{"title": "Technical Blog", "domain": "csdn.net", "snippet": "Introduces the integration workflow and sample code"}

]

# Keep only highly trusted domains

trusted = [s for s in sources if s["domain"] in ["volcengine.com", "csdn.net"]] # Filter out low-trust sources

for item in trusted:

print(item["title"], item["snippet"]) # Extract title and summary evidenceThis code illustrates the basic idea behind source filtering and evidence extraction.

The Third Step Is to Fuse Retrieval Results into a Readable Answer

Information from multiple sources often contains repetition, differences in wording, and redundant phrasing. The model should not simply copy content. It should merge evidence, resolve conflicts, and produce a structured conclusion.

The Fourth Step Is Fact Verification to Suppress Hallucinations

Even after retrieval, the model can still mismatch information during summarization. A robust Agent aligns the generated answer with evidence snippets again to verify that the conclusion is traceable.

answer = "web_search allows the Doubao model to search the internet directly and answer based on the results."

evidence = "Supports web_search tool invocation"

# Perform a minimal consistency check

is_grounded = "web_search" in answer and "web_search" in evidence # Verify whether the answer is covered by the evidence

print("Fact verification passed" if is_grounded else "Secondary verification required")This snippet demonstrates the most basic answer-to-evidence alignment logic.

A Single Configuration Line Can Trigger a Capability Leap, but System Design Sets the Ceiling

The original article highlights “a capability leap enabled by a single configuration line,” which accurately captures the value of tool calling. For developers, the integration barrier is low, but the upper bound of system quality depends on prompting, source strategy, and verification design.

If you only enable web_search without query rewriting, source filtering, and result validation, the system can still output noise. The difference is that the noise now comes from the internet rather than from parametric memory.

The Python Example Serves as a Minimal Runnable Skeleton

Based on the original snippets, we can organize the call flow into a cleaner example. In production, do not hardcode secrets in code.

import os

import requests

api_key = os.getenv("ARK_API_KEY") # Read the key from an environment variable

api_url = "https://ark.cn-beijing.volces.com/api/v3/responses"

payload = {

"model": "doubao-seed-2-0-pro-260215",

"input": [

{

"role": "user",

"content": [

{

"type": "input_text",

"text": "Please search for the latest information and explain how Doubao API web_search works"

}

]

}

],

"tools": [

{"type": "web_search"} # Enable the web retrieval tool

]

}

headers = {

"Authorization": f"Bearer {api_key}", # Authentication header

"Content-Type": "application/json"

}

resp = requests.post(api_url, headers=headers, json=payload, timeout=30)

print(resp.json()) # Output the model responseThis code provides a minimal Python example for integrating Doubao web_search.

In Production, Focus on Three Engineering Priorities

First, use environment variables to manage secrets. Second, apply a domain allowlist to retrieval results. Third, archive answers together with supporting evidence so you can trace factual sources later.

With this design, web_search becomes more than a network access switch. It becomes the infrastructure that gives an Agent external awareness, traceable reasoning, and more trustworthy answers.

FAQ

1. Why is the model more likely to hallucinate when web_search is disabled?

Because the model can only generate the most probable text from its training data distribution. It cannot access live web pages or verify the latest facts, so it is more likely to hallucinate on open-domain questions.

2. Is the value of web_search limited to adding fresh information?

No. It also enables source filtering, evidence extraction, knowledge fusion, and fact verification, which upgrades responses from pure generation to verifiable generation.

3. Does enabling web_search guarantee accuracy?

No. If you lack query rewriting, trusted source filtering, and answer verification, the model can still misread web pages or combine information incorrectly. A complete closed loop matters more than simply turning on the tool.

Core Summary: This article reconstructs how to use Doubao API web_search and explains why enabling web retrieval upgrades an LLM from “answering from parametric memory” to a “retrieve-fuse-verify” loop. It covers intent detection, source filtering, knowledge fusion, fact verification, and a Python integration example.