This article distills the minimum viable path from a monolith to microservices: first decide whether the system should be split at all, then decompose it along business boundaries with high cohesion and low coupling, and finally implement service invocation and discovery with RestTemplate and Nacos. This approach addresses tight module dependencies, poor scalability, and deployment changes that impact the entire application. Keywords: microservice decomposition, remote calls, Nacos.

The technical snapshot outlines the stack and runtime choices

| Parameter | Value |
|---|---|
| Language | Java |
| Framework | Spring Boot / Spring Cloud Alibaba |
| Communication Protocol | HTTP/REST |
| Service Governance | Nacos |
| Remote Call Component | RestTemplate |
| Build Mode | Maven aggregation or standalone services |
| Core Dependencies | spring-web, spring-cloud-starter-alibaba-nacos-discovery |

Microservice decomposition is not an upgrade task but a boundary redesign

The key to moving from a monolith to microservices is not splitting code into multiple projects. The real work is redefining business boundaries, deployment boundaries, and invocation boundaries. If you only split the code physically while keeping the logic tightly coupled, you end up with a distributed monolith.

Whether to split a system depends first on business complexity, team collaboration scale, and release frequency. For a small team, a single business line, and a stable iteration cadence, a monolithic architecture is often still the lowest-cost option.

It is safer to start decomposition only when the signals are clear

There are two common paths: adopt microservices from the beginning of the project, or launch quickly with a monolith and split it gradually once the system stabilizes. The former has a higher up-front design cost but supports smoother long-term scaling. The latter enables faster delivery but leads to a higher refactoring cost later.

The right decision criterion is not whether the technology looks advanced. It is whether the system has already started showing these signals: module changes affect each other, deployment windows keep getting longer, partial scaling is difficult, and team collaboration conflicts are becoming obvious.

Typical signals that trigger decomposition:

1. A single release affects the entire site

2. One module failure can bring down the whole application

3. Frequent conflicts during parallel development across the team

4. Some capabilities need to scale independently

This checklist helps you quickly determine whether the system has truly entered the window for microservice transformation.

High cohesion and low coupling remain the only effective decomposition standard

The original article identifies the core principle as low coupling and high cohesion. That remains the foundational rule behind every microservice decomposition strategy. High cohesion means a service should close the loop around a single business capability internally. Low coupling means cross-service dependencies should be minimized and governed by clear contracts.

You can decompose along two dimensions: split vertically by business domain, or split horizontally by shared functional responsibility. The former aligns better with domain models. The latter is often easier to implement initially. However, if you over-split by technical layers, you can easily end up with a shared database and strong cross-service dependencies.

Services should be split by business domain first

In an e-commerce scenario, products, carts, orders, and payments naturally belong to different business capabilities. A cart service should not directly depend on internal objects from the product module. It should fetch product data through stable interfaces so that each service can remain autonomous.

    // Split services by business domain instead of technical layers such as Controller/Service/DAO
    item-service
    cart-service
    order-service
    pay-service

This example emphasizes that service boundaries should be organized around business capabilities rather than code-layer structure.
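To make the "stable interface" idea concrete, here is a minimal sketch in plain Java. The `ItemClient` interface, the fields of `ItemDTO`, and the `CartPricing` class are hypothetical names chosen for illustration; the source does not define them. The point is only that cart-side code depends on a narrow contract, so the implementation behind it can later become a remote call without touching the cart logic.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical data contract exposed by item-service: only the fields other
// services may see, not the item module's internal entity.
record ItemDTO(long id, String name, int priceInCents) {}

// Hypothetical contract the cart depends on. A production implementation
// would call item-service over the network.
interface ItemClient {
    List<ItemDTO> findByIds(List<Long> ids);
}

// Cart-side logic written purely against the contract.
class CartPricing {
    private final ItemClient itemClient;

    CartPricing(ItemClient itemClient) {
        this.itemClient = itemClient;
    }

    // quantities maps item ID -> quantity in the cart.
    int totalInCents(Map<Long, Integer> quantities) {
        List<ItemDTO> items = itemClient.findByIds(new ArrayList<>(quantities.keySet()));
        int total = 0;
        for (ItemDTO item : items) {
            total += item.priceInCents() * quantities.getOrDefault(item.id(), 0);
        }
        return total;
    }
}

// In-memory stand-in for item-service, useful for tests and local runs.
class InMemoryItemClient implements ItemClient {
    private final Map<Long, ItemDTO> store = new HashMap<>();

    InMemoryItemClient(List<ItemDTO> items) {
        items.forEach(item -> store.put(item.id(), item));
    }

    @Override
    public List<ItemDTO> findByIds(List<Long> ids) {
        List<ItemDTO> found = new ArrayList<>();
        for (Long id : ids) {
            ItemDTO item = store.get(id);
            if (item != null) {
                found.add(item); // unknown IDs are simply skipped
            }
        }
        return found;
    }
}
```

Because `CartPricing` only knows `ItemClient`, swapping `InMemoryItemClient` for an HTTP-backed implementation is a wiring change rather than a change to cart logic.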

Cart-to-item communication must use remote calls in a microservices architecture

In a monolithic application, it is common for the cart module to inject a product service object directly. In microservices, services run in independent processes, so calls must go through the network. The original example uses RestTemplate, which is a typical synchronous HTTP invocation approach.

The first step is to register RestTemplate as a bean in the Spring container so that components inside the service can reuse a single HTTP client.

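    import org.springframework.context.annotation.Bean;
    import org.springframework.context.annotation.Configuration;
    import org.springframework.web.client.RestTemplate;

    @Configuration
    public class RemoteCallConfig {

        @Bean
        public RestTemplate restTemplate() {
            return new RestTemplate(); // Register the remote call client
        }
    }

This code registers RestTemplate in the Spring container so the service can issue HTTP requests through a single shared client.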

RestTemplate can fetch product information in batches

The cart service needs to query product details based on a list of product IDs. Here, exchange preserves generic type information and avoids type loss during List deserialization.

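    // Query product information in batches by product ID.
    // itemIds is assumed to be a collection of IDs (for example Set<Long>);
    // mapping through String.valueOf works for any element type.
    String ids = itemIds.stream()
            .map(String::valueOf)
            .collect(Collectors.joining(",")); // Join IDs into "1,2,3"

    ResponseEntity<List<ItemDTO>> response = restTemplate.exchange(
            "http://localhost:8081/items?ids={ids}",
            HttpMethod.GET,
            null,
            new ParameterizedTypeReference<List<ItemDTO>>() {}, // Preserve the generic type
            Map.of("ids", ids) // Fill the {ids} URI template variable
    );

    // Check whether the call succeeded
    if (!response.getStatusCode().is2xxSuccessful()) {
        return;
    }

    List<ItemDTO> items = response.getBody();
    if (items == null || items.isEmpty()) {
        return;
    }

This code completes the remote query from the cart service to the item service and validates both the status code and empty-result scenarios. With the default error handler, RestTemplate already throws an exception for 4xx and 5xx responses, so the status check acts as a defensive guard for other non-2xx results.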
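The remark about preserving generic type information is easier to see in isolation. The sketch below uses only the JDK and a hypothetical `TypeToken` class to show the same trick that `ParameterizedTypeReference` relies on: an anonymous subclass records its generic superclass, so the element type survives erasure.

```java
import java.lang.reflect.ParameterizedType;
import java.lang.reflect.Type;
import java.util.List;

// Simplified stand-in for Spring's ParameterizedTypeReference (hypothetical name).
abstract class TypeToken<T> {
    private final Type type;

    protected TypeToken() {
        // The anonymous subclass stores "TypeToken<List<String>>" as its generic
        // superclass, and that signature is kept in the class file.
        ParameterizedType superType = (ParameterizedType) getClass().getGenericSuperclass();
        this.type = superType.getActualTypeArguments()[0];
    }

    Type getType() {
        return type;
    }
}

class TypeTokenDemo {
    public static void main(String[] args) {
        // A class literal cannot carry the element type: List<String>.class does not exist.
        Class<?> erased = List.class;
        // The trailing {} creates the anonymous subclass that keeps the type argument.
        Type full = new TypeToken<List<String>>() {}.getType();

        System.out.println(erased.getTypeName()); // java.util.List
        System.out.println(full.getTypeName());   // java.util.List<java.lang.String>
    }
}
```

This is why the call passes `new ParameterizedTypeReference<List<ItemDTO>>() {}` rather than `List.class`: with only the raw class, the JSON converter has no way to know that the list elements should become `ItemDTO` objects.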

A registry upgrades hard-coded addresses into service discovery

Hard-coding localhost:8081 only works for local demos. It is not suitable for production. Once a service instance scales out, migrates, or restarts, a fixed address becomes invalid. That is why microservices need a service registry to solve the problem of service location.

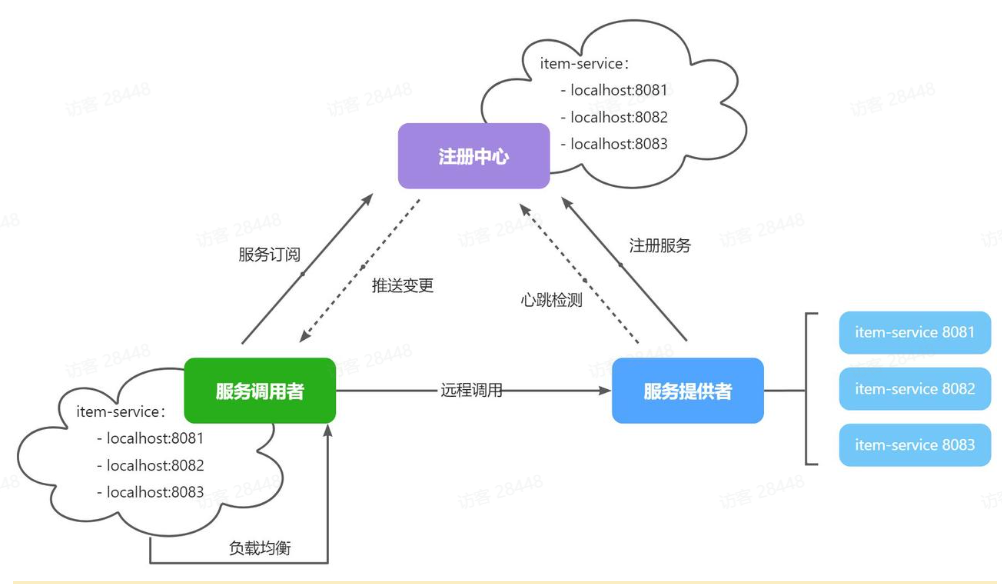

The original article uses Nacos as the registry, which is the standard option in the Spring Cloud Alibaba ecosystem. Its basic mechanism works like this: service providers register instances at startup, consumers subscribe to the service list, and local load balancing selects an available node.

AI Visual Insight: This diagram shows a typical service registration and discovery flow: the provider first registers its instance with the registry, the consumer retrieves the list of available services from the registry, and then a load balancing strategy selects the target node for the request. The diagram also implies two critical mechanisms: instance metadata synchronization, and heartbeat-based health checks with automatic removal of failed instances.
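The register, look up, and select flow can be sketched with a toy in-memory registry. This is an illustration of the flow only, not how Nacos is implemented; `ToyRegistry`, `RoundRobinChooser`, and the instance addresses are all hypothetical.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.atomic.AtomicInteger;

// Toy registry: service name -> list of instance addresses.
class ToyRegistry {
    private final Map<String, List<String>> instances = new ConcurrentHashMap<>();

    // Provider side: called at startup with the instance's own address.
    void register(String serviceName, String address) {
        instances.computeIfAbsent(serviceName, k -> new CopyOnWriteArrayList<>()).add(address);
    }

    // Consumer side: fetch a snapshot of the current instance list.
    List<String> lookup(String serviceName) {
        return List.copyOf(instances.getOrDefault(serviceName, List.of()));
    }
}

// Client-side round-robin selection over whatever the registry returned.
class RoundRobinChooser {
    private final AtomicInteger counter = new AtomicInteger();

    String choose(List<String> candidates) {
        if (candidates.isEmpty()) {
            throw new IllegalStateException("no available instance");
        }
        int index = Math.floorMod(counter.getAndIncrement(), candidates.size());
        return candidates.get(index);
    }
}

class DiscoveryDemo {
    public static void main(String[] args) {
        ToyRegistry registry = new ToyRegistry();
        registry.register("item-service", "192.168.150.101:8081");
        registry.register("item-service", "192.168.150.101:8083");

        RoundRobinChooser chooser = new RoundRobinChooser();
        for (int i = 0; i < 3; i++) {
            // The consumer only knows the logical name, never a fixed address.
            String address = chooser.choose(registry.lookup("item-service"));
            System.out.println("http://" + address + "/items");
        }
    }
}
```

Running `DiscoveryDemo` alternates between the two registered addresses, which is the behavior a consumer gets once the hard-coded `localhost:8081` is replaced by a lookup on the service name.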

Nacos maintains instance health through heartbeats

When a service provider exits unexpectedly, the registry does not keep failed nodes forever. Nacos detects instance health through heartbeats, removes timed-out instances automatically, and pushes the latest changes to consumers that subscribe to the service.

This dramatically reduces the cost of manually maintaining address lists and explains why service discovery is fundamentally better than static configuration.
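Heartbeat-based removal can be illustrated in a few lines. The sketch below is a toy model under stated assumptions: the `HeartbeatTable` class, its method names, and the 15-second timeout are illustrative and are not Nacos settings or APIs. Timestamps are passed in explicitly so the behavior is deterministic.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Toy heartbeat table: instance address -> time of its last heartbeat.
class HeartbeatTable {
    private final Map<String, Long> lastBeatMillis = new ConcurrentHashMap<>();
    private final long timeoutMillis;

    HeartbeatTable(long timeoutMillis) {
        this.timeoutMillis = timeoutMillis;
    }

    // Called by a provider at registration and then periodically.
    void beat(String address, long nowMillis) {
        lastBeatMillis.put(address, nowMillis);
    }

    // Called by the registry on a timer: drop instances whose last heartbeat is too old.
    void evictExpired(long nowMillis) {
        lastBeatMillis.entrySet().removeIf(e -> nowMillis - e.getValue() > timeoutMillis);
    }

    // What a subscribed consumer would see after the latest eviction pass.
    List<String> aliveInstances() {
        return lastBeatMillis.keySet().stream().sorted().toList();
    }
}

class HeartbeatDemo {
    public static void main(String[] args) {
        HeartbeatTable table = new HeartbeatTable(15_000);
        table.beat("item-1", 0);
        table.beat("item-2", 0);
        table.beat("item-1", 10_000); // item-2 has stopped sending heartbeats
        table.evictExpired(20_000);
        System.out.println(table.aliveInstances()); // [item-1]
    }
}
```

The consumer never has to be told that `item-2` crashed; it disappears from the list once its heartbeat is older than the timeout.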

    docker run -d \
      --name nacos \
      --env-file ./nacos/custom.env \
      -p 8848:8848 \
      -p 9848:9848 \
      -p 9849:9849 \
      --restart=always \
      nacos/nacos-server:v2.1.0-slim

This command starts the Nacos registry quickly with Docker.

Services gain real elasticity only after integrating with Nacos

To register a service with Nacos, you must first add the service discovery dependency. The original dependency is spring-cloud-starter-alibaba-nacos-discovery, which serves as the entry point for service registration and discovery.

    <!-- Nacos service registration and discovery -->
    <dependency>
        <groupId>com.alibaba.cloud</groupId>
        <artifactId>spring-cloud-starter-alibaba-nacos-discovery</artifactId>
    </dependency>

This dependency declaration enables a Spring Boot application to integrate with Nacos.

Next, declare the service name and Nacos address in the configuration file. The service name is the logical identifier used for registration and invocation, and it should not change frequently across environments.

    spring:
      application:
        name: item-service # Define the service name
      cloud:
        nacos:
          server-addr: 192.168.150.101:8848 # Specify the Nacos address

This configuration defines the registration name and registry address, which are the foundation for service discovery to work correctly.

A progressive migration fits engineering reality better than a full rewrite

The article suggests an important practical approach: split the modules that are easiest to isolate first, and then gradually move invocation relationships to network boundaries. This is more reliable than a big-bang rewrite because it lets the team continuously validate business correctness during migration.

A safer sequence usually looks like this: modularize the monolith first, then split non-core services, then introduce a service registry, configuration center, and gateway, and finally handle highly consistent flows such as orders and payments.
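One way to validate correctness while a call moves to a network boundary is a shadow comparison: keep returning the in-process result, run the new remote path alongside it, and count mismatches. The source does not prescribe this technique; the sketch below is one possible approach, and `ShadowCall` is a hypothetical name.

```java
import java.util.Objects;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// Hypothetical migration seam: callers still receive the legacy result while the
// candidate path is exercised and compared in the background of each call.
class ShadowCall<I, O> {
    private final Function<I, O> legacy;    // existing in-process call
    private final Function<I, O> candidate; // new call through the network boundary
    private final AtomicInteger mismatches = new AtomicInteger();

    ShadowCall(Function<I, O> legacy, Function<I, O> candidate) {
        this.legacy = legacy;
        this.candidate = candidate;
    }

    O call(I input) {
        O trusted = legacy.apply(input);
        try {
            O shadow = candidate.apply(input);
            if (!Objects.equals(trusted, shadow)) {
                mismatches.incrementAndGet();
            }
        } catch (RuntimeException e) {
            // A failing candidate must never break the caller during migration.
            mismatches.incrementAndGet();
        }
        return trusted;
    }

    int mismatchCount() {
        return mismatches.get();
    }
}
```

Once the mismatch count stays at zero under real traffic, the team can switch callers to the remote path and retire the in-process one. Note that this sketch doubles the work per call, so it suits read-only queries better than operations with side effects.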

FAQ provides structured answers to common migration questions

1. When is the best time to split a monolith into microservices?

When the system already shows strong module entanglement, high release risk, frequent team collaboration conflicts, and difficulty with partial scaling, the monolith boundaries have effectively failed. At that point, the benefits of decomposition usually outweigh the cost.

2. Why can’t a microservice directly reuse a Java object from another module?

Because microservices run in separate processes and often on separate machines, in-memory objects cannot be shared directly across the network. The correct approach is to communicate through explicit contracts such as REST, gRPC, or messaging.

3. What core problem does Nacos solve after it is introduced?

It solves the problem of dynamically changing service instance addresses. It provides registration, discovery, health checks, and instance removal, so consumers no longer depend on hard-coded addresses and can support elastic scaling.

Core summary: This article reconstructs the core method for moving from a monolith to microservices. It focuses on decomposition timing, the principles of low coupling and high cohesion, the implementation of remote calls from the cart service to the item service, and the use of Nacos for service registration and discovery, helping teams complete architectural evolution progressively.