Karpathy argues that AI programming has evolved from “code completion” into “task agency”: developers define goals and review outcomes, while agents plan, execute, and repair. This shift addresses the core pain points of long-running task automation and end-to-end engineering execution. Keywords: Coding AGI, Agentic Engineering, Software 3.0.

Technical Snapshot

| Parameter | Details |
|---|---|
| Domain | AI programming paradigms, agent engineering |
| Core Languages | English prompts, Python, Shell |
| Key Protocols / Interfaces | MCP, SSH, systemd, Web UI |
| Article Signal | Originally published on CSDN, with about 7 likes and 5 saves |
| Star Count | Not provided in the source; not an open-source repository entry |
| Core Dependencies | LLMs, coding agents, TDD, browser/database toolchains |

Coding AGI has moved from concept to workflow reality

Karpathy’s core judgment is straightforward: programming is being restructured. In the past, developers mostly wrote code line by line inside an editor. Now, a more efficient model is to define the goal, constrain the context, and let the agent complete most of the implementation.

The most important signal in the original article is not a slogan, but a shift in ratios: his workflow flipped from “80% handwritten + 20% AI” to “80% AI + 20% human review.” That means AI is no longer just a code completer. It is beginning to take on the primary execution role.

AI Visual Insight: This image serves as the article’s primary visual and highlights Coding AGI as a central topic. It communicates a paradigm shift from human-only programming to human-AI collaborative execution, rather than a simple upgrade of a single tool.

The qualitative leap comes from closed-loop execution on long-running tasks

Karpathy specifically points to December 2025 as the practical turning point. Before that, coding agents were best suited for demos, scripts, and minor fixes. After that, models appeared to make a leap in long-horizon consistency, task resilience, and tool-use capability.

A representative example is that an agent can independently log into a remote machine, configure keys, install an inference service, deploy the frontend and backend, register a systemd service, and produce a Markdown report. The key is not how much code it writes, but whether it can continuously resolve the friction points of a real environment.

```python
steps = [
    "Configure SSH keys",           # Establish the prerequisite for remote execution
    "Install vLLM",                 # Deploy the inference service
    "Pull the vision model",        # Fetch the target model assets
    "Launch the Web UI",            # Expose a usable interface
    "Write the deployment report",  # Output a reviewable result
]

for step in steps:
    print(f"Agent executing: {step}")  # Simulate an autonomous execution flow with a task sequence
```

This snippet shows, in minimal form, the agent’s continuous goal-oriented execution chain.

Software 3.0 redefines what programming means

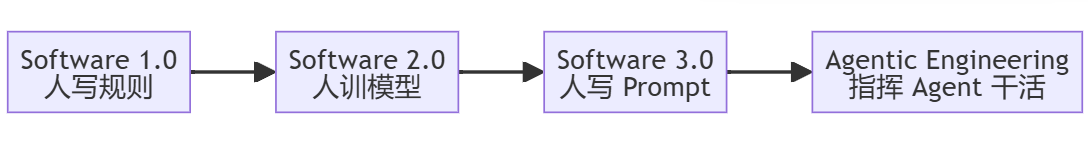

Karpathy uses Software 1.0, 2.0, and 3.0 to explain this shift. Software 1.0 is when humans write explicit rules. Software 2.0 is when humans train models, and the weights become the program. Software 3.0 is when humans write prompts, and the LLM acts as the interpreter.

The disruptive part of this definition is that the smallest unit of a program is no longer limited to functions and classes. It can also be task descriptions, constraints, and context fragments. The act of development moves upward from “implementing steps” to “defining intent.”

AI Visual Insight: This diagram illustrates the layered evolution across Software 1.0, 2.0, and 3.0. It emphasizes three different program carriers—rule-based code, model weights, and natural-language context—and shows how the programming target is shifting from source code to context systems.
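The three generations can be contrasted on one toy task. The following is a minimal sketch, not Karpathy's own example: the sentiment task, the hand-set `WEIGHTS`, the `PROMPT` text, and the `fake_llm` stand-in are all assumptions made for illustration, and a real Software 3.0 program would call an actual model instead of the stub.

```python
# Software 1.0: a human writes the rule explicitly.
def classify_v1(text: str) -> str:
    return "positive" if "great" in text.lower() else "negative"


# Software 2.0: the behavior lives in weights.
# These values are hand-set for illustration; in practice they would be learned.
WEIGHTS = {"great": 1.5, "love": 1.0, "slow": -1.0, "broken": -2.0}


def classify_v2(text: str) -> str:
    score = sum(WEIGHTS.get(word, 0.0) for word in text.lower().split())
    return "positive" if score > 0 else "negative"


# Software 3.0: the program is a prompt, and an LLM interprets it.
PROMPT = "Classify the sentiment of the review as positive or negative.\nReview: {review}"


def classify_v3(text: str, llm) -> str:
    # `llm` is any callable that maps a prompt string to a reply string.
    return llm(PROMPT.format(review=text)).strip().lower()


def fake_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real model call, so the sketch runs offline.
    review = prompt.split("Review:", 1)[1]
    return classify_v2(review).capitalize()


print(classify_v1("This is great"))
print(classify_v2("I love it but it is slow and broken"))
print(classify_v3("great tool", fake_llm))
```

In the third version, changing the behavior means editing `PROMPT`, not the Python around it, which is the sense in which the prompt becomes the program.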

Software 3.0 makes English a high-level programming interface

“The hottest new programming language is English” is not just rhetoric. In practice, it means natural language is becoming the orchestration layer, while Python, Shell, and SQL move down to the execution layer. Developers no longer specify every step. Instead, they define success criteria, boundary conditions, and acceptance methods.

```python
def build_prompt(goal, constraints, tests):
    return {
        "goal": goal,                # Describe the final outcome
        "constraints": constraints,  # Limit technical boundaries and resources
        "tests": tests,              # Define success criteria through tests
    }

spec = build_prompt(
    goal="Build a deployable video analytics service",
    constraints=["Use the existing GPU", "Preserve SSH-based remote maintenance capability"],
    tests=["The API is reachable", "The service self-recovers after restart", "Deployment documentation is generated"],
)
```

This snippet shows that, in engineering practice, a prompt is closer to a structured specification than to a one-line chat command.
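A structured specification like the one above still has to reach the agent as text. The sketch below shows one possible rendering; the `render_prompt` helper and its section labels are hypothetical, chosen only to keep goal, constraints, and acceptance criteria visibly separate.

```python
def render_prompt(spec: dict) -> str:
    """Turn a goal/constraints/tests spec into a plain-text prompt."""
    lines = [f"Goal: {spec['goal']}", "", "Constraints:"]
    lines += [f"- {item}" for item in spec["constraints"]]
    lines += ["", "Done when all of the following hold:"]
    lines += [f"{i}. {item}" for i, item in enumerate(spec["tests"], start=1)]
    return "\n".join(lines)


# Same fields as the article's example spec, repeated so the sketch is self-contained.
example_spec = {
    "goal": "Build a deployable video analytics service",
    "constraints": ["Use the existing GPU", "Preserve SSH-based remote maintenance capability"],
    "tests": [
        "The API is reachable",
        "The service self-recovers after restart",
        "Deployment documentation is generated",
    ],
}

prompt_text = render_prompt(example_spec)
print(prompt_text)
```

Numbering the acceptance criteria gives the agent and the reviewer a shared checklist to refer back to.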

Agentic Engineering is the real form of Coding AGI

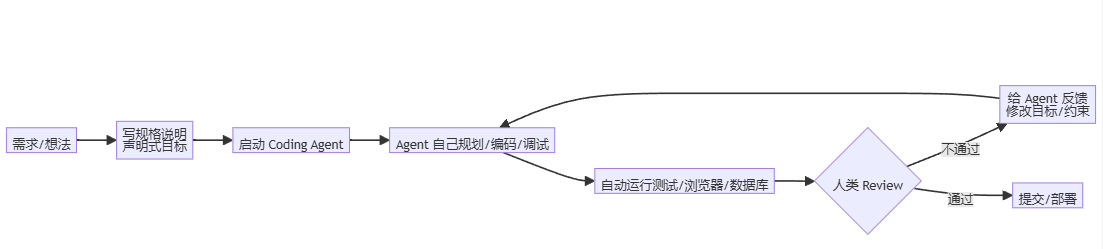

Karpathy explicitly presents Agentic Engineering as the step up from Vibe Coding. The difference is not whether you use AI, but whether AI is integrated into an engineering system that is manageable, verifiable, and optimizable through feedback loops.

Vibe Coding looks more like trial-and-error creation: you write one instruction, the model returns one block, and you try running it. Agentic Engineering requires the AI to plan, execute, and debug autonomously, while humans focus on architecture decisions, quality control, and exception handling.

AI Visual Insight: This image shows the workflow upgrade from human-led coding to an agent participating in planning, implementation, and validation. It emphasizes the developer’s role shift from executor to supervisor and system designer.

Closed-loop trial and error matters more than “knowing the answer”

Karpathy argues that the practical feeling of AGI often comes not from omniscience, but from persistent iteration. Humans may give up after the third failure. An agent can keep trying 30 times in a row and continuously adjust its path based on logs, error messages, and browser feedback.

As a result, the most effective prompts are not imperative step lists, but declarative success definitions. You tell the agent the destination and the constraints, and it uses its toolchain to search for a path on its own.

```python
def agent_loop(goal, observe, act, done):
    while not done():
        state = observe()          # Read logs, pages, and test results
        action = act(goal, state)  # Decide based on the goal and current state
        print("Action:", action)   # Output the next remediation step

# Core idea: goal-driven execution + observational feedback + continuous correction
```

This snippet captures the minimal closed loop of Coding AGI: goal, execution, observation, and correction.
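To see the loop actually terminate, it helps to give it an attempt budget and an environment that changes in response to actions. The sketch below is one way to do that under stated assumptions: `run_agent`, the `apply` callback, and the toy list of pending errors are all hypothetical, and the default budget of 30 simply mirrors the "30 times in a row" figure mentioned earlier.

```python
def run_agent(goal, observe, act, apply, done, max_attempts=30):
    """Return the number of actions taken, or None if the budget ran out."""
    for attempt in range(1, max_attempts + 1):
        if done():
            return attempt - 1
        state = observe()          # Read the current feedback
        action = act(goal, state)  # Choose a fix for what was observed
        apply(action)              # Change the environment
    return max_attempts if done() else None


# Toy environment: a service that stays unhealthy until three errors are fixed.
pending = ["missing dependency", "port already in use", "wrong config path"]
history = []


def observe():
    return pending[0] if pending else "ok"


def act(goal, state):
    return f"fix: {state}"


def apply(action):
    history.append(action)
    pending.pop(0)


def done():
    return not pending


attempts = run_agent("service is healthy", observe, act, apply, done)
print(attempts, history)
```

The budget matters in practice: persistence is the agent's advantage, but an unbounded loop on an unsolvable goal only burns time and tokens.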

TDD and MCP bring agent capabilities under engineering control

Karpathy’s practical recommendations are highly grounded. First, write tests before asking AI to write the implementation. Tests turn “close enough” into “must pass.” Second, connect browser, database, terminal, and other capabilities through MCP so the agent can observe real-world feedback.
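The test-first recommendation can be sketched as an acceptance gate. Everything here is illustrative: the `slugify` task, the `check_slugify` test, and the two drafts stand in for a human-written test and successive agent attempts, and a real project would use its own test runner.

```python
def check_slugify(slugify):
    """Written by the human first: the bar every implementation must clear."""
    assert slugify("Hello World") == "hello-world"
    assert slugify("  Trim  me ") == "trim-me"
    assert slugify("Already-slugged") == "already-slugged"


def passes(test, candidate) -> bool:
    """Run the test against a candidate and report pass/fail."""
    try:
        test(candidate)
        return True
    except AssertionError:
        return False


def draft_one(text):
    # First attempt: looks plausible, but mishandles extra whitespace.
    return text.lower().replace(" ", "-")


def draft_two(text):
    # Second attempt: split() collapses runs of whitespace and trims the ends.
    return "-".join(text.lower().split())


candidates = [draft_one, draft_two]
accepted = next((c for c in candidates if passes(check_slugify, c)), None)
print(accepted.__name__ if accepted else "no candidate passed")
```

The first draft is "close enough" by inspection and still gets rejected, which is exactly the difference the tests are there to enforce.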

When an agent can modify code, run tests, access pages, and inspect database state, it stops being a static text generator. It becomes an engineering execution unit with environmental interaction.
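The idea of exposing environment capabilities as callable tools can be illustrated without any particular protocol. The sketch below is not the MCP SDK or its wire format; `TOOLS`, `call_tool`, and the two tools are hypothetical names, and the in-memory SQLite database and log list stand in for real systems.

```python
import sqlite3

# Stand-in environment: an in-memory database and a few log lines.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
db.execute("INSERT INTO users (name) VALUES ('ada')")
log_lines = ["INFO service started", "ERROR connection refused on port 8000"]


def query_database(sql: str):
    return db.execute(sql).fetchall()


def read_errors(_: str = ""):
    return [line for line in log_lines if line.startswith("ERROR")]


# The agent may only call what is registered here.
TOOLS = {"query_database": query_database, "read_errors": read_errors}


def call_tool(name: str, argument: str = ""):
    """Dispatch a tool call and always return a structured observation."""
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": TOOLS[name](argument)}
    except Exception as exc:
        return {"ok": False, "error": str(exc)}


print(call_tool("query_database", "SELECT COUNT(*) FROM users"))
print(call_tool("read_errors"))
print(call_tool("delete_everything"))
```

Returning failures as data instead of raising lets the agent treat an error as one more observation to react to, and the explicit registry keeps its reach limited to what was deliberately granted.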

The value of programmers is shifting toward higher-level capabilities

This does not mean programmers disappear. It means the value of low-level handwritten coding decreases, while the value of high-level design capabilities increases. What will become more scarce is the ability to define system boundaries, design tests, evaluate architectures, and review risk.

Karpathy also warns about the downside: long-term dependence on AI can erode manual coding skills. But that is not a reason to stop using AI. It is a reason to preserve understanding. You can outsource generation, but you should not outsource judgment.

Pitfall avoidance determines whether agents can enter production

AI-assisted programming has three common risk categories: incorrect assumptions that propagate through the workflow, over-abstraction that bloats the codebase, and blind trust in automation. The solution is not to use agents less, but to constrain them more tightly.

Recommended practices include asking the agent to output a plan before granting execution, limiting module size and abstraction depth, reviewing critical code inside the IDE, and binding all important tasks to tests and rollback strategies.
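Two of these practices, plan approval before execution and rollback on failure, can be combined in a small gate. This is a sketch under assumptions: `run_plan`, the `(name, do, undo)` step format, and the approval rule are hypothetical, and a production setup would rely on real deployment and migration tooling.

```python
def run_plan(plan, approve):
    """Execute (name, do, undo) steps only after approval; undo completed steps on failure."""
    names = [name for name, _, _ in plan]
    if not approve(names):
        return "rejected"
    completed = []
    for name, do, undo in plan:
        try:
            do()
            completed.append(undo)
        except Exception:
            # Undo in reverse order so later steps are reverted first.
            for rollback in reversed(completed):
                rollback()
            return f"rolled back at: {name}"
    return "done"


state = []


def fail():
    raise RuntimeError("health check failed")


plan = [
    ("apply migration", lambda: state.append("migration"), lambda: state.remove("migration")),
    ("deploy service", fail, lambda: None),
]

# Example approval rule: accept only short plans. A human reviewer could sit here instead.
result = run_plan(plan, approve=lambda names: len(names) <= 5)
print(result, state)
```

Because the agent must hand over the list of step names before anything runs, a wrong assumption can be caught at the plan stage, when rejecting it costs nothing.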

FAQ

1. Does Coding AGI mean programmers will be replaced?

No. What gets compressed is low-value repetitive coding. What gets amplified is architecture design, requirement clarification, quality review, and system-level judgment.

2. Why is TDD especially important for AI programming?

Because tests are objective acceptance mechanisms. They turn natural-language goals into machine-verifiable standards and can reliably drive an agent to keep fixing issues until it meets the bar.

3. How can developers evolve from Vibe Coding to Agentic Engineering?

First, change how you provide input: give fewer step-by-step instructions and more goals, constraints, and tests. Second, complete the tool loop by connecting terminals, browsers, databases, and MCP. Finally, strengthen the review process.

Core Summary

Based on Karpathy’s latest perspective, this article systematically explains why Coding AGI has moved from a demo capability to a productivity tool. It uses Software 1.0/2.0/3.0, closed-loop iteration, declarative instructions, TDD, and MCP to explain the next generation of AI programming paradigms.