[AI Readability Summary] YOLO26 is a next-generation object detection framework designed for edge deployment. FAAFusion resolves cross-scale directional conflicts in the Neck through frequency-domain angle alignment, delivering consistent gains on NEU-DET defect detection. Its core value lies in balancing accuracy, lightweight design, and deployment efficiency. Keywords: YOLO26, FAAFusion, NEU-DET.

Technical Specifications Snapshot

| Parameter | Description |
|---|---|
| Primary Language | Python |
| Framework/Library | Ultralytics YOLO |
| Task Type | Industrial surface defect detection |
| Dataset | NEU-DET |
| Core Protocols/Formats | PyTorch, YAML, exportable to ONNX/TensorRT |
| Baseline Model | YOLO26n |
| Improved Model | YOLO26 + FAAFusion |
| Parameters | 2.38M → 2.40M |
| Compute | 5.2 GFLOPs |
| GitHub Stars | Not provided in the original article |
| Core Dependencies | torch, ultralytics, opencv-python |

YOLO26 has been systematically redesigned for edge deployment

YOLO26 continues the YOLO family’s real-time detection trajectory, but its focus is no longer limited to stacking modules. Instead, it rewrites the architecture around deployment efficiency, training stability, and multi-task extensibility. For industrial defect detection, where targets are often small and highly texture-sensitive, this redesign is especially practical.

Its core changes include removing DFL (Distribution Focal Loss), enabling end-to-end NMS-free inference, introducing ProgLoss and STAL, and adopting the MuSGD optimizer. Rather than a single-point improvement, this is an optimization of the entire training-to-deployment pipeline.

AI Visual Insight: The image presents the overall research entry point and paper information for YOLO26, highlighting its positioning as a next-generation YOLO framework. It emphasizes real-time detection on edge devices, unified multi-task support, and the technical roadmap for later structural module analysis.

The most important YOLO26 upgrades are concentrated in the detection head and training mechanisms

Removing DFL directly simplifies the bounding box regression path, which reduces friction when exporting the model to ONNX, TensorRT, and CoreML. For engineering teams, this means lower deployment costs rather than gains that exist only in benchmark papers.
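To make the DFL point concrete, here is a minimal NumPy sketch of the two decoding styles for one anchor. A DFL-style head predicts logits over `reg_max` discrete bins per box side and decodes each side as the softmax expectation; a DFL-free head outputs the four side distances directly. The function names are hypothetical, and the bin count of 16 mirrors what earlier Ultralytics detection heads used; this is an illustration, not YOLO26 source code.

```python
import numpy as np


def dfl_decode(logits: np.ndarray) -> np.ndarray:
    """DFL-style decoding: logits of shape (..., 4, reg_max) -> (..., 4) distances.

    Each side distance is the expectation of a softmax over bins 0..reg_max-1.
    """
    shifted = logits - logits.max(axis=-1, keepdims=True)  # numerically stable softmax
    probs = np.exp(shifted)
    probs /= probs.sum(axis=-1, keepdims=True)
    bins = np.arange(logits.shape[-1], dtype=probs.dtype)
    return (probs * bins).sum(axis=-1)


def direct_decode(regression: np.ndarray) -> np.ndarray:
    """DFL-free decoding: the head already emits the four side distances."""
    return regression


REG_MAX = 16  # illustrative bin count
logits = np.full((4, REG_MAX), -10.0)
logits[np.arange(4), [2, 5, 7, 11]] = 10.0  # sharply peaked distributions
print(dfl_decode(logits).round(3))  # close to [2, 5, 7, 11]
print(direct_decode(np.array([2.0, 5.0, 7.0, 11.0])))
```

The softmax-and-sum over the extra `reg_max` axis is the step that disappears when DFL is removed, which is one reason the exported graph becomes simpler.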

NMS-free inference further reduces post-processing complexity. On CPUs, Jetson devices, or other low-power hardware, post-processing is often a hidden bottleneck. YOLO26 moves this burden into end-to-end learning, which makes low-latency deployment easier to achieve.
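For context, the post-processing that NMS-free inference removes is usually a greedy loop like the one below. This is a generic NumPy sketch of standard greedy NMS, not YOLO26 or Ultralytics code; the 0.5 IoU threshold is an illustrative default.

```python
import numpy as np


def iou(box: np.ndarray, boxes: np.ndarray) -> np.ndarray:
    """IoU between one box and N boxes, all in (x1, y1, x2, y2) format."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)


def greedy_nms(boxes: np.ndarray, scores: np.ndarray, iou_thr: float = 0.5) -> list:
    """Keep the highest-scoring box, drop boxes overlapping it, and repeat."""
    order = np.argsort(scores)[::-1]
    keep = []
    while order.size > 0:
        best = order[0]
        keep.append(int(best))
        rest = order[1:]
        # Data-dependent, sequential loop: this is what an NMS-free head avoids
        order = rest[iou(boxes[best], boxes[rest]) < iou_thr]
    return keep


boxes = np.array([[0, 0, 10, 10], [1, 1, 11, 11], [50, 50, 60, 60]], dtype=float)
scores = np.array([0.9, 0.8, 0.7])
print(greedy_nms(boxes, scores))  # [0, 2]
```

Because the loop length depends on how many boxes survive, it is awkward to fuse into an exported graph and runs on the host after the network; an NMS-free head instead emits the final box set directly.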

```python
from ultralytics import YOLO

# Load the YOLO26 configuration
model = YOLO('ultralytics/cfg/models/26/yolo26n.yaml')

# Core idea: train the new architecture directly, without extra post-processing tricks
model.train(
    data='data/NEU-DET.yaml',  # Defect detection dataset configuration
    imgsz=640,                 # Input resolution
    epochs=300,                # Number of training epochs
    batch=16,                  # Batch size
    device='0',                # Train on GPU 0
)
```

This snippet shows the shortest path to launching YOLO26 training through the Ultralytics interface.

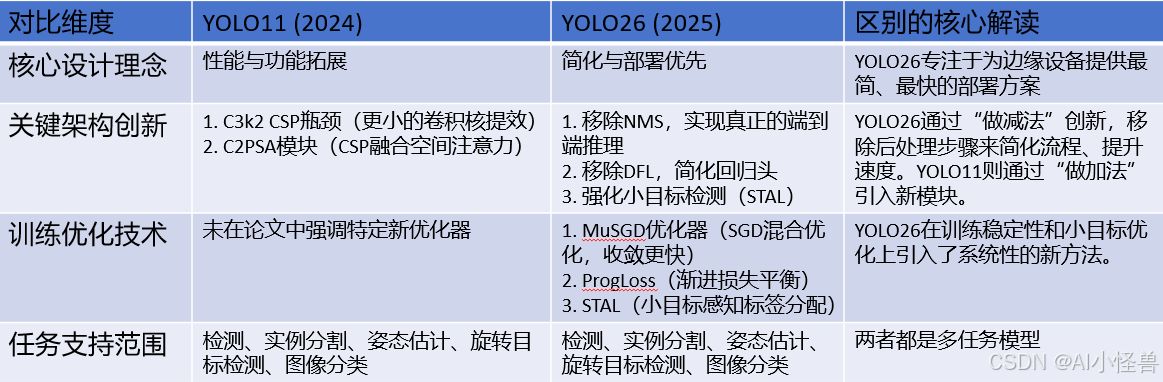

The main difference between YOLO26 and YOLO11 lies in module controllability

In the SPPF component, YOLO26 no longer hardcodes the number of pooling operations. Instead, it controls pooling depth through parameters. The value of this change is that researchers can tune the receptive field for specific scenarios without modifying low-level source code.
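A minimal NumPy sketch of why a pooling-count parameter matters, assuming the usual SPPF layout: one stride-1 k×k max-pool applied repeatedly, with the input and every intermediate output concatenated along channels. The argument `n` stands in for the parameter the article describes; the actual argument name in the Ultralytics source may differ, and the surrounding 1×1 convolutions are omitted.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def max_pool_same(x: np.ndarray, k: int = 5) -> np.ndarray:
    """Stride-1 max pooling with 'same' padding on a float (C, H, W) array."""
    p = k // 2
    padded = np.pad(x, ((0, 0), (p, p), (p, p)), constant_values=-np.inf)
    windows = sliding_window_view(padded, (k, k), axis=(1, 2))  # (C, H, W, k, k)
    return windows.max(axis=(-2, -1))


def sppf(x: np.ndarray, n: int = 3, k: int = 5) -> np.ndarray:
    """SPPF-style block: chain n poolings and concatenate all intermediate maps."""
    outs = [x]
    for _ in range(n):
        outs.append(max_pool_same(outs[-1], k))
    return np.concatenate(outs, axis=0)


def effective_kernel(n: int, k: int = 5) -> int:
    """Receptive field of n chained stride-1 k x k max-pools."""
    return 1 + n * (k - 1)


x = np.random.default_rng(0).random((8, 20, 20))
for n in (1, 2, 3, 4):
    print(n, sppf(x, n).shape, effective_kernel(n))
```

Each extra pool widens the receptive field by k - 1 pixels and adds one more channel group, so tuning `n` trades context against channel width without touching the module code.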

In the C3k2 component, YOLO26 introduces optional attention-enhanced logic. When attn=True, the branch prioritizes the Bottleneck + PSABlock path. Otherwise, it falls back to the traditional C3k or Bottleneck branch. This turns the module from a fixed topology into a composable unit.

AI Visual Insight: The image compares the structural differences between YOLO11 and YOLO26, with emphasis on module replacements and connection changes in the backbone and feature fusion paths. It is useful for observing the evolution of configurable SPPF, C3k2, and detection head design.

The branch priority in C3k2 makes attention enhancement easier to plug in

This change matters for industrial vision. Defect textures are often fragmented and vary greatly in scale, so simply stacking convolutions is frequently insufficient. With attention-priority branching, YOLO26 strengthens the representation of critical regions without substantially increasing compute.

```python
def build_c3k2_block(attn=False, c3k=False):
    if attn:
        return ['Bottleneck', 'PSABlock']  # Prioritize attention enhancement
    elif c3k:
        return ['C3k']  # Fall back to the C3k structure
    else:
        return ['Bottleneck']  # The most basic bottleneck structure
```

This pseudocode summarizes the core branch-selection logic of C3k2 in YOLO26.

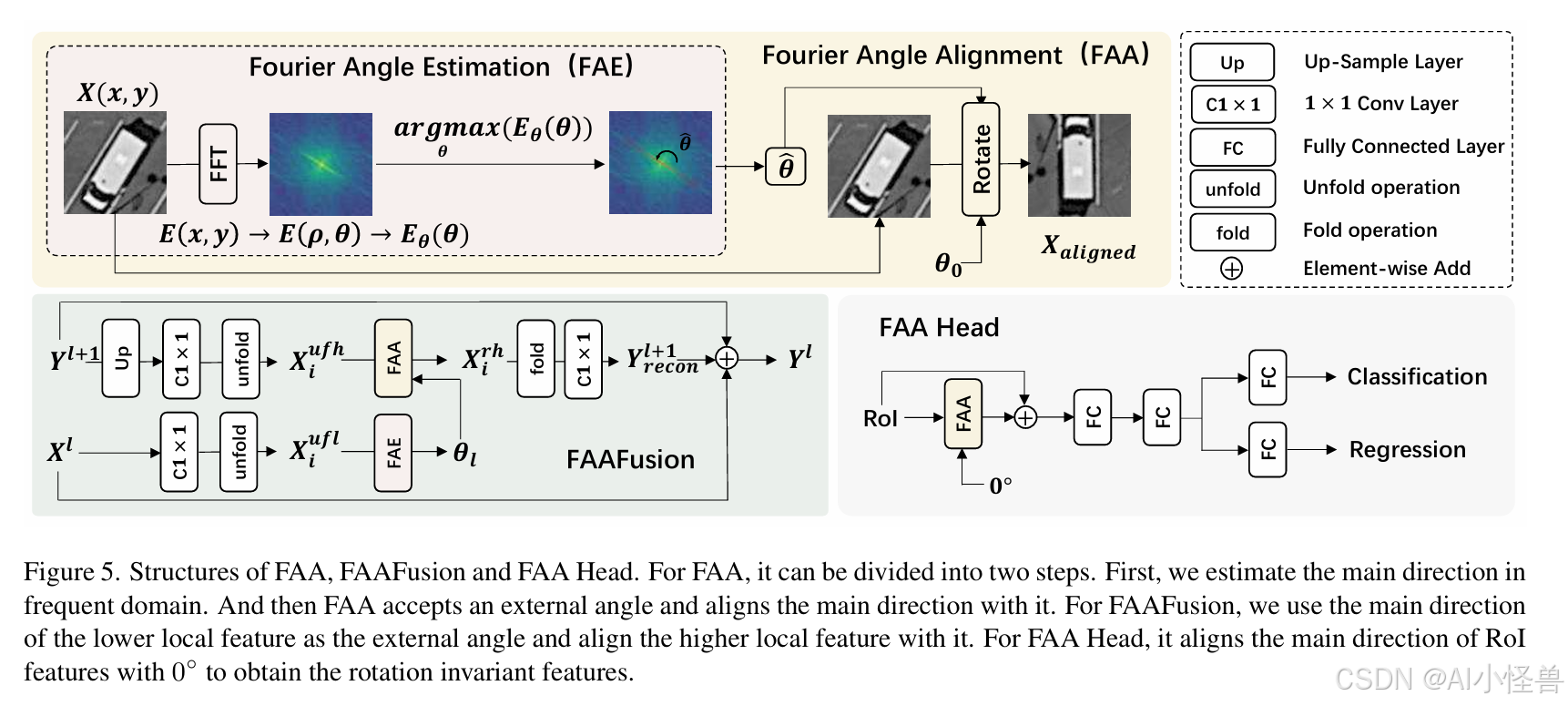

FAAFusion fixes directional conflicts in the Neck through frequency-domain alignment

The original YOLO Neck relies on upsampling and concatenation to fuse multi-scale features. However, high-level features carry stronger semantics but blur directional information, while low-level features preserve clearer edges and more explicit orientation. Directly concatenating them mixes inconsistent directional cues, which can harm the localization of rotated targets and fine-grained textures.

FAAFusion addresses this by first estimating the dominant orientation of low-level features in the frequency domain, then rotating the high-level features to align with that orientation, and only then performing fusion. As a result, multi-scale features become directionally consistent before entering the detection head.
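The article does not give FAAFusion's exact angle estimator. As one plausible reading of "estimating the dominant orientation in the frequency domain", the sketch below computes power-weighted second moments of a feature map's 2-D Fourier spectrum (a spectral structure tensor) and returns its principal angle. The function name and this particular estimator are assumptions for illustration, not the published method.

```python
import numpy as np


def estimate_dominant_angle(feat: np.ndarray) -> float:
    """Dominant spectral orientation of a (C, H, W) map, in degrees within [-90, 90].

    Measured from the +x (column) axis toward +y (row index, pointing down).
    Stripes and edges run perpendicular to the returned direction.
    """
    _, h, w = feat.shape
    centered = feat - feat.mean(axis=(1, 2), keepdims=True)  # drop the DC term
    power = (np.abs(np.fft.fft2(centered, axes=(1, 2))) ** 2).sum(axis=0)
    fy = np.fft.fftfreq(h)[:, None]  # vertical frequency of each spectrum row
    fx = np.fft.fftfreq(w)[None, :]  # horizontal frequency of each spectrum column
    # Power-weighted second moments of the spectrum
    mxx = (power * fx * fx).sum()
    myy = (power * fy * fy).sum()
    mxy = (power * fx * fy).sum()
    return float(0.5 * np.degrees(np.arctan2(2.0 * mxy, mxx - myy)))


n = 64
yy, xx = np.mgrid[0:n, 0:n]
kx, ky = 6, 3  # integer wave numbers keep the spectrum free of leakage
stripes = np.cos(2 * np.pi * (kx * xx + ky * yy) / n)[None].repeat(4, axis=0)
print(round(estimate_dominant_angle(stripes), 2))  # about 26.57, i.e. atan2(3, 6)
```

Summing power over channels gives one angle per feature map, which is the quantity a later step could use to rotate the other scale into alignment.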

AI Visual Insight: The image provides an overview of the FAA method. Its core idea is to extract dominant orientation information in the Fourier domain and rotate features to a unified reference direction, serving both Neck fusion and Head prediction while demonstrating an engineering use of rotation equivariance.

AI Visual Insight: This image breaks down the FAA workflow, typically including spectrum transformation, angle estimation, feature rotation alignment, and subsequent fusion steps. It helps clarify where FAAFusion is inserted into the detector and how data flows through it.

FAAFusion is well suited to elongated textures and orientation-sensitive targets in defect detection

Defects in NEU-DET, such as scratches, crazing (networks of fine cracks), and patches, are not always regular in shape. Many classes are highly sensitive to edge direction and local texture. The value of FAAFusion is not just "rotation-aware detection," but improved semantic consistency after cross-layer fusion.

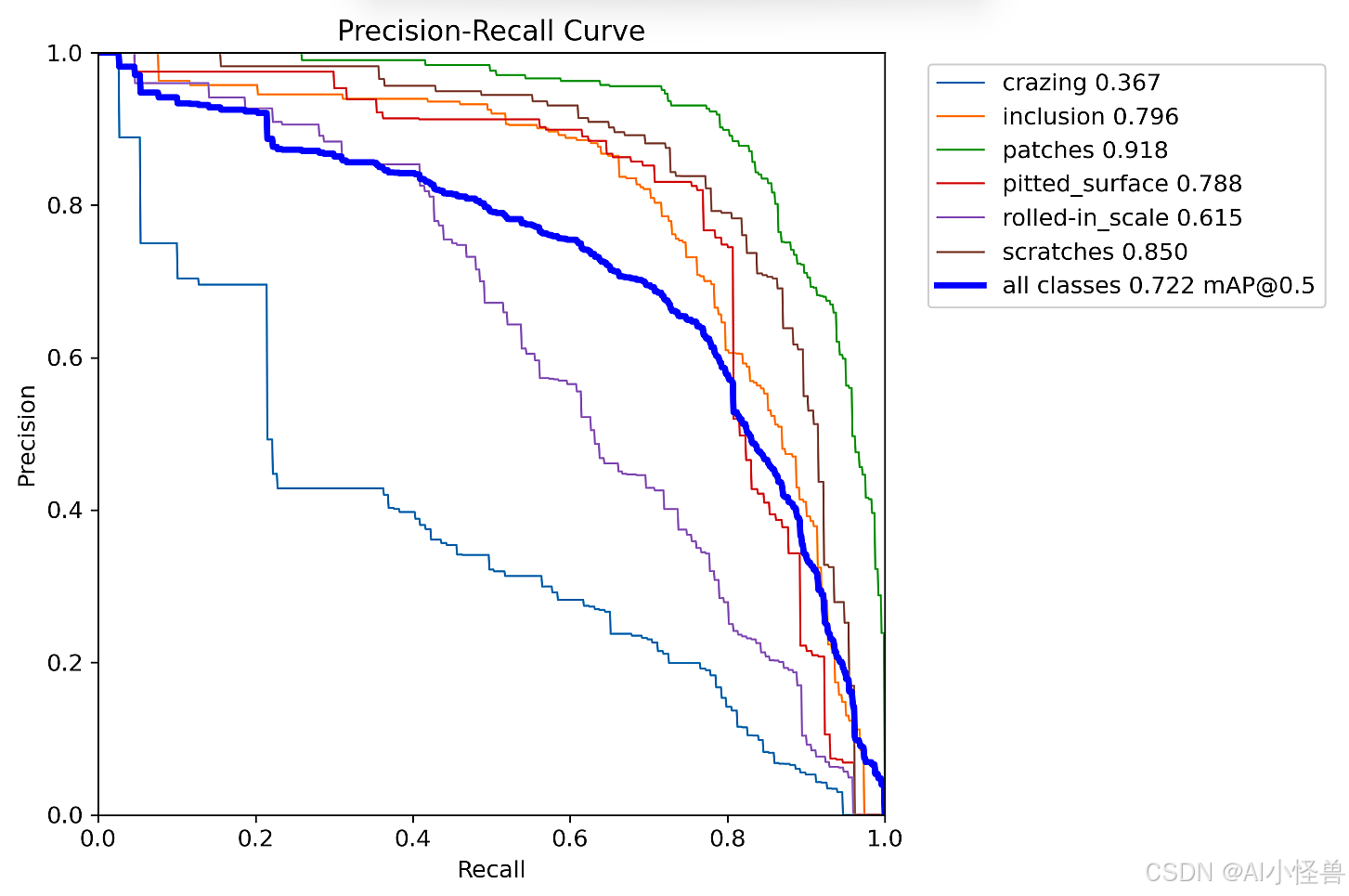

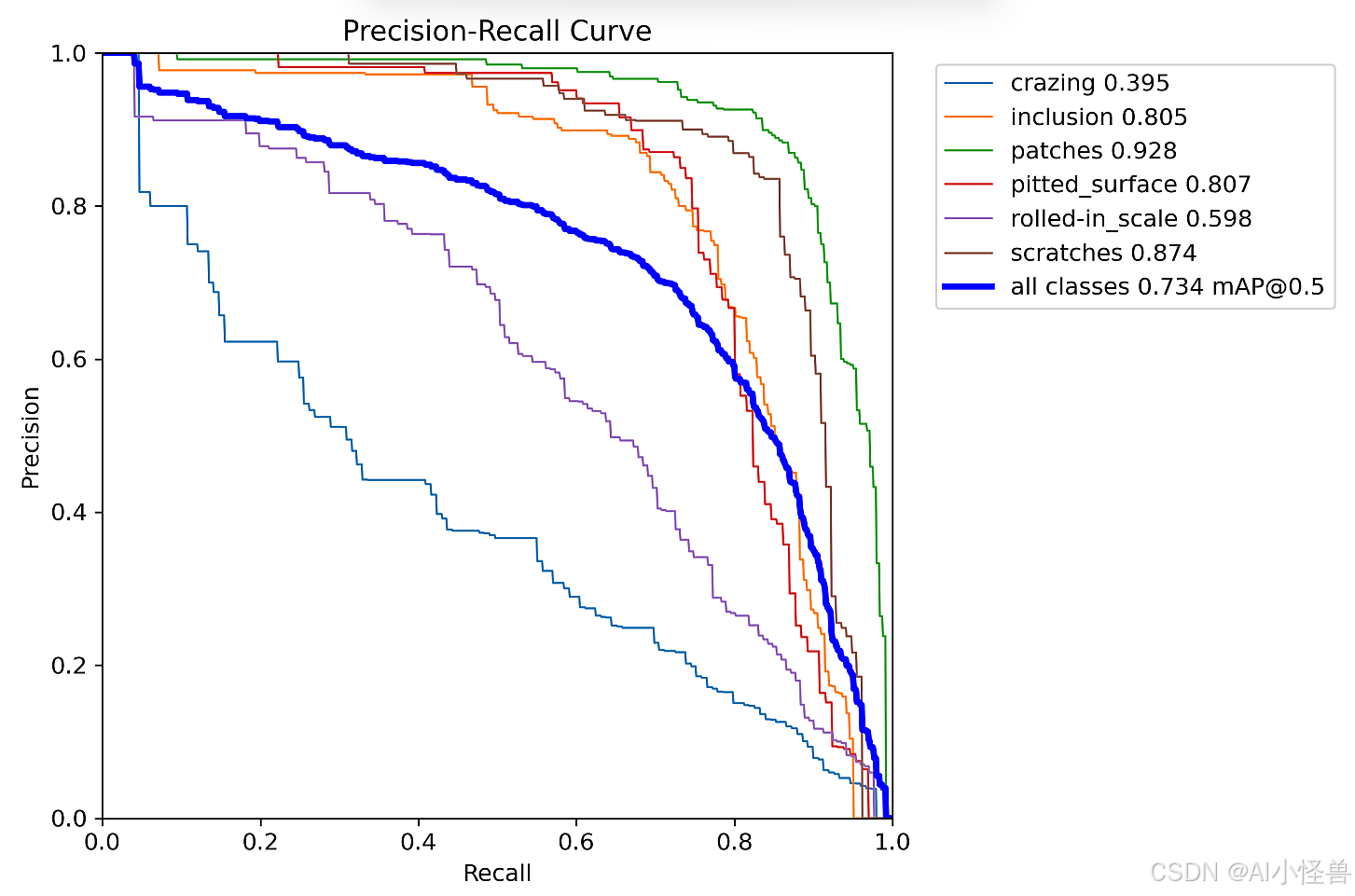

Experiments show that the YOLO26 baseline reaches an mAP50 of 0.722 on NEU-DET. After adding FAAFusion, it increases to 0.734. Recall improves from 0.643 to 0.665, and mAP50-95 rises from 0.407 to 0.410. The mAP50-95 gain is marginal, so most of the improvement shows up in recall and at the looser IoU threshold. For a lightweight model, this is still a meaningful structural gain at a small, controlled cost.
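The reported numbers translate into the absolute and relative gains below. This is plain arithmetic on the figures above, not additional experimental data.

```python
baseline = {"mAP50": 0.722, "Recall": 0.643, "mAP50-95": 0.407}
faafusion = {"mAP50": 0.734, "Recall": 0.665, "mAP50-95": 0.410}


def gains(before: dict, after: dict) -> dict:
    """Absolute change and relative change (%) for each metric in `before`."""
    return {
        name: (
            round(after[name] - before[name], 3),
            round(100 * (after[name] - before[name]) / before[name], 2),
        )
        for name in before
    }


for name, (abs_gain, rel_gain) in gains(baseline, faafusion).items():
    print(f"{name}: +{abs_gain:.3f} absolute, +{rel_gain:.2f}% relative")
```

Recall gains the most in relative terms (about 3.4%), while mAP50-95 moves by less than 1%.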

```python
class FAAFusionIdea:
    # Illustrative only: estimate_angle_from_fourier, rotate_feature and fuse
    # are placeholders that the article does not define.
    def forward(self, low_feat, high_feat):
        angle = estimate_angle_from_fourier(low_feat)  # Estimate the dominant low-level orientation in the frequency domain
        aligned_high = rotate_feature(high_feat, angle)  # Rotate high-level features to complete alignment
        fused = fuse(low_feat, aligned_high)  # Perform multi-scale fusion after alignment
        return fused
```

This illustrative code captures the FAAFusion design principle: align first, fuse later.
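The illustrative class leaves `rotate_feature` and `fuse` undefined. A minimal NumPy sketch of both follows, under simple assumptions: nearest-neighbour rotation about the map centre, and fusion as nearest-neighbour upsampling plus channel concatenation (the usual YOLO Neck pattern). Both are stand-ins chosen for readability; the published FAAFusion module may rotate and fuse differently, for example with interpolation or learned weights.

```python
import numpy as np


def rotate_feature(feat: np.ndarray, angle_deg: float) -> np.ndarray:
    """Rotate every channel of a (C, H, W) map about its centre (nearest neighbour).

    Inverse mapping: each output pixel samples the source pixel it came from;
    pixels whose source falls outside the map are zero-filled.
    """
    _, h, w = feat.shape
    theta = np.deg2rad(angle_deg)
    cos_t, sin_t = np.cos(theta), np.sin(theta)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    src_x = cos_t * (xs - cx) + sin_t * (ys - cy) + cx
    src_y = -sin_t * (xs - cx) + cos_t * (ys - cy) + cy
    sx, sy = np.rint(src_x).astype(int), np.rint(src_y).astype(int)
    valid = (sx >= 0) & (sx < w) & (sy >= 0) & (sy < h)
    out = np.zeros_like(feat)
    out[:, valid] = feat[:, sy[valid], sx[valid]]
    return out


def fuse(low_feat: np.ndarray, high_feat: np.ndarray) -> np.ndarray:
    """Upsample the coarser map to the finer size (nearest), then concatenate channels."""
    scale = low_feat.shape[1] // high_feat.shape[1]
    up = high_feat.repeat(scale, axis=1).repeat(scale, axis=2)
    return np.concatenate([low_feat, up], axis=0)


rng = np.random.default_rng(0)
low = rng.random((16, 40, 40))   # finer, edge-rich level
high = rng.random((32, 20, 20))  # coarser, semantic level
angle = 30.0  # hypothetical value; would come from a frequency-domain estimate on low
fused = fuse(low, rotate_feature(high, angle))
print(fused.shape)  # (48, 40, 40)
```

Rotating before upsampling keeps the rotation cheap because it runs at the coarser resolution; the trade-off is coarser sampling of the rotated map.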

The NEU-DET training configuration shows that this improvement fits small-model optimization

The original YOLO26n summary reports 122 layers, 2,376,006 parameters, and 5.2 GFLOPs. After adding FAAFusion, the model has 128 layers, 2,395,720 parameters, and still 5.2 GFLOPs. That is an increase of only about 19.7K parameters, with almost no change in compute, while accuracy improves consistently.
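The cost figures above can be checked with simple arithmetic on the two model summaries; no new measurements are involved.

```python
baseline_summary = {"layers": 122, "params": 2_376_006, "gflops": 5.2}
faafusion_summary = {"layers": 128, "params": 2_395_720, "gflops": 5.2}

extra_params = faafusion_summary["params"] - baseline_summary["params"]
relative = 100 * extra_params / baseline_summary["params"]
extra_layers = faafusion_summary["layers"] - baseline_summary["layers"]

print(extra_params)          # 19714
print(f"{relative:.2f}%")    # 0.83%
print(extra_layers)          # 6
```

Six extra layers and under 1% more parameters, with GFLOPs unchanged at the reported precision, is what makes the module a low-risk addition for small models.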

This indicates that FAAFusion does not brute-force performance by adding raw compute. Instead, it gains metrics by improving feature fusion quality. In industrial scenarios, a solution that adds few parameters, is reproducible, and remains deployable is often more valuable than simply scaling up the model.

AI Visual Insight: The image shows training curves or evaluation visualizations for baseline YOLO26 on NEU-DET, typically including loss convergence, PR trends, or per-class metric changes. It can be used to assess training stability and generalization performance.

AI Visual Insight: This image corresponds to the training results after introducing FAAFusion. Compared with the baseline, it likely shows better convergence behavior, improved class detection distribution, or stronger evaluation curves, supporting FAAFusion’s positive effect on feature fusion quality.

Three scenarios should be validated first

Validate first on elongated, scratch-like defects; then on steel surface data that mixes scales over complex background textures; and finally on small-sample industrial datasets with obvious orientation variation. These scenarios are the most likely to expose directional conflicts inside Neck fusion.

If your task is dominated by classification confusion rather than localization drift or rotation sensitivity, you should first inspect label quality, data augmentation, and loss weighting before deciding whether to introduce FAAFusion.

FAQ

FAQ 1: Why does FAAFusion improve results on NEU-DET?

Defect textures in NEU-DET exhibit strong directional characteristics. FAAFusion aligns the orientation of cross-scale features before fusion, which reduces the semantic conflict caused by directly concatenating low-level and high-level features. This is consistent with the reported gains in Recall and mAP50.

FAQ 2: What is the most important engineering change in YOLO26 compared with older YOLO versions?

The most noteworthy changes are removing DFL, enabling NMS-free inference, and streamlining the export and deployment pipeline. These modifications make YOLO26 suitable not only for research experiments, but also for edge boards, Jetson devices, and TensorRT production environments.

FAQ 3: Does FAAFusion significantly increase inference overhead?

Based on the reported results, the parameter count rises by only about 19.7K (under 1%) and GFLOPs stay at 5.2, so FAAFusion is a lightweight enhancement module. If implemented well, it acts more like a high-value Neck redesign than a heavy attention stack.

Core Summary: This article reconstructs the key technical path of YOLO26 and FAAFusion for industrial defect detection on NEU-DET. It focuses on YOLO26’s NMS-free inference, DFL removal, ProgLoss/STAL, and MuSGD, and explains how FAAFusion mitigates cross-scale directional conflicts in the Neck through frequency-domain angle alignment, with training settings, code examples, and metric gains included.