For small and mid-sized teams, the core value of a private live chat system is not simply that it can chat. It must be stable, controllable, deployable, and built to run for the long term. This article breaks down the market gap and technical barriers behind these systems, focusing on three core themes: long-lived connections, message reliability, and business state machines.

The technical specification snapshot highlights the product shape

| Parameter | Description |
|---|---|
| Project Type | Private live chat and customer service system |
| Target Users | Small and mid-sized teams, and enterprises that require data control |
| Core Scenarios | Visitor reception, agent collaboration, back-office operations management |
| Estimated Tech Stack | .NET, WebSocket, web admin console, desktop agent client |
| Communication Protocols | WebSocket / HTTP |
| Deployment Modes | Private deployment, Linux, Windows, Docker |
| Core Dependencies | Long-lived connection management, message persistence, authentication and rate limiting, monitoring and logging |
| Star Count | Not provided in the source |

The real opportunity in this market is not the apparent red-ocean competition

When many people look at live chat or customer service systems, their first reaction is that there are already too many vendors and the market is saturated. But if you narrow the requirement to products that are private, transparently priced, directly testable, and stable in production, the list of viable candidates drops sharply.

Most solutions on the market fall into three categories: expensive SaaS platforms, second-tier products with opaque pricing, and small workshop-style systems that demo well but fail in production. The first two come with high deployment thresholds and heavy negotiation costs. The last category usually lacks stability and engineering quality.

Small and mid-sized teams are missing deployable solutions

For a business, a customer service system is not just a widget on a landing page. It is a core entry point connected directly to orders, conversion, and after-sales workflows. As soon as messages are lost, conversations get mixed up, or connections drop, revenue and brand experience are affected immediately.

That is why the real product opportunity is not feature accumulation. It is delivering a focused but durable private product: affordable, well-documented, clearly deployable, and stable enough for long-term operation.

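```python
market_gap = {
    "pain_points": [
        "high cost of private deployment",
        "opaque pricing",
        "poor system stability",
    ],
    # Core product positioning
    "opportunity": "a downloadable, self-deployable customer service system with transparent pricing",
    # Target customer profile
    "target_customers": "small and mid-sized teams and enterprises that value data control",
}

print(market_gap)
```

This code summarizes the product's market entry logic in a structured way.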

The technical complexity of a live chat system is much higher than a chat room or CRUD app

The most common misunderstanding is to think of a customer service system as nothing more than sending and receiving messages over WebSocket. The difficulty is not in the send/receive action itself. The real challenge is keeping the system consistent across large numbers of concurrent connections, complex state, and abnormal runtime conditions.

This is neither a pure frontend component nor a simple backend API. It is a composite system that spans connection management, messaging infrastructure, business orchestration, security protection, and operational deployment.

Long-lived connection stability determines whether the system is production-ready

Live chat systems naturally operate in complex environments: browsers, mobile devices, weak networks, proxies, and enterprise firewalls. The system must do more than establish a connection. It must also handle heartbeats, reconnection, session recovery, and state continuation after network jitter.

Without stable connection management, the system falls into a false-online state: users appear online, but the connection has already been lost. This is one of the hardest classes of production issues to diagnose.
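One common way to catch a false-online state is to record the time of the last heartbeat per connection and treat prolonged silence as a disconnect. The sketch below is illustrative only: `LivenessTracker`, the 30-second interval, the `missed_allowed` tolerance, and the connection IDs are all assumptions made for this example, and the clock is injectable so the behavior can be shown without waiting.

```python
import time
from typing import Callable, Dict, List


class LivenessTracker:
    """Tracks the last heartbeat per connection and flags silent ones (hypothetical helper)."""

    def __init__(
        self,
        heartbeat_interval: float = 30.0,  # assumed to be seconds
        missed_allowed: int = 2,           # assumed tolerance before declaring a connection dead
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.timeout = heartbeat_interval * missed_allowed
        self.clock = clock
        self.last_seen: Dict[str, float] = {}

    def on_heartbeat(self, connection_id: str) -> None:
        # Any heartbeat refreshes the connection's last-seen timestamp.
        self.last_seen[connection_id] = self.clock()

    def stale_connections(self) -> List[str]:
        # A connection is stale when it has been silent longer than the timeout.
        now = self.clock()
        return [cid for cid, seen in self.last_seen.items() if now - seen > self.timeout]

    def evict_stale(self) -> List[str]:
        # Remove stale connections so they stop showing as online.
        stale = self.stale_connections()
        for cid in stale:
            del self.last_seen[cid]
        return stale


# Demo with a fake clock so no real waiting is needed.
fake_now = [0.0]
tracker = LivenessTracker(clock=lambda: fake_now[0])
tracker.on_heartbeat("visitor-1")
tracker.on_heartbeat("agent-7")
fake_now[0] = 50.0
tracker.on_heartbeat("agent-7")   # agent-7 keeps sending heartbeats
fake_now[0] = 70.0
print(tracker.evict_stale())      # visitor-1 has been silent for 70s, past the 60s timeout
```

In a real server the eviction would typically run on a timer and also update presence shown to agents; that wiring is omitted here.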

```python
class ConnectionPolicy:
    def __init__(self):
        self.heartbeat_interval = 30  # Heartbeat interval to prevent silent connection hangs
        self.retry_limit = 5  # Maximum number of reconnection attempts

    def should_reconnect(self, disconnected: bool, retry_count: int) -> bool:
        # Reconnect only if disconnected and under the retry limit
        return disconnected and retry_count < self.retry_limit
```

This code shows the minimum policy model for long-lived connection stability.
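A retry limit says how many times to reconnect, but not how long to wait between attempts. A widely used approach is exponential backoff with jitter, so that many clients dropped at the same moment do not all reconnect at once. The following is a minimal sketch under assumed values: the function names, the 1-second base, and the 30-second cap are illustrative and not taken from the product.

```python
import random
from typing import Optional


def backoff_ceiling(retry_count: int, base: float = 1.0, cap: float = 30.0) -> float:
    """Upper bound of the wait before attempt `retry_count` (0-based), in seconds."""
    return min(cap, base * 2 ** retry_count)


def reconnect_delay(
    retry_count: int,
    base: float = 1.0,
    cap: float = 30.0,
    rng: Optional[random.Random] = None,
) -> float:
    """Full-jitter backoff: a random delay between 0 and the exponential ceiling."""
    rng = rng if rng is not None else random.Random()
    return rng.uniform(0.0, backoff_ceiling(retry_count, base, cap))


# Show the ceiling and one sampled delay for each of five attempts.
for attempt in range(5):
    ceiling = backoff_ceiling(attempt)
    delay = reconnect_delay(attempt)
    print(f"attempt {attempt}: wait {delay:.2f}s (ceiling {ceiling:.0f}s)")
```

The ceilings for five attempts are 1, 2, 4, 8, and 16 seconds; the sampled delays differ on every run because of the jitter.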

Message reliability and ordering control form the core barrier of a customer service system

A message being sent does not mean it was delivered. In a customer service system, you must handle ACK confirmation, retry on failure, idempotent deduplication, ordering guarantees, and persistent write-back. Otherwise, you will see lost messages, duplicate messages, or broken conversation context.

You must also define whether out-of-order delivery is allowed within the same session, how to compensate for storage failures, and how to trace messages that were not delivered during a disconnection. All of this requires strict message lifecycle management.
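To make idempotent deduplication and in-session ordering concrete, here is one possible receiver-side sketch. It assumes the sender stamps every message with a per-session sequence number starting at 1; that numbering scheme and the `SessionInbox` class are assumptions for illustration, not a description of how any particular product does it.

```python
from typing import Dict, List, Tuple


class SessionInbox:
    """Per-session receiver that drops duplicates and releases messages in order."""

    def __init__(self) -> None:
        self.next_seq = 1                  # next sequence number we expect to deliver
        self.pending: Dict[int, str] = {}  # out-of-order messages waiting for a gap to fill

    def receive(self, seq: int, body: str) -> List[Tuple[int, str]]:
        """Accept one message; return the messages that can now be delivered, in order."""
        # Duplicate: already delivered, or already buffered. Safe to ignore (idempotent).
        if seq < self.next_seq or seq in self.pending:
            return []
        self.pending[seq] = body
        released: List[Tuple[int, str]] = []
        # Release the longest contiguous run starting at the expected sequence number.
        while self.next_seq in self.pending:
            released.append((self.next_seq, self.pending.pop(self.next_seq)))
            self.next_seq += 1
        return released


inbox = SessionInbox()
print(inbox.receive(1, "Hello"))            # [(1, 'Hello')]
print(inbox.receive(3, "Order #?"))         # [] because 2 has not arrived yet
print(inbox.receive(1, "Hello"))            # [] because this is a retransmitted duplicate
print(inbox.receive(2, "I need help"))      # [(2, 'I need help'), (3, 'Order #?')]
```

Buffering until the gap fills is one policy; a system that tolerates out-of-order delivery could instead deliver immediately and only deduplicate.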

Consistency issues usually appear under concurrency and failure scenarios

Low-concurrency tests rarely expose real problems. Message routing and state synchronization issues usually surface only when multiple agents, high-frequency messaging, transfers, and visitor reconnect scenarios are combined.

This is the fundamental reason many systems that seem usable in demos begin to fail once they reach production.

```python
def handle_message(message_id, session_id, ack_received, store_ok):
    if not store_ok:
        return "retry"  # Storage failed; enter the retry flow
    if not ack_received:
        return "pending"  # Delivery not yet confirmed; wait for ACK
    return f"delivered:{session_id}:{message_id}"  # Delivered successfully and fully closed out
```

This code captures the three-step message handling loop: storage, confirmation, and retry.
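The "pending" branch raises the question of what happens when the ACK never arrives. One possible sender-side answer is an outbox that tracks unacknowledged messages, resends them after a timeout, and gives up after a bounded number of attempts. The `Outbox` class, the 5-second timeout, and the attempt limit below are hypothetical choices for this sketch; timestamps are passed in explicitly to keep it deterministic.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class OutboundMessage:
    message_id: str
    session_id: str
    body: str
    sent_at: float
    attempts: int = 1


class Outbox:
    """Tracks messages awaiting ACK and decides which ones to resend (illustrative)."""

    def __init__(self, ack_timeout: float = 5.0, max_attempts: int = 3) -> None:
        self.ack_timeout = ack_timeout
        self.max_attempts = max_attempts
        self.unacked: Dict[str, OutboundMessage] = {}
        self.failed: List[OutboundMessage] = []  # candidates for offline follow-up

    def track(self, message_id: str, session_id: str, body: str, now: float) -> None:
        self.unacked[message_id] = OutboundMessage(message_id, session_id, body, sent_at=now)

    def ack(self, message_id: str) -> bool:
        # Idempotent: a repeated ACK for the same message returns False and changes nothing.
        return self.unacked.pop(message_id, None) is not None

    def due_for_retry(self, now: float) -> List[OutboundMessage]:
        due: List[OutboundMessage] = []
        for msg in list(self.unacked.values()):
            if now - msg.sent_at < self.ack_timeout:
                continue  # still within the ACK window
            if msg.attempts >= self.max_attempts:
                self.failed.append(self.unacked.pop(msg.message_id))  # give up
            else:
                msg.attempts += 1
                msg.sent_at = now
                due.append(msg)
        return due


outbox = Outbox(ack_timeout=5.0, max_attempts=2)
outbox.track("m1", "s1", "Hello", now=0.0)
outbox.track("m2", "s1", "Are you there?", now=0.0)
outbox.ack("m1")
print([m.message_id for m in outbox.due_for_retry(now=6.0)])   # ['m2']
print([m.message_id for m in outbox.due_for_retry(now=12.0)])  # []
print([m.message_id for m in outbox.failed])                   # ['m2']
```

Because resends can produce duplicates on the receiving side, this pattern only works when the receiver deduplicates by message ID or sequence number.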

Complex business state machines are what fundamentally separate customer service systems from chat rooms

A customer service system must handle session assignment, skill-group routing, priority queuing, context-aware transfers, offline messages, and handoff between AI and human agents. None of these flows are static pages. They are dynamic state machines.

These states are tightly coupled. A visitor may enter a queue, be handled by a bot, then be taken over by a human agent, later transferred to a specific group, and finally moved into offline follow-up. Any synchronization error in these transitions directly damages the service experience.
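The visitor journey described above can be modeled as an explicit state machine in which every transition is checked against a table. The state names and the allowed transitions in this sketch are assumptions chosen to match that journey; a real system would likely have more states and would persist each transition.

```python
from enum import Enum
from typing import Dict, List, Set


class SessionState(Enum):
    QUEUED = "queued"
    BOT = "bot"
    AGENT = "agent"
    TRANSFERRED = "transferred"
    OFFLINE_FOLLOW_UP = "offline_follow_up"
    CLOSED = "closed"


S = SessionState
# Assumed transition table: each state maps to the states it may move to.
ALLOWED: Dict[SessionState, Set[SessionState]] = {
    S.QUEUED: {S.BOT, S.AGENT, S.OFFLINE_FOLLOW_UP},
    S.BOT: {S.AGENT, S.OFFLINE_FOLLOW_UP, S.CLOSED},
    S.AGENT: {S.TRANSFERRED, S.OFFLINE_FOLLOW_UP, S.CLOSED},
    S.TRANSFERRED: {S.AGENT, S.OFFLINE_FOLLOW_UP, S.CLOSED},
    S.OFFLINE_FOLLOW_UP: {S.QUEUED, S.CLOSED},
    S.CLOSED: set(),
}


class InvalidTransition(Exception):
    pass


class Session:
    def __init__(self, session_id: str) -> None:
        self.session_id = session_id
        self.state = S.QUEUED
        self.history: List[SessionState] = [S.QUEUED]

    def transition(self, new_state: SessionState) -> None:
        # Reject any move that the table does not explicitly allow.
        if new_state not in ALLOWED[self.state]:
            raise InvalidTransition(f"{self.state.value} -> {new_state.value}")
        self.state = new_state
        self.history.append(new_state)


session = Session("s-1001")
for step in (S.BOT, S.AGENT, S.TRANSFERRED, S.OFFLINE_FOLLOW_UP):
    session.transition(step)
print(" -> ".join(state.value for state in session.history))
# queued -> bot -> agent -> transferred -> offline_follow_up
```

Centralizing the table means a synchronization bug shows up as a rejected transition rather than a silently corrupted session.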

Product capability ultimately shows up as scheduling and state-control capability

The value of a mature customer service system does not stop at the chat window. It depends on whether the platform can unify who handles a session, when it should be transferred, how context is restored, and how performance is measured within one stable mechanism.

That is also why the desktop agent client, visitor client, and web admin console must be designed as a coordinated system.
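Deciding who handles a session next often comes down to a priority queue per skill group. The sketch below uses Python's `heapq` for that; the `SkillQueue` class, the group name, and the convention that a lower number means higher priority are assumptions for illustration.

```python
import heapq
import itertools
from typing import Dict, List, Optional, Tuple


class SkillQueue:
    """Priority queue of waiting sessions, one heap per skill group (illustrative)."""

    def __init__(self) -> None:
        self._heaps: Dict[str, List[Tuple[int, int, str]]] = {}
        self._counter = itertools.count()  # tie-breaker that preserves arrival order

    def enqueue(self, session_id: str, skill_group: str, priority: int = 10) -> None:
        # Lower priority number is served first; equal priorities are served FIFO.
        heap = self._heaps.setdefault(skill_group, [])
        heapq.heappush(heap, (priority, next(self._counter), session_id))

    def next_session(self, skill_group: str) -> Optional[str]:
        # Called when an agent in this group becomes free.
        heap = self._heaps.get(skill_group)
        if not heap:
            return None
        return heapq.heappop(heap)[2]


queue = SkillQueue()
queue.enqueue("s1", "billing")
queue.enqueue("s2", "billing", priority=1)  # e.g. a returning customer, bumped up
queue.enqueue("s3", "billing")
print(queue.next_session("billing"))  # s2
print(queue.next_session("billing"))  # s1
print(queue.next_session("billing"))  # s3
print(queue.next_session("billing"))  # None
```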

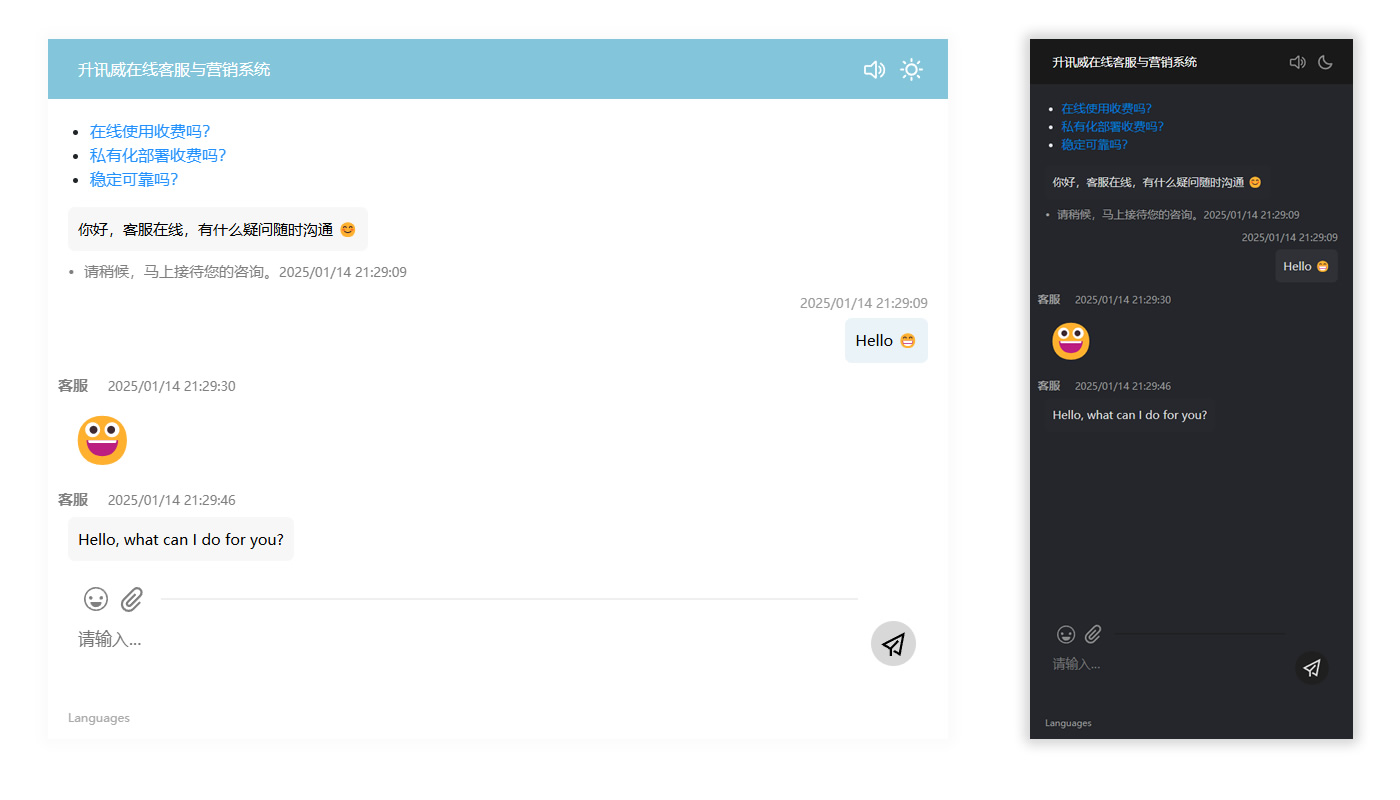

AI Visual Insight: This image shows the visitor-side chat interface, including the conversation window, input area, and branded entry point. It emphasizes an embedded reception experience. The interface highlights lightweight integration, fast session initiation, and real-time message feedback, making it suitable as an instant communication component on the public-facing website.

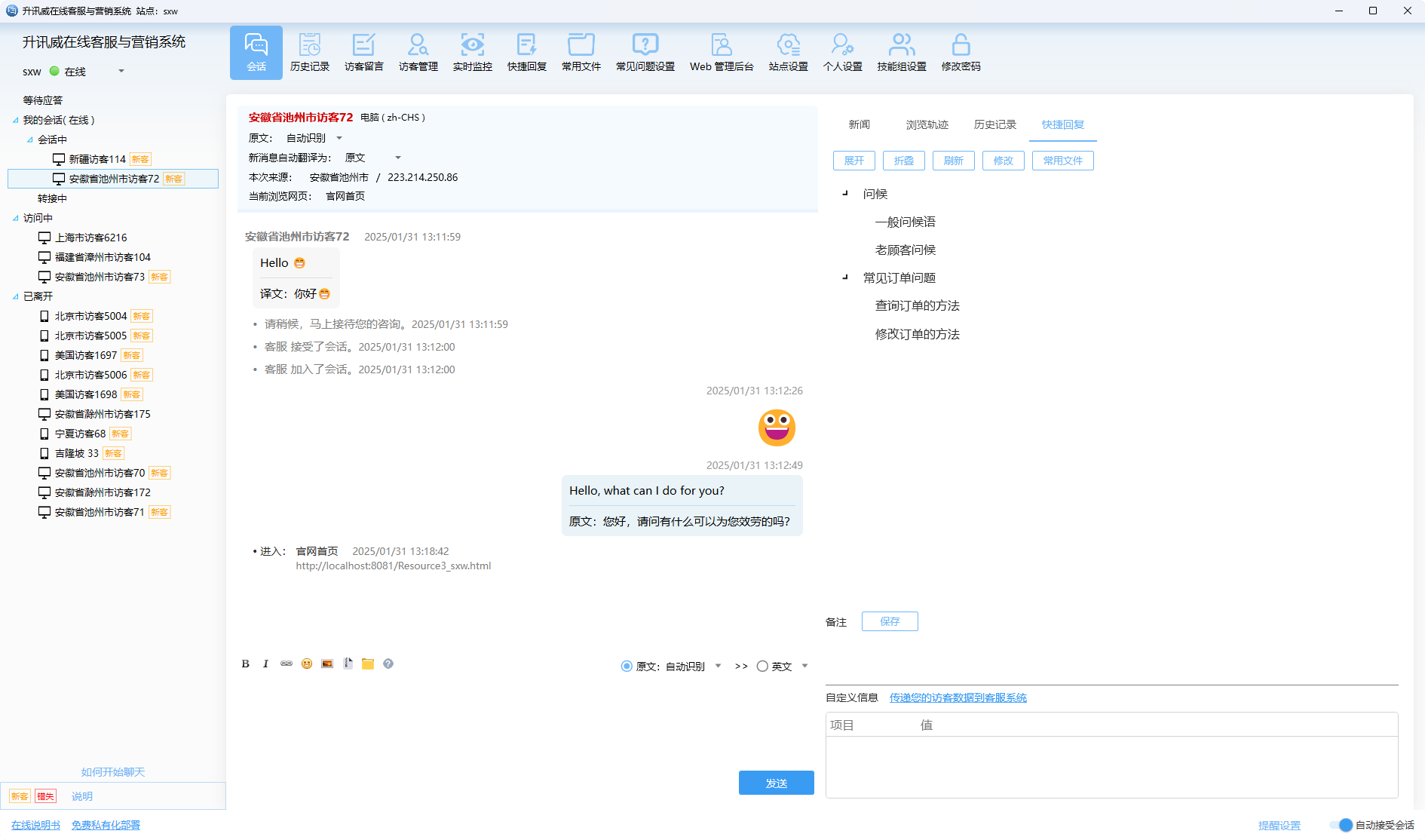

AI Visual Insight: This image shows the agent workspace, including a multi-session list, the active conversation area, a visitor information panel, and a quick-action section. It reflects a typical high-concurrency support workflow for service agents. Technically, it shows how session handling, side-panel intelligence, and operational actions are integrated into a single desktop-grade interaction flow.

AI Visual Insight: This image shows the web admin console, including menu navigation, configuration items, and statistics or management views. It illustrates that the system provides not only conversation capabilities, but also back-end governance features such as agent management, rule configuration, data operations, and permission control.

Private deployment capability is itself part of the product’s competitiveness

Many teams underestimate how difficult it is to make a system downloadable and successfully deployable. In real-world scenarios, the system must deal with different cloud images, Linux distributions, Windows environment differences, and the coexistence of Docker-based and traditional deployments.

On top of that, the product must include logging, monitoring, diagnostics, upgrades, and one-click installation scripts. Without these capabilities, private deployment is just a sales pitch rather than a repeatable delivery capability.
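Diagnostics for a self-hosted install can start as a small registry of named health checks that an operator or an installer script can run. This is a minimal sketch: `HealthReport`, the check names, and the 100 MB disk threshold are hypothetical, and the second check is a stand-in that always fails so the output shows both outcomes.

```python
import shutil
from typing import Callable, Dict, Tuple

Check = Callable[[], Tuple[bool, str]]


class HealthReport:
    """Runs registered checks and summarizes them (hypothetical diagnostic helper)."""

    def __init__(self) -> None:
        self._checks: Dict[str, Check] = {}

    def register(self, name: str, check: Check) -> None:
        self._checks[name] = check

    def run(self) -> Dict[str, object]:
        results: Dict[str, Dict[str, object]] = {}
        healthy = True
        for name, check in self._checks.items():
            try:
                ok, detail = check()
            except Exception as exc:  # a crashing check counts as a failed check
                ok, detail = False, f"check raised {type(exc).__name__}: {exc}"
            results[name] = {"ok": ok, "detail": detail}
            healthy = healthy and ok
        return {"healthy": healthy, "checks": results}


def disk_space_check() -> Tuple[bool, str]:
    # Assumed threshold: at least 100 MB free in the working directory.
    free_mb = shutil.disk_usage(".").free // (1024 * 1024)
    return free_mb >= 100, f"{free_mb} MB free"


def database_check() -> Tuple[bool, str]:
    # Placeholder: a real check would open a connection to the configured database.
    return False, "no database configured in this demo"


report = HealthReport()
report.register("disk", disk_space_check)
report.register("database", database_check)
print(report.run())
```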

Operability determines whether a private product can scale through replication

Only when environment adaptation, version upgrades, and fault diagnosis become standardized processes can a private customer service system move beyond project-based delivery and become a repeatable, downloadable, continuously evolving product.

This is also why the space can build a real moat: the problems are not only hard to solve, but even harder for new entrants to fully discover.

```python
deploy_targets = ["Linux", "Windows", "Docker"]

for target in deploy_targets:
    # Generate a standardized delivery entry point for each environment
    print(f"Initializing {target} deployment script")
```

This code expresses the need to standardize private deployment across multiple target environments.

The product outcome proves that stability and transparent delivery can coexist

The product's positioning is clear: it is built for 24/7 unattended operation, recovers after network interruptions, and tolerates unstable mobile networks without dropping connections or losing messages. It also lets users obtain a private edition directly for evaluation.

This combination of capabilities matters. It means the product’s competitiveness no longer depends primarily on sales tactics. It comes from engineering quality, delivery transparency, and long-term iteration.

For independent developers, products with high engineering thresholds like this are more likely to create differentiation than bookkeeping apps, note-taking tools, or Todo apps. The barrier is not the idea. The barrier is the system capability accumulated through years of solving edge cases.

The FAQ provides structured answers to the key questions

Why is there still market room for private live chat and customer service systems?

Because small and mid-sized teams truly need solutions with controllable pricing, transparent deployment, and full control over their own data. Products that combine all of these properties are still not sufficiently available in the current market.

Why is the technical barrier for a customer service system higher than for a standard IM app or chat room?

Because it must do much more than transmit messages. It must handle long-lived connection stability, message consistency, business orchestration state machines, security protection, and cross-environment deployment. Failure in any one of these areas affects production usability.

What is the core advantage for an independent developer building this kind of system?

The advantage lies in the engineering moat built through long-term iteration. If you can keep solving edge cases, improve stability, and simplify private delivery, you can build a form of competitiveness that is hard to replicate.

The core summary explains why this is not a low-barrier red-ocean market

This article has outlined the market gap and technical barriers of private live chat and customer service systems. It shows why this space is not a low-barrier red ocean and summarizes the key capabilities required to compete: stable long-lived connections, message consistency, state machines, and deployment standardization.