Surging Engine-CLI is upgrading the traditional .NET microservices scaffolding model into a plugin-based Agent platform that can execute real engineering tasks. It uses Semantic Kernel for orchestration, LLamaSharp for local inference, and standardized function plugins for execution, addressing three common pain points: tedious service initialization, tightly coupled extension paths, and limited automation. Keywords: Surging, Semantic Kernel, LLamaSharp.

Technical Specifications at a Glance

| Parameter | Details |
|---|---|
| Core Language | C# / .NET |
| Architecture Pattern | Microservices engineering CLI + plugin-based Agent |
| AI Orchestration | Semantic Kernel plugins and service abstractions |
| Inference Mode | Local LLM inference with LLamaSharp |
| Typical Models | Llama 3, Qwen |
| Core Dependencies | Semantic Kernel, LLamaSharp, Surging |
| Deployment Model | Local / private / enterprise intranet |
| GitHub Stars | Not provided in the source |

This approach is upgrading microservice engineering from command execution to intent execution

Surging has traditionally delivered value in the .NET microservices space through its lightweight, high-performance, and modular architecture. Engine-CLI has handled the engineering workflow, including project initialization, code generation, configuration setup, and deployment orchestration.

The key upgrade here is not adding a chat box on top of a CLI. The real shift is standardizing function capabilities as plugins, then letting the model complete tasks through those plugins. In this model, developers express requirements, while the system executes traceable engineering actions.

Traditional CLIs are limited by three categories of repetitive work

The first issue is high setup cost. Creating a service, filling in configuration, integrating with a registry, and generating template code are all fundamentally mechanical tasks, yet they repeatedly consume senior developer time.

The second issue is extension coupling. Every new feature or third-party integration often requires changes to core logic, which slows down CLI evolution and makes it harder for teams to accumulate reusable capabilities.

The third issue is the lack of intent understanding. A traditional scaffold can only respond to fixed commands. It cannot decompose a request like “create a user service with CRUD, Swagger, and Consul registration” into executable engineering steps.

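```csharp
using Microsoft.SemanticKernel;
using Microsoft.SemanticKernel.ChatCompletion;

// Assumes `kernel` (plugins registered) and `chatService` (local IChatCompletionService) are configured.
// The developer enters a natural-language requirement
var prompt = "Create a user microservice with CRUD endpoints and register it with Consul";

// The local model interprets the intent first
var intent = await chatService.GetChatMessageContentAsync(prompt);

// The Kernel plans the task based on registered plugins (auto function calling needs connector support)
var settings = new PromptExecutionSettings { FunctionChoiceBehavior = FunctionChoiceBehavior.Auto() };
var result = await kernel.InvokePromptAsync(intent.Content ?? prompt, new KernelArguments(settings));
```

This flow shows the minimal closed loop from natural language to plugin dispatch.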
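The following minimal, deterministic sketch shows the kind of decomposition expected here. Keyword matching stands in for the model, and the sketch turns a requirement into an ordered list of plugin steps. The step names (`project.create`, `codegen.crud`, `swagger.enable`, `consul.register`) are hypothetical and are not Engine-CLI identifiers. A real model would extract intent far more flexibly than substring checks.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical mapping from requirement keywords to plugin steps.
// Keyword matching is only a stand-in for the model's intent extraction.
var rules = new (string Keyword, string Step)[]
{
    ("crud", "codegen.crud"),
    ("swagger", "swagger.enable"),
    ("consul", "consul.register"),
};

List<string> Plan(string requirement)
{
    var text = requirement.ToLowerInvariant();
    // Every plan starts by creating the project skeleton.
    var steps = new List<string> { "project.create" };
    // Rule order, not word order in the request, fixes the execution order.
    steps.AddRange(rules.Where(r => text.Contains(r.Keyword)).Select(r => r.Step));
    return steps;
}

var plan = Plan("Create a user microservice with CRUD endpoints and register it with Consul");
Console.WriteLine(string.Join(" -> ", plan));
// prints "project.create -> codegen.crud -> consul.register"
```

Because the plan is plain data, every step can be logged, reviewed, or replayed before anything is generated. That is what makes the resulting engineering actions traceable.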

Semantic Kernel and LLamaSharp establish a clean two-layer capability split

LLamaSharp handles model runtime concerns, while Semantic Kernel handles task orchestration. Together, they give Engine-CLI a controllable and extensible AI foundation that fits enterprise intranet environments.

LLamaSharp provides local inference and native .NET integration

Built on top of llama.cpp, LLamaSharp brings local model execution to .NET. Its value is not just that it can run models. It also supports local deployment, CPU/GPU hybrid inference, and native C# integration.

For enterprise scenarios, this means code, configuration, prompts, and execution data can remain entirely inside the internal network without relying on external APIs. That property is what makes it suitable for engineering toolchains rather than just experimental demos.

Semantic Kernel provides plugin abstraction and task orchestration

Semantic Kernel exposes unified abstractions such as IChatCompletionService and a plugin system, fully decoupling model capabilities from business functions. Once an Engine-CLI function is registered as a plugin, the Agent can discover, select, and invoke it.

```csharp
using System.ComponentModel;
using Microsoft.SemanticKernel;

// Registration elsewhere, e.g.: kernel.Plugins.AddFromType<ProjectPlugin>("Project");
public class ProjectPlugin
{
    [KernelFunction, Description("Create a Surging microservice project")]
    public string CreateService(
        [Description("Service name")] string serviceName,
        [Description("Whether to enable Swagger")] bool swagger)
    {
        // Core logic: generate the service skeleton from templates
        return $"Service {serviceName} created successfully, Swagger={swagger}";
    }
}
```

This example shows that pluginization does not require a complicated framework. What it requires is the standardization of functions, parameters, and semantic descriptions.
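Semantic Kernel builds each function's schema from these attributes and exposes that schema to the model. The dependency-free sketch below imitates the idea with a hypothetical list of descriptors. It renders a catalog of functions, parameters, and descriptions, and it rejects calls whose arguments do not match the declared parameters. The descriptor shape, the `Project.CreateService` naming, and the `Consul.Register` function are illustrative assumptions and do not reflect Semantic Kernel's internal format.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical descriptors carrying the same facts as [KernelFunction] and [Description].
var functions = new List<(string Name, string Description, (string Name, string Type, string Description)[] Parameters)>
{
    ("Project.CreateService", "Create a Surging microservice project", new[]
    {
        ("serviceName", "string", "Service name"),
        ("swagger", "bool", "Whether to enable Swagger"),
    }),
    ("Consul.Register", "Register a service with Consul", new[]
    {
        ("serviceName", "string", "Service name"),
    }),
};

// Render the catalog text a model could read when selecting a function.
string RenderCatalog() => string.Join("\n", functions.Select(f =>
    $"{f.Name}: {f.Description} (" +
    string.Join(", ", f.Parameters.Select(p => $"{p.Name}: {p.Type} - {p.Description}")) + ")"));

// Accept a call only if it names a known function and supplies exactly its declared parameters.
bool IsValidCall(string name, IReadOnlyDictionary<string, string> args)
{
    var f = functions.FirstOrDefault(x => x.Name == name);
    return f.Name is not null
        && f.Parameters.Length == args.Count
        && f.Parameters.All(p => args.ContainsKey(p.Name));
}

Console.WriteLine(RenderCatalog());
Console.WriteLine(IsValidCall("Project.CreateService",
    new Dictionary<string, string> { ["serviceName"] = "UserService", ["swagger"] = "true" }));
```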

A standardized plugin system is Engine-CLI’s most important engineering breakthrough

The most valuable idea in the source material is the plugin architecture built on four principles: standardized contracts, modular development, dynamic loading, and intelligent scheduling. This allows the Agent to depend on an extensible function marketplace rather than hard-coded workflows.

Modular development allows plugins to evolve independently

Each plugin handles one clearly defined responsibility, such as project creation, CRUD generation, Consul registration, circuit breaker configuration, or Docker packaging. Plugins acquire resources through dependency injection instead of reaching directly into Engine-CLI's core logic.

This model leads to two practical outcomes. First, teams can develop plugins in parallel. Second, enterprises can quickly wrap internal tools as Agent-callable capabilities without rewriting the entire CLI.
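Below is a minimal sketch of that dependency-injection boundary, under stated assumptions. A hypothetical Consul-registration plugin receives only an `IServiceProvider` and asks it for the endpoint it needs. To stay dependency-free, the sketch uses the BCL's `System.ComponentModel.Design.ServiceContainer`; a real host would more likely use `Microsoft.Extensions.DependencyInjection`. The endpoint address is a placeholder, and the plugin does not actually contact Consul.

```csharp
using System;
using System.ComponentModel.Design;

// Host side: the CLI (hypothetically) registers shared resources once.
var services = new ServiceContainer();
services.AddService(typeof(Uri), new Uri("http://consul.internal:8500")); // placeholder address

// Plugin side: the plugin sees only IServiceProvider, never the CLI's internals.
string RegisterWithConsul(IServiceProvider provider, string serviceName)
{
    if (provider.GetService(typeof(Uri)) is not Uri consul)
        return $"Skipped {serviceName}: no Consul endpoint configured";
    // A real plugin would call the Consul HTTP API here; this sketch only reports the target.
    return $"Registered {serviceName} at {consul.Host}:{consul.Port}";
}

Console.WriteLine(RegisterWithConsul(services, "UserService"));
Console.WriteLine(RegisterWithConsul(new ServiceContainer(), "OrderService"));
```

Because the plugin depends on the provider rather than on the CLI, a team can test it in isolation by passing in a container populated with fake services.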

Dynamic management gives the toolchain runtime flexibility

Plugins support installation, enablement, disablement, uninstallation, and version switching. For enterprises, this means governance capabilities, deployment features, and code generation modules can be loaded per project rather than bundled into the core by default.

```shell
# Install a plugin
engine plugin install surging.codegen

# Enable a specific version
engine plugin enable surging.codegen --version 1.2.0

# List installed plugins
engine plugin list
```

These commands highlight the value of plugin lifecycle management for engineering toolchain stability.
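The lifecycle behind those commands can be modeled as a small state machine. The sketch below is an assumption about how such a registry might track state and is not Engine-CLI's implementation. In this model, several versions of a plugin can be installed side by side, at most one version is enabled, and uninstalling the active version also disables it. The version numbers are made up for illustration.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical registry state: installed versions per plugin, plus the enabled version.
var installed = new Dictionary<string, HashSet<string>>();
var enabled = new Dictionary<string, string>();

void Install(string plugin, string version)
{
    if (!installed.TryGetValue(plugin, out var versions))
        installed[plugin] = versions = new HashSet<string>();
    versions.Add(version);
}

void Enable(string plugin, string version)
{
    // Only an installed version can be enabled; enabling switches the active version.
    if (!installed.TryGetValue(plugin, out var versions) || !versions.Contains(version))
        throw new InvalidOperationException($"{plugin} {version} is not installed");
    enabled[plugin] = version;
}

void Disable(string plugin) => enabled.Remove(plugin);

void Uninstall(string plugin, string version)
{
    if (installed.TryGetValue(plugin, out var versions) && versions.Remove(version))
    {
        // Removing the active version must not leave a dangling "enabled" entry.
        if (enabled.TryGetValue(plugin, out var active) && active == version) enabled.Remove(plugin);
        if (versions.Count == 0) installed.Remove(plugin);
    }
}

Install("surging.codegen", "1.1.0");
Install("surging.codegen", "1.2.0");
Enable("surging.codegen", "1.2.0");
Uninstall("surging.codegen", "1.2.0");
Console.WriteLine(enabled.ContainsKey("surging.codegen")); // False
Console.WriteLine(string.Join(", ", installed["surging.codegen"])); // 1.1.0
```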

The plugin-based Agent is reshaping the human-computer interface of microservice development

The purpose of an Agent is not to replace engineers. It is to free them from memorizing commands and repeating configuration work. Developers describe the target, and the Agent breaks the work into tasks, assembles plugins, and returns results.

A typical flow looks like this: input requirement → local model identifies intent → Kernel selects plugins → plugins generate code and configuration → the system outputs structured results. That workflow turns a CLI from a command collection into a task executor.
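The same flow can be expressed as data passing through a dispatch table. In this hedged sketch, the plan is assumed to have already been produced by the model. Each hypothetical plugin is a delegate keyed by step name, and every call is recorded as a structured entry so the run can be audited. The generated file paths are invented placeholders.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical plugins keyed by step name; each returns a placeholder artifact path.
var plugins = new Dictionary<string, Func<string, string>>
{
    ["project.create"] = svc => $"src/{svc}/{svc}.csproj",
    ["codegen.crud"] = svc => $"src/{svc}/{svc}.Crud.cs",
    ["consul.register"] = svc => $"config/{svc}.consul.json",
};

// Execute a plan and return one structured record per attempted step.
List<(string Step, bool Ok, string Output)> Execute(string service, IEnumerable<string> plan)
{
    var trace = new List<(string Step, bool Ok, string Output)>();
    foreach (var step in plan)
    {
        if (!plugins.TryGetValue(step, out var plugin))
        {
            // Stop at the first unknown step instead of guessing.
            trace.Add((step, false, "no plugin registered"));
            break;
        }
        trace.Add((step, true, plugin(service)));
    }
    return trace;
}

foreach (var r in Execute("UserService", new[] { "project.create", "codegen.crud", "consul.register" }))
    Console.WriteLine($"{r.Step,-16} {(r.Ok ? "ok" : "failed"),-6} {r.Output}");
```

Stopping at the first unknown step, rather than skipping it, is a deliberate choice: a partially executed plan is easier to diagnose than one that silently omits work.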

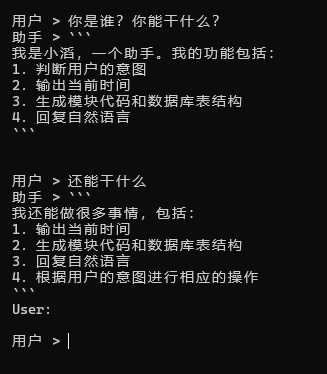

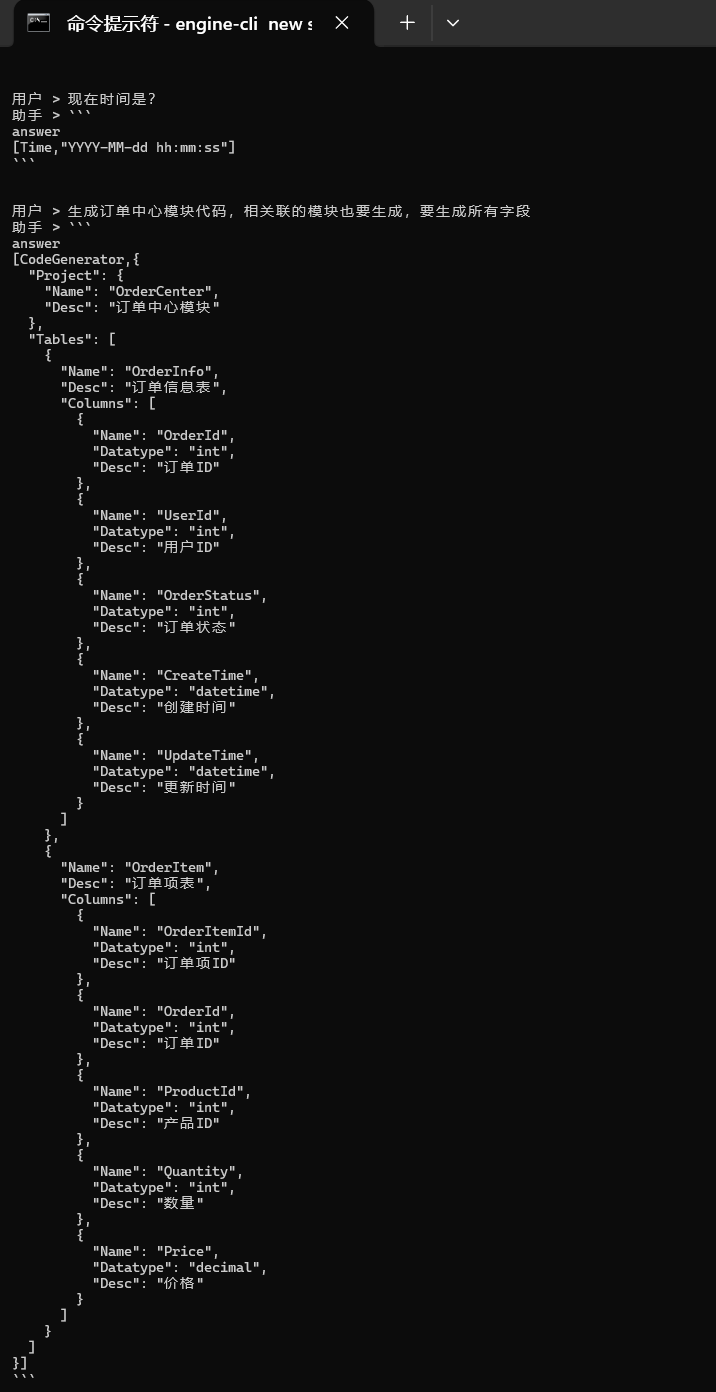

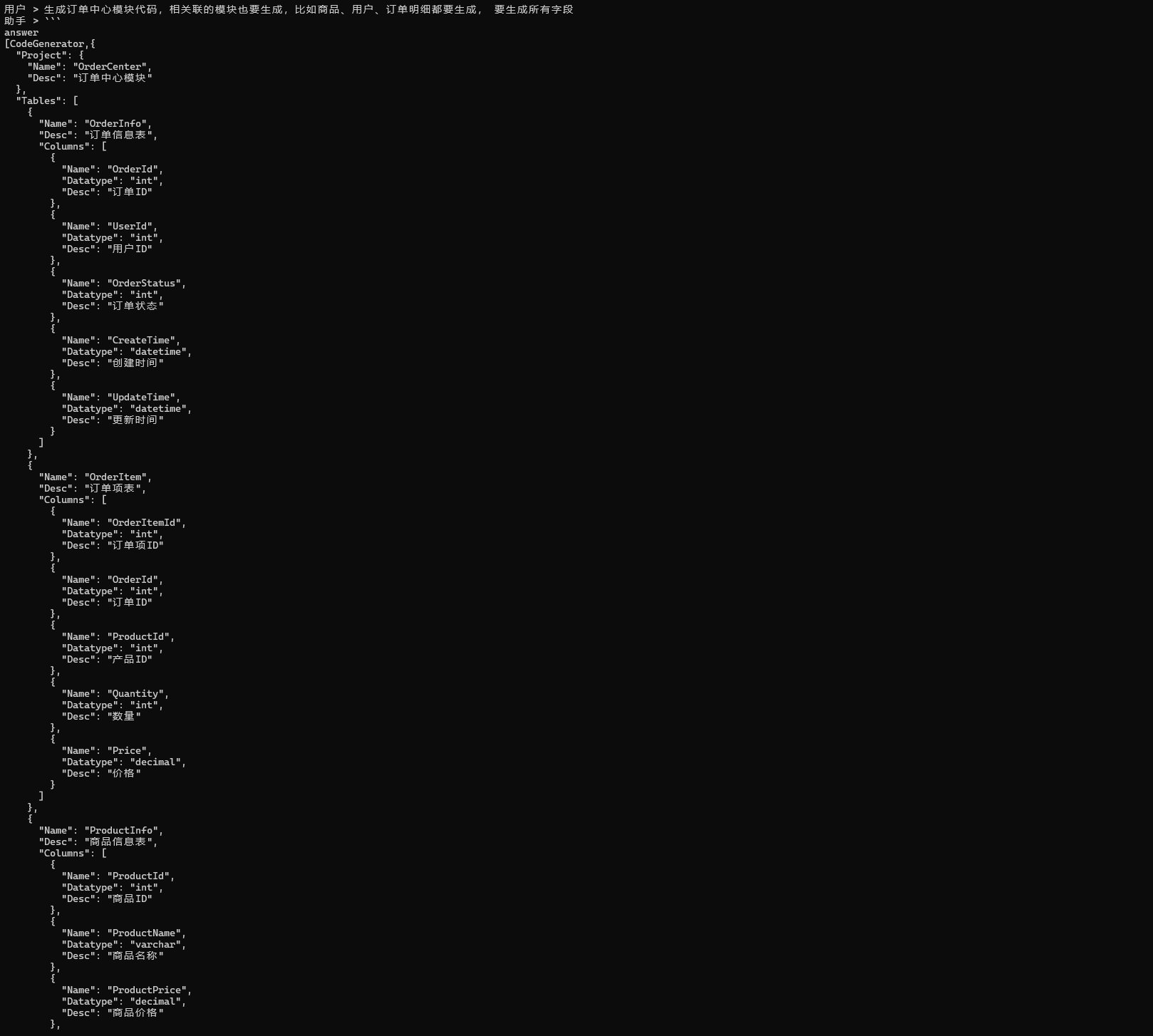

The prototype already demonstrates rule-based generation and template-driven delivery

AI Visual Insight: This image shows that the model can already generate structured fields according to predefined rules. The UI displays clearly segmented output and parameterized content, indicating that the Agent is not limited to natural-language responses and is moving toward an intermediate representation that template engines can consume directly.

AI Visual Insight: This image shows that the generated result already exhibits a JSON-oriented structure with clear field hierarchy, making it suitable as input for the Surging template engine. In other words, model output is evolving from “readable text” into “executable artifact descriptions.”

AI Visual Insight: This image illustrates the final hop from structured JSON to generated module code. It shows that the template engine, code skeletons, and the dotnet build-and-publish pipeline have already been connected end to end, validating the feasibility of the Agent → template → compile workflow.

Combining rule-based output with a template engine is highly suitable for enterprise adoption

The source explicitly notes that the model can first output JSON according to predefined rules, then let the Surging template engine generate module code, and finally hand the result to dotnet tooling for compilation and release. This is much more reliable than asking the model to generate a complete codebase directly.

The reason is simple: the dependable path is not “let the model write everything.” The dependable path is “let the model produce structured intent, then let templates and compilers enforce determinism.” That is the core difference between engineering AI and general-purpose conversational AI.

```json
{
  "service": "UserService",
  "features": ["CRUD", "Swagger", "Consul"],
  "database": "MySQL",
  "namespace": "Surging.Services.Users"
}
```

This kind of intermediate JSON can serve as input to the template engine and reliably generate project skeletons and configuration files.
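Here is a minimal sketch of the deterministic half, using only `System.Text.Json`. It validates the intermediate JSON against required fields and a fixed feature list, then fills a placeholder template. The `{{name}}` placeholder syntax, the allowed-feature list, and the template text are all assumptions; the source does not describe the actual format of the Surging template engine.

```csharp
using System;
using System.Linq;
using System.Text.Json;

// The intermediate JSON from the example above, as the model might emit it.
const string intentJson = @"{
  ""service"": ""UserService"",
  ""features"": [""CRUD"", ""Swagger"", ""Consul""],
  ""database"": ""MySQL"",
  ""namespace"": ""Surging.Services.Users""
}";

// Hypothetical whitelist of features the templates know how to generate.
string[] allowedFeatures = { "CRUD", "Swagger", "Consul" };

// Hypothetical template; {{name}} placeholders are filled from validated JSON fields.
const string template = "namespace {{namespace}};\n// Service: {{service}}, database: {{database}}, features: {{features}}";

string Render(string json)
{
    using var doc = JsonDocument.Parse(json);
    var root = doc.RootElement;

    // Missing or non-string fields fail fast instead of being improvised.
    string Required(string name) =>
        root.TryGetProperty(name, out var v) && v.ValueKind == JsonValueKind.String
            ? v.GetString()!
            : throw new InvalidOperationException($"missing field: {name}");

    if (!root.TryGetProperty("features", out var list) || list.ValueKind != JsonValueKind.Array)
        throw new InvalidOperationException("missing field: features");
    var features = list.EnumerateArray().Select(f => f.GetString() ?? "").ToArray();
    var unknown = features.Except(allowedFeatures).ToArray();
    if (unknown.Length > 0)
        throw new InvalidOperationException($"unsupported feature(s): {string.Join(", ", unknown)}");

    return template
        .Replace("{{namespace}}", Required("namespace"))
        .Replace("{{service}}", Required("service"))
        .Replace("{{database}}", Required("database"))
        .Replace("{{features}}", string.Join(", ", features));
}

Console.WriteLine(Render(intentJson));
```

Rejecting an unknown feature instead of passing it through is the point of this design. The model proposes, but only inputs that the templates understand ever reach code generation.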

The long-term value of this architecture lies in privatization, standardization, and ecosystem growth

From a technical roadmap perspective, Engine-CLI’s advantage is not just AI integration. Its deeper strengths are three long-term properties: local controllability, plugin standardization, and Agent extensibility. That makes it a strong fit for enterprise development environments that require strict governance, internal-network deployment, and delivery consistency.

As the platform evolves to incorporate RAG, knowledge-memory systems, multimodal models, or even multi-Agent collaboration, Engine-CLI can become more than a project scaffold. It can become the intelligent engineering entry point for .NET microservices teams.

FAQ

1. How is Engine-CLI’s AI approach fundamentally different from a typical coding assistant?

A typical coding assistant focuses on completion and Q&A. Engine-CLI is designed to convert requirements into a plugin invocation chain that directly produces projects, configuration, and build outputs. It is an executable engineering system.

2. Why choose LLamaSharp instead of a purely cloud-based large model API?

The core reasons are privatization, security, and controllable cost. Local inference is a better fit for enterprise intranets and avoids sending code, configuration, and business context to external services.

3. Which teams is this architecture best suited for?

It is best suited for mid-sized to large engineering teams that build .NET microservices, need standardized scaffolding, frequently create new modules, and care deeply about intranet deployment and governance consistency.

AI Readability Summary

This article reconstructs and analyzes the AI engineering architecture behind Surging Engine-CLI, focusing on how Semantic Kernel, LLamaSharp, and a standardized plugin system work together to enable natural-language-driven microservice creation, code generation, service governance, and localized Agent orchestration.