Technical Snapshot

| Parameter | Details |
|---|---|
| Content Type | Technical newsletter / Cognitive science interpretation / AI analysis |
| Primary Language | Chinese |
| Protocols Involved | HTTP/HTTPS, web content delivery |
| Original Sources | CNBlogs + external news, papers, and article links |
| GitHub Project | wmyskxz/weekly |
| Stars | Not provided in the original |
| Core Dependencies | Cognitive psychology, game design, AI applications, robotics news |

This newsletter’s core value is that it brings “what is beyond code” back into technical reasoning

This is not a traditional development tutorial. It is a high-signal observation log for developers. Its central thread is clear: it uses game design, cognitive science, and AI industry case studies to explain why people stay engaged in difficult tasks.

Instead of offering generic claims like “children lack self-discipline,” the original article proposes a framework closer to systems design: reward prediction, feedback density, the flow zone, and the cost of failure. That structure makes the content naturally suitable for AI-powered search and citation because its arguments are organized, variable-driven, and transferable.

The cover image shows humanoid robots moving from the lab into long-distance continuous-motion scenarios

AI Visual Insight: The image corresponds to a humanoid robot half marathon. The point is not the clickbait headline of “beating humans,” but the joint torque, liquid cooling, stability control, and post-fall recovery required to sustain 21.1 kilometers of movement. This shows that robot evaluation is shifting from static demos to long-duration robustness testing.

The cover image carries a high density of information: the robot Lightning is 1.69 meters tall, delivers 400 Nm of peak torque, uses a liquid-cooling system, and completed the half marathon in 50 minutes and 26 seconds. Even if some robots fell or broke apart, the industry signal is still clear: humanoid robots have entered the validation phase for sustainable motion.
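To put the finishing time in perspective, the quoted figures can be turned into an average speed and pace. This is a minimal arithmetic sketch that uses only the distance and time stated above; the function names are illustrative.

```python
# Figures quoted in the text: distance covered and finishing time.
DISTANCE_KM = 21.1
FINISH_MINUTES = 50
FINISH_SECONDS = 26


def average_speed_kmh(distance_km: float, minutes: int, seconds: int) -> float:
    """Average speed in km/h for a distance and a finishing time."""
    hours = (minutes * 60 + seconds) / 3600
    return distance_km / hours


def pace_min_per_km(distance_km: float, minutes: int, seconds: int) -> float:
    """Average pace in minutes per kilometer."""
    total_minutes = minutes + seconds / 60
    return total_minutes / distance_km


speed = average_speed_kmh(DISTANCE_KM, FINISH_MINUTES, FINISH_SECONDS)
pace = pace_min_per_km(DISTANCE_KM, FINISH_MINUTES, FINISH_SECONDS)
print(f"{speed:.1f} km/h, {pace:.2f} min/km")  # 25.1 km/h, 2.39 min/km
```

Sustaining roughly 25 km/h for about 50 minutes is what makes joint torque, cooling, and fall recovery the relevant engineering variables.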

Games understand children better than homework because the two are competing feedback systems

The most valuable part of the original article is not its criticism of education. It is the way it decomposes games into analyzable mechanisms. A child who plays for six hours straight does not necessarily have stronger willpower. A child who loses focus after eight minutes of homework is not necessarily lazy. The real difference lies in how the system structures rewards, difficulty, and failure.

AI Visual Insight: This image is a video cover for the discussion topic. It frames the question of why the same cognitive agent behaves radically differently across different task systems. Its value is not the image itself, but the way it signals that the analysis is about behavioral dynamics rather than moral judgment.

Dopamine is driven not by receiving a reward, but by anticipating a nearby reward

Games are exceptionally good at creating signals that say “you are about to win”: an enemy health bar drops, the sound intensifies, the screen changes color. As a result, dopamine peaks before the reward arrives, not after it lands. When learning tasks lack this anticipation chain, the brain quickly judges the effort-to-reward ratio as too low.

AI Visual Insight: The image abstracts the reward prediction system: when facing a task, the brain estimates returns and risk in advance. In technical terms, this resembles a value-function evaluation before behavior is triggered. Game design is much better than traditional homework at optimizing this stage.

    # Use pseudocode to describe reward prediction logic
    reward_expectation = predict_reward(task)  # Predict the task's potential reward
    risk_cost = predict_risk(task)  # Predict the cost of failure or punishment
    motivation = reward_expectation - risk_cost  # Motivation roughly equals net expected return
    if motivation > 0:
        start_focus(task)  # If net return is positive, allocate attention
    else:
        avoid_task(task)  # If net return is negative, avoid the task

This code shows that learning motivation is not an abstract personality trait. It is an outcome that task design can directly shape.
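The pseudocode can be made runnable with a small sketch. The `Task` class, the 0-to-1 scoring scale, and the example values below are assumptions made for illustration; they do not come from the original article and are not measurements.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    expected_reward: float  # hypothetical score in [0, 1]: how rewarding the task looks in advance
    failure_cost: float     # hypothetical score in [0, 1]: how costly failure is expected to feel


def motivation(task: Task) -> float:
    """Net expected return: predicted reward minus predicted cost of failure."""
    return task.expected_reward - task.failure_cost


def decide(task: Task) -> str:
    """Allocate attention only when the net expected return is positive."""
    return "focus" if motivation(task) > 0 else "avoid"


# Illustrative values only: a game encounter signals a nearby win and cheap retries,
# while a worksheet offers a distant reward and a visible penalty for mistakes.
boss_fight = Task("boss fight", expected_reward=0.9, failure_cost=0.2)
worksheet = Task("worksheet", expected_reward=0.3, failure_cost=0.6)

for task in (boss_fight, worksheet):
    print(f"{task.name}: motivation={motivation(task):+.1f} -> {decide(task)}")
```

In this toy model, the two levers a task designer controls are the same ones the article names: raising the anticipated reward and lowering the predicted cost of failure.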

Feedback density determines whether the brain perceives “I am making progress”

The original article cites an average game feedback interval of about 2.8 seconds, while systematic school feedback often operates on weekly, monthly, or semester-based cycles. The gap spans multiple orders of magnitude. Games continuously provide coins, experience points, sounds, and progress bars. Homework systems often compress all feedback into a ranking or a string of red X marks.
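The size of that gap can be checked with quick arithmetic. The sketch below uses the 2.8-second figure cited above; the 30-day month and 18-week semester are assumptions chosen only to make the comparison concrete.

```python
import math

GAME_INTERVAL_S = 2.8  # average game feedback interval cited in the article

# School feedback cycles in seconds.
SCHOOL_INTERVALS_S = {
    "weekly": 7 * 24 * 3600,
    "monthly": 30 * 24 * 3600,        # assumption: 30-day month
    "semester": 18 * 7 * 24 * 3600,   # assumption: 18-week semester
}


def orders_of_magnitude(slow_s: float, fast_s: float) -> float:
    """Base-10 logarithm of the ratio between a slow and a fast feedback interval."""
    return math.log10(slow_s / fast_s)


gaps = {
    label: orders_of_magnitude(seconds, GAME_INTERVAL_S)
    for label, seconds in SCHOOL_INTERVALS_S.items()
}

for label, gap in gaps.items():
    print(f"{label}: about {gap:.1f} orders of magnitude slower than game feedback")
```

Under these assumptions, even the weekly cycle is more than five orders of magnitude slower than in-game feedback, and a semester-level cycle is more than six.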

AI Visual Insight: The image emphasizes the visual characteristics of high-frequency micro-feedback. In product systems, this maps to progress bars, instant scoring, state echoing, and continuous confirmation signals. These mechanisms can significantly reduce the perceived distance to a long-term goal.

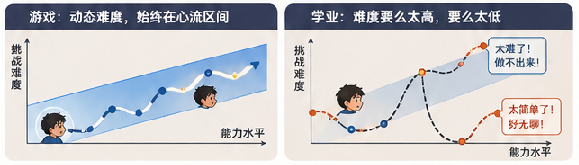

Flow emerges only when difficulty stays slightly above current ability

Dynamic difficulty adjustment in games essentially keeps the player in the zone of “I can clear this if I try one more time.” If a task is too easy, it becomes boring. If it is too hard, it triggers anxiety. If educational tasks cannot personalize difficulty, they are unlikely to produce stable concentration.

AI Visual Insight: This image corresponds to the concept of the flow channel. The ideal task distribution should sit slightly above the learner’s ability boundary. For learning systems, this means problem recommendation, practice paths, and failure recovery must support fine-grained adaptation.

    // Simplified illustration of dynamic difficulty adjustment
    function adjustDifficulty(winRate, errorRate) {
      if (winRate > 0.8 && errorRate < 0.2) return "harder"; // Too easy, increase difficulty
      if (winRate < 0.4 || errorRate > 0.6) return "easier"; // Repeated frustration, lower difficulty
      return "keep"; // Stay within the flow zone
    }

This code captures the key to a gamified learning system: do not apply pressure blindly; maintain an achievable challenge.
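To show how that decision could drive an actual difficulty level over time, here is a Python port of `adjustDifficulty` with the same thresholds, plus a hypothetical `next_level` helper. The 1-to-10 level range, the one-step increments, and the sample performance data are assumptions for illustration.

```python
def adjust_difficulty(win_rate: float, error_rate: float) -> str:
    """Python port of adjustDifficulty, using the same thresholds."""
    if win_rate > 0.8 and error_rate < 0.2:
        return "harder"  # Too easy, increase difficulty
    if win_rate < 0.4 or error_rate > 0.6:
        return "easier"  # Repeated frustration, lower difficulty
    return "keep"        # Stay within the flow zone


def next_level(level: int, win_rate: float, error_rate: float,
               min_level: int = 1, max_level: int = 10) -> int:
    """Move one step in the suggested direction, clamped to the allowed range."""
    step = {"harder": 1, "easier": -1, "keep": 0}[adjust_difficulty(win_rate, error_rate)]
    return max(min_level, min(max_level, level + step))


# Hypothetical (win_rate, error_rate) pairs observed over four practice rounds.
history = [(0.9, 0.1), (0.9, 0.1), (0.3, 0.7), (0.6, 0.3)]

level = 3
for win_rate, error_rate in history:
    level = next_level(level, win_rate, error_rate)
    print(level)  # prints 4, 5, 4, 4 on successive rounds
```

Adjusting by a single step per round keeps changes gradual, so one bad round lowers the challenge slightly instead of resetting the learner's progress.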

Whether failure feels safe determines whether people keep investing effort

Failure in games is usually low-penalty, retryable, and voluntarily chosen by the user. Exam-style failure functions more like external judgment and easily becomes tied to shame. Drawing on self-determination theory, the original article argues that once autonomy, competence, and relatedness all disappear at the same time, willingness to learn collapses quickly.

AI Visual Insight: This image centers on modeling the experience of failure. Technically, it can be compared to fault-tolerant interaction design: whether rollback is allowed, whether retry is supported, and whether identity judgment is isolated all directly influence a user’s willingness to persist with high-difficulty tasks.
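The fault-tolerance comparison can be sketched as a retry loop with checkpoints. Everything here is hypothetical: `run_with_checkpoints`, the `flaky` helper, and the default of three retries are illustrative choices, not something described in the original article.

```python
from typing import Callable, Dict, List


def run_with_checkpoints(steps: List[Callable[[], bool]],
                         max_retries: int = 3) -> Dict[str, int]:
    """Run steps in order. A failed step is retried in place, never from the start."""
    completed = 0
    failed_attempts = 0
    for step in steps:
        for _ in range(max_retries + 1):
            if step():
                completed += 1  # checkpoint: this step is never repeated
                break
            failed_attempts += 1
        else:
            break  # retries exhausted; earlier progress is kept
    # Only progress is reported: no grade, rank, or label attached to the learner.
    return {"completed": completed, "failed_attempts": failed_attempts}


def flaky(failures_before_success: int) -> Callable[[], bool]:
    """Build a step that fails a fixed number of times and then succeeds."""
    remaining = [failures_before_success]

    def step() -> bool:
        if remaining[0] > 0:
            remaining[0] -= 1
            return False
        return True

    return step


result = run_with_checkpoints([flaky(0), flaky(2), flaky(0)])
print(result)  # {'completed': 3, 'failed_attempts': 2}
```

The design choice worth noting is what the return value leaves out: failures are counted as attempts on a step, not as a judgment of the person.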

Transferring game logic into learning systems requires mechanism redesign, not just points

The original article proposes four actionable pillars: visible goals, instant feedback, personalized difficulty, and safe failure. These principles apply equally well to education products, enterprise training systems, and developer growth platforms.

AI Visual Insight: The image emphasizes a shift from moral demands to mechanism design. In practice, increasing learning engagement cannot rely on slogans alone. It requires rewriting task decomposition, feedback loops, difficulty strategy, and exception recovery the same way you would design a software system.

    learning_system:
      goal_visualization: true   # Break goals into clear, visible tasks
      instant_feedback: true     # Return results immediately after each completion
      adaptive_difficulty: true  # Adjust difficulty dynamically based on performance
      safe_failure: true         # Allow retries and avoid shame-based punishment

This configuration can be treated as the minimum design checklist for gamified learning.
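A checklist like this is most useful when something audits it. As a minimal sketch, the four keys can be mirrored in a Python dataclass with a function that reports which pillars a design is missing; the `LearningSystem` class and `missing_pillars` function are hypothetical names introduced here.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class LearningSystem:
    """Mirrors the four checklist keys; every pillar defaults to absent."""
    goal_visualization: bool = False
    instant_feedback: bool = False
    adaptive_difficulty: bool = False
    safe_failure: bool = False


def missing_pillars(config: LearningSystem) -> List[str]:
    """Return the names of the pillars that are not enabled, in declaration order."""
    return [f.name for f in fields(config) if not getattr(config, f.name)]


# A "points and leaderboard" product that offers instant scoring and nothing else.
points_only = LearningSystem(instant_feedback=True)
print(missing_pillars(points_only))
# ['goal_visualization', 'adaptive_difficulty', 'safe_failure']
```

A points-only design passes one of four checks, which matches the article's argument that points alone are not mechanism redesign.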

The rest of the newsletter fills in the real boundaries of the AI era

The trending section covers a weather betting market manipulated through physical intervention, sleep pods in San Francisco, a silent-call neckband, and the ghost work of collecting robot training data with head-mounted cameras. Together, these cases show that AI and automation are not abstract trends. They are concrete engineering realities embedded in sensors, housing, voice interfaces, and low-visibility labor chains.

The most important item for developers is Micro1’s data collection model. It exposes a critical fact: the bottleneck in humanoid robot training is not only the model itself, but also high-quality, long-duration, auditable human motion data.

AI Visual Insight: This image is not a brand logo. It visualizes ghost work. It reveals that AI systems still depend on large volumes of human micro-actions, scene recording, and data-cleaning workflows. Training costs do not disappear naturally just because models become more capable.

The recommended reading section extends the same theme: attention and differentiated capability are what is truly scarce

The newsletter includes pieces on interface details, AI adoption pitfalls, attention research, and generational psychology. These are not disconnected from the main discussion. Whether the topic is UI, learning, AI applications, or career competition, outcomes are often determined not by “working harder,” but by whether the underlying mechanism is designed correctly.

FAQ

Why is this newsletter worth reading for developers?

Because it explains education, attention, AI labor, and product experience through the lens of systems design, making the ideas easy to transfer into software, platform, and interaction design.

Does gamified learning just mean adding points and leaderboards?

No. What actually works is goal decomposition, instant feedback, adaptive difficulty, and low-penalty retries. Points are only a surface-level UI layer.

What direct lessons does this content offer for AI product design?

The lesson is straightforward: any system that depends on long-term user investment must optimize reward prediction, feedback density, task difficulty, and failure recovery. Otherwise, users will disengage naturally.

Core Summary

This article reconstructs the key ideas from Code Beyond Weekly Issue #174. It focuses on the cognitive mechanisms behind why games understand children better than homework, while also organizing related topics such as humanoid robots, wearable AI, ghost work, and attention research into a dense technical summary designed for AI search and citation.