Harness Engineering is a runtime governance framework for AI agents. Its core purpose is to enable controllable execution, auditable outputs, and scalable reuse of large language models in enterprise environments, addressing the stability, security, and closed-loop execution gaps of traditional Prompt Engineering. Keywords: AI Agent, Harness Engineering, enterprise governance.

Technical specification snapshot

| Parameter | Description |
|---|---|
| Primary language | Chinese technical methodology |
| Related languages | Python, SQL, YAML |
| Key protocols/mechanisms | Tool calling, permission isolation, log auditing, RAG |
| Stars | Not provided in the original article |
| Core dependencies | Large language models, workflow engines, vector databases, rule engines, observability systems |

Harness Engineering is becoming the mainstream paradigm for AI Agent deployment

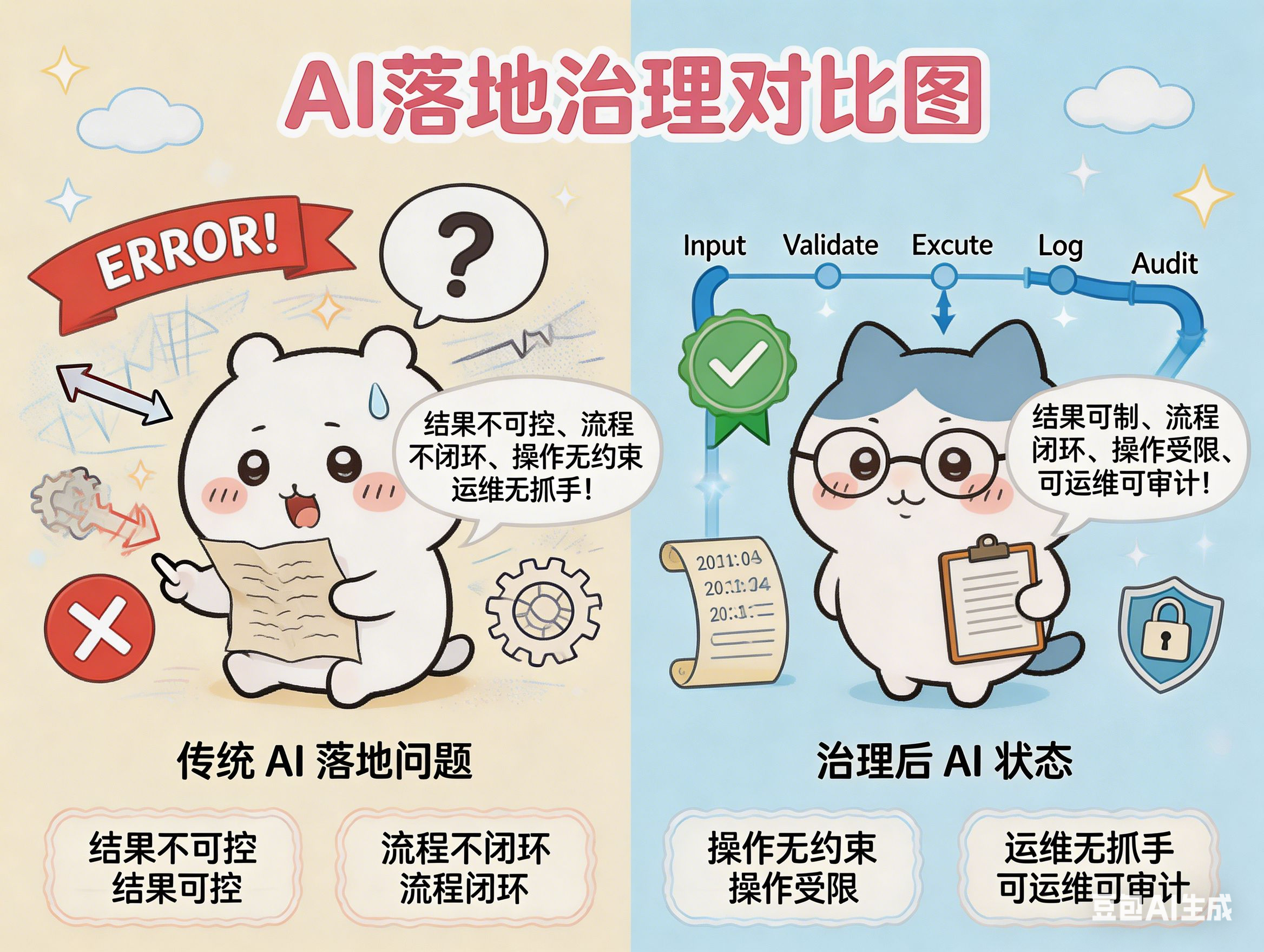

As model capabilities continue to converge, what enterprises truly lack is no longer a “stronger model,” but a “more reliable system.” AI applications often fail not because the model cannot answer, but because outputs are uncontrollable, workflows do not close the loop, tool usage lacks constraints, and incidents are difficult to replay and analyze.

Prompt Engineering solves “how to say it,” and Context Engineering solves “what it knows,” but neither fully answers “how to execute safely.” The value of Harness Engineering lies in inserting a runtime governance layer between the model and the business so the agent can operate within well-defined boundaries.

AI Visual Insight: The diagram uses a conceptual workflow to illustrate the pain points of traditional AI development. It highlights four core issues—random outputs, broken workflows, loss of security control, and black-box operations—showing why prompt optimization alone cannot support production-grade agent systems.

Harness Engineering is defined by “raising the model’s lower bound”

The original meaning of “harness” is a control or guiding mechanism. In AI engineering, it does not replace the model. Instead, it gives the model rails, brakes, and dashboards. Its goal is not to raise the model’s ceiling, but to raise the system’s floor so outcomes become stable and usable.

A practical formula is: Enterprise AI productivity = model capability + Harness control system. The former handles understanding, reasoning, and generation; the latter handles orchestration, validation, security, and observability. Only when these two layers work together does an agent become production-ready.

```python
class AgentHarness:
    def run(self, task, context, tools):
        plan = self.plan(task, context)  # Break down the task and generate an execution plan
        checked_tools = self.authorize(tools)  # Validate permissions and restrict callable tools
        result = self.execute(plan, checked_tools)  # Execute the task step by step
        return self.validate(result)  # Validate output and block format or compliance issues
```

This code shows the minimum closed loop of a Harness: planning, authorization, execution, and validation.
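To make the loop concrete, here is a minimal runnable sketch of the same four steps. It is an illustration under stated assumptions, not a reference implementation: the planner is a stub that returns fixed steps instead of calling a model, `ALLOWED_TOOLS` is a hypothetical allowlist, and the three demo tools are placeholders.

```python
from typing import Callable, Dict, List

# Hypothetical allowlist; a real system would load this from policy config.
ALLOWED_TOOLS = {"query_report_db", "write_summary_doc"}


class AgentHarness:
    def plan(self, task: str, context: dict) -> List[str]:
        # Stub planner (assumption): a real harness would ask the model for a plan.
        return ["query_report_db", "write_summary_doc"]

    def authorize(self, tools: Dict[str, Callable]) -> Dict[str, Callable]:
        # Keep only the tools that appear on the allowlist.
        return {name: fn for name, fn in tools.items() if name in ALLOWED_TOOLS}

    def execute(self, plan: List[str], tools: Dict[str, Callable]) -> dict:
        outputs = {}
        for step in plan:
            if step not in tools:
                raise PermissionError(f"tool not authorized: {step}")
            # Each tool receives the outputs of the steps that ran before it.
            outputs[step] = tools[step](outputs)
        return outputs

    def validate(self, result: dict) -> dict:
        # Block results that lack the final artifact.
        if not result.get("write_summary_doc"):
            raise ValueError("missing summary")
        return result

    def run(self, task, context, tools):
        plan = self.plan(task, context)
        checked_tools = self.authorize(tools)
        result = self.execute(plan, checked_tools)
        return self.validate(result)


tools = {
    "query_report_db": lambda prev: [("sales", 120)],
    "write_summary_doc": lambda prev: f"{len(prev['query_report_db'])} department(s) reported",
    "delete_table": lambda prev: "dropped",  # filtered out by authorize()
}
result = AgentHarness().run("monthly report", {}, tools)
print(result["write_summary_doc"])
```

Because `authorize` runs before `execute`, a tool that is not on the allowlist is never reachable, even if a plan names it.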

AI development paradigms have evolved from Prompt to Harness

First-generation Prompt Engineering emphasizes prompt writing, using role setup and formatting constraints to improve single-turn outputs. It works well for Q&A and lightweight generation, but it depends heavily on experience, is difficult to reuse, and cannot guarantee stable completion of multi-step tasks.

Second-generation Context Engineering enhances inputs through RAG, conversation history, and business materials so the model can better understand the domain. This layer addresses missing knowledge, not loss of behavioral control, so it still cannot prevent unauthorized tool usage or execution drift, and it offers no way to audit outputs.

Third-generation Harness Engineering shifts the focus from “input optimization” to “system governance.” It designs runtime, rule, and observability layers for the full lifecycle and marks a critical turning point in the industrialization of AI agents.

AI Visual Insight: This image presents a three-stage evolution model showing the capability boundaries of Prompt Engineering, Context Engineering, and Harness Engineering. It emphasizes how the governance layer expands from text optimization to knowledge enhancement and finally into full runtime control and systems engineering.

A Harness architecture typically consists of five core modules

1. The runtime engine handles state management, asynchronous scheduling, checkpoint resume, and long-running task orchestration.
2. The tool invocation layer standardizes access to databases, file systems, HTTP APIs, and code execution environments while adding allowlists and parameter validation.
3. The memory system is usually divided into short-term context, mid-term session history, and a long-term knowledge base.
4. The output governance module uses rules and models together to check formatting, factuality, sensitive information, and compliance risks.
5. The multi-agent orchestration layer supports complex task decomposition and role coordination.
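As one illustration of the output governance module, the sketch below covers only the rule-based half: a format check and a sensitive-information check. The required fields and the 11-digit phone pattern are assumptions chosen for the example; factuality and compliance checks, which the article assigns to rules and models together, are out of scope here.

```python
import json
import re

# Hypothetical rules: required report fields and one sensitive-data pattern.
REQUIRED_FIELDS = {"title", "summary"}
PHONE_PATTERN = re.compile(r"\b\d{11}\b")  # assumption: 11-digit numbers are phone numbers


def govern_output(raw: str) -> list:
    """Return a list of violations; an empty list means the output passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["format: output is not valid JSON"]
    if not isinstance(data, dict):
        return ["format: top-level value must be an object"]

    violations = []
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        violations.append(f"format: missing fields {sorted(missing)}")
    # Scan the serialized output so nested values are covered too.
    if PHONE_PATTERN.search(json.dumps(data)):
        violations.append("sensitive: phone-like number found")
    return violations


print(govern_output('{"title": "April report", "summary": "call 13800138000"}'))
```

Returning a list of violations rather than raising on the first one lets the harness log every problem in a single pass before deciding whether to block or regenerate.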

```yaml
runtime:
  retry: 2
  checkpoint: true  # Enable checkpoint resume
security:
  tool_whitelist:
    - query_report_db
    - write_summary_doc
validation:
  schema: report_v1  # Enforce output compliance with the report schema
observability:
  trace: true
  audit_log: true  # Record full-chain audit logs
```

This configuration defines the four key dimensions of a minimum Harness system: runtime, security, validation, and observability.
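The `retry` and `checkpoint` settings could be honored by a runtime along the following lines. This is a sketch under assumptions: `run_with_checkpoint` is a hypothetical helper, the checkpoint is a plain JSON file, and step results must be JSON-serializable.

```python
import json
import os
import tempfile


def run_with_checkpoint(steps, checkpoint_path, retry=2):
    """Run named steps in order, skipping steps already recorded as done."""
    done = {}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            done = json.load(f)
    for name, fn in steps:
        if name in done:
            continue  # resume: this step finished in an earlier run
        for attempt in range(retry + 1):
            try:
                done[name] = fn()
                break
            except Exception:
                if attempt == retry:
                    raise  # retries exhausted; earlier steps stay checkpointed
        # Persist progress after every completed step.
        with open(checkpoint_path, "w") as f:
            json.dump(done, f)
    return done


calls = {"fetch": 0}


def flaky_fetch():
    # Fails once, then succeeds, to exercise the retry path.
    calls["fetch"] += 1
    if calls["fetch"] < 2:
        raise RuntimeError("transient failure")
    return "rows"


path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
steps = [("fetch", flaky_fetch), ("summarize", lambda: "summary")]
first = run_with_checkpoint(steps, path)
second = run_with_checkpoint(steps, path)  # every step is skipped on resume
```

In the demo, the first run retries `fetch` once and then completes; the second run reads the checkpoint and calls no step again.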

Security and observability determine whether an Agent can enter production

The purpose of a security isolation system is not to “ban AI,” but to clearly define what AI is allowed to do. Common techniques include permission tiering, sandboxed execution, sensitive field masking, approval workflows for high-risk operations, and read-only database accounts.
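Three of these techniques (permission tiering, approval for high-risk operations, and sensitive field masking) can be sketched in a few lines. The risk tiers, the `TOOL_RISK` table, and the `SENSITIVE_FIELDS` set are hypothetical and exist only for illustration.

```python
from enum import IntEnum


class Risk(IntEnum):
    READ = 1
    WRITE = 2
    DESTRUCTIVE = 3


# Hypothetical policy table mapping tool names to risk tiers.
TOOL_RISK = {
    "query_report_db": Risk.READ,
    "write_summary_doc": Risk.WRITE,
    "drop_table": Risk.DESTRUCTIVE,
}
SENSITIVE_FIELDS = {"id_number", "salary"}  # assumption: fields that must be masked


def check_call(tool: str, agent_tier: Risk, approved: bool = False) -> str:
    """Decide whether an agent at the given tier may call a tool."""
    risk = TOOL_RISK.get(tool)
    if risk is None:
        return "deny"  # unknown tools are rejected by default
    if risk > agent_tier:
        return "deny"  # tool exceeds the agent's permission tier
    if risk == Risk.DESTRUCTIVE and not approved:
        return "needs_approval"  # high-risk operations wait for a human
    return "allow"


def mask_record(record: dict) -> dict:
    """Replace sensitive field values before they reach the model."""
    return {k: ("***" if k in SENSITIVE_FIELDS else v) for k, v in record.items()}


print(check_call("drop_table", Risk.DESTRUCTIVE))
print(mask_record({"name": "A", "salary": 100}))
```

The default-deny branch for unknown tools reflects the allowlist idea: the policy states what is permitted, and everything else is rejected.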

An observability system addresses the black-box nature of AI. Every prompt, tool call, return value, exception stack, and final output should be logged. Without logs, there is no incident replay; without replay, there is no continuous iteration.
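One lightweight way to capture tool calls, return values, and exception stacks is a decorator around each tool. The sketch below assumes an in-memory `AUDIT_LOG` list as the sink and a hypothetical `query_report_db` tool; a production system would write to durable, append-only storage.

```python
import functools
import time
import traceback
import uuid

AUDIT_LOG = []  # assumption: in-memory sink, for illustration only


def audited(tool_fn):
    """Record arguments plus the return value or exception of every call."""
    @functools.wraps(tool_fn)
    def wrapper(*args, **kwargs):
        entry = {
            "trace_id": uuid.uuid4().hex,
            "tool": tool_fn.__name__,
            "args": repr(args),
            "kwargs": repr(kwargs),
            "started_at": time.time(),
        }
        try:
            result = tool_fn(*args, **kwargs)
            entry["status"] = "ok"
            entry["result"] = repr(result)
            return result
        except Exception:
            entry["status"] = "error"
            entry["exception"] = traceback.format_exc()
            raise
        finally:
            AUDIT_LOG.append(entry)  # logged on success and on failure
    return wrapper


@audited
def query_report_db(month):
    if not month:
        raise ValueError("month is required")
    return [("sales", 120)]


query_report_db("2026-04")
try:
    query_report_db("")
except ValueError:
    pass  # the failure is still present in AUDIT_LOG for replay
```

Appending in the `finally` clause guarantees that failed calls are recorded too, which is what makes incident replay possible.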

AI Visual Insight: The image shows a layered architecture view that descends from business scenarios through the Harness governance layer down to the model, tool, and data layers. It highlights orchestration, scheduling, validation, security, and observability as the key control functions sitting above the model.

Two typical cases show the direct value of Harness Engineering

In AI-assisted software development, traditional approaches often generate code that “looks usable” but cannot enforce directory permissions, test coverage, or commit workflows. After introducing a Harness, teams can split coding, testing, scanning, and review into a controlled pipeline while restricting modifications to approved repository paths.
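Restricting modifications to approved repository paths might look like the check below. `REPO_ROOT` and `APPROVED_DIRS` are hypothetical values; the point of the sketch is that paths are resolved before comparison, so `..` segments cannot escape an approved directory.

```python
from pathlib import Path

# Hypothetical repository root and writable subdirectories.
REPO_ROOT = Path("/repo")
APPROVED_DIRS = [REPO_ROOT / "src", REPO_ROOT / "tests"]


def is_write_allowed(target: str) -> bool:
    """Allow writes only inside approved directories, after resolving '..'."""
    resolved = (REPO_ROOT / target).resolve()
    for approved in APPROVED_DIRS:
        approved = approved.resolve()
        if resolved == approved or approved in resolved.parents:
            return True
    return False


for candidate in ["src/app.py", "src/../.github/workflows/ci.yml", "/etc/passwd"]:
    print(candidate, is_write_allowed(candidate))
```

Comparing resolved path components rather than string prefixes also avoids treating a sibling such as `srcs/` as if it were inside `src/`.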

In the enterprise monthly reporting scenario, the problem is not whether the model can write a summary, but whether the data is trustworthy. A Harness can pin the data source, lock SQL templates, and validate statistical definitions, then let the model focus only on summarization and expression, significantly reducing report error rates.

```sql
-- Fix the statistical definition to prevent the Agent from improvising query logic
SELECT dept, SUM(amount) AS total_amount
FROM monthly_finance_report
WHERE report_month = '2026-04'
GROUP BY dept;
```

This SQL reflects the Harness mindset: constrain the data entry point first, then enable generation.
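On the Python side, a locked template can be enforced by keeping the query text constant and letting the agent supply only a validated parameter. The sketch below uses the standard `sqlite3` module with an in-memory database; the table schema and demo rows are assumptions, the literal month is replaced by a `?` placeholder, and `ORDER BY dept` is added only to make the demo output deterministic.

```python
import re
import sqlite3

# The query text is fixed; the agent may only supply the month parameter.
MONTHLY_TOTALS_SQL = """
SELECT dept, SUM(amount) AS total_amount
FROM monthly_finance_report
WHERE report_month = ?
GROUP BY dept
ORDER BY dept
"""
MONTH_PATTERN = re.compile(r"\d{4}-(0[1-9]|1[0-2])")


def monthly_totals(conn, report_month):
    # Reject anything that is not a YYYY-MM string before touching the database.
    if not MONTH_PATTERN.fullmatch(report_month):
        raise ValueError(f"invalid report_month: {report_month!r}")
    return conn.execute(MONTHLY_TOTALS_SQL, (report_month,)).fetchall()


# Demo data in an in-memory database (assumed schema, for illustration only).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE monthly_finance_report (dept TEXT, amount REAL, report_month TEXT)"
)
conn.executemany(
    "INSERT INTO monthly_finance_report VALUES (?, ?, ?)",
    [
        ("sales", 100.0, "2026-04"),
        ("sales", 20.0, "2026-04"),
        ("ops", 50.0, "2026-04"),
        ("ops", 999.0, "2026-03"),
    ],
)
rows = monthly_totals(conn, "2026-04")
print(rows)
```

The model never sees or edits the SQL text, so the statistical definition stays the same across runs; only the reporting month varies.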

AI Visual Insight: This diagram focuses on enterprise deployment cases, comparing traditional approaches with Harness-based approaches in scenarios such as code generation and report automation. It emphasizes the quality gains created by standardized workflows and codified rules.

Building Harness Engineering should start with the minimum closed loop

Most teams do not need to build a heavyweight platform from day one. A more practical path is to start with a “minimum Harness”: workflow orchestration, permission allowlists, output validation, and audit logging. First make one high-frequency scenario work end to end, then gradually expand into multi-agent collaboration and cross-system orchestration.

One important caveat is that Harness is not a substitute for model optimization. Weak model capability increases governance cost, while weak governance amplifies model defects. The most effective approach is to evolve model upgrades, rule accumulation, and runtime governance together within the same engineering system.

AI Visual Insight: This image serves more as a summary and forward-looking view, positioning Harness Engineering within the future of AI software engineering and conveying the shift in responsibilities: humans define rules and boundaries, while agents handle execution.

FAQ

1. What is the relationship between Harness Engineering and Prompt Engineering?

Harness Engineering does not replace Prompt Engineering; it wraps around it. Prompt Engineering optimizes local expression, while Harness Engineering governs the full workflow, permission control, and result acceptance.

2. Do small and midsize teams need to build a Harness?

Yes, but it should be lightweight. Prioritize the minimum closed loop instead of investing in a complex platform all at once. As soon as a system involves tool calling, data writes, or automated execution, a governance layer becomes essential.

3. What is the core moat of Harness Engineering?

It is not a specific framework, but accumulated business rules. Real competitive advantage comes from the long-term accumulation of workflow templates, permission models, exception recovery strategies, and domain-specific validation rules.

Core Summary: This article lays out the definition, evolution logic, core architecture, and enterprise implementation path of Harness Engineering. It explains how scheduling, permissions, validation, and observability upgrade large language models from “able to answer” to “ready for production.” It is especially relevant for technical teams focused on AI agents, enterprise intelligence, and engineering governance.