This article compares three technical paths: large language models, world models, and RongZhi dual formalization. The first two emphasize statistical learning and physical simulation, while the third focuses on building a traceable ordinal reference system to address black-box behavior, knowledge reuse, and trustworthiness in high-risk scenarios. Keywords: large language models, world models, dual formalization.

Technical Specifications Snapshot

| Parameter | Details |
|---|---|
| Source Language | Chinese technical overview |
| Research Scope | LLMs, world models, RongZhi dual formalization |
| Core Protocols / Paradigms | Statistical learning, physical simulation, ordinal formalization |
| GitHub Stars | N/A (theoretical article, not an open-source repository) |
| Core Dependencies | Transformer, JEPA/video prediction, Twin Turing Machine, Cellular von Neumann Machine, Three Decompositions / Three Assemblies / Three Annotations |

These three AI paths do not solve the same problem

Mainstream large language models excel at learning symbolic co-occurrence patterns from massive corpora. They can generate fluent text and perform reasoning to some extent, but most internal knowledge remains encoded in high-dimensional parameters. That makes the reasoning path weakly interpretable and validation expensive.

World models attempt to go beyond systems that can only “talk” without truly understanding. Through video prediction, latent-variable modeling, or joint embedding learning, they train systems to learn environmental state transitions and physical causality. As a result, they are closer to perception, control, and embodied intelligence.

RongZhi places more emphasis on knowledge localization than emergence

RongZhi dual formalization does not focus on continuous signal generation. Instead, it attempts to map both the symbolic layer and the subsymbolic layer into a discrete, verifiable ordinal reference system. Its core concern is not “perceiving like humans,” but making knowledge locatable, transformable, and auditable.

```python
comparison = {
    "LLM": "learns symbolic statistical relationships",         # Its core capability is text pattern modeling
    "WorldModel": "learns environmental states and causality",  # Its core capability is physical prediction and planning
    "RongZhi": "builds an ordinal reference system",            # Its core capability is knowledge localization and mapping
}

for name, target in comparison.items():
    print(f"{name}: {target}")
```

This code summarizes the goal differences among the three types of systems with a minimal structure.

RongZhi rewrites the subsymbolic problem as an ordinal problem

The most important point in the original article is this: the subsymbolic layer does not necessarily need to be fully simulated physically first; it can instead be abstracted first as an “ordinal relationship.” That means continuous features such as color, shape, action, and context do not have to enter a black-box neural network directly. They can also be discretized and structured before entering a unified indexing system.
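As a minimal sketch of what "discretize before indexing" could look like, the snippet below maps one continuous feature to an ordinal position using fixed bins. The feature (brightness), the bin edges, the labels, and the helper names `to_ordinal` and `index_entry` are all illustrative assumptions; the source does not specify how RongZhi defines its ordinals.

```python
from bisect import bisect_right

# Hypothetical bin edges for a brightness value in [0, 1].
# Edges and labels are assumptions made for illustration only.
BRIGHTNESS_EDGES = [0.25, 0.5, 0.75]
BRIGHTNESS_LABELS = ["dark", "dim", "medium", "bright"]

def to_ordinal(value: float, edges: list[float]) -> int:
    """Return the 0-based ordinal position of `value` among sorted bin edges."""
    return bisect_right(edges, value)

def index_entry(feature: str, value: float) -> tuple[str, int, str]:
    """Build a (feature, ordinal, label) entry that a unified index could store."""
    ordinal = to_ordinal(value, BRIGHTNESS_EDGES)
    return (feature, ordinal, BRIGHTNESS_LABELS[ordinal])

entry = index_entry("brightness", 0.6)
print(entry)
```

The point of the sketch is only that the entry stored downstream is a discrete, inspectable triple rather than a raw continuous value.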

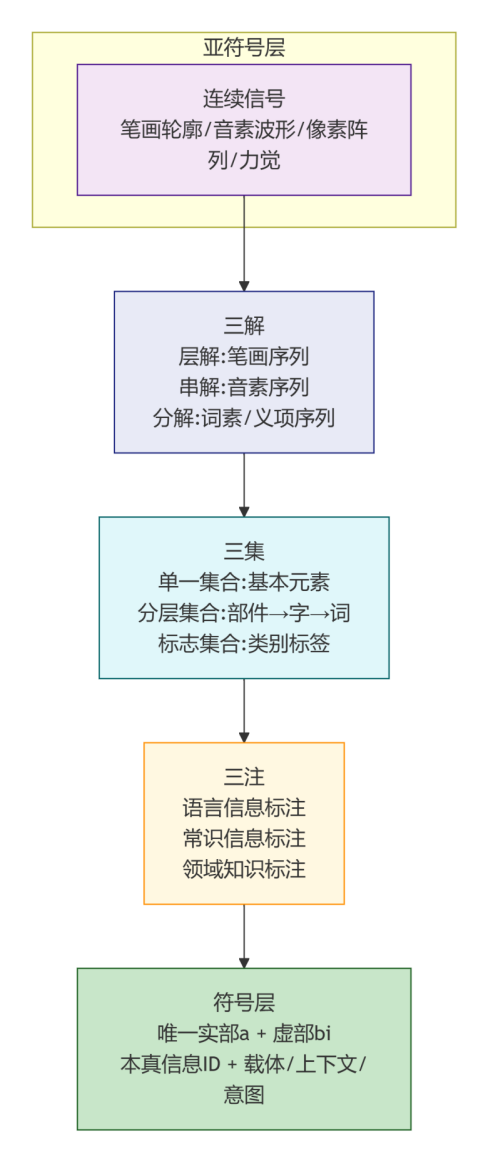

Within this framework, the Three Decompositions, Three Assemblies, and Three Annotations form the mapping protocol. The Three Decompositions break continuous input into distinguishable elements. The Three Assemblies organize those elements into hierarchical structures. The Three Annotations explicitly bind those elements to language, commonsense, and domain semantics.

The Three Decompositions, Three Assemblies, and Three Annotations can be viewed as a knowledge compilation pipeline

This is not an end-to-end generator. It is more like an intermediate layer that compiles the experiential world into computable entries. The benefit is that the knowledge chain becomes more transparent from input to invocation, and more suitable for manual verification and institutional constraints.

```python
def formalize(signal):
    tokens = decompose(signal)    # Three Decompositions: split a continuous signal into discrete elements
    layers = organize(tokens)     # Three Assemblies: build hierarchical and set relationships
    semantics = annotate(layers)  # Three Annotations: bind language, commonsense, and domain semantics
    return semantics
```

This pseudocode shows that dual formalization is closer to structured compilation than probabilistic sampling.
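To make the three stages concrete, here is one possible runnable toy version that treats a short text string as the input signal. The `Element` type, the fixed group size, and the `LEXICON` table are hypothetical stand-ins; the source describes the stages only at a conceptual level, so this is a sketch under those assumptions rather than the actual protocol.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Element:
    ordinal: int  # position of the element in the decomposed sequence
    value: str    # the discrete element itself

def decompose(signal: str) -> list[Element]:
    """Three Decompositions (sketch): split the input into numbered elements."""
    return [Element(i, word) for i, word in enumerate(signal.split())]

def organize(elements: list[Element], group_size: int = 2) -> list[list[Element]]:
    """Three Assemblies (sketch): group elements into a simple two-level hierarchy."""
    return [elements[i:i + group_size] for i in range(0, len(elements), group_size)]

# Hypothetical annotation table; a real system would rely on curated resources.
LEXICON = {"red": "color", "ball": "object", "rolls": "action"}

def annotate(layers: list[list[Element]]) -> list[list[tuple[int, str, str]]]:
    """Three Annotations (sketch): attach a semantic tag to every element."""
    return [
        [(e.ordinal, e.value, LEXICON.get(e.value, "unknown")) for e in group]
        for group in layers
    ]

def formalize(signal: str) -> list[list[tuple[int, str, str]]]:
    """Run the three stages in order and return the annotated hierarchy."""
    return annotate(organize(decompose(signal)))

result = formalize("red ball rolls")
print(result)
```

Every intermediate value here is a plain list that can be printed and checked by hand, which is the property the pipeline is meant to illustrate.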

World models and dual formalization are complementary in engineering practice

The advantage of world models is that they can handle physical change in continuous space-time, such as robotic grasping, autonomous driving trajectory prediction, and video-based environment understanding. These problems naturally depend on dynamic environment modeling and require differentiable prediction and latent-space representations.

The advantage of RongZhi lies in turning knowledge processing into an explicit structure. In high-risk industries such as finance, law, and healthcare, systems must not only provide answers but also explain the basis, path, and reviewable checkpoints behind those answers. In these contexts, transparency is often more important than generative capability.
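One way to picture "basis, path, and reviewable checkpoints" is an answer object that carries its own evidence trail. The `AuditedAnswer` class, its field names, and the rule IDs below are invented for illustration and are not taken from the source; the sketch only assumes that each reasoning step can be tied to an identifiable entry.

```python
from dataclasses import dataclass, field

@dataclass
class AuditedAnswer:
    """An answer bundled with the evidence and steps that produced it."""
    answer: str
    basis: list[str] = field(default_factory=list)  # IDs of rules or records relied on
    path: list[str] = field(default_factory=list)   # ordered, human-readable steps

    def add_step(self, description: str, source_id: str) -> None:
        """Record one reasoning step and the entry that justifies it."""
        self.path.append(f"{len(self.path) + 1}. {description} [{source_id}]")
        if source_id not in self.basis:
            self.basis.append(source_id)

    def is_reviewable(self) -> bool:
        """True once at least one step and one source have been recorded."""
        return bool(self.path) and bool(self.basis)

# Hypothetical loan-screening example; the rule IDs are made up.
decision = AuditedAnswer(answer="refer to manual review")
decision.add_step("Income below documented threshold", "rule:income-01")
decision.add_step("Threshold breach requires human sign-off", "rule:escalation-03")

print(decision.answer)
for line in decision.path:
    print(line)
```

A reviewer can then inspect `decision.path` step by step instead of receiving only the final answer.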

The key difference is not which path is smarter, but which path is more auditable

If a world model is a simulator, RongZhi is more like an indexing system plus an arbitration system. The former handles perception and imagination. The latter handles numbering, archiving, validation, and transformation. Only by combining the two can we build a hybrid architecture that balances perceptual capability with trustworthy governance.

| Dimension | World Model | RongZhi Dual Formalization |
|---|---|---|
| Core Objective | Learn environmental causality and state transitions | Build a unified ordinal reference system |
| Processing Target | Continuous subsymbolic signals | Discrete ordinals and their mappings |
| Primary Mechanisms | Video prediction, JEPA, latent-variable models | Twin Turing Machine, Cellular von Neumann Machine, Three Decompositions / Three Assemblies / Three Annotations |
| Interpretability | Relatively weak | Relatively strong |
| Energy Path | High training cost | More oriented toward direct addressing and reuse |
| Human-AI Collaboration | Interaction at the result layer | Intervention at the process layer |

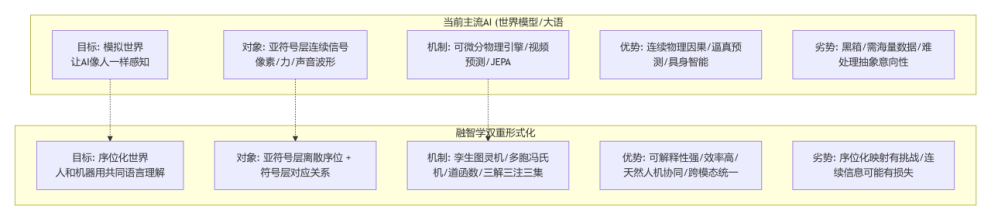

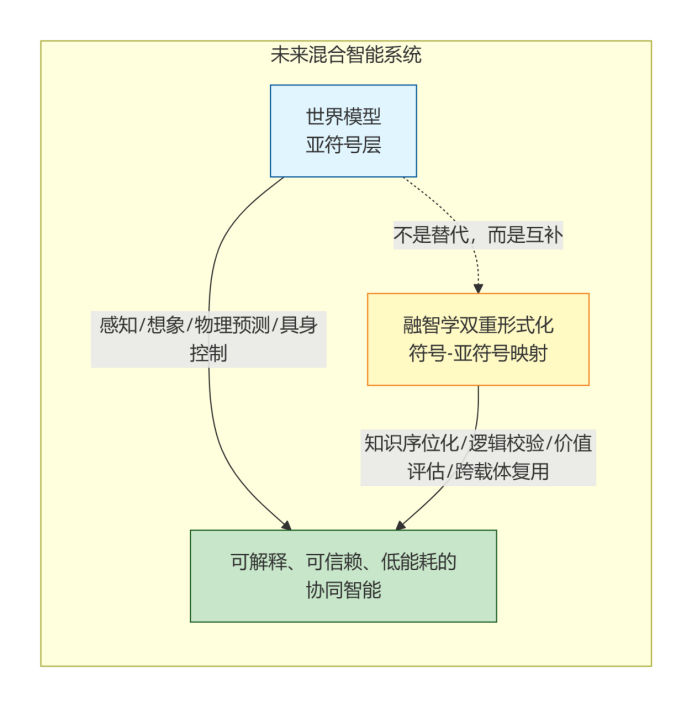

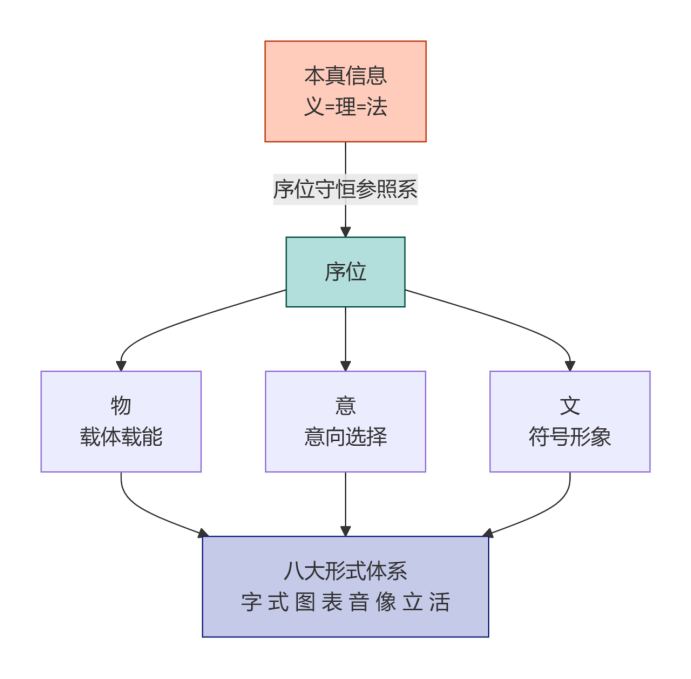

The diagrams further highlight the structural focus of this theory

AI Visual Insight: This diagram uses a flowchart to show differences in input, processing mechanisms, and output across the three paths. It emphasizes that mainstream AI relies on probabilistic learning or physical prediction, while dual formalization adds an intermediate layer of ordinal abstraction, structured mapping, and traceable invocation.

AI Visual Insight: This diagram illustrates the complementary architecture. The world model sits on the perception and imagination side and handles continuous environment modeling. RongZhi sits on the knowledge and intention side and handles indexing, validation, arbitration, and cross-modal mapping, creating layered upstream-downstream coordination.

AI Visual Insight: This diagram breaks down the mapping pipeline of the Three Decompositions, Three Assemblies, and Three Annotations. It shows the continuous processing chain from raw signal decomposition to hierarchical organization and semantic annotation, making clear that the approach is fundamentally a reviewable formalization protocol rather than a single model.

AI Visual Insight: This diagram presents eight formal systems and their dual structures. It attempts to place language, images, audio-visual content, and intention into the same ordinal framework, providing theoretical support for unified cross-modal knowledge encoding.

The value of this path comes with clear challenges

The greatest strength of dual formalization is transparency, and its greatest difficulty is also transparency. Converting the raw world into discrete ordinals requires high-quality decomposition rules, boundary definitions, and human consensus. If automatic mapping remains weak, system construction costs will be high.

In addition, when dealing with continuous and highly fluid phenomena such as emotion, music, and behavior in open environments, ordinal abstraction may lose detail. In other words, this approach does not naturally replace deep models. A more realistic route is to use deep models as the perceptual frontend and dual formalization as the knowledge backend.
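The loss of detail can be illustrated with ordinary uniform quantization, used here as a generic stand-in for ordinal abstraction (the source does not say that RongZhi uses uniform bins). The helper names `quantize`, `reconstruct`, and `max_error` are assumptions for this sketch. With values in [0, 1] and `levels` equal-width bins, the worst-case error of reconstructing a value from its bin midpoint is half a bin width, 0.5 / levels.

```python
def quantize(value: float, levels: int) -> int:
    """Map a value in [0, 1] to an ordinal in 0..levels-1."""
    return min(int(value * levels), levels - 1)

def reconstruct(ordinal: int, levels: int) -> float:
    """Return the bin midpoint as the single-value estimate for an ordinal."""
    return (ordinal + 0.5) / levels

def max_error(samples: list[float], levels: int) -> float:
    """Largest absolute gap between a sample and its reconstructed value."""
    return max(abs(s - reconstruct(quantize(s, levels), levels)) for s in samples)

samples = [i / 100 for i in range(101)]  # evenly spaced test values in [0, 1]
for levels in (4, 16, 64):
    print(levels, max_error(samples, levels))
```

Finer bins reduce the error but multiply the number of ordinals that need rules and annotations, which is the cost trade-off described above.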

For high-trust AI, hybrid architectures are more realistic than a single path

A more viable future system may look like this: the LLM handles the language interface, the world model handles environment understanding, and dual formalization handles knowledge localization, rule validation, and intention arbitration. Only with clear division of labor can a system achieve generalization, interpretability, and low-risk deployment at the same time.

```python
system_stack = {
    "interaction": "LLM",           # Handles natural language understanding and generation
    "perception": "World Model",    # Handles environment modeling and prediction
    "governance": "Formalization",  # Handles knowledge localization, validation, and arbitration
}
```

This code shows that the three are better suited to form a layered architecture than to replace one another.
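The division of labor can also be sketched as a chain of functions in which the governance layer has the final say. All three layer functions are stubs, and the `ALLOWED_INTENTS` rule set is an invented example; a real deployment would call an actual LLM, an actual world model, and an actual formalization backend at these points.

```python
from typing import Callable

def interaction(request: str) -> dict:
    """LLM layer (stub): turn a natural-language request into a structured intent."""
    return {"intent": request.strip().lower()}

def perception(state: dict) -> dict:
    """World-model layer (stub): attach a predicted outcome to the intent."""
    return {**state, "prediction": f"outcome of '{state['intent']}'"}

ALLOWED_INTENTS = {"open valve", "close valve"}  # illustrative rule set

def governance(state: dict) -> dict:
    """Formalization layer (stub): validate the intent against explicit rules."""
    return {**state, "approved": state["intent"] in ALLOWED_INTENTS}

PIPELINE: list[Callable] = [interaction, perception, governance]

def run(request: str) -> dict:
    """Pass the request through each layer in order and return the final state."""
    state = request
    for layer in PIPELINE:
        state = layer(state)
    return state

print(run("Open Valve"))
print(run("delete logs"))
```

Because approval is an explicit set-membership check, the reason a request was rejected can be read directly from the rule set rather than inferred from model behavior.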

FAQ

Q1: Is RongZhi dual formalization intended to replace large models?

No. It is better understood as a knowledge structure layer and audit layer on top of large models. Its focus is on meaning identification, logical validation, and cross-medium reuse rather than directly replacing generative models.

Q2: What is the biggest technical difference between world models and dual formalization?

World models attempt to learn causality and state changes in continuous environments. Dual formalization attempts to build discrete, traceable ordinal indexes for those states and knowledge. One is simulation-oriented; the other is localization-oriented.

Q3: Which domains are best suited for this approach?

It is better suited to high-trust, high-audit, and rule-intensive domains such as finance, law, healthcare, knowledge governance, and education systems. These scenarios require interpretability and reusability more than pure generation capability.

AI Readability Summary: This article compares three AI paths: large language models, world models, and RongZhi dual formalization. It focuses on differences in processing targets, formalization mechanisms, interpretability, energy cost, and human-AI collaboration, arguing that RongZhi is better understood as a layer for knowledge localization and intention arbitration than as a perception-and-generation model.