This article focuses on production-grade deployment practices for Spring Cloud microservices. It covers Docker image builds, Kubernetes-based rollout, and elastic scaling to address three common pain points: inconsistent environments, inefficient manual deployments, and slow service recovery. Keywords: Spring Cloud, Docker, Kubernetes.

Technical Specifications Snapshot

| Parameter | Description |
|---|---|
| Primary Languages | Java, YAML, Bash |
| Core Protocols | HTTP, TCP, Docker Registry, Kubernetes API |
| Core Dependencies | Spring Boot 2.7.15, Spring Cloud 2021.0.8, Spring Cloud Alibaba 2021.0.5.0, Docker 24.0.6, Kubernetes 1.26.10, Nacos 2.2.3, Harbor 2.8.4 |

Docker and Kubernetes Have Become the De Facto Standard for Spring Cloud Production Deployments

Once a Spring Cloud project grows into a multi-service system, manual releases quickly become unmanageable. Typical issues include environment drift, port conflicts, missing configuration, and high manual recovery costs after service instance failures.

Docker solves build-to-runtime consistency. Kubernetes solves deployment, governance, and elasticity. Together, they shift the release model from machine-by-machine operations to declarative resource management, which is far better suited for continuous delivery in production environments.

Production Environments Should Standardize the Version Matrix First

| Component | Recommended Version | Notes |
|---|---|---|
| JDK | OpenJDK 8u382 | Stable LTS release |
| Spring Boot | 2.7.15 | Mature release from the final 2.x minor line |
| Spring Cloud | 2021.0.8 | Compatible with Spring Boot 2.7.x |
| Spring Cloud Alibaba | 2021.0.5.0 | Compatible with Nacos 2.x |
| Docker | 24.0.6 | Stable and production-ready |
| Kubernetes | 1.26.10 | Broad compatibility across cloud vendors |

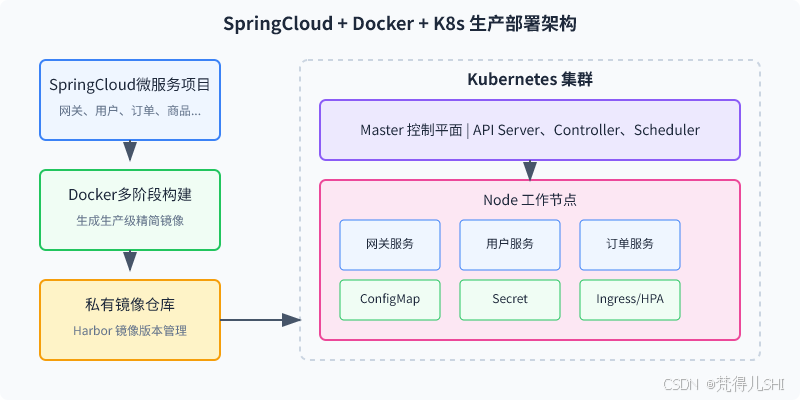

The Architecture Diagram Clearly Shows the End-to-End Flow

AI Visual Insight: The diagram shows a typical cloud-native Spring Cloud deployment path: external traffic first enters the gateway and Ingress layer, then routes to individual microservices; services coordinate through the service registry and configuration center; Kubernetes provides Pod scheduling, service discovery, rolling updates, and auto scaling at the infrastructure layer. Together, these layers form a complete architecture from entry, to governance, to runtime.

From a production perspective, the key value of this diagram is not that it includes every component, but that it defines clear responsibility boundaries: the gateway provides a unified entry point, Nacos handles service registration and configuration, and Kubernetes takes over lifecycle management and high availability.

Three Changes Must Be Completed Before Containerizing the Project

First, configuration must support environment-variable injection so that values such as the service registry address and the database URL are not hardcoded into the package. Second, every service should produce an executable fat JAR, which keeps image builds simple. Third, Actuator health endpoints should be enabled in advance so that Kubernetes probes have something to check.
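A ConfigMap or Secret usually reaches a Spring Boot service as environment variables, so it helps to know which variable name overrides which property. The sketch below is a minimal illustration, not Spring's own binder. It applies the rule Spring Boot documents for binding from environment variables: replace dots with underscores, drop dashes, and convert to upper case. The property `spring.cloud.nacos.discovery.server-addr` and the fallback `127.0.0.1:8848` are illustrative assumptions. List indices and other relaxed-binding cases are not handled.

```java
import java.util.Locale;
import java.util.Map;

class EnvVarNames {
    // Spring Boot's documented env-var binding rule: '.' -> '_', remove '-', upper-case.
    // Simplified: list indices such as my.list[0] are not handled here.
    static String toEnvVar(String propertyName) {
        return propertyName.replace(".", "_").replace("-", "").toUpperCase(Locale.ROOT);
    }

    // Reads a property from the given environment, falling back to a default,
    // similar in spirit to a ${...:default} placeholder in application.yml.
    static String lookup(Map<String, String> env, String propertyName, String defaultValue) {
        String value = env.get(toEnvVar(propertyName));
        return value != null ? value : defaultValue;
    }

    public static void main(String[] args) {
        String prop = "spring.cloud.nacos.discovery.server-addr"; // illustrative property
        System.out.println(toEnvVar(prop)); // prints SPRING_CLOUD_NACOS_DISCOVERY_SERVERADDR
        System.out.println(lookup(System.getenv(), prop, "127.0.0.1:8848"));
    }
}
```

In a Deployment, setting `SPRING_CLOUD_NACOS_DISCOVERY_SERVERADDR` from a ConfigMap key would then override the packaged value without rebuilding the image.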

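```yaml
server:
  shutdown: graceful # Enable graceful shutdown
spring:
  lifecycle:
    timeout-per-shutdown-phase: 30s # Upper bound on how long each shutdown phase may wait
management:
  endpoint:
    health:
      probes:
        enabled: true # Expose /actuator/health/liveness and /readiness, also outside Kubernetes
```

With this configuration, Spring Boot stops accepting new requests when a Pod is terminated and waits up to 30 seconds for in-flight requests to finish. The probes setting publishes the liveness and readiness health groups that Kubernetes probes can target.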
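Spring Boot's graceful shutdown follows a familiar pattern: refuse new work, wait a bounded time for in-flight work, then stop. The sketch below reproduces that pattern with a plain `ExecutorService` to make the timeout semantics concrete. It is an analogy, not how Spring's embedded web server implements shutdown. `simulateRequest` is a hypothetical stand-in for a request.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

class GracefulDrain {
    // Refuse new work, wait up to `timeout` for in-flight work, then force-stop.
    // Returns true if everything finished within the timeout.
    static boolean drain(ExecutorService workers, long timeout, TimeUnit unit) {
        workers.shutdown(); // from here on, new submissions are rejected
        try {
            if (workers.awaitTermination(timeout, unit)) {
                return true;
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for the caller
        }
        workers.shutdownNow(); // interrupt tasks that outlived the timeout
        return false;
    }

    // Hypothetical stand-in for handling one request
    static void simulateRequest(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    public static void main(String[] args) {
        ExecutorService workers = Executors.newFixedThreadPool(2);
        workers.submit(() -> simulateRequest(200)); // a request still running at SIGTERM time
        // 30 seconds mirrors spring.lifecycle.timeout-per-shutdown-phase
        System.out.println("drained cleanly: " + drain(workers, 30, TimeUnit.SECONDS)); // prints drained cleanly: true
    }
}
```

One practical consequence follows. The Pod's terminationGracePeriodSeconds (30 seconds by default) should be longer than timeout-per-shutdown-phase. Otherwise the kubelet may send SIGKILL before draining finishes.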

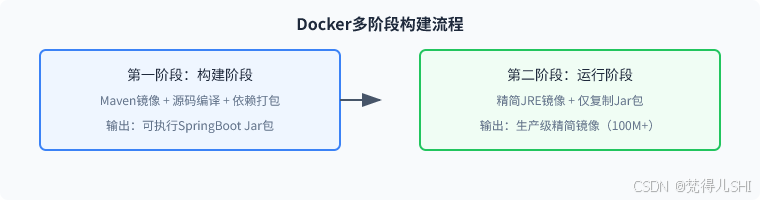

Multi-Stage Dockerfiles Are the Core Technique for Image Optimization

Single-stage builds tend to produce oversized images and slow builds. They also needlessly ship build tooling (Maven, source code, a full JDK) in the runtime image. In production, use a two-stage builder + runtime model so that only the final JAR is copied into the runtime image.

```dockerfile
# ---- Build stage ----
FROM maven:3.8.6-openjdk-8 AS builder
WORKDIR /app
# Resolve dependencies first so this layer stays cached until pom.xml changes
COPY pom.xml .
RUN mvn dependency:go-offline -B
COPY src ./src
# Package the executable JAR
RUN mvn clean package -DskipTests -B

# ---- Runtime stage ----
FROM openjdk:8-jre-slim
ENV TZ=Asia/Shanghai
WORKDIR /app
COPY --from=builder /app/target/*.jar app.jar
# Run as a non-root user
RUN groupadd -r appgroup && useradd -r -g appgroup appuser
RUN chown -R appuser:appgroup /app
USER appuser
EXPOSE 8081
# exec replaces the shell, so the JVM runs as PID 1 and receives SIGTERM for graceful shutdown
ENTRYPOINT ["sh", "-c", "exec java $JAVA_OPTS -jar app.jar $SPRING_OPTS"]
```

This Dockerfile addresses four issues at once: dependency caching, timezone alignment, a minimal runtime image, and container security. Starting the JVM with exec also ensures it receives the SIGTERM that triggers graceful shutdown.

AI Visual Insight: The image shows a post-containerization runtime or validation view. It highlights that, once the image build is complete, the service instance can start successfully inside a containerized environment and enter an observable, verifiable state. Screenshots like this are commonly used to confirm consistency between the Dockerfile, the effective runtime parameters, and the actual application behavior.

Local Validation Should Come Before Registry Pushes and Cluster Deployment

```bash
docker build -t user-service:1.0.0 .

docker run -d -p 8081:8081 \
  -e JAVA_OPTS="-Xms512m -Xmx512m" \
  -e SPRING_OPTS="--spring.profiles.active=test --nacos.server-addr=192.168.1.100:8848" \
  --name user-service-test \
  user-service:1.0.0

# A response of {"status":"UP"} indicates the service is healthy
curl http://127.0.0.1:8081/actuator/health
```

This step uses the smallest possible feedback loop to verify that the image, startup parameters, and health endpoint all work as expected.
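The curl check can be turned into a small retrying probe for scripts or CI smoke tests. This sketch makes several assumptions:

- JDK 8, so it uses HttpURLConnection rather than java.net.http.
- The port mapping from the docker run command above.
- The first "status" field in the response is the overall status. It extracts that field with a regular expression instead of a JSON library.

Actuator answers DOWN with HTTP 503, so the body is read from the error stream as well. The class name `HealthWait` is hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class HealthWait {
    // First "status" field; in typical Actuator output this is the overall status
    private static final Pattern STATUS = Pattern.compile("\"status\"\\s*:\\s*\"([A-Z_]+)\"");

    static String status(String body) {
        Matcher m = STATUS.matcher(body);
        return m.find() ? m.group(1) : "UNKNOWN";
    }

    // GETs the URL and returns the body, reading the error stream for 4xx/5xx (DOWN maps to 503)
    static String fetch(String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        conn.setConnectTimeout(2000);
        conn.setReadTimeout(2000);
        try {
            InputStream in = conn.getResponseCode() >= 400 ? conn.getErrorStream() : conn.getInputStream();
            if (in == null) {
                return "";
            }
            try (InputStream body = in) {
                ByteArrayOutputStream out = new ByteArrayOutputStream();
                byte[] buf = new byte[4096];
                int n;
                while ((n = body.read(buf)) != -1) {
                    out.write(buf, 0, n);
                }
                return new String(out.toByteArray(), StandardCharsets.UTF_8);
            }
        } finally {
            conn.disconnect();
        }
    }

    // Polls until the overall status is UP or the attempts run out
    static boolean waitUntilUp(String url, int attempts, long intervalMillis) throws InterruptedException {
        for (int i = 0; i < attempts; i++) {
            try {
                if ("UP".equals(status(fetch(url)))) {
                    return true;
                }
            } catch (IOException e) {
                // Connection refused or timed out while the JVM is still starting: retry
            }
            Thread.sleep(intervalMillis);
        }
        return false;
    }

    public static void main(String[] args) throws InterruptedException {
        boolean up = waitUntilUp("http://127.0.0.1:8081/actuator/health", 30, 2000);
        System.out.println(up ? "user-service is UP" : "user-service did not become healthy");
        System.exit(up ? 0 : 1);
    }
}
```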

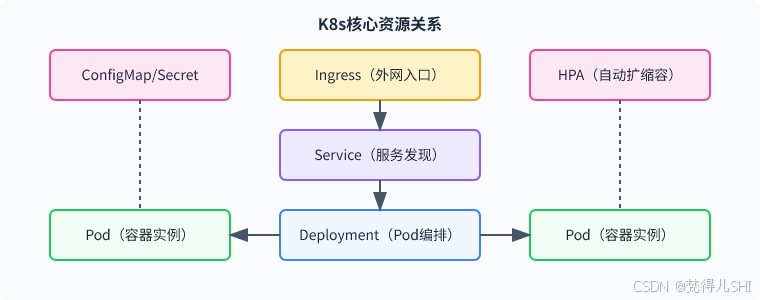

Kubernetes Orchestration Should Decouple Configuration, Secrets, and Workloads

A Namespace provides environment isolation. A ConfigMap stores non-sensitive configuration. A Secret stores passwords and registry credentials. A Deployment defines replicas and probes. A Service provides a stable access address. An Ingress handles external traffic entry. An HPA manages load-based scaling.

AI Visual Insight: The image shows Kubernetes resource orchestration or a visualized resource topology, emphasizing the relationship between namespace isolation, configuration injection, service deployment, and traffic entry. Its technical significance is that it elevates microservices from single-host process management to declarative cluster management.

The Key to a Deployment Configuration Is Not Whether It Runs, but Whether It Runs Reliably

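```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: user-service
  namespace: prod-cloud
spec:
  replicas: 2 # Use at least two replicas in production
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0 # Never drop below the desired replica count during a rolling update
  selector:
    matchLabels:
      app: user-service
  template:
    metadata:
      labels:
        app: user-service
    spec:
      imagePullSecrets:
        - name: harbor-registry-secret
      containers:
        - name: user-service
          image: harbor.example.com/prod-cloud/user-service:1.0.0
          ports:
            - containerPort: 8081
          # Example values (assumed; size them per service): HPA CPU utilization is computed
          # against requests.cpu, and the memory limit must leave headroom above -Xmx
          resources:
            requests:
              cpu: 500m
              memory: 768Mi
            limits:
              memory: 768Mi
          # Spring Boot 2.3+ exposes the liveness/readiness health groups automatically on Kubernetes
          startupProbe:
            httpGet:
              path: /actuator/health/liveness
              port: 8081
            periodSeconds: 5
            failureThreshold: 30 # Up to ~150s to boot before liveness/readiness checks start
          readinessProbe:
            httpGet:
              path: /actuator/health/readiness
              port: 8081
          livenessProbe:
            httpGet:
              path: /actuator/health/liveness
              port: 8081
```

This configuration provides the foundation for zero-downtime rollouts, self-healing recovery, and slow-start protection for Spring Cloud services on Kubernetes.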
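The sketch below shows what maxSurge: 1 and maxUnavailable: 0 mean for replicas: 2. It computes the Pod-count bounds during a rollout the way the Deployment controller resolves these fields:

- Absolute values are used as-is.
- Percentages are rounded up for maxSurge and down for maxUnavailable.
- If both resolve to zero, one unavailable Pod is allowed.

It is a simplified model that ignores readiness timing, and the class name is hypothetical.

```java
class RollingUpdateBounds {
    // Resolves an int-or-percent field: percentages are scaled by replicas and
    // rounded up (maxSurge) or down (maxUnavailable); plain integers are used as-is.
    static int resolve(String value, int replicas, boolean roundUp) {
        if (!value.endsWith("%")) {
            return Integer.parseInt(value);
        }
        int percent = Integer.parseInt(value.substring(0, value.length() - 1));
        int scaled = replicas * percent;
        return roundUp ? (scaled + 99) / 100 : scaled / 100;
    }

    // Returns {maximum total Pods, minimum available Pods} during a rolling update
    static int[] bounds(int replicas, String maxSurge, String maxUnavailable) {
        int surge = resolve(maxSurge, replicas, true);
        int unavailable = resolve(maxUnavailable, replicas, false);
        if (surge == 0 && unavailable == 0) {
            unavailable = 1; // both at zero would stall the rollout, so one Pod may go down
        }
        return new int[] {replicas + surge, replicas - unavailable};
    }

    public static void main(String[] args) {
        int[] prod = bounds(2, "1", "0"); // values from the Deployment above
        System.out.println("max Pods: " + prod[0] + ", min available: " + prod[1]); // prints max Pods: 3, min available: 2
        int[] defaults = bounds(2, "25%", "25%"); // Kubernetes defaults when the strategy is omitted
        System.out.println("max Pods: " + defaults[0] + ", min available: " + defaults[1]);
    }
}
```

With maxUnavailable: 0, capacity never dips below two Pods. The cost is that the cluster needs room for a third Pod while each new one starts.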

Ingress and HPA Determine the System’s Entry Capacity and Elasticity

For Ingress, a best practice is to expose only the gateway instead of exposing business services directly through NodePort. This makes it easier to enforce HTTPS, timeout controls, domain-based routing, and centralized security policies.

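HPA reads resource metrics from metrics-server (or custom metrics through a metrics adapter) and adjusts replica counts based on CPU utilization or those custom metrics. For traffic that fluctuates faster than operators can react, it is more reliable than manual scaling. It still responds on the scale of its sync period (15 seconds by default) plus Pod startup time, so it does not react instantly.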

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: user-service-hpa
  namespace: prod-cloud
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: user-service
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70 # Scale out when average CPU usage exceeds 70% of the Pods' CPU requests
```

This HPA configuration gives the service baseline autoscaling and works well for high-concurrency services such as orders, users, and products.
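The core HPA calculation is documented as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue). The HPA skips scaling when that ratio is within a tolerance of 1.0 (0.1 by default) and clamps the result to minReplicas/maxReplicas. The sketch below implements just that formula for the 70% CPU target above. It omits stabilization windows, scaling policies, and the handling of unready Pods, so treat it as a back-of-the-envelope model. Utilization is measured against each container's resources.requests.cpu, so the target Deployment must declare CPU requests for this HPA to compute anything.

```java
class HpaMath {
    // Default value of the kube-controller-manager flag --horizontal-pod-autoscaler-tolerance
    static final double TOLERANCE = 0.1;

    // desiredReplicas = ceil(currentReplicas * currentUtilization / targetUtilization),
    // unchanged when the ratio is within TOLERANCE of 1.0, then clamped to [min, max].
    static int desiredReplicas(int currentReplicas, double currentUtilization,
                               double targetUtilization, int minReplicas, int maxReplicas) {
        double ratio = currentUtilization / targetUtilization;
        int desired = Math.abs(ratio - 1.0) <= TOLERANCE
                ? currentReplicas
                : (int) Math.ceil(currentReplicas * ratio);
        return Math.max(minReplicas, Math.min(maxReplicas, desired));
    }

    public static void main(String[] args) {
        // Two Pods averaging 140% of their CPU request against the 70% target
        System.out.println(desiredReplicas(2, 140, 70, 2, 10)); // prints 4
        // Load drops to 20%: the formula asks for 2 Pods, which is also minReplicas
        System.out.println(desiredReplicas(4, 20, 70, 2, 10)); // prints 2
    }
}
```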

Production Rollout Must Follow Dependency Order

Create the Namespace, ConfigMap, and Secret first. Then deploy the Deployment and Service. Finally, attach the Ingress and HPA. This order reduces startup failures caused by image pull errors, missing environment variables, or dependencies that are not ready.

```bash
kubectl apply -f 01-namespace.yaml
kubectl apply -f 02-configmap.yaml
kubectl apply -f 03-registry-secret.yaml -f 04-cloud-secret.yaml
kubectl apply -f 05-user-service-deployment.yaml -f 06-user-service-svc.yaml
kubectl get pods -n prod-cloud
kubectl get ingress -n prod-cloud
```

These commands cover the minimum viable production rollout loop and can be used for fast status validation before and after deployment.
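The apply order is a dependency ordering, which the sketch below makes explicit with Kahn's topological sort. The edges are an assumption that mirrors the order described above; for example, the Deployment depends on the ConfigMap and Secrets, and the HPA depends on the Deployment. The class name and resource labels are illustrative. In practice, the numbered file prefixes already encode the same order.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class ApplyOrder {
    // Kahn's algorithm: emit a resource only after all of its dependencies were emitted
    static List<String> order(Map<String, List<String>> dependsOn) {
        Map<String, Integer> pending = new HashMap<>();          // unmet dependencies per resource
        Map<String, List<String>> dependents = new HashMap<>();  // reverse edges
        for (Map.Entry<String, List<String>> e : dependsOn.entrySet()) {
            pending.put(e.getKey(), e.getValue().size());
            for (String dep : e.getValue()) {
                if (!dependsOn.containsKey(dep)) {
                    throw new IllegalArgumentException(e.getKey() + " depends on unknown " + dep);
                }
                dependents.computeIfAbsent(dep, k -> new ArrayList<>()).add(e.getKey());
            }
        }
        Deque<String> ready = new ArrayDeque<>();
        for (String r : dependsOn.keySet()) {
            if (pending.get(r) == 0) ready.add(r);
        }
        List<String> result = new ArrayList<>();
        while (!ready.isEmpty()) {
            String r = ready.poll();
            result.add(r);
            for (String next : dependents.getOrDefault(r, Collections.<String>emptyList())) {
                if (pending.merge(next, -1, Integer::sum) == 0) ready.add(next);
            }
        }
        if (result.size() != dependsOn.size()) {
            throw new IllegalStateException("dependency cycle among resources");
        }
        return result;
    }

    public static void main(String[] args) {
        Map<String, List<String>> dependsOn = new LinkedHashMap<>(); // assumed edges
        dependsOn.put("Namespace", Collections.<String>emptyList());
        dependsOn.put("ConfigMap", Arrays.asList("Namespace"));
        dependsOn.put("Secret", Arrays.asList("Namespace"));
        dependsOn.put("Deployment", Arrays.asList("ConfigMap", "Secret"));
        dependsOn.put("Service", Arrays.asList("Namespace"));
        dependsOn.put("Ingress", Arrays.asList("Service"));
        dependsOn.put("HorizontalPodAutoscaler", Arrays.asList("Deployment"));
        // prints [Namespace, ConfigMap, Secret, Service, Deployment, Ingress, HorizontalPodAutoscaler]
        System.out.println(order(dependsOn));
    }
}
```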

Most Production Pitfalls Center on Probes, Resources, and Service Registration

First, Spring Cloud services often start slowly, so you must configure a startupProbe. Otherwise, the livenessProbe can kill instances that are still booting. Second, the JVM -Xmx should not consume the full container memory limit. A good rule is to reserve at least 20% of the limit for non-heap memory such as Metaspace, thread stacks, and direct buffers. Third, if Nacos runs outside the cluster, registration by Pod IP and callbacks to those IPs become fragile, because Pod IPs are usually not routable from outside the cluster network. The safest option is to run the service registry inside the cluster network.
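The 20% rule translates into simple arithmetic between the container memory limit and -Xmx. The helper below is a sketch using integer MiB values, and the 1024 MiB limit in main is a hypothetical example. On JDK 8u191 and later, -XX:MaxRAMPercentage=80.0 expresses the same ceiling as a percentage of the container limit, so there are not two numbers to keep in sync.

```java
class HeapSizing {
    // Largest -Xmx (MiB) that still leaves reservePercent of the container limit for non-heap memory
    static long maxHeapMiB(long containerLimitMiB, int reservePercent) {
        checkPercent(reservePercent);
        return containerLimitMiB * (100 - reservePercent) / 100; // rounds down
    }

    // Smallest container limit (MiB) that keeps reservePercent free above a given -Xmx
    static long minLimitMiB(long heapMiB, int reservePercent) {
        checkPercent(reservePercent);
        long heapShare = 100 - reservePercent;
        return (heapMiB * 100 + heapShare - 1) / heapShare; // rounds up
    }

    private static void checkPercent(int reservePercent) {
        if (reservePercent < 0 || reservePercent >= 100) {
            throw new IllegalArgumentException("reservePercent must be in [0, 100)");
        }
    }

    public static void main(String[] args) {
        System.out.println("-Xmx" + maxHeapMiB(1024, 20) + "m"); // prints -Xmx819m for a 1024 MiB limit
        System.out.println(minLimitMiB(512, 20) + "Mi"); // prints 640Mi: minimum limit for -Xmx512m
        // Roughly the heap ceiling the running JVM chose; confirms JAVA_OPTS took effect in the container
        System.out.println("max heap: " + Runtime.getRuntime().maxMemory() / (1024 * 1024) + " MiB");
    }
}
```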

FAQ

1. Why do Spring Cloud services restart frequently in Kubernetes?

A common cause is the absence of startupProbe, which allows the liveness probe to fail before the service has finished starting. Another frequent cause is an oversized JVM memory configuration that triggers OOMKill.
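A startupProbe tolerates a slow boot for roughly initialDelaySeconds + failureThreshold × periodSeconds. With the Kubernetes defaults (initialDelaySeconds 0, periodSeconds 10, failureThreshold 3), that is only about 30 seconds, which many Spring Cloud services exceed. The sketch below computes that budget and the smallest failureThreshold that covers a measured startup time. The 120-second figure in main is a hypothetical measurement, and in practice you would add headroom on top of it.

```java
class StartupProbeBudget {
    // Approximate worst-case wait before the kubelet restarts a container whose startupProbe keeps failing
    static int budgetSeconds(int initialDelaySeconds, int periodSeconds, int failureThreshold) {
        return initialDelaySeconds + periodSeconds * failureThreshold;
    }

    // Smallest failureThreshold whose budget covers expectedStartupSeconds (rounded up)
    static int failureThresholdFor(int expectedStartupSeconds, int periodSeconds) {
        if (periodSeconds <= 0) {
            throw new IllegalArgumentException("periodSeconds must be positive");
        }
        return (expectedStartupSeconds + periodSeconds - 1) / periodSeconds;
    }

    public static void main(String[] args) {
        System.out.println("default budget: " + budgetSeconds(0, 10, 3) + "s"); // prints default budget: 30s
        int measuredStartup = 120; // hypothetical measured boot time in seconds
        int threshold = failureThresholdFor(measuredStartup, 5);
        System.out.println("periodSeconds 5 -> failureThreshold " + threshold
                + " (budget " + budgetSeconds(0, 5, threshold) + "s)");
    }
}
```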

2. How should ConfigMap, Secret, and Nacos divide responsibilities?

ConfigMap is suitable for shared, non-sensitive static configuration. Secret should store passwords and registry credentials. Nacos is better suited for dynamic business configuration and runtime refresh. These three should work together rather than replace one another.

3. Why is it recommended to expose only the gateway through Ingress?

Ingress is better suited for unified management of domains, TLS, timeouts, rate limiting, and Layer 7 routing rules. It also avoids the operational complexity, broad exposure surface, and fragmented ports that come with NodePort.

Core Summary

This article walks through a production-ready approach to Docker containerization and Kubernetes orchestration for Spring Cloud. It covers version selection, Dockerfile optimization, ConfigMap/Secret/Deployment/Ingress/HPA configuration, rollout flow, and common pitfalls. The material is directly actionable for backend engineers, operations teams, and architects.