This article focuses on the engineering implementation of a Linux C++ thread pool. It explains how a thread pool uses a task queue, worker threads, mutexes, and condition variables to solve the high overhead of frequent thread creation, then extends the discussion to the singleton pattern and concurrency safety. Keywords: thread pool, pthread, high concurrency.

Technical specification snapshot

| Parameter | Description |
|---|---|
| Language | C++ |
| Runtime Environment | Linux |
| Concurrency Model | Multi-producer, multi-consumer |
| Core Protocol/Standard | POSIX Threads (pthread) |
| Article Popularity | 422 views, 26 likes, 17 bookmarks |
| Core Dependencies | pthread, STL queue/vector, std::function |

Thread pools are foundational infrastructure for high-concurrency backends

A thread pool is not simply about “starting more threads.” Its core purpose is to pre-create, reuse, and centrally schedule threads as an expensive system resource. It addresses three core pain points: the overhead of frequent thread creation and destruction, uncontrolled thread growth under burst traffic, and tight coupling between business logic and thread management.

In web services, asynchronous logging, batch jobs, and RPC workloads, a thread pool can reduce task latency from “create a thread, then execute” to “execute immediately after receiving a task,” while keeping concurrency within a controllable range.

The value of a thread pool can be summarized in four points

- It reuses threads to avoid repeated thread creation and destruction, which reduces CPU and memory overhead.

- It caps concurrency to prevent thread explosion.

- It centralizes lifecycle management to support monitoring and cleanup.

- It lets application code focus on task submission instead of low-level thread details.

    #include <functional>
    #include <iostream>

    // Abstract task submission form: business threads only submit tasks
    using task_t = std::function<void()>; // Use type erasure to unify the task interface

    void SubmitTask(ThreadPool<task_t>& pool) {
        pool.Enqueue([]() {
            // Write the actual business logic here
            std::cout << "process request" << std::endl; // Core task execution
        });
    }

This snippet shows that a thread pool exposes a task interface rather than a thread interface.

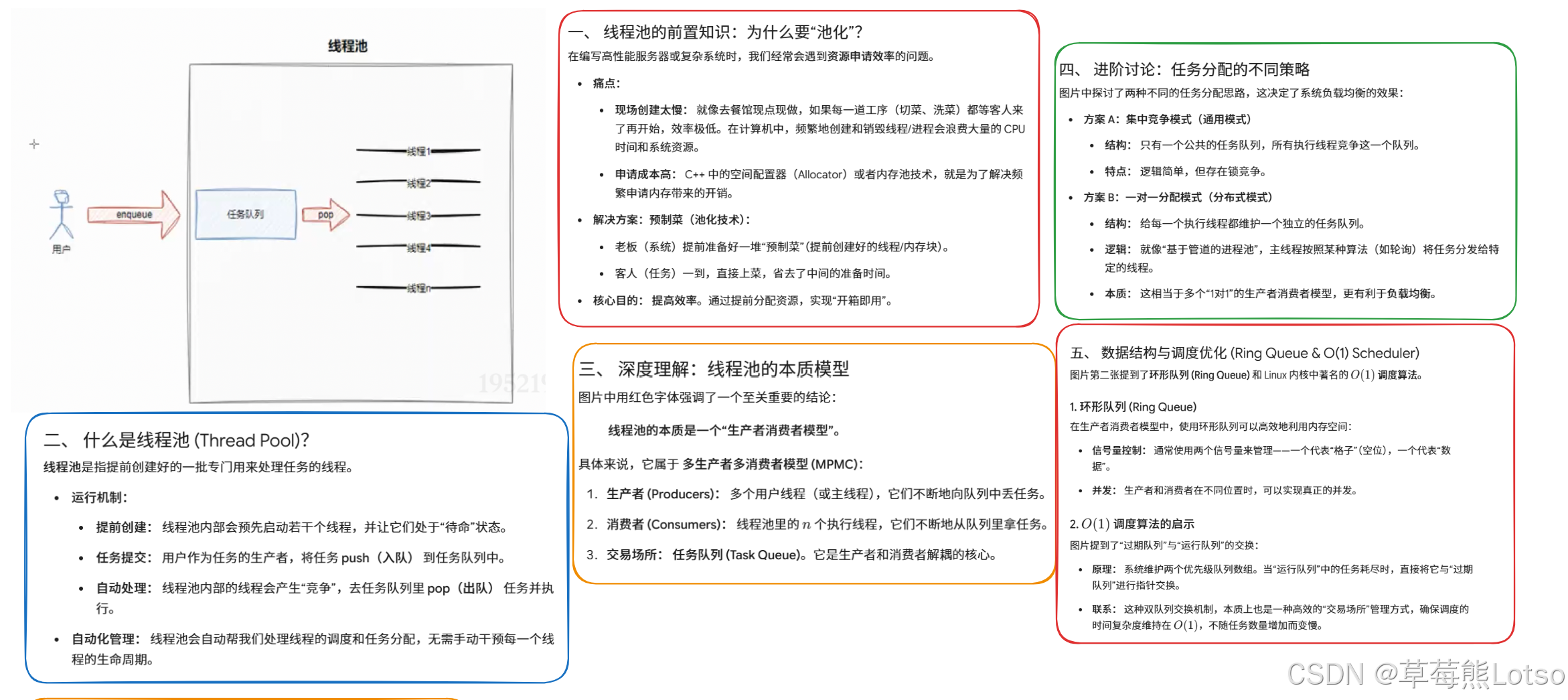

A thread pool is fundamentally a producer-consumer model

User threads produce tasks, worker threads inside the thread pool consume them, and the task queue is the shared critical resource between both sides. Once you understand the relationship between these three roles, most thread pool design decisions become straightforward.

AI Visual Insight: The image illustrates a typical producer-consumer structure for a thread pool: upstream requests enter the task queue, the shared region is protected by a mutex and condition variable, and multiple downstream worker threads fetch and execute tasks in parallel. It highlights the core architecture of queue buffering, thread reuse, and synchronized wake-up.


A complete thread pool includes at least four modules

- Task queue: buffers pending tasks.

- Worker thread group: continuously fetches and executes tasks.

- Synchronization primitives: a mutex protects the queue, and a condition variable coordinates waiting and wake-up.

- State management: controls startup, running, shutdown, and cleanup.
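The four modules above map naturally onto the data members of a class. The layout below is only a sketch under that assumption: `PoolLayout`, its `State` enum, and every member name are hypothetical and are not taken from the article's implementation. It shows where each module lives, and it does not start any threads.

```cpp
#include <pthread.h>
#include <cassert>
#include <cstddef>
#include <functional>
#include <queue>
#include <vector>

using task_t = std::function<void()>;

// Hypothetical layout: one data member (or group) per module listed above.
class PoolLayout {
public:
    enum class State { Created, Running, Stopping, Stopped };

    explicit PoolLayout(std::size_t worker_count) : _worker_count(worker_count) {
        int rc = pthread_mutex_init(&_mutex, nullptr);
        assert(rc == 0);
        rc = pthread_cond_init(&_cond, nullptr);
        assert(rc == 0);
        (void)rc;
        _workers.reserve(worker_count); // Threads would be created later, at startup
    }
    ~PoolLayout() {
        pthread_cond_destroy(&_cond);
        pthread_mutex_destroy(&_mutex);
    }
    PoolLayout(const PoolLayout&) = delete;
    PoolLayout& operator=(const PoolLayout&) = delete;

    // No worker threads exist in this sketch, so reading the state unlocked is fine here.
    State GetState() const { return _state; }
    std::size_t WorkerCount() const { return _worker_count; }

    std::size_t PendingTasks() {
        pthread_mutex_lock(&_mutex); // The queue is shared, so even a size query locks
        std::size_t n = _queue.size();
        pthread_mutex_unlock(&_mutex);
        return n;
    }

private:
    std::queue<task_t> _queue;       // 1. Task queue
    std::vector<pthread_t> _workers; // 2. Worker thread group
    pthread_mutex_t _mutex;          // 3. Synchronization: protects _queue and _state
    pthread_cond_t _cond;            // 3. Synchronization: "queue non-empty or stopping"
    State _state = State::Created;   // 4. State management
    std::size_t _worker_count;
};
```

Keeping the mutex next to the data it protects makes it easier to audit that every queue access happens under the lock.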

AI Visual Insight: The image breaks the thread pool into four layers: task queue, thread set, synchronization mechanism, and state control. It emphasizes a key concurrency design boundary: access to shared resources must be serialized, while task execution must remain parallel.


A correct thread pool implementation must satisfy three design constraints

First, task execution must happen outside the critical section; otherwise, the thread pool degrades into a serial queue. Second, condition variable waits must re-check the condition in a while loop to guard against spurious wakeups. Third, the exit condition must be “the thread pool has stopped and the task queue is empty”; otherwise, tasks may be lost.

    while (true) {
        task_t task;
        {
            LockGuard lock(&mutex); // Hold the lock only while touching the queue
            while (queue.empty() && is_running) {
                cond.Wait(mutex); // Sleep when the queue is empty to avoid busy waiting
            }
            if (queue.empty() && !is_running) {
                break; // Exit is allowed only when shutdown has started and no tasks remain
            }
            task = queue.front();
            queue.pop();
        } // Release the critical section immediately after taking a task
        task(); // Run the task outside the lock
    }

This logic defines the minimum correct semantics for a worker thread in a thread pool.

Why while matters more than if

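A thread waking up does not mean the condition is definitely satisfied. The operating system may trigger a spurious wakeup, and when several workers wait on the same condition variable, another worker may take the task before the awakened thread reacquires the lock. If you only use if, the thread may continue even though the queue is still empty, eventually causing an empty-queue access or an incorrect exit.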
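The effect of re-checking the condition can be sketched with the raw pthread API. The example below is an illustration, not the article's code: `Shared`, `Consumer`, and `RunWakeupDemo` are hypothetical names. The producer first sends a signal with no task behind it, which looks to the consumer like a spurious wakeup, and only then pushes a real task.

```cpp
#include <pthread.h>
#include <cassert>
#include <queue>

// Hypothetical shared state for the demonstration.
struct Shared {
    pthread_mutex_t mutex;
    pthread_cond_t cond;
    std::queue<int> queue;
    int result = -1;

    Shared() {
        int rc = pthread_mutex_init(&mutex, nullptr);
        assert(rc == 0);
        rc = pthread_cond_init(&cond, nullptr);
        assert(rc == 0);
        (void)rc;
    }
    ~Shared() {
        pthread_cond_destroy(&cond);
        pthread_mutex_destroy(&mutex);
    }
};

// Consumer: re-checks the predicate after every wake-up.
void* Consumer(void* arg) {
    Shared* s = static_cast<Shared*>(arg);
    pthread_mutex_lock(&s->mutex);
    while (s->queue.empty()) { // 'while', not 'if'
        pthread_cond_wait(&s->cond, &s->mutex);
    }
    s->result = s->queue.front(); // Safe: the loop only exits with a non-empty queue
    s->queue.pop();
    pthread_mutex_unlock(&s->mutex);
    return nullptr;
}

int RunWakeupDemo() {
    Shared s;
    pthread_t consumer;
    int rc = pthread_create(&consumer, nullptr, Consumer, &s);
    assert(rc == 0);
    (void)rc;

    // A signal with no task behind it. If the consumer is already waiting,
    // it wakes up, finds the queue still empty, and goes back to sleep.
    pthread_mutex_lock(&s.mutex);
    pthread_cond_signal(&s.cond);
    pthread_mutex_unlock(&s.mutex);

    // The real task arrives afterwards.
    pthread_mutex_lock(&s.mutex);
    s.queue.push(42);
    pthread_cond_signal(&s.cond);
    pthread_mutex_unlock(&s.mutex);

    pthread_join(consumer, nullptr);
    return s.result;
}
```

With `if` in place of `while`, the consumer could reach `queue.front()` on an empty queue whenever the empty signal arrives while it is waiting, which is undefined behavior for `std::queue`.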

Core synchronization components should be wrapped with RAII first

The raw pthread API is usable, but direct calls often lead to missed unlocks or incorrect destruction order. In production code, engineers usually wrap Mutex, LockGuard, and Cond first so that “lock on construction, unlock on destruction” becomes guaranteed behavior.

    class LockGuard {
    public:
        explicit LockGuard(Mutex* m) : _m(m) {
            _m->Lock(); // Lock on construction to protect the critical section
        }
        ~LockGuard() {
            _m->UnLock(); // Unlock on destruction to avoid omissions
        }
        // Non-copyable: a copied guard would unlock the same mutex twice
        LockGuard(const LockGuard&) = delete;
        LockGuard& operator=(const LockGuard&) = delete;

    private:
        Mutex* _m;
    };

The value of this wrapper is that it solves unlock correctness in exception paths and early returns in one place.
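To make the exception-path claim concrete, here is a small sketch. The `Mutex` class is an assumed shape that matches the `Lock`/`UnLock` calls used by `LockGuard`; the article does not show its own `Mutex`, so the body, the `TryLock` helper, and the two free functions are hypothetical.

```cpp
#include <pthread.h>
#include <cassert>
#include <stdexcept>

// Hypothetical Mutex wrapper exposing the Lock/UnLock interface LockGuard expects.
class Mutex {
public:
    Mutex() {
        int rc = pthread_mutex_init(&_lock, nullptr);
        assert(rc == 0);
        (void)rc;
    }
    ~Mutex() { pthread_mutex_destroy(&_lock); }
    Mutex(const Mutex&) = delete;
    Mutex& operator=(const Mutex&) = delete;

    void Lock() { pthread_mutex_lock(&_lock); }
    void UnLock() { pthread_mutex_unlock(&_lock); }
    // Added for this demonstration only: reports whether the mutex is free.
    bool TryLock() { return pthread_mutex_trylock(&_lock) == 0; }

private:
    pthread_mutex_t _lock;
};

class LockGuard {
public:
    explicit LockGuard(Mutex* m) : _m(m) { _m->Lock(); }
    ~LockGuard() { _m->UnLock(); }
    LockGuard(const LockGuard&) = delete;
    LockGuard& operator=(const LockGuard&) = delete;

private:
    Mutex* _m;
};

// Throws while the guard is alive; the destructor must still unlock.
void ThrowWhileLocked(Mutex& m) {
    LockGuard guard(&m);
    throw std::runtime_error("failure inside the critical section");
}

// Returns true if the mutex is free again after the exception propagated.
bool MutexReleasedAfterException() {
    Mutex m;
    try {
        ThrowWhileLocked(m);
    } catch (const std::runtime_error&) {
        // Stack unwinding has already run ~LockGuard at this point.
    }
    bool free_again = m.TryLock();
    if (free_again) m.UnLock();
    return free_again;
}
```

Had `ThrowWhileLocked` used a manual `Lock()` and `UnLock()` pair, the throw would skip the unlock and the mutex would stay held.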

A condition variable is responsible for notification, not validation

A condition variable must always be used together with a mutex and a condition check. The semantics of Wait are: release the lock, suspend the thread, and after receiving a signal, compete for the lock again before returning. What actually determines whether execution can continue is not the notification itself, but the condition expression checked again after wake-up.
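The Wait semantics described above can be sketched as a thin wrapper over `pthread_cond_t`. The method names `Wait`, `NotifyOne`, and `NotifyAll` follow the calls that appear in the article's snippets, but the class bodies, the `Native()` accessor, and the `Gate` demo are assumptions made for illustration.

```cpp
#include <pthread.h>
#include <cassert>

// Hypothetical Mutex wrapper; Native() exposes the handle that Cond needs.
class Mutex {
public:
    Mutex() {
        int rc = pthread_mutex_init(&_lock, nullptr);
        assert(rc == 0);
        (void)rc;
    }
    ~Mutex() { pthread_mutex_destroy(&_lock); }
    Mutex(const Mutex&) = delete;
    Mutex& operator=(const Mutex&) = delete;
    void Lock() { pthread_mutex_lock(&_lock); }
    void UnLock() { pthread_mutex_unlock(&_lock); }
    pthread_mutex_t* Native() { return &_lock; }

private:
    pthread_mutex_t _lock;
};

// Hypothetical Cond wrapper.
class Cond {
public:
    Cond() {
        int rc = pthread_cond_init(&_cond, nullptr);
        assert(rc == 0);
        (void)rc;
    }
    ~Cond() { pthread_cond_destroy(&_cond); }
    Cond(const Cond&) = delete;
    Cond& operator=(const Cond&) = delete;

    // Atomically releases m and sleeps; re-acquires m before returning.
    void Wait(Mutex& m) { pthread_cond_wait(&_cond, m.Native()); }
    void NotifyOne() { pthread_cond_signal(&_cond); }
    void NotifyAll() { pthread_cond_broadcast(&_cond); }

private:
    pthread_cond_t _cond;
};

struct Gate {
    Mutex mutex;
    Cond cond;
    bool ready = false;    // The condition itself
    bool observed = false; // Set by the waiter once it has seen ready == true
};

void* Waiter(void* arg) {
    Gate* g = static_cast<Gate*>(arg);
    g->mutex.Lock();
    while (!g->ready) {        // The predicate decides, not the notification
        g->cond.Wait(g->mutex);
    }
    g->observed = true;        // The lock is held again here
    g->mutex.UnLock();
    return nullptr;
}

bool RunGateDemo() {
    Gate g;
    pthread_t t;
    int rc = pthread_create(&t, nullptr, Waiter, &g);
    assert(rc == 0);
    (void)rc;
    g.mutex.Lock();
    g.ready = true;     // Change the condition under the lock...
    g.cond.NotifyOne(); // ...then notify
    g.mutex.UnLock();
    pthread_join(t, nullptr);
    return g.observed;
}
```

The demo works whether the waiter starts before or after the notification, because the waiter checks `ready` under the lock before it ever sleeps.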

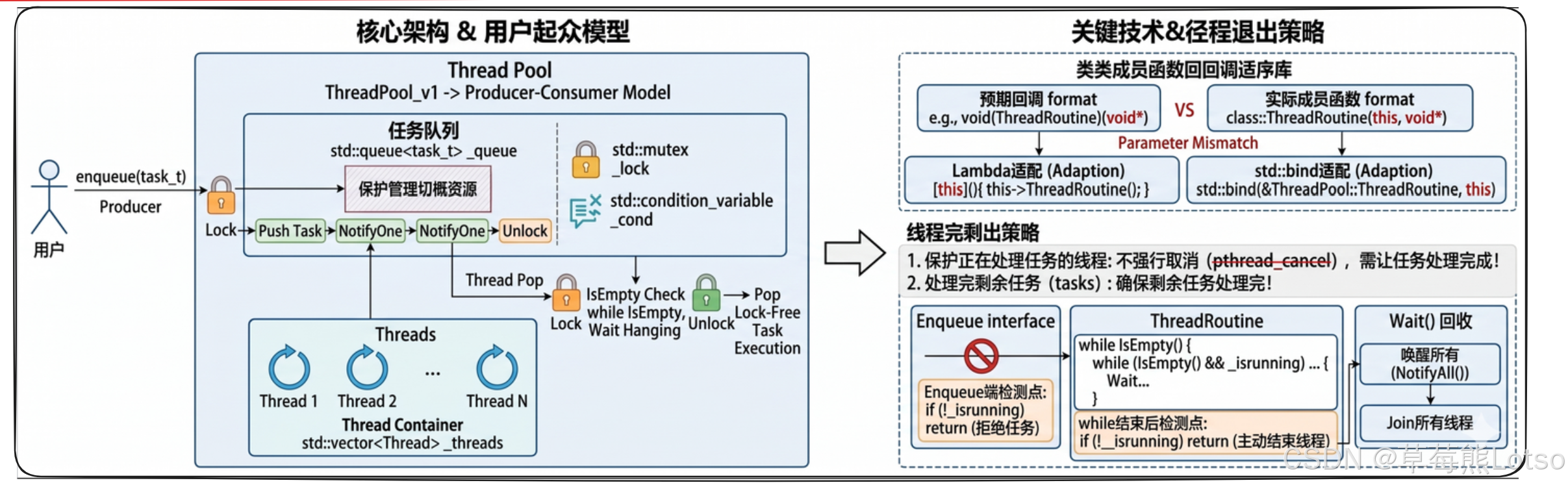

The core thread pool workflow can be divided into four steps: startup, submission, execution, and shutdown

During startup, the pool creates a fixed number of worker threads. During submission, producers lock and push tasks into the queue. During execution, workers compete for tasks. During shutdown, the pool updates its running flag and broadcasts a wake-up to all waiting threads, then joins them for cleanup.

    void Enqueue(const task_t& task) {
        LockGuard lock(&_mutex);
        if (!_isrunning) return; // Reject new tasks after shutdown starts
        _queue.push(task); // Push the task into the queue
        if (_sleeper_cnt > 0) {
            _cond.NotifyOne(); // Wake a worker only when sleepers exist to reduce unnecessary system calls
        }
    }

This code reflects a key producer-side optimization in thread pools: wake on demand.

Graceful shutdown matters more than forced cancellation

You should not use pthread_cancel to terminate worker threads forcefully. A task may be only half complete, resources may not yet be released, and state may not yet be committed. The essence of graceful shutdown is: stop accepting new work, drain what remains, then let all threads exit in an orderly way.
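The following sketch puts startup, submission, execution, and graceful shutdown together in one place. It is a simplified illustration rather than the article's implementation: `MiniPool` and `RunAndDrain` are hypothetical names, it calls the raw pthread API instead of the wrapper classes, it signals on every enqueue instead of tracking sleepers, and it assumes tasks do not throw.

```cpp
#include <pthread.h>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <functional>
#include <queue>
#include <utility>
#include <vector>

using task_t = std::function<void()>;

// Hypothetical minimal pool; the class and member names are illustrative only.
class MiniPool {
public:
    explicit MiniPool(std::size_t n) : _threads(n) {
        pthread_mutex_init(&_mutex, nullptr);
        pthread_cond_init(&_cond, nullptr);
        for (pthread_t& t : _threads) {
            int rc = pthread_create(&t, nullptr, &MiniPool::Entry, this);
            assert(rc == 0);
            (void)rc;
        }
    }
    ~MiniPool() {
        Stop();
        pthread_cond_destroy(&_cond);
        pthread_mutex_destroy(&_mutex);
    }
    MiniPool(const MiniPool&) = delete;
    MiniPool& operator=(const MiniPool&) = delete;

    // Returns false once shutdown has started.
    bool Enqueue(task_t task) {
        pthread_mutex_lock(&_mutex);
        const bool accepted = _running;
        if (accepted) {
            _queue.push(std::move(task));
            pthread_cond_signal(&_cond);
        }
        pthread_mutex_unlock(&_mutex);
        return accepted;
    }

    // Graceful shutdown: stop accepting, wake everyone, let workers drain, then join.
    void Stop() {
        pthread_mutex_lock(&_mutex);
        const bool first_call = _running;
        _running = false;
        pthread_cond_broadcast(&_cond);
        pthread_mutex_unlock(&_mutex); // Unlock before join, or workers could never finish
        if (!first_call) return;
        for (pthread_t& t : _threads) pthread_join(t, nullptr);
    }

private:
    static void* Entry(void* self) {
        static_cast<MiniPool*>(self)->Work();
        return nullptr;
    }
    void Work() {
        for (;;) {
            pthread_mutex_lock(&_mutex);
            while (_queue.empty() && _running) pthread_cond_wait(&_cond, &_mutex);
            if (_queue.empty()) { // Loop ended with an empty queue, so _running is false
                pthread_mutex_unlock(&_mutex);
                return;
            }
            task_t task = std::move(_queue.front());
            _queue.pop();
            pthread_mutex_unlock(&_mutex);
            task(); // Execute outside the critical section
        }
    }

    std::vector<pthread_t> _threads;
    std::queue<task_t> _queue;
    pthread_mutex_t _mutex;
    pthread_cond_t _cond;
    bool _running = true;
};

// Submits `count` tasks, stops the pool, and reports how many actually ran.
int RunAndDrain(int count) {
    std::atomic<int> done{0};
    MiniPool pool(4);
    for (int i = 0; i < count; ++i) {
        pool.Enqueue([&done] { ++done; });
    }
    pool.Stop();
    return done.load();
}
```

Because workers exit only when the pool has stopped and the queue is empty, every task accepted before `Stop()` still runs, while a task submitted afterwards is rejected.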

A singleton thread pool fits a process-wide scheduling center

In backend services, the thread pool is often a globally shared resource. In that case, introducing the singleton pattern avoids duplicate thread pool instances across modules and reduces both resource waste and management complexity.

The core logic of a double-checked locking singleton is to reduce unnecessary locking

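    static ThreadPool* GetInstance() {
        // _instance is assumed to be a std::atomic<ThreadPool*>; reading a plain
        // pointer outside the lock is a data race under the C++11 memory model.
        ThreadPool* p = _instance.load(std::memory_order_acquire);
        if (p == nullptr) { // First check reduces lock overhead after initialization
            LockGuard lock(&_singleton_mutex);
            p = _instance.load(std::memory_order_relaxed);
            if (p == nullptr) {
                p = new ThreadPool(); // Core guarantee: create the instance only once
                _instance.store(p, std::memory_order_release);
            }
        }
        return p;
    }

This code balances performance and uniqueness through “two checks plus one lock,” but modern C++ generally prefers a thread-safe function-local static object for singleton implementation.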
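For comparison, the function-local static alternative can be sketched as follows. Since C++11, the initialization of a function-local static runs exactly once even when several threads reach it concurrently. The `ThreadPool` class here is a hypothetical empty stand-in, the construction counter exists only so the behavior can be observed, and the demo uses `std::thread` instead of pthread for brevity.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical stand-in for the real pool; only the singleton access is shown.
class ThreadPool {
public:
    static ThreadPool& GetInstance() {
        static ThreadPool instance; // C++11: initialized once, thread-safely
        return instance;
    }
    ThreadPool(const ThreadPool&) = delete;
    ThreadPool& operator=(const ThreadPool&) = delete;

    // Demonstration helper: how many times the constructor has run.
    static int Constructions() { return Counter().load(); }

private:
    ThreadPool() { ++Counter(); }
    static std::atomic<int>& Counter() {
        static std::atomic<int> n{0};
        return n;
    }
};

// Calls GetInstance() from n threads and checks that they all got the same object.
bool AllThreadsSeeSameInstance(std::size_t n) {
    std::vector<ThreadPool*> seen(n, nullptr);
    std::vector<std::thread> threads;
    for (std::size_t i = 0; i < n; ++i) {
        // Each thread writes a distinct element, so the vector needs no lock here.
        threads.emplace_back([&seen, i] { seen[i] = &ThreadPool::GetInstance(); });
    }
    for (std::thread& t : threads) t.join();
    for (ThreadPool* p : seen) {
        if (p == nullptr || p != seen[0]) return false;
    }
    return n > 0;
}
```

This version needs no mutex, no atomic pointer, and no manual `new`, which is why it is usually the shorter and safer choice.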

Beyond the thread pool itself, you must understand the boundaries of concurrency safety

Thread safety is not the same as reentrancy. STL containers are not thread-safe by default: std::queue and std::vector both require external locking whenever one thread may modify the container while another thread reads or writes it. shared_ptr only guarantees thread safety for its reference count, not for the object it points to, and not for concurrent modification of the same shared_ptr instance.
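Both points can be seen in one short sketch. It uses `std::mutex` and `std::thread` rather than the article's pthread wrappers to stay compact, and `Stats` and `FillConcurrently` are hypothetical names. Each thread holds its own copy of the `shared_ptr`, which is safe, but every write to the pointed-to vector still goes through a lock.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared object: the mutex lives next to the data it protects.
struct Stats {
    std::mutex mutex;
    std::vector<int> samples;
};

// Runs `threads` writers that each append `per_thread` values; returns the final size.
std::size_t FillConcurrently(int threads, int per_thread) {
    auto stats = std::make_shared<Stats>();
    std::vector<std::thread> workers;
    for (int t = 0; t < threads; ++t) {
        // Capturing by value copies the shared_ptr; the reference count update is atomic.
        workers.emplace_back([stats, per_thread] {
            for (int i = 0; i < per_thread; ++i) {
                // The pointee gets no such guarantee, so push_back must be locked.
                std::lock_guard<std::mutex> lock(stats->mutex);
                stats->samples.push_back(i);
            }
        });
    }
    for (std::thread& w : workers) w.join();
    return stats->samples.size();
}
```

Removing the `lock_guard` line turns the concurrent `push_back` calls into a data race, which typically shows up as lost elements or a crash during reallocation.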

Another issue you must watch carefully is deadlock. The most common prevention strategies are enforcing a consistent lock acquisition order, minimizing critical sections, avoiding nested locks, and never performing long-running or blocking operations while holding a lock.
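A consistent lock acquisition order can be enforced mechanically, for example by always locking the object with the lower address first. The sketch below assumes that approach; `Account`, `Transfer`, and `RunOpposingTransfers` are hypothetical names chosen for the illustration, and it uses `std::mutex` for brevity.

```cpp
#include <cassert>
#include <functional>
#include <mutex>
#include <thread>

// Hypothetical resource guarded by its own mutex.
struct Account {
    std::mutex mutex;
    long balance = 0;
};

// Locks both accounts in address order, so two opposing transfers
// can never each hold one lock while waiting for the other.
void Transfer(Account& from, Account& to, long amount) {
    if (&from == &to) return; // Locking the same mutex twice would deadlock
    const bool from_first = std::less<Account*>()(&from, &to);
    Account& first = from_first ? from : to;
    Account& second = from_first ? to : from;
    std::lock_guard<std::mutex> lock_first(first.mutex);
    std::lock_guard<std::mutex> lock_second(second.mutex);
    from.balance -= amount; // Critical section kept as short as possible
    to.balance += amount;
}

// Two threads transfer in opposite directions; returns the combined balance.
long RunOpposingTransfers(int rounds) {
    Account a;
    Account b;
    a.balance = 1000;
    b.balance = 1000;
    std::thread t1([&a, &b, rounds] {
        for (int i = 0; i < rounds; ++i) Transfer(a, b, 1);
    });
    std::thread t2([&a, &b, rounds] {
        for (int i = 0; i < rounds; ++i) Transfer(b, a, 1);
    });
    t1.join();
    t2.join();
    return a.balance + b.balance;
}
```

If `Transfer` locked `from` and then `to` in argument order, the two threads could each take one mutex and wait forever for the other. `std::lock` or C++17 `std::scoped_lock` provide the same protection without a manual ordering rule.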

A high-frequency engineering checklist

- Is the shared queue always accessed under lock protection?

- Does Wait always pair with a while loop?

- Does task execution happen only after unlocking?

- Does Stop use broadcast wake-up?

- Do Wait and join avoid blocking while holding a lock?

This thread pool implementation fits several practical scenarios

If your tasks are short, stateless, and parallelizable—such as request handling, log flushing, asynchronous callbacks, and batch computation—this pthread-based thread pool is already highly practical. If you also need task priority, dynamic scaling, Future return values, exception isolation, and monitoring metrics, you should continue evolving toward an industrial-grade executor framework.

FAQ

Q1: Why must task execution in a thread pool happen outside the lock?

Because task execution usually takes much longer than dequeuing. If a task runs while holding the lock, other threads cannot enqueue or fetch tasks, and the thread pool effectively degenerates into serial execution, causing throughput to drop sharply.

Q2: Why should shutdown use NotifyAll instead of NotifyOne?

Because multiple worker threads may be blocked on the condition variable at the same time. If you wake only one thread, the others may sleep forever, and join may never complete. During shutdown, you must broadcast so that every thread can re-check the exit condition.

Q3: Is a double-checked locking singleton thread pool still recommended?

It is still worth understanding for interviews and historical implementations, but in modern C++, a function-local static singleton is generally preferred because the code is shorter, the thread-safety semantics are more stable, and maintainability is better.

[AI Readability Summary] This article reconstructs the implementation path of a Linux C++ thread pool for high-concurrency programming. It covers the pooling model, the producer-consumer architecture, pthread synchronization wrappers, task scheduling flow, graceful shutdown, singleton transformation, and key concerns around deadlocks and thread safety. It is especially useful for backend engineers, systems programmers, and interview preparation.