Docker handles image builds and single-host container execution, while Kubernetes handles cluster-level orchestration, scheduling, and self-healing. They do not replace each other. Instead, they form an upstream-downstream collaboration chain around OCI standards to solve environment consistency and operations at scale. Keywords: Docker, Kubernetes, containerd.

Technical Specifications Snapshot

| Parameter | Details |
|---|---|
| Core Topic | Responsibility boundaries and collaboration patterns between Docker and Kubernetes |
| Underlying Foundation | Linux namespaces, cgroups |
| Standards and Protocols | OCI, CRI |
| Runtime Status | Kubernetes v1.24+ commonly integrates with containerd and CRI-O |
| Common Languages | YAML, Shell, Dockerfile |
| Ecosystem Components | Docker Hub, Helm, Prometheus, Grafana |
| Star Count | Not provided in the source material, so this article does not speculate |
| Core Dependencies | Docker Engine, containerd, kubectl, Kubernetes API |

The Relationship Between Docker and Kubernetes Must Be Defined Precisely

Many teams treat Docker and Kubernetes as competing products, but that is a common misjudgment. Docker solves the problem of how to package an application and its dependencies into a runnable unit. Kubernetes solves the problem of how to manage those runnable units reliably across multiple nodes.

This division of responsibility makes them naturally complementary. Docker focuses more on development and delivery, while Kubernetes focuses more on production governance. One standardizes packaging, and the other handles scheduling at scale, which is why they often appear together in the same delivery pipeline.

Docker Works More Like a Container Factory, While Kubernetes Works More Like a Scheduling System

Docker excels at image builds, container startup, image distribution, and local debugging. The tools developers interact with most often are Dockerfile, docker build, and docker run.

Kubernetes does not invent its own container format. Instead, it manages resources such as Pods, Services, and Deployments through declarative configuration, placing containers into a cluster system that is observable, recoverable, and scalable.

    # Build an image: package code and dependencies into a distributable unit
    docker build -t demo-app:v1 .

    # Start a container locally: verify that the application can run
    docker run -d --name demo -p 8080:8080 demo-app:v1

These commands show Docker’s core responsibility in image building and single-host execution.

Both Technologies Share the Same Container Foundation

Docker and Kubernetes work well together not because their interfaces happen to match, but because both are built on Linux container primitives. Namespaces provide isolation, and cgroups provide resource limits. These are the underlying prerequisites that make containers possible.
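As a rough illustration of how cgroup-based limits surface at the Kubernetes level, the sketch below sets `resources` on a container. The Pod name `demo-limits` and the specific CPU and memory values are assumptions chosen for illustration; the image tag reuses the article's `demo-app:v1` example and would need to be pullable from the cluster nodes.

```yaml
# Hypothetical Pod, used only to illustrate resource settings
apiVersion: v1
kind: Pod
metadata:
  name: demo-limits          # illustrative name, not from the article
spec:
  containers:
    - name: demo
      image: demo-app:v1     # the article's example image tag
      resources:
        requests:            # what the scheduler reserves on a node
          cpu: "250m"
          memory: "128Mi"
        limits:              # upper bounds enforced on the node through cgroups
          cpu: "500m"
          memory: "256Mi"
```

Requests influence where the Pod is scheduled, while limits cap what the container may consume once it is running.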

Even more important are the OCI specifications. They standardize the image format and the runtime interface, so an image built by Docker can be run unchanged by any OCI-compliant runtime, including the runtimes that Kubernetes drives through the CRI, such as containerd and CRI-O.

AI Visual Insight: This diagram illustrates the layered path from image building to registry storage and then to cluster scheduling. Docker handles image production at the top layer, the image registry handles distribution in the middle layer, and Kubernetes uses a container runtime to start containers on cluster nodes at the bottom layer. Together, this reflects a three-stage responsibility model: build, distribute, and orchestrate.

Their Shared Goal Is to Reduce Deployment Complexity

Both Docker and Kubernetes address the most common problems in traditional deployments: inconsistent environments, manual deployment steps, low scaling efficiency, and poor resource utilization. Containerization standardizes the runtime environment, while orchestration automates the runtime workflow.

This is also why microservices can be adopted at scale. Without containers, services are hard to deliver consistently. Without orchestration, services are hard to govern reliably. In essence, the Docker and Kubernetes combination turns both delivery and operations into standardized workflows.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: demo-deployment
    spec:
      replicas: 3  # Specify the initial number of replicas
      selector:
        matchLabels:
          app: demo
      template:
        metadata:
          labels:
            app: demo
        spec:
          containers:
            - name: demo
              image: demo-app:v1  # Use the prebuilt image
              ports:
                - containerPort: 8080  # Expose the application port

This YAML example shows how Kubernetes manages application replicas and container state declaratively.

The Core Differences Appear in Management Scope and System Capabilities

The first difference is management scope. Docker is single-host by default, which makes it suitable for local development, test validation, and small deployments. Kubernetes targets clusters, which makes it suitable for cross-host scheduling, multi-instance governance, and highly available production environments.

The second difference is failure handling. Docker by itself does not provide full cluster self-healing. Kubernetes continuously compares the desired state with the actual state, automatically recreates failed Pods, and reschedules them onto healthy nodes.
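One way this self-healing is commonly configured at the container level is with probes. The fragment below is a minimal sketch that would sit under `spec.template.spec` of a Deployment; it assumes the application serves a health endpoint at `/healthz` on port 8080, which the article does not specify, and the timing values are illustrative.

```yaml
# Fragment of spec.template.spec in a Deployment; /healthz is an assumed endpoint
containers:
  - name: demo
    image: demo-app:v1
    ports:
      - containerPort: 8080
    livenessProbe:             # the kubelet restarts the container if this keeps failing
      httpGet:
        path: /healthz
        port: 8080
      initialDelaySeconds: 10  # illustrative timing values
      periodSeconds: 10
      failureThreshold: 3
    readinessProbe:            # a failing Pod is taken out of Service endpoints
      httpGet:
        path: /healthz
        port: 8080
      periodSeconds: 5
```

The liveness probe covers a hung process inside a running Pod, while the Deployment's replica count covers Pods that disappear entirely, for example when a node fails.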

Kubernetes v1.24+ No Longer Depends Directly on Docker Engine

This change is widely misunderstood. Kubernetes v1.24 removed dockershim, the adapter built into the kubelet for talking to Docker Engine, so the kubelet no longer uses Docker Engine directly as a runtime. Today, the mainstream approach is to connect to containerd or CRI-O through the CRI.

It is important to emphasize that this does not mean Docker has lost its value. Docker remains one of the most widely used image build tools. Kubernetes simply prefers lighter runtime implementations during the execution phase.

    # Scale the Deployment: increase replicas to 5
    kubectl scale deployment demo-deployment --replicas=5

    # Check Pod status: confirm the scaling result
    kubectl get pods -o wide

These commands demonstrate two of Kubernetes’ core strengths: scaling by changing the desired replica count, and cluster state visibility.

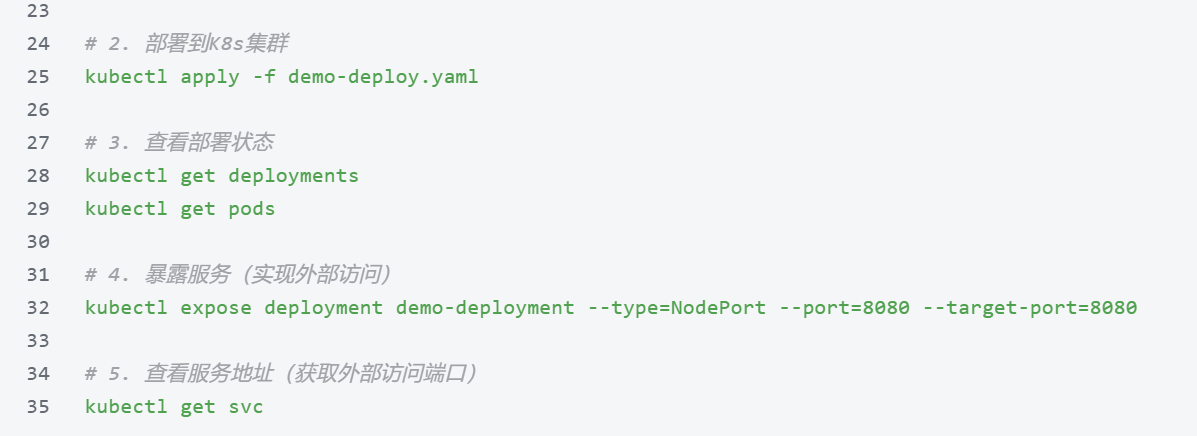

Production Environments Should Use Layered Collaboration Instead of a Single-Tool Mindset

A typical workflow looks like this: developers write a Dockerfile, the CI system builds the image and pushes it to a registry, and Kubernetes then uses resource objects such as Deployment to handle releases, rolling updates, rollbacks, and autoscaling.
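The release step of this workflow can be sketched as a Deployment with an explicit rolling update strategy. The registry host `registry.example.com`, the `v2` tag, and the `maxSurge`/`maxUnavailable` values are assumptions for illustration, not details from the article.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-deployment
spec:
  replicas: 3
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1          # at most one extra Pod during the update
      maxUnavailable: 0    # never drop below the desired replica count
  selector:
    matchLabels:
      app: demo
  template:
    metadata:
      labels:
        app: demo
    spec:
      containers:
        - name: demo
          # Hypothetical registry path; CI would push the new tag here
          image: registry.example.com/demo/demo-app:v2
          ports:
            - containerPort: 8080
```

Applying the manifest with a new image tag starts a rolling update, and `kubectl rollout undo deployment/demo-deployment` reverts to the previous revision if the new version misbehaves.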

For that reason, understanding the end-to-end chain is more important than debating which tool replaces the other. A more accurate way to describe the relationship is this: Docker is strong at delivery artifacts, containerd is strong at container execution, and Kubernetes is strong at managing desired state across a cluster.

Beginners Should Learn Docker First and Kubernetes Second

Start with Docker to build intuition around container images, port mapping, volume mounts, and network isolation. Once you move into Kubernetes, concepts such as Pods, image pulls, probes, and resource limits become much easier to understand.

When learning Kubernetes, prioritize Pods, Deployments, Services, ConfigMaps, Ingress, and Persistent Volumes. Do not get lost in the full ecosystem too early. First, master the main path: how to keep an application running reliably.
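As a starting point for the Service concept, the sketch below exposes Pods labeled `app: demo` inside the cluster. The Service name `demo-service` and port 80 are assumptions chosen for illustration; the target port matches the article's container port 8080.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: demo-service     # illustrative name, not from the article
spec:
  type: ClusterIP        # reachable only from inside the cluster
  selector:
    app: demo            # matches the Pod labels set by the Deployment
  ports:
    - protocol: TCP
      port: 80           # port the Service listens on
      targetPort: 8080   # containerPort of the demo container
```

Because the Service selects Pods by label rather than by name, it keeps routing traffic correctly as Pods are recreated or scaled.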

    # Check cluster nodes: confirm that scheduling targets are healthy
    kubectl get nodes

    # Check services: confirm that the access entry has been created successfully
    kubectl get svc

    # Check rollout history: provide a basis for rollback
    kubectl rollout history deployment/demo-deployment

This command set covers the most common inspection tasks in day-to-day Kubernetes operations.

FAQ

1. Will Docker be completely replaced by Kubernetes?

No. What changed is Docker Engine’s role at the Kubernetes runtime integration layer, not Docker’s value in image building and development delivery.

2. Do you have to install Docker to use Kubernetes?

No. Kubernetes only requires a CRI-compliant container runtime such as containerd or CRI-O. You can run Kubernetes without installing Docker Engine.

3. When is Docker alone enough, and when do you need Kubernetes?

Docker alone is usually enough for single-host applications, local development, and lightweight testing. When your system needs multi-node deployment, self-healing, rolling updates, autoscaling, and unified governance, you should use Kubernetes.
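To make the autoscaling point concrete, here is a minimal sketch of a HorizontalPodAutoscaler targeting the article's `demo-deployment`. The object name, replica bounds, and the 70% CPU target are illustrative assumptions. It also assumes that a metrics source such as metrics-server is installed and that the container declares a CPU request, since utilization is calculated against requests.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: demo-hpa                   # illustrative name, not from the article
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: demo-deployment
  minReplicas: 3                   # assumed lower bound
  maxReplicas: 10                  # assumed upper bound
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # scale out when average CPU use exceeds 70% of requests
```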

Key Takeaway

This article systematically explains the shared foundation, core differences, and collaboration chain between Docker and Kubernetes. It focuses on key concepts such as container engines, OCI standards, CRI and containerd, cluster orchestration, self-healing, and autoscaling, helping developers quickly identify technical boundaries and make better production decisions.