GPT-5.5 is a fully retrained model built for agentic workloads. Its core upgrades include Agent-First training, native omnimodality, parallel tool calling, and a 1M-token context window. It primarily addresses pain points in complex task planning, code repair, and long-context reasoning. Keywords: GPT-5.5, Agent, Thinking.

Technical specifications provide a quick snapshot

| Parameter | Details |
|---|---|
| Model Name | GPT-5.5 |
| Release Date | 2026-04-23 |
| Core Positioning | Fully retrained, agent-first model |
| Primary Capabilities | Multi-step planning, tool calling, code repair, long-context reasoning |
| Context Window | 1M tokens |
| Reasoning Modes | low / medium / high / auto |
| Representative Benchmarks | Terminal-Bench 2.0 82.7%, SWE-bench Verified 74.4% |
| API Pricing | Input $5 per 1M tokens, output $30 per 1M tokens |
| Core Dependencies | Transformer, tool-calling framework, Codex integration |
| Supported Modalities | Text, image, audio (native omnimodal) |

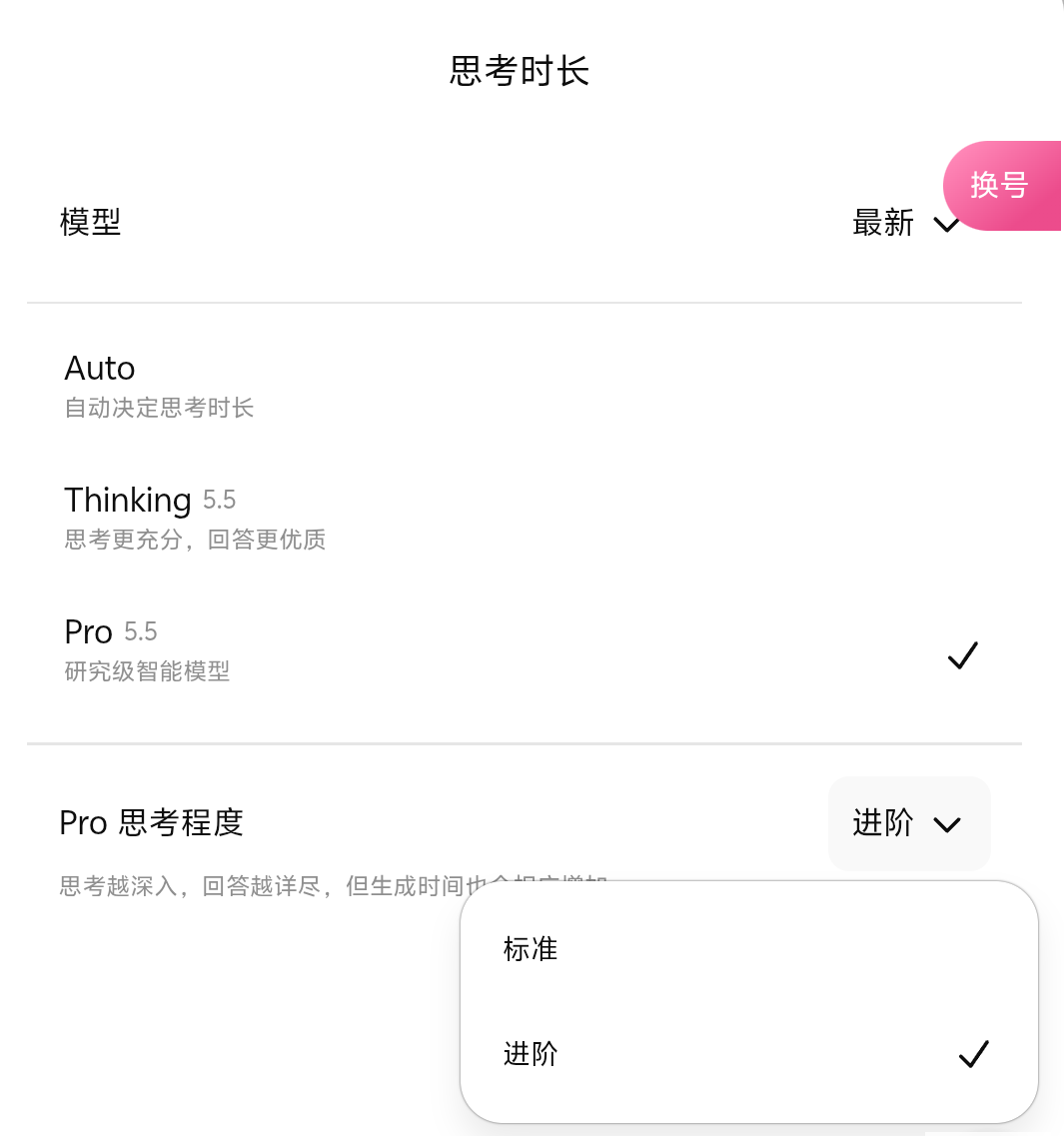

AI Visual Insight: The image illustrates GPT-5.5’s information flow and capability aggregation entry points. It emphasizes the model publisher, version identity, and productized framing for multi-capability integration. This type of diagram is typically used to show that the model has evolved from single-turn Q&A into a unified task execution framework.

GPT-5.5’s most important upgrade is not parameter growth but a shift in training paradigm

What stands out about GPT-5.5 is that it is a full retraining rather than a routine iteration. It does not rely on incremental updates to GPT-5.4’s parameters. Instead, it redesigns the training data mix, alignment objectives, and capability structure from the ground up.

The value of this retraining strategy is that it is better suited to restructuring capabilities for agent scenarios. Traditional models are optimized to answer questions. GPT-5.5 places greater emphasis on completing tasks, including decomposing goals, selecting tools, checking intermediate results, and recovering from errors.
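
The task-completion loop described above (decompose the goal, pick a tool, check the intermediate result, recover from errors) can be sketched in plain Python. This only illustrates the control flow on the caller's side and says nothing about how GPT-5.5 is trained or implemented. `run_step`, `flaky_tool`, and the retry limit are hypothetical names chosen for the example.

```python
from typing import Callable

def run_step(tool: Callable[[], str], check: Callable[[str], bool], max_retries: int = 2) -> str:
    """Run one tool, verify its output, and retry on failure (hypothetical helper)."""
    last_error = "no attempts made"
    for attempt in range(max_retries + 1):
        try:
            result = tool()
            if check(result):          # Check the intermediate result
                return result
            last_error = f"check failed on attempt {attempt + 1}"
        except RuntimeError as exc:    # Recover from a tool error by retrying
            last_error = str(exc)
    raise RuntimeError(f"step failed: {last_error}")

# Hypothetical tool that fails once before succeeding
calls = {"count": 0}

def flaky_tool() -> str:
    calls["count"] += 1
    if calls["count"] == 1:
        raise RuntimeError("transient failure")
    return "tests passed"

# Decomposed goal: each step pairs a tool with a check on its output
steps = [
    (lambda: "found suspect file", lambda out: "found" in out),
    (flaky_tool, lambda out: out == "tests passed"),
]
results = [run_step(tool, check) for tool, check in steps]
print(results)
```

The second step fails on its first call and succeeds on the retry, which is the "recover from errors" behavior the paragraph refers to.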

Full retraining directly changes the capability boundary

Compared with incremental training, full retraining allows the model to search for better solutions across a larger parameter space. This is especially useful for breaking past the previous model’s ceiling in coding, planning, and reliability. The reported improvement over GPT-5.4 on agentic tasks is about 31%, a figure that matches the relative Terminal-Bench 2.0 gain in the benchmark table below (63.1% to 82.7%).

```python
from openai import OpenAI

client = OpenAI()  # Reads OPENAI_API_KEY from the environment

response = client.chat.completions.create(
    model="gpt-5.5",
    messages=[
        {"role": "system", "content": "You are a code repair assistant."},
        {"role": "user", "content": "Analyze the concurrency bug in this repository and provide a fix recommendation."},
    ],
    reasoning_effort="medium",  # Set medium reasoning depth to balance speed and quality
)

print(response.choices[0].message.content)  # Print only the assistant's reply text
```

This example shows the basic way to enable GPT-5.5’s standard reasoning mode in the API.

Native omnimodality and parallel tool calling jointly serve agent scenarios

GPT-5.5 uses a native omnimodal architecture. Rather than simply attaching a vision encoder outside the language model, it unifies text, image, and audio earlier within the same Transformer computation graph. The benefit is reduced loss in cross-modal mapping.

For developers, this architecture is better suited to tasks such as screenshot-based debugging, voice instructions, and mixed document-plus-code input. It enables the model to build finer-grained cross-modal associations within a single inference pass, instead of relying on a chain of separate modules.
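
For screenshot-based debugging, a request would combine text and an image in a single user message. The sketch below assumes GPT-5.5 accepts the same content-part format that the Chat Completions API already uses for image input; that is an assumption, not something the source confirms. `build_screenshot_message` is a hypothetical helper, and the bytes are a placeholder rather than a real PNG.

```python
import base64

def build_screenshot_message(prompt: str, png_bytes: bytes) -> dict:
    """Build one user message that mixes text and an inline PNG screenshot."""
    encoded = base64.b64encode(png_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": f"data:image/png;base64,{encoded}"}},
        ],
    }

# Placeholder bytes stand in for a real screenshot file
message = build_screenshot_message("What is wrong in this stack trace?", b"\x89PNG placeholder")
print(message["content"][0]["text"])
```

The resulting dictionary can be passed as one element of the `messages` list, so the text and the screenshot reach the model in the same request.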

Parallel tool calling is a core task-execution feature in GPT-5.5

Another key capability is parallel tool calling. GPT-5.5 no longer assumes that all external tools must execute serially. It can identify which subtasks can be launched in parallel, such as code search, log reading, and test execution, significantly reducing total latency.

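```python
# Sketch: run independent tool calls concurrently (the tool body is a placeholder)
from concurrent.futures import ThreadPoolExecutor

def run_tool(name: str) -> str:
    return f"{name}: done"  # Placeholder for a real tool invocation

plan = [
    "search_repo",  # Search suspicious code locations
    "read_logs",    # Read runtime logs
    "run_tests",    # Run tests to validate the hypothesis
]

# No step depends on another, so all three are submitted at once
with ThreadPoolExecutor() as pool:
    results = list(pool.map(run_tool, plan))

print(results)
```

This snippet illustrates the idea behind parallel tool calling: steps with no prerequisite dependencies are dispatched together instead of one after another.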
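
Deciding which subtasks can start together amounts to grouping steps by their dependencies. The sketch below shows one way to express that on the client side. It is not a description of GPT-5.5’s internal scheduling. The `propose_fix` step and the `deps` mapping are hypothetical additions to the three tools named in this section.

```python
def parallel_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave has all prerequisites done."""
    remaining = {task: set(pre) for task, pre in deps.items()}
    done: set[str] = set()
    waves: list[list[str]] = []
    while remaining:
        # A task is ready once all of its prerequisites have completed
        ready = sorted(t for t, pre in remaining.items() if pre <= done)
        if not ready:
            raise ValueError("dependency cycle detected")
        waves.append(ready)
        done.update(ready)
        for task in ready:
            del remaining[task]
    return waves

deps = {
    "search_repo": set(),
    "read_logs": set(),
    "run_tests": set(),
    "propose_fix": {"search_repo", "read_logs", "run_tests"},  # Hypothetical follow-up step
}
waves = parallel_waves(deps)
print(waves)
```

Tasks inside one wave can run concurrently, while the waves themselves run in order. Here the three investigation tools form the first wave and the hypothetical fix step waits for all of them.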

Thinking mode turns reasoning depth into an explicit product feature

GPT-5.5’s Thinking mode is the core interface that separates it from ordinary chat models. It explicitly exposes reasoning-time compute to users, allowing them to control depth with low, medium, high, or auto.

Standard thinking works well for everyday Q&A, document drafting, and general programming. Advanced thinking targets high-complexity tasks such as mathematical proofs, difficult debugging, and research analysis, emphasizing multi-path reasoning, reverse validation, and intermediate error correction.

Standard and advanced thinking should be selected by task complexity

| Mode | Characteristics | Best For |
|---|---|---|
| low | Fast response, shallow reasoning | Everyday Q&A, summarization, lightweight coding |
| medium | Balanced speed and quality | General development, analysis, document generation |
| high | Deep reasoning and error correction | Mathematics, complex debugging, research tasks |
| auto | Automatic routing | Mixed scenarios with uncertain complexity |
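
The table can be turned into a small lookup that an application consults before each request. This is a sketch under stated assumptions: the task-type labels and the `pick_reasoning_effort` helper are hypothetical, and the `auto` fallback simply follows the table rather than verified API behavior.

```python
# Mapping from hypothetical task-type labels to the modes listed in the table
EFFORT_BY_TASK = {
    "everyday_qa": "low",
    "summarization": "low",
    "general_development": "medium",
    "document_generation": "medium",
    "mathematics": "high",
    "complex_debugging": "high",
}

def pick_reasoning_effort(task_type: str) -> str:
    """Return the mode for a known task type, else fall back to auto."""
    return EFFORT_BY_TASK.get(task_type, "auto")

print(pick_reasoning_effort("complex_debugging"))
print(pick_reasoning_effort("unclassified_workload"))
```

Keeping the mapping in one place makes it easy to adjust once real latency and quality measurements are available for a given workload.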

```python
from openai import OpenAI

client = OpenAI()  # Reads OPENAI_API_KEY from the environment

response = client.chat.completions.create(
    model="gpt-5.5",
    messages=[{"role": "user", "content": "Prove the time complexity of this algorithm and analyze its boundary conditions."}],
    reasoning_effort="high",  # Enable high-intensity reasoning for complex tasks
)
```

This example reflects the typical way to enable advanced thinking for complex analytical tasks.

Benchmark results show that GPT-5.5’s advantage is concentrated in coding agents

Across several public benchmarks, GPT-5.5’s most prominent gains do not appear in general chat, but in task execution. It reaches 82.7% on Terminal-Bench 2.0 and 74.4% on SWE-bench Verified, indicating meaningful improvements in terminal operations and bug fixing in real repositories.

The importance of these metrics is that they are much closer to production environments than traditional Q&A datasets. The model must not only say the right thing, but also get the job done, including reading the environment, analyzing dependencies, executing steps, and fixing errors.

Key benchmark comparisons highlight its engineering value

| Benchmark | GPT-5.5 | GPT-5.4 | Conclusion |
|---|---|---|---|
| Terminal-Bench 2.0 | 82.7% | 63.1% | Major gains in agentic terminal workflows |
| SWE-bench Verified | 74.4% | 53.0% | Significant jump in real-world software repair |
| GDPval | 84.9% | – | Strong knowledge-work performance |
| GeneBench | 25.0% | 19.0% | Further gains in scientific reasoning |
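
Absolute and relative gains tell different stories, so it helps to compute both from the table. The short script below uses only the scores listed above and leaves out GDPval because no GPT-5.4 figure is given. The `gains` helper is a name introduced for this example.

```python
# (GPT-5.5 score, GPT-5.4 score) in percent, copied from the benchmark table
scores = {
    "Terminal-Bench 2.0": (82.7, 63.1),
    "SWE-bench Verified": (74.4, 53.0),
    "GeneBench": (25.0, 19.0),
}

def gains(new: float, old: float) -> tuple[float, float]:
    """Return (absolute gain in points, relative gain in percent)."""
    return new - old, (new - old) / old * 100

for name, (new, old) in scores.items():
    absolute, relative = gains(new, old)
    print(f"{name}: +{absolute:.1f} points, +{relative:.1f}% relative")
```

On these numbers the Terminal-Bench 2.0 row works out to a relative gain of roughly 31%, while SWE-bench Verified shows the largest relative jump at roughly 40%.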

GPT-5.5 Pro targets high-precision research and mission-critical scenarios

GPT-5.5 Pro is not simply a more expensive version. It places stronger emphasis on consistency, calibration, and output quality in settings that tolerate almost no errors. Typical use cases include financial analysis, legal review, scientific reasoning, and critical code auditing.

Its key differences are a longer reasoning budget, stronger self-checking mechanisms, and more stable output consistency. In scenarios where errors are costly, the Pro version often delivers more value than a simple token-price comparison suggests.

Higher pricing reflects a higher task completion rate

Although GPT-5.5 API pricing is roughly double that of GPT-5.4, total task cost is not necessarily higher if the model reduces retries, rollbacks, and manual review. The argument is strongest for high-value tasks such as software repair and enterprise analysis, where a failed attempt costs far more than the tokens it consumed.
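
The pricing argument can be made concrete with a back-of-the-envelope calculation. The sketch below uses the GPT-5.5 prices from the specification table. Everything else is an assumption for illustration: the GPT-5.4 prices are set to exactly half, the token counts are invented, and the success rates are hypothetical placeholders, not measured values. It also models retries as independent attempts and ignores manual review cost.

```python
def cost_per_attempt(input_tokens: int, output_tokens: int, in_price: float, out_price: float) -> float:
    """Dollar cost of one attempt, with prices quoted per 1M tokens."""
    return input_tokens / 1e6 * in_price + output_tokens / 1e6 * out_price

def expected_cost_per_success(attempt_cost: float, success_rate: float) -> float:
    """Expected spend until the first success, assuming independent retries."""
    return attempt_cost / success_rate

# GPT-5.5 prices come from the spec table; GPT-5.4 prices assume exactly half
new_attempt = cost_per_attempt(200_000, 20_000, in_price=5.0, out_price=30.0)
old_attempt = cost_per_attempt(200_000, 20_000, in_price=2.5, out_price=15.0)

# Success rates are hypothetical placeholders, not measured values
new_total = expected_cost_per_success(new_attempt, success_rate=0.80)
old_total = expected_cost_per_success(old_attempt, success_rate=0.35)

print(f"GPT-5.5: ${new_total:.2f} per solved task")
print(f"GPT-5.4: ${old_total:.2f} per solved task")
```

Under these invented inputs the model with double the token price ends up slightly cheaper per solved task. The result flips if the gap in success rates is smaller, so the comparison should be rerun with success rates measured on your own workload.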

GPT-5.5 and Claude Opus 4.7 are now competing through capability specialization

Based on public data, GPT-5.5 leads Claude Opus 4.7 on Terminal-Bench 2.0, making it better suited to coding agents, multi-tool orchestration, and terminal-centric workflows. Claude still maintains strengths in desktop interaction, long-form conversational experience, and creative expression.

This suggests that industry competition is shifting from who is more general-purpose to who is stronger in specific workflows. For developers, model selection should not depend on leaderboard rankings alone, but on task type and integration pattern.

FAQ

1. Why is GPT-5.5’s improvement over GPT-5.4 so significant?

Because it uses full retraining rather than incremental fine-tuning, it can reorganize training objectives, data distribution, and alignment strategy around agent scenarios. That especially improves planning, tool use, and code repair.

2. When should you enable high-level Thinking?

You should use high when the task requires multi-step derivation, strict verification, or has a high cost of failure, such as complex debugging, mathematical proofs, research analysis, or legal review. For ordinary Q&A and document generation, medium is usually more cost-effective.

3. Is GPT-5.5 worth replacing older models with in production?

If your workload depends on code repair, terminal automation, long-context analysis, or multi-tool coordination, it is worth prioritizing for evaluation. If your main use case is low-cost text generation, you should weigh pricing, latency, and task success rate together.

Core summary captures GPT-5.5’s technical value and deployment boundaries

This article reconstructs the core picture of GPT-5.5, focusing on full retraining, native omnimodality, the Agent-First training paradigm, Thinking mode, the Pro version, Terminal-Bench and SWE-bench performance, pricing, and competitive comparisons. The goal is to help developers quickly understand its technical value and practical deployment limits.