This article focuses on how mainstream AI coding models diverged in capability as of April 2026: GPT-5.5 excels at terminal automation and autonomous planning, Claude Opus 4.7 leads in high-precision engineering and visual understanding, and Gemini 3.1 Pro stands out in multimodal long-context tasks. It addresses the core developer question: which model should you choose for which task? Keywords: AI coding, model selection, SWE-Bench.

The technical specification snapshot clarifies each model’s positioning

| Dimension | GPT-5.5 | Claude Opus 4.7 | Gemini 3.1 Pro | Grok 4.3 |
|---|---|---|---|---|
| Vendor | OpenAI | Anthropic | Google | xAI |
| Primary Form | General-purpose foundation model + Codex agent | General-purpose foundation model | Multimodal foundation model | Real-time retrieval / voice model |
| Languages | Multilingual | Multilingual | Multilingual | Multilingual |
| Protocol / Access | API, agent toolchain | API, tool calling | API, multimodal interfaces | API, real-time interaction |
| Public Representative Metric | Terminal-Bench 2.0 82.7% | SWE-Bench Verified 87.6% | ARC-AGI-2 77.1% | Autonomous customer support resolution rate 70% |
| GitHub Stars | Not disclosed | Not disclosed | Not disclosed | Not disclosed |
| Core Dependencies | Codex, terminal/browser tools | Long context, visual input | Native multimodality, long context | Real-time web access, voice system |

This update cycle turns the “best model” question into a routing problem

The April 2026 release cycle was extremely dense, but the conclusion is straightforward: coding models no longer compete for single-model dominance. They are entering a phase of task specialization. What developers actually need is not a bet on one winner, but a model allocation strategy based on task type.

The source material shows that GPT-5.5 is deeply integrated with Codex, Claude Opus 4.7 continues to strengthen software engineering precision, and Gemini 3.1 Pro and Grok 4.3 differentiate themselves in multimodality and real-time interaction respectively. For teams, this means the evaluation standard must evolve from “can it write code?” to “which workflow delivers the best cost efficiency and stability?”

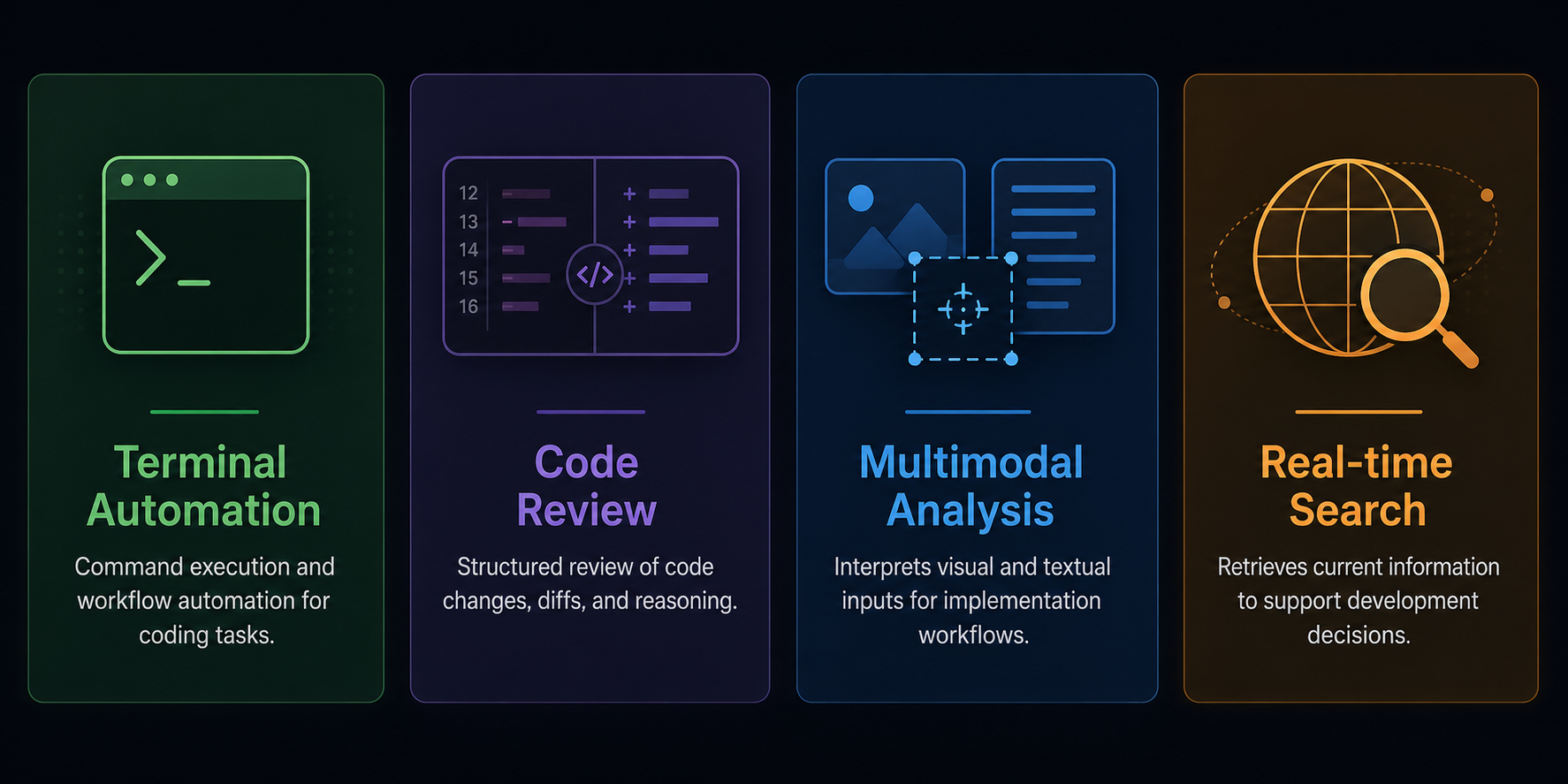

AI Visual Insight: This image supports the model comparison theme. Its core message is multi-model competition and capability stratification. In technical communication, this kind of hero visual usually emphasizes model brands side by side, version milestones, and competitive relationships, making it an effective visual anchor for model selection overview documents.

GPT-5.5 functions more like an agentic programming foundation optimized for terminal workflows

GPT-5.5’s standout advantage is not a single benchmark score, but the completeness of its “instruction-to-execution” chain. The most important data point here is its 82.7% score on Terminal-Bench 2.0, which suggests a strong advantage in command-line tasks, script orchestration, and environment operations.

It also folds Codex from a standalone product line into a unified architecture. That means model reasoning, tool calling, browser control, and document handling are connected systematically. For DevOps, automated testing, batch refactoring, and agentic development, this kind of integrated capability often matters more than a standalone code generation score.

```python
benchmarks = {
    "terminal_automation": "GPT-5.5",  # Prefer GPT-5.5 for terminal automation
    "strict_refactor": "Claude Opus 4.7",  # Prefer Claude for strict refactoring
    "multimodal_docs": "Gemini 3.1 Pro",  # Prefer Gemini for mixed image-text analysis
}


def pick_model(task_type: str) -> str:
    # Use hybrid routing when no match is found
    return benchmarks.get(task_type, "Hybrid Routing")
```

This code snippet demonstrates a minimal task-to-model mapping strategy.

Claude Opus 4.7 maintains a leading position in high-precision engineering tasks

Claude Opus 4.7’s core advantage lies in engineering correctness and instruction adherence. Its 87.6% score on SWE-Bench Verified and 64.3% on SWE-Bench Pro indicate stronger stability in real software bug fixing, long-chain changes, and complex project understanding.

Its behavioral shift is even more noteworthy: it no longer over-infers user intent and instead follows literal requirements more strictly. In production environments, this directly affects patch reliability, tool-calling error rates, and multi-step task completion rates.

At the same time, Opus 4.7 raises its visual input ceiling to a maximum long edge of 2576 pixels. That gives it more practical value in UI reconstruction, complex screenshot parsing, and chart proofreading. For front-end, testing, and product engineering collaboration workflows, this is a significant advantage.
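To make the long-edge ceiling concrete, the arithmetic for fitting a screenshot under it can be sketched as follows. This is a minimal sketch, not a description of how any API resizes images: the function name `fit_long_edge` is hypothetical, and the only number taken from the text above is the 2576-pixel limit. Whether a given client or endpoint downsamples oversized images automatically is not stated here, so the helper only computes target dimensions.

```python
MAX_LONG_EDGE = 2576  # long-edge ceiling cited above for Opus 4.7


def fit_long_edge(width: int, height: int,
                  max_long_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side fits the limit.

    Hypothetical helper for illustration; images already within the limit
    are returned unchanged and are never upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    scale = max_long_edge / long_edge
    # Round to the nearest pixel, keeping at least 1 px on the short side.
    return max(1, round(width * scale)), max(1, round(height * scale))


if __name__ == "__main__":
    print(fit_long_edge(3840, 2160))  # a 4K screenshot exceeds the limit
    print(fit_long_edge(1280, 720))   # already small enough, returned as-is
```

Computing the target size up front lets a pipeline decide whether to crop a region of interest instead of shrinking the whole screenshot, which matters when small UI text has to stay legible.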

Gemini 3.1 Pro and Grok 4.3 occupy the multimodal and real-time interaction layers respectively

Gemini 3.1 Pro does not win by topping a pure coding leaderboard. Its real advantage is native handling of text, images, audio, and video, combined with ultra-long context for cross-document reasoning. If the task input itself is highly heterogeneous (for example, design mockups, PDFs, charts, and meeting audio), Gemini’s routing priority should increase substantially.
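One way to turn "highly heterogeneous input" into a routing signal is to count the distinct modalities among a task's attachments. The sketch below is illustrative only: the suffix table, the function names, and the threshold of three modalities are all assumptions chosen for the example, not values from any vendor guidance.

```python
from pathlib import Path

# Assumed mapping from file suffix to modality; extend as needed.
MODALITY_BY_SUFFIX = {
    ".png": "image", ".jpg": "image", ".jpeg": "image",
    ".pdf": "document",
    ".mp3": "audio", ".wav": "audio",
    ".mp4": "video",
    ".txt": "text", ".md": "text",
}


def modalities(filenames: list[str]) -> set[str]:
    """Return the set of modalities found; unrecognized suffixes map to 'unknown'."""
    return {
        MODALITY_BY_SUFFIX.get(Path(name).suffix.lower(), "unknown")
        for name in filenames
    }


def prefers_multimodal_model(filenames: list[str], min_modalities: int = 3) -> bool:
    """True when the input mixes at least `min_modalities` known modalities.

    The threshold is an assumption for illustration; tune it on real traffic.
    """
    known = modalities(filenames) - {"unknown"}
    return len(known) >= min_modalities


if __name__ == "__main__":
    print(prefers_multimodal_model(["mockup.png", "spec.pdf", "standup.mp3"]))
    print(prefers_multimodal_model(["main.py", "notes.txt"]))
```

A suffix check is deliberately crude. A production router would more likely inspect MIME types or content, but the counting logic stays the same.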

Grok 4.3 is currently better viewed as a complementary model. It provides differentiated value in voice interaction and real-time information retrieval, especially for scenarios that require fast access to the latest vulnerabilities, cloud service changes, or external documentation updates.

```python
def route_request(task: dict) -> str:
    # .get() treats a missing flag as False instead of raising KeyError
    if task.get("needs_terminal"):
        return "GPT-5.5"  # Prioritize GPT-5.5 for terminal execution
    if task.get("needs_pixel_vision"):
        return "Claude Opus 4.7"  # Prioritize Claude for high-resolution visual understanding
    if task.get("needs_multimodal_context"):
        return "Gemini 3.1 Pro"  # Prioritize Gemini for long-context multimodal tasks
    return "Grok 4.3"  # Default to Grok as a real-time retrieval supplement
```

This code snippet illustrates a common rule-based routing pattern for production environments.

Benchmark scores provide direction, but they cannot replace real-world model selection

Public benchmarks are useful, but only when interpreted within the scope of their test definitions. For example, GPT-5.5’s Terminal-Bench 2.0 and Claude’s SWE-Bench Verified do not measure the same type of problem, so they cannot support a simple conclusion about which model is universally stronger.

A more reliable approach is to split tasks into four categories: terminal execution, engineering remediation, multimodal analysis, and real-time retrieval. Then bind each category to four metrics: success rate, latency, token cost, and manual rework rate. Only then do the results provide meaningful guidance for business routing decisions.
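The category-by-metric binding described above can be sketched as a small aggregation over logged runs. This is a minimal sketch under stated assumptions: the `RunRecord` fields and the `summarize` function are hypothetical names, average token count stands in for token cost (multiply by your own per-token price), and the sample records are made-up placeholders rather than measured results.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunRecord:
    """One logged task attempt (hypothetical schema for illustration)."""
    category: str        # e.g. "terminal_execution", "engineering_remediation"
    model: str
    succeeded: bool
    latency_s: float
    tokens: int
    needed_rework: bool  # a human had to fix or redo the output


def summarize(records: list[RunRecord]) -> dict:
    """Group runs by (category, model) and compute the four metrics."""
    groups = defaultdict(list)
    for record in records:
        groups[(record.category, record.model)].append(record)

    summary = {}
    for key, runs in groups.items():
        summary[key] = {
            "success_rate": mean([1.0 if r.succeeded else 0.0 for r in runs]),
            "avg_latency_s": mean([r.latency_s for r in runs]),
            "avg_tokens": mean([r.tokens for r in runs]),
            "rework_rate": mean([1.0 if r.needed_rework else 0.0 for r in runs]),
        }
    return summary


if __name__ == "__main__":
    # Placeholder data, not benchmark results.
    sample = [
        RunRecord("terminal_execution", "model-a", True, 12.0, 1000, False),
        RunRecord("terminal_execution", "model-a", False, 20.0, 3000, True),
    ]
    for key, metrics in summarize(sample).items():
        print(key, metrics)
```

Keeping the grouping key as `(category, model)` means the same table answers both "which model is best for this category" and "where does this model underperform", which is the comparison a routing decision needs.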

The practical recommendation for teams is to build a “primary model + fallback model + routing layer” structure

If your team focuses on automated development, script execution, and agentic workflows, GPT-5.5 can serve as the primary model. If your core need is complex repository refactoring, strict review, and high-precision delivery, Claude Opus 4.7 is a better engineering-first default.

If your product workflow depends heavily on mixed text-and-image inputs, let Gemini 3.1 Pro handle the multimodal entry point. Grok 4.3 is better attached to real-time queries, voice collaboration, or web-connected verification as an enhancement layer rather than the only primary model.

```python
model_stack = {
    "primary": "Claude Opus 4.7",  # Primary engineering model
    "secondary": "GPT-5.5",  # Fallback for terminal and agent tasks
    "multimodal": "Gemini 3.1 Pro",  # Dedicated multimodal model
    "realtime": "Grok 4.3",  # Supplement for real-time retrieval
}
```

This code snippet provides an easy-to-implement skeleton for a multi-model toolchain.

FAQ: structured questions and answers

Q: If I can subscribe to only one model, which one should I choose first?

A: If your work centers on terminal automation, script execution, and agentic development, prioritize GPT-5.5. If your core workload is complex codebase maintenance, precise refactoring, and strict instruction following, prioritize Claude Opus 4.7.

Q: Why can’t I rely only on benchmark leaderboard rankings?

A: Because different benchmarks define different problem spaces. Terminal-Bench is closer to environment operations, SWE-Bench is closer to real software remediation, and ARC-AGI-2 emphasizes reasoning. Leaderboards reveal partial capability, but they cannot replace scenario-based validation.

Q: What is the minimum viable setup for multi-model collaboration?

A: At minimum, prepare one primary model, one fallback model, and one rule-based routing layer. The primary model handles high-frequency tasks, the fallback model handles failure recovery, and the routing layer distributes requests by task type. This can significantly reduce interruption risk and rework cost.
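The minimum setup in this answer can be sketched in a few lines. This is an illustrative skeleton, not any vendor's SDK: `ModelCall` is a hypothetical stand-in for whatever client function sends a prompt to a model, and the stub functions in the demo exist only to show the failure-recovery path.

```python
from typing import Callable, Dict, Tuple

# Hypothetical signature: takes a prompt, returns the model's text output.
ModelCall = Callable[[str], str]


def run_with_fallback(prompt: str, primary: ModelCall, fallback: ModelCall) -> str:
    """Try the primary model; on any error, retry once with the fallback."""
    try:
        return primary(prompt)
    except Exception:
        # A real system would catch narrower error types and log the failure.
        return fallback(prompt)


def make_router(routes: Dict[str, Tuple[ModelCall, ModelCall]],
                default: Tuple[ModelCall, ModelCall]) -> Callable[[str, str], str]:
    """Build a rule-based router mapping task type to a (primary, fallback) pair."""
    def route(task_type: str, prompt: str) -> str:
        primary, fallback = routes.get(task_type, default)
        return run_with_fallback(prompt, primary, fallback)
    return route


if __name__ == "__main__":
    def flaky_model(prompt: str) -> str:   # stub that simulates an outage
        raise RuntimeError("service unavailable")

    def steady_model(prompt: str) -> str:  # stub that always answers
        return f"handled: {prompt}"

    route = make_router({"refactor": (flaky_model, steady_model)},
                        default=(steady_model, flaky_model))
    print(route("refactor", "rename module"))  # served by the fallback
```

Passing models in as plain callables keeps the routing layer independent of any particular provider client, so swapping the primary and fallback is a configuration change rather than a code change.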

AI Readability Summary: Based on public benchmarks and product updates from April 2026, this article compares GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and Grok 4.3 across terminal automation, complex engineering, multimodal understanding, and real-time retrieval, then provides scenario-based model selection guidance for developers.