The core of Harness Engineering is to build an executable, verifiable, and rollback-safe engineering environment for AI, so a model can evolve from “good at chatting” to “reliably shipping real project code.” It addresses three major pain points: context loss, task drift, and codebases that become messier with every edit. Keywords: AI coding, Harness, engineering.

The technical spec snapshot clarifies the project context

| Parameter | Details |
|---|---|
| Core topic | Harness Engineering / Engineering for AI Coding |
| Language environment | Primarily Python, compatible with mixed frontend-backend stacks |
| Protocols and interfaces | MCP, file system, Bash, Browser Use |
| Core dependencies | yt-dlp, linters, automated tests, Git |
| Project model | Agent = Model + Harness |
| Source characteristics | Blog article by programmer Yupi |
| Article traction | About 160 views, 2 comments (as shown in the original article) |

Harness Engineering is not a new idea but a new name for engineering in the AI era

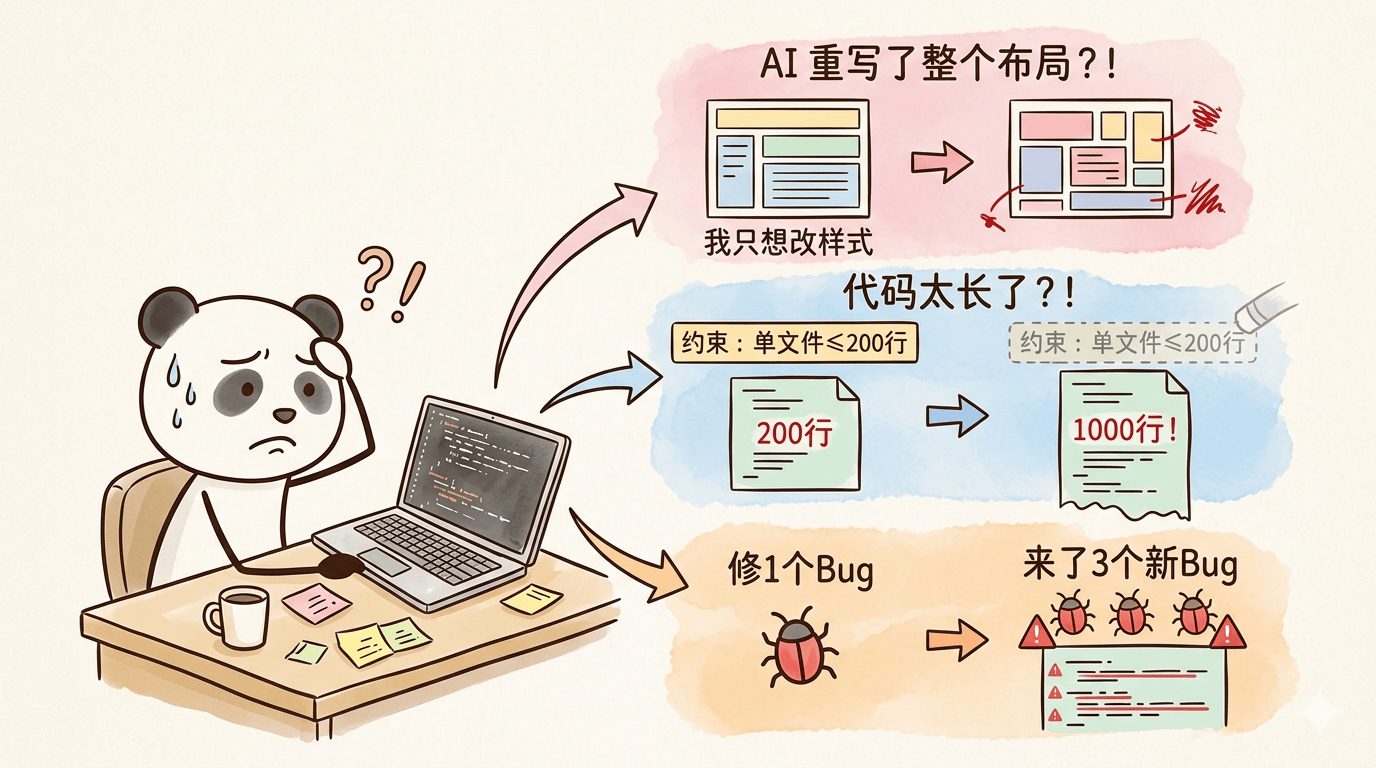

When developers use AI to tweak UI, fix bugs, or extend features, the real problem is often not the model itself. The problem is the lack of a stable working environment. AI can easily misread requirements, forget constraints, and introduce cascading bugs. At its core, this means the model is not being governed by a proper engineering system.

AI Visual Insight: The diagram highlights three common failure modes in AI coding: requirement misunderstanding, forgotten constraints in long conversations, and single-point fixes that trigger multi-point failures. It shows why prompts alone cannot support complex project delivery.

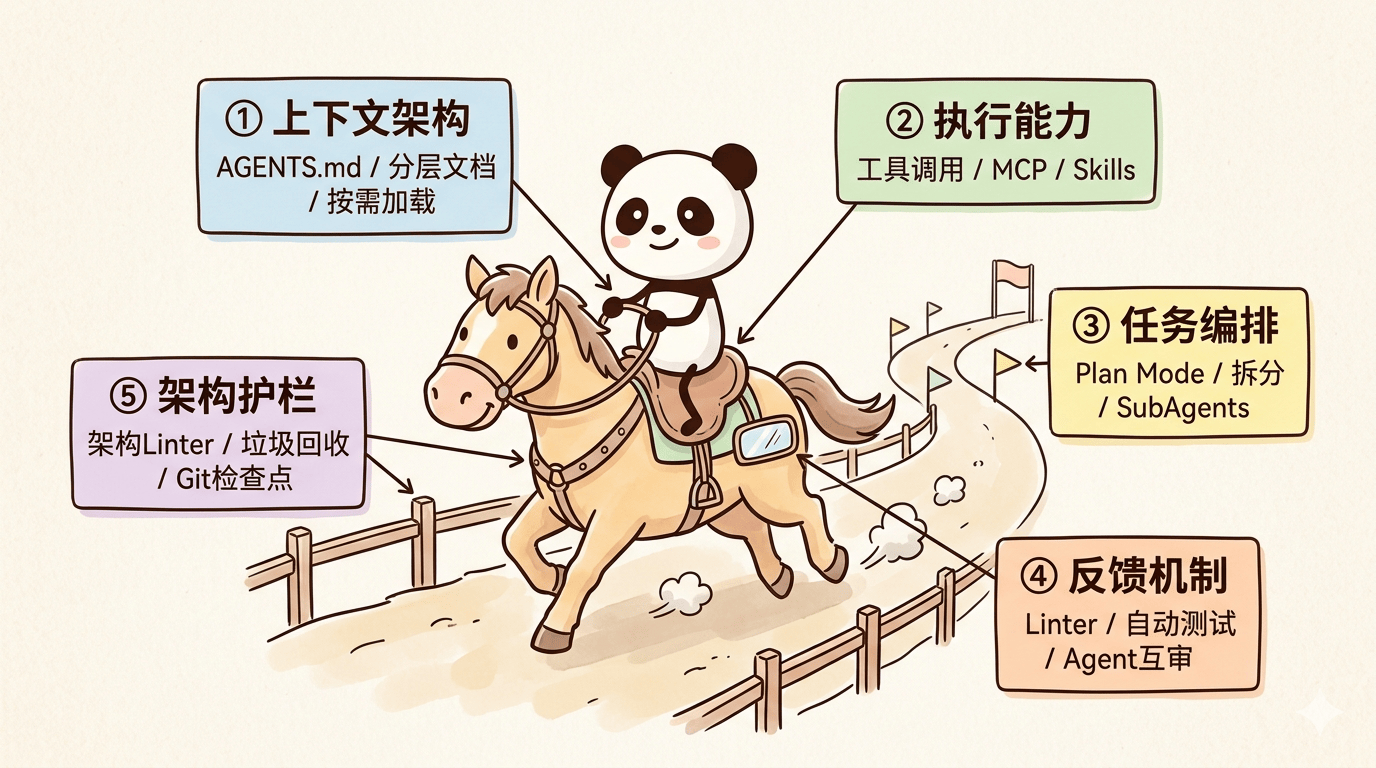

You can think of Harness as “putting a bridle on AI.” The model is the horse; the Harness is the combined system of reins, route, fences, and inspection mechanisms. It includes rule files, toolchains, task decomposition, test feedback, and architectural guardrails.

Harness can be reduced to a simple formula

Agent = Model + Harness

This formula shows that a truly usable agent is not just a large language model. It also includes context, tools, workflows, and validation systems.

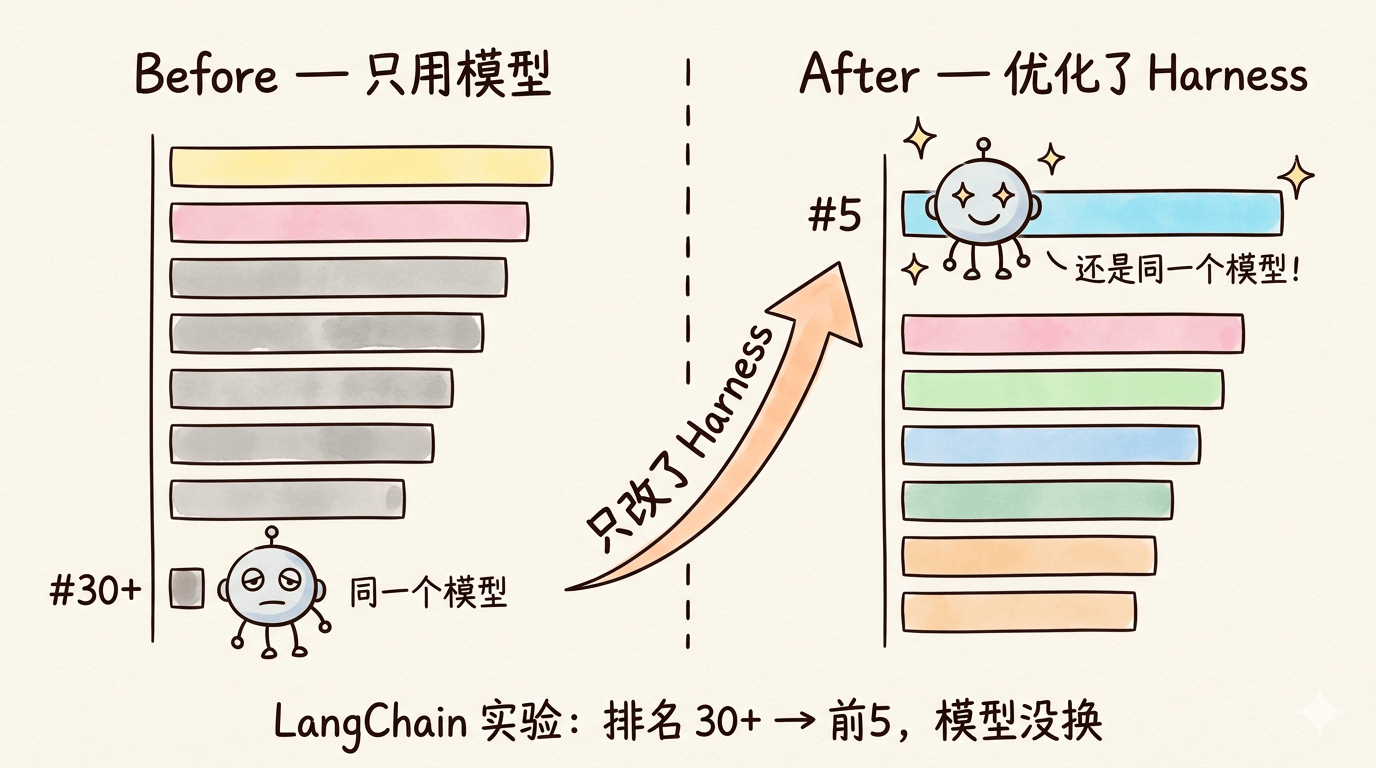

Leading teams have repeatedly validated the value of Harness

The article notes that LangChain used the same model but improved only the Harness design, which moved its coding benchmark ranking from outside the top 30 into the top 5. That result suggests the upper bound of AI coding is increasingly an engineering problem rather than a pure model problem.

AI Visual Insight: By separating the horse from the harness, the image distinguishes model capability from the control system. It emphasizes that stable output depends on external constraints and path design, not just a smarter model.

AI Visual Insight: This visual should present a comparison of experimental results, showing that coding benchmark performance improved through Harness optimization while the model stayed unchanged. The takeaway is that process engineering offers more leverage than one-off prompt tuning.

The evolution of Harness follows a clear path

First came prompt engineering, which solves “how to phrase instructions.” Then came context engineering, which solves “what information to provide.” Harness Engineering is the next step, solving “how to let AI complete tasks continuously, reliably, and end to end.”

```python
stages = [
    "Prompt Engineering",   # Solves instruction phrasing
    "Context Engineering",  # Solves information supply
    "Harness Engineering",  # Solves execution and reliable delivery
]

print(" -> ".join(stages))
```

This code snippet summarizes the evolution of AI engineering capability from “expression” to “delivery.”

The five core modules of Harness form the minimum viable loop

The context architecture determines whether AI truly understands the project

The first layer of a high-quality Harness is context architecture. The key is not to dump every rule into one massive document. Instead, use AGENTS.md as an index and store frontend, security, architecture, and other details in layered documentation under a docs directory so the agent can load what it needs on demand.

This reduces context pollution and prevents critical rules from getting buried. Conceptually, it is no different from requirements documents, architecture documents, and engineering standards in traditional software projects.
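A minimal sketch of on-demand loading, assuming a hypothetical index format in which AGENTS.md lists topic documents as `- topic: docs/file.md` lines. The format and the function names `parse_index` and `load_context` are illustrative assumptions, not a convention defined by the article or by any agent tool.

```python
from pathlib import Path


def parse_index(agents_md: str) -> dict[str, str]:
    """Parse lines of the form '- topic: docs/file.md' into a topic -> path map."""
    index = {}
    for line in agents_md.splitlines():
        line = line.strip()
        if line.startswith("- ") and ":" in line:
            topic, path = line[2:].split(":", 1)
            index[topic.strip().lower()] = path.strip()
    return index


def load_context(root: Path, topics: list[str]) -> str:
    """Return the index plus only the documents for the requested topics."""
    agents_md = (root / "AGENTS.md").read_text(encoding="utf-8")
    index = parse_index(agents_md)
    parts = [agents_md]
    for topic in topics:
        path = index.get(topic.lower())
        # Unknown topics and missing files are skipped rather than raising.
        if path and (root / path).is_file():
            parts.append((root / path).read_text(encoding="utf-8"))
    return "\n\n".join(parts)
```

Under these assumptions, a frontend task would call `load_context(root, ["frontend"])` and never see the security or architecture documents, which keeps the working context small.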

Execution capability determines whether AI can actually take action

If a model can only output text, it is still just a question-answering system. Through Bash, file systems, browsers, MCP, and Skills, AI can read code, run commands, browse documentation, and verify UI results. That is what gives it real engineering execution capability.

```python
tools = {
    "terminal": True,                  # Allow command execution
    "filesystem": True,                # Allow reading and writing project files
    "browser": True,                   # Allow end-to-end testing
    "mcp": ["firecrawl", "context7"],  # Web retrieval and documentation lookup
}
```

This configuration expresses the minimum capability components required for a usable AI development environment.

Task orchestration determines whether complex requirements spiral out of control

You should not ask AI to complete a large requirement in one shot. Instead, start in Plan Mode: let AI produce a proposal, have a human confirm it, and then split the work into incremental feature tasks. After each step, persist the result in documentation. If needed, start a new conversation and restore context from those documents.

If tasks are independent, you can also use SubAgents to work in parallel. This is fundamentally the same as modular decomposition, parallel development, and staged acceptance in traditional software delivery.
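The decomposition described above can be sketched as a small scheduler that runs tasks in waves: every task whose dependencies are finished runs in parallel with the others in its wave. This is an illustration only. The `Task` class, the task names in the usage note, and `run_task` (a placeholder for handing work to an agent or SubAgent) are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    depends_on: list[str] = field(default_factory=list)
    done: bool = False


def run_task(task: Task) -> str:
    # Placeholder: a real harness would dispatch this task to an agent or sub-agent.
    return f"completed {task.name}"


def run_plan(tasks: list[Task]) -> list[str]:
    """Run tasks in waves; tasks whose dependencies are done run in parallel."""
    log = []
    by_name = {t.name: t for t in tasks}
    while any(not t.done for t in tasks):
        ready = [
            t for t in tasks
            if not t.done
            and all(d in by_name and by_name[d].done for d in t.depends_on)
        ]
        if not ready:
            raise ValueError("circular or missing dependency in plan")
        with ThreadPoolExecutor() as pool:
            results = list(pool.map(run_task, ready))  # map preserves input order
        for task, result in zip(ready, results):
            task.done = True
            log.append(result)  # A real workflow would also persist this to a doc.
    return log
```

For example, `run_plan([Task("download"), Task("transcribe"), Task("summarize", depends_on=["download", "transcribe"])])` runs the first two tasks together and the third only after both finish.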

Feedback mechanisms determine whether AI can self-correct

AI generating code does not mean the task is done. You must make it run linters, automated tests, and browser tests. In some cases, another agent should review the output as well. Only when errors, failure screenshots, and runtime results become feedback signals can the system close the loop and repair itself.
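One way to turn check results into feedback signals is sketched below, using only the standard library. The function names `run_checks` and `feedback_prompt` and the message layout are assumptions made for illustration; the article does not prescribe a specific format.

```python
import subprocess


def run_checks(commands: dict[str, list[str]]) -> dict[str, str]:
    """Run each check; return a map of failed check name -> captured output."""
    failures = {}
    for name, cmd in commands.items():
        result = subprocess.run(cmd, capture_output=True, text=True)
        if result.returncode != 0:
            failures[name] = (result.stdout + result.stderr).strip()
    return failures


def feedback_prompt(failures: dict[str, str]) -> str:
    """Turn failures into a message that can be handed back to the agent."""
    if not failures:
        return "All checks passed."
    sections = [f"## {name} failed\n{output}" for name, output in failures.items()]
    return "Fix the following issues:\n\n" + "\n\n".join(sections)
```

A possible call is `run_checks({"lint": ["ruff", "check", "."], "tests": ["pytest", "-q"]})`, assuming both tools are installed in the project; a missing executable raises `FileNotFoundError` rather than being reported as a failed check.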

Architectural guardrails determine whether the codebase decays over time

AI will inherit the good habits in a repository, but it will also amplify bad patterns. Architectural constraint linters, pre-commit hooks, garbage-collection-style code inspections, and Git checkpoints are all critical mechanisms for preventing a project from becoming “still runnable, but increasingly chaotic.”

```bash
pytest  # Run tests first; continue only if they pass
git add .
git commit -m "feat: implement core video download capability"  # Create a checkpoint for the current stable state
```

These commands show the most basic quality guardrail: verify first, commit next, and always preserve rollback capability.
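Beyond tests and commits, an architectural constraint linter can be sketched with Python's `ast` module. The layering rule here (a `ui` layer that must not import `db` directly) and the names `FORBIDDEN` and `check_imports` are hypothetical examples, not rules taken from the article.

```python
import ast

# Hypothetical layering rule: code in the "ui" layer must not import "db" directly.
FORBIDDEN = {"ui": {"db"}}


def check_imports(layer: str, source: str) -> list[str]:
    """Return violation messages for absolute imports that break the layer's rules."""
    banned = FORBIDDEN.get(layer, set())
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue  # Not an import, or a relative import, which is ignored here.
        for name in names:
            if name.split(".")[0] in banned:
                violations.append(f"line {node.lineno}: {layer} must not import {name}")
    return violations
```

A check like this could run from a pre-commit hook so that a violation blocks the commit and its message goes back to the agent as feedback.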

A video download summarizer case study shows how Harness works in practice

The author uses a “universal video download summarizer” as an example to demonstrate the full Harness workflow in a real project. Early in the process, the team selects yt-dlp as the core download engine and Python as the main stack. Then it asks AI to complete the documentation based on the agreed plan, rather than generating the entire codebase immediately.

During development, Firecrawl MCP and Context7 MCP give AI access to web content and up-to-date documentation, reducing errors caused by outdated APIs. After a feature is complete, the workflow requires AI to summarize the current implementation, update the documentation, and commit the changes to Git. That commit becomes a “memory restore point” for future conversations.
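The "memory restore point" idea can be illustrated with a small progress log that sits alongside the Git commit. This is a sketch under stated assumptions: the file name passed in, the `## date feature` entry layout, and the function names are hypothetical, and summaries are assumed not to contain `## ` themselves.

```python
from datetime import date
from pathlib import Path


def record_checkpoint(doc: Path, feature: str, summary: str) -> None:
    """Append a dated stage summary to the progress document."""
    entry = f"## {date.today().isoformat()} {feature}\n\n{summary.strip()}\n\n"
    with doc.open("a", encoding="utf-8") as f:
        f.write(entry)


def latest_checkpoints(doc: Path, count: int = 3) -> str:
    """Return the most recent entries, suitable for seeding a new conversation."""
    text = doc.read_text(encoding="utf-8") if doc.exists() else ""
    entries = [e for e in text.split("## ") if e.strip()]
    return "".join("## " + e for e in entries[-count:])
```

A fresh conversation could then start from the output of `latest_checkpoints(...)` plus the rule file, instead of replaying the whole earlier chat.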

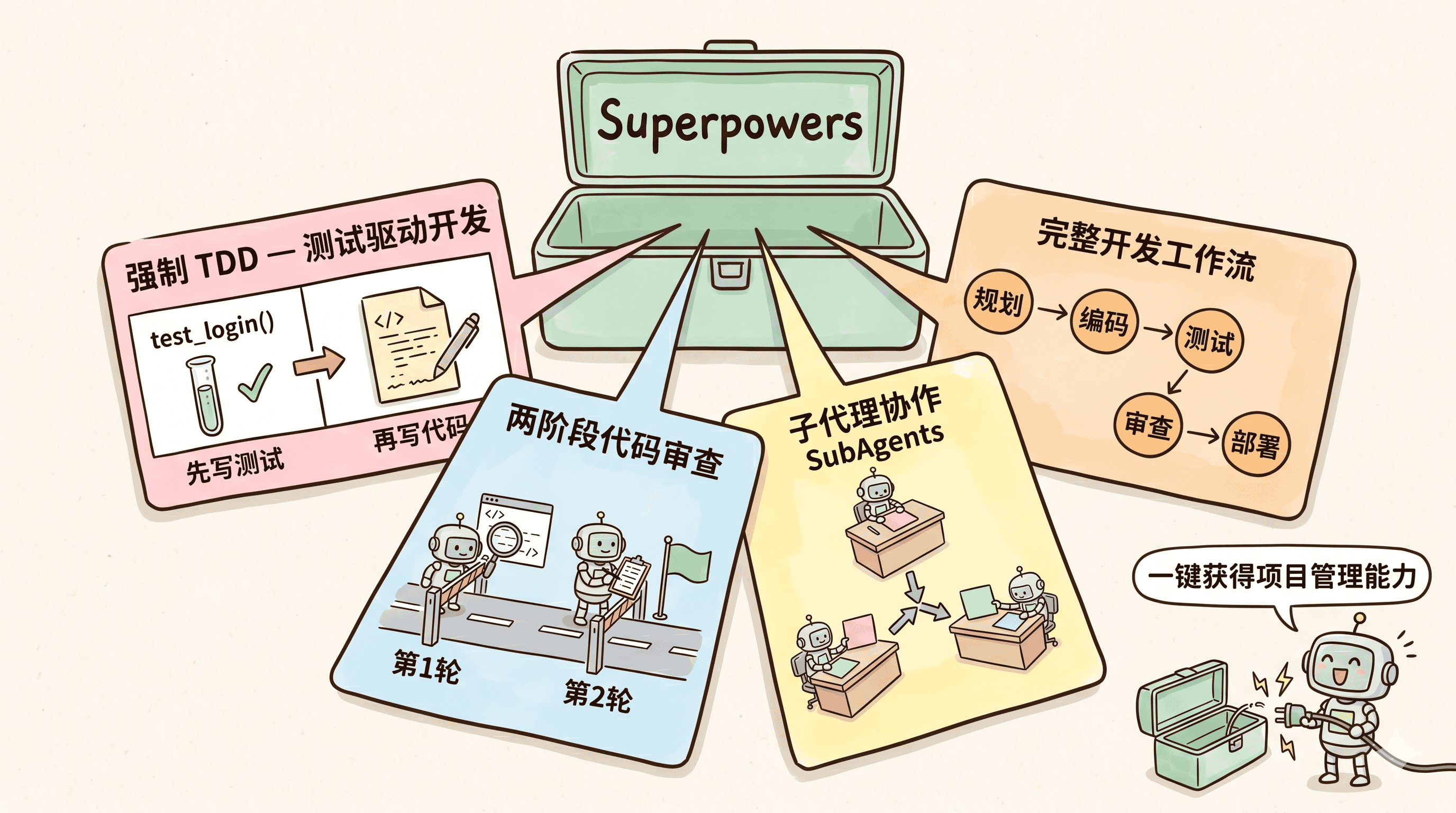

AI Visual Insight: The image should show an Agent Skills or workflow orchestration interface, highlighting how TDD, code review, and sub-agent collaboration can be packaged as reusable capability modules. That lowers the barrier to building a Harness.

In the testing phase, AI can also use a browser to input links automatically, click parse actions, and validate page results. If issues such as Bilibili 403 errors or Markdown rendering failures appear, a human can provide the key error messages and restate the problem to help the agent escape an unproductive debugging path.

The fastest way to get started with Harness is to build the minimum viable loop first

Do not try to build a complex system all at once. Start by preparing AGENTS.md to define the tech stack, standards, and forbidden areas. Then require AI to propose a plan before coding. Next, connect web access and documentation retrieval. After implementation, enforce testing. Finally, use Git and documentation updates to preserve stage checkpoints.

```python
def harness_checklist():
    return [
        "Write the AGENTS.md rule file",  # Give AI global constraints
        "Plan before coding",             # Prevent uncontrolled implementation
        "Integrate MCP / Skills",         # Fill the execution capability gap
        "Run tests and linters",          # Establish the feedback loop
        "Commit to Git and update docs",  # Preserve checkpoints and memory
    ]
```

This checklist works well as a starting template for any AI project.

Understanding Harness matters more than memorizing a specific tool

Tools such as Spec Kit and Superpowers can package spec-driven development, test-driven development, code review, and sub-agent collaboration for you, but they are only carriers. The truly durable advantage is Harness thinking: applying mature software engineering experience to AI execution systems.

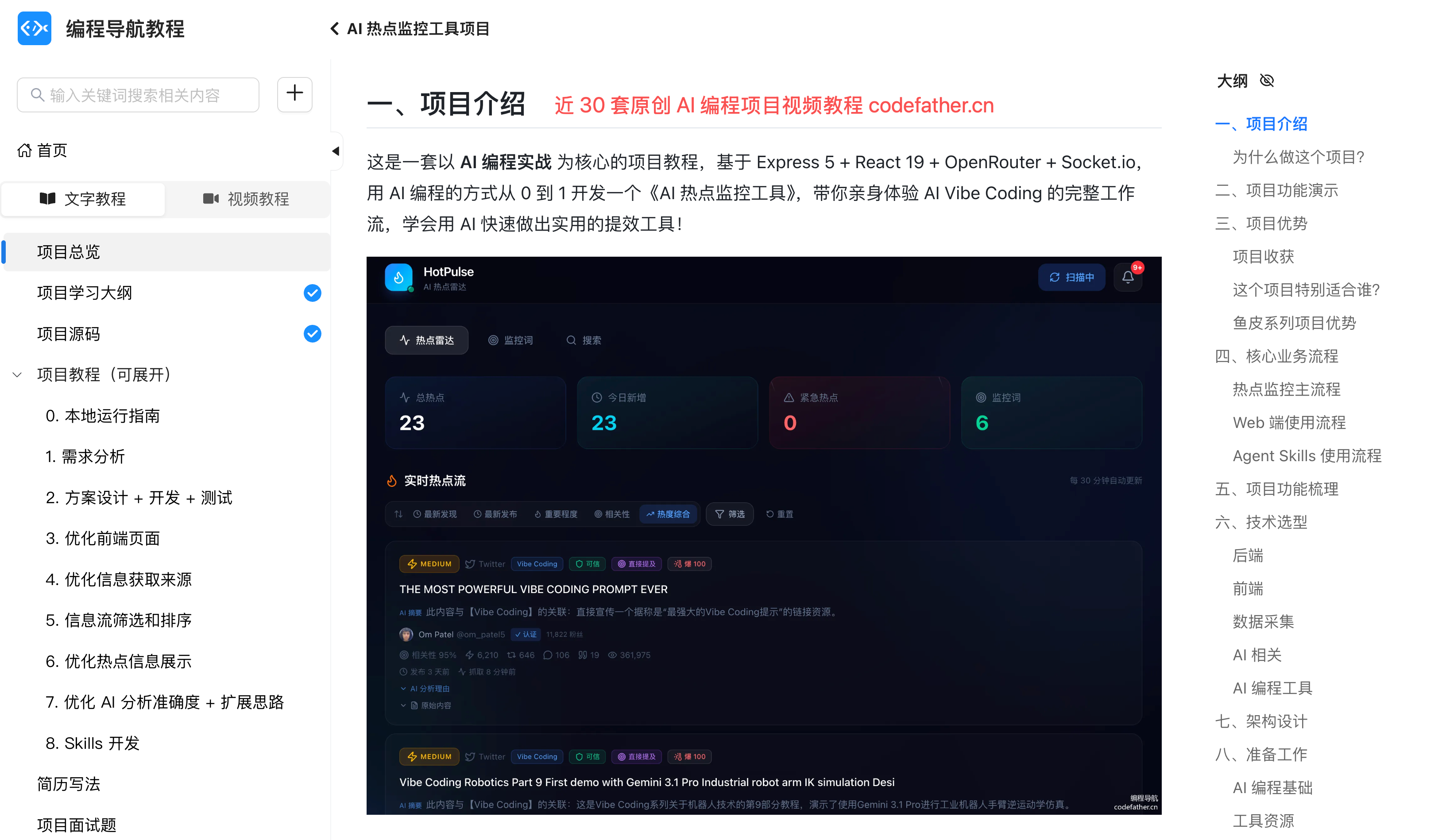

AI Visual Insight: This image most likely presents the author’s course or project matrix, conveying that Harness is not a single trick but a reusable methodology across many AI coding projects.

AI Visual Insight: The image should show an entry point into open-source tutorials or a knowledge base, reinforcing the idea of documenting and operationalizing experience. That aligns closely with Harness’s emphasis on turning context into durable assets.

FAQ

Q: What is the essential difference between Harness Engineering and prompt engineering?

A: Prompt engineering focuses on “how to say it.” Harness Engineering focuses on “how to help AI keep getting the work done.” It additionally includes tool invocation, task orchestration, test feedback, and architectural guardrails, making it a complete delivery system.

Q: Do solo developers really need to build a Harness?

A: Yes, but you do not need to do it all at once. A solo developer who starts with four capabilities (rule files, plan confirmation, test validation, and Git checkpoints) is already working far more reliably than with casual, unstructured AI use.

Q: Why does AI become less reliable in long conversations?

A: Because context gradually becomes polluted, and older constraints get diluted by newer information. The solution is layered documentation, on-demand loading, staged summaries, and restoring the necessary memory through documents in a fresh conversation.

Core summary

This article systematically reconstructs the core concept of Harness Engineering, its five major modules, and its practical implementation path. It explains why the bottleneck in AI coding has shifted from model capability to engineering environments, task orchestration, and quality guardrails, and it uses a video download summarizer case study to provide a directly reusable path into practice.