[AI Readability Summary] Robot learning is shifting from reinforcement learning to data-centric methods: high-quality demonstration data has turned Behavior Cloning into a strong baseline, first-person human data has become the scalable entry point, and the real bottleneck now lies in hardware and systems integration. Keywords: Behavior Cloning, Human Data, Embodied AI.

Technical specifications show the current landscape

| Parameter | Information |
|---|---|
| Domain | Robot Learning / Embodied AI |
| Core Methods | Behavior Cloning, Teleoperation, Human Data Transfer |
| Key Sensing and State Estimation | Multi-camera video, IMU, VIO, SLAM, Hand Pose |
| Data Scale Estimate | Target: ~100 million hours; Current: ~100,000–200,000 hours |
| Hardware Focus | Dual-arm systems, dexterous hands, head-mounted viewpoint cameras, high-speed controllers |
| Representative Projects | EgoMimic, UMI |
| Article Format | Reconstructed technical interview |
| Core Dependencies | Vision encoders, temporal models, state estimation, teleoperation systems |

Behavior Cloning has moved from an outdated baseline to the practical mainstream

For years, the robotics community placed a long-term bet on reinforcement learning, assuming that robots should learn by themselves. In the real world, however, that path has consistently run into limits in sample efficiency, hardware cost, and closed-loop experimentation.

The interview presents a clear counterargument: if data quality is high enough, Behavior Cloning is not weak. In fact, it can quickly become a competitive baseline for complex manipulation tasks.

Behavior Cloning has returned to center stage for clear reasons

At its core, BC models control as supervised learning: it takes images and state as input and outputs actions. It does not require reward design or long exploration cycles, and it offers much better engineering controllability than RL.

```python
# Input multimodal observations and output continuous control commands
obs = encoder(camera_images)       # Extract multi-view visual features
hist = rnn(obs)                    # Preserve temporal context and reduce instantaneous ambiguity
action = policy_head(hist)         # Predict robot arm or gripper control signals
loss = mse(action, expert_action)  # Directly fit expert demonstration actions
```

This snippet sketches the core training step of behavior cloning: end-to-end supervised learning from perception to action. The names `encoder`, `rnn`, `policy_head`, and `mse` stand in for modules defined elsewhere.
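
To make the supervised-learning framing concrete, here is a minimal runnable sketch. It assumes a toy one-dimensional task and a linear policy in place of the vision encoder and RNN. The names `expert_policy`, `collect_demos`, and `train_bc`, along with the gain and learning rate, are illustrative assumptions and do not come from the systems discussed in the interview.

```python
import random


def expert_policy(state):
    # Hypothetical expert: push the state toward the origin with gain 0.5
    return -0.5 * state


def collect_demos(n, seed=0):
    # Record (state, expert_action) pairs, the only supervision BC needs
    rng = random.Random(seed)
    states = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(s, expert_policy(s)) for s in states]


def train_bc(demos, epochs=200, lr=0.3):
    # Fit action = w * state + b by full-batch gradient descent on the MSE loss
    w, b = 0.0, 0.0
    n = len(demos)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for s, a in demos:
            err = (w * s + b) - a
            grad_w += 2.0 * err * s / n
            grad_b += 2.0 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


demos = collect_demos(100)
w, b = train_bc(demos)
print(f"learned policy: action = {w:.3f} * state + {b:.3f}")
```

No reward function or exploration appears anywhere in the loop. The policy is only as good as the recorded expert actions, which is why the quality of the demonstrations dominates the outcome.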

High-quality teleoperation data determines the upper bound of Behavior Cloning

An observation from the DeepMind era remains critical: BC works not because the model is magical, but because the data collection system is mature enough to filter out suboptimal trajectories.
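
The interview does not describe how suboptimal trajectories were filtered, so the following is only a sketch under assumed criteria: keep demonstrations that succeeded and finished within a time budget. The `Demo` fields and the 40-second threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Demo:
    demo_id: str
    success: bool       # Did the operator complete the task?
    duration_s: float   # Wall-clock length of the demonstration


def filter_demos(demos, max_duration_s):
    """Keep successful demonstrations that finish within the time budget."""
    return [d for d in demos if d.success and d.duration_s <= max_duration_s]


demos = [
    Demo("a", True, 28.0),
    Demo("b", False, 25.0),  # Failed attempt
    Demo("c", True, 55.0),   # Succeeded, but slow and hesitant
    Demo("d", True, 31.0),
]
kept = filter_demos(demos, max_duration_s=40.0)
kept_ids = [d.demo_id for d in kept]
print(kept_ids)
```

A real pipeline would likely use richer signals such as operator ratings or trajectory smoothness, but the principle is the same: the filter runs before training, so the policy never imitates the discarded behavior.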

Danfei and Jia’s work on the Franka Panda platform reinforced the same point. A combination of a wrist camera, a ResNet encoder, Spatial Softmax, and an RNN was already sufficient to handle complex long-horizon manipulations lasting roughly 30 seconds.
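
Spatial Softmax is the least self-explanatory component in that list. It turns each feature channel into a probability map over pixel locations and returns the expected 2D coordinate, which gives the policy a compact keypoint-like input. The sketch below is a plain-Python version for a single channel. The coordinate normalization to [-1, 1] is a common convention assumed here, not a detail confirmed by the interview.

```python
import math


def spatial_softmax(feature_map):
    """Return the expected (x, y) location of one 2D feature channel.

    Coordinates are normalized to [-1, 1] along both axes.
    """
    h, w = len(feature_map), len(feature_map[0])
    flat = [v for row in feature_map for v in row]
    peak = max(flat)  # Subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in flat]
    total = sum(exps)

    x_exp = y_exp = 0.0
    for idx, e in enumerate(exps):
        r, c = divmod(idx, w)
        p = e / total  # Softmax probability of this pixel
        x = -1.0 + 2.0 * c / (w - 1) if w > 1 else 0.0
        y = -1.0 + 2.0 * r / (h - 1) if h > 1 else 0.0
        x_exp += p * x
        y_exp += p * y
    return x_exp, y_exp


# A strong activation in the top-right corner pulls the keypoint there
corner = [[0.0, 0.0, 20.0],
          [0.0, 0.0, 0.0],
          [0.0, 0.0, 0.0]]
print(spatial_softmax(corner))
```

In a full network this runs per channel on the encoder's last convolutional feature map, so a 64-channel map becomes 64 coordinate pairs.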

Data matters more than algorithmic novelty in practice

Academia often places novelty at the algorithmic layer, but real gains in robot learning frequently come from the data pipeline, sensor placement, and control stability. This helps explain why several months of algorithm tuning may deliver less value than a few days of training on high-quality data.

```python
config = {
    "camera": ["head_cam", "wrist_cam"],  # The head view handles scene understanding, while the wrist view captures contact details
    "encoder": "resnet18",                # Extract stable visual representations
    "temporal": "rnn",                    # Model action history and phase transitions
    "policy": "bc",                       # Learn the action policy through direct supervision
}
```

This configuration summarizes the engineering backbone of early high-performing BC systems.

Human data is becoming the internet-scale corpus for robots

If large language models consume internet text, future robots will consume operational data generated by humans in the physical world. This is the most decisive claim in the interview.

Human data is not just a scaled-up version of teleoperation. It is a larger, more natural, and more multimodal data source that includes first-person video, hand pose, IMU, VIO, tactile signals, and language annotations.

First-person data is closer to usable supervision than third-person video

Third-person video is abundant, but its camera layout, acting subject, and interaction details differ too much from the deployment distribution of robots. First-person data is naturally closer to what a robot sees from its own head-mounted viewpoint.

This is where EgoMimic becomes important. It attempts to convert human first-person observations directly into trainable robot behavior data, while redesigning both the collection device and the dual-arm robot embodiment to support that goal.

Robots can learn three distinct layers of information from human video

The interview breaks learning targets into three layers: how the world changes, how to interact with objects, and how joints generate motion. The decomposition matters because each layer transfers from humans to robots with a different degree of difficulty.

The first layer transfers most easily because task outcomes are largely consistent between humans and robots. The second layer is learnable, but it depends on contact and geometric priors. The third layer is the hardest, because human muscles do not align directly with robotic actuators.

This is why hardware looks more like the bottleneck than algorithms

If a robot lacks sufficient degrees of freedom, speed, or control precision, even the best data cannot produce high-fidelity transfer. The true boundary of human-to-robot transfer is often set by the embodiment, not by the model.
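
One way to read this claim is as a pre-check that runs before any training: compare what a human demonstration requires with what the robot can physically deliver. The sketch below assumes three comparable quantities (degrees of freedom, peak speed, positioning precision). The `Spec` fields and all numbers are hypothetical and only illustrate the comparison.

```python
from dataclasses import dataclass


@dataclass
class Spec:
    dof: int               # Degrees of freedom available or required
    max_speed_mps: float   # Peak end-effector speed in m/s
    precision_mm: float    # Positioning precision in mm (smaller is better)


def transfer_blockers(task, robot):
    """List the embodiment limits that would prevent faithful transfer."""
    blockers = []
    if robot.dof < task.dof:
        blockers.append("degrees of freedom")
    if robot.max_speed_mps < task.max_speed_mps:
        blockers.append("speed")
    if robot.precision_mm > task.precision_mm:
        blockers.append("control precision")
    return blockers


human_task = Spec(dof=7, max_speed_mps=1.0, precision_mm=1.0)
robot = Spec(dof=6, max_speed_mps=1.5, precision_mm=2.0)
print(transfer_blockers(human_task, robot))
```

If the list is non-empty, more data or a larger model will not close the gap, because the limit sits in the hardware.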

State estimation is the hidden protagonist of the human data pipeline

If we treat a human as another kind of robot, then video alone is far from enough. The system must also capture action labels. The position, orientation, and velocity of the hand in space all depend on VIO, IMU, and SLAM.
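
As a rough illustration of how action labels can be derived once hand positions have been estimated, the sketch below computes finite-difference velocities between consecutive timestamped positions. It assumes the positions already come from a state estimator such as VIO or SLAM. The function name and the sample values are hypothetical.

```python
def velocity_labels(timestamps, positions):
    """Finite-difference velocity (m/s) between consecutive 3D hand positions."""
    labels = []
    for i in range(len(positions) - 1):
        dt = timestamps[i + 1] - timestamps[i]
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        labels.append(
            tuple((b - a) / dt for a, b in zip(positions[i], positions[i + 1]))
        )
    return labels


timestamps = [0.0, 0.5, 1.0]  # Seconds
positions = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.1, 0.2, 0.0)]  # Meters
labels = velocity_labels(timestamps, positions)
print(labels)
```

Any error in the estimated positions is amplified by the division by `dt`, which is why localization accuracy directly bounds label quality.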

That means the human data pipeline is not just a modeling problem. It is a systems engineering problem that requires multi-sensor synchronization, online calibration, sub-centimeter localization, and thermal drift correction.
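
Multi-sensor synchronization is a good example of the systems work involved. Cameras and IMUs sample at different rates, so one stream usually has to be resampled at the other's timestamps. The sketch below shows a minimal version using linear interpolation of a scalar signal. It assumes both streams already share a clock, which real devices must establish through calibration. The names and rates are illustrative.

```python
from bisect import bisect_right


def interpolate_at(sample_times, samples, query_time):
    """Linearly interpolate a scalar signal at query_time."""
    if not sample_times[0] <= query_time <= sample_times[-1]:
        raise ValueError("query_time outside the sampled range")
    hi = bisect_right(sample_times, query_time)  # First sample after the query
    if hi == len(sample_times):
        return samples[-1]  # Query coincides with the last sample
    lo = hi - 1
    t0, t1 = sample_times[lo], sample_times[hi]
    alpha = (query_time - t0) / (t1 - t0)
    return samples[lo] + alpha * (samples[hi] - samples[lo])


imu_times = [0.00, 0.01, 0.02, 0.03]   # 100 Hz IMU stream (seconds)
imu_values = [0.0, 1.0, 2.0, 3.0]      # One IMU channel, arbitrary units
frame_times = [0.005, 0.025]           # Camera frames falling between IMU samples
aligned = [interpolate_at(imu_times, imu_values, t) for t in frame_times]
print(aligned)
```

Orientation data would need spherical interpolation rather than this per-channel linear form, and clock offsets between devices have to be estimated separately.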

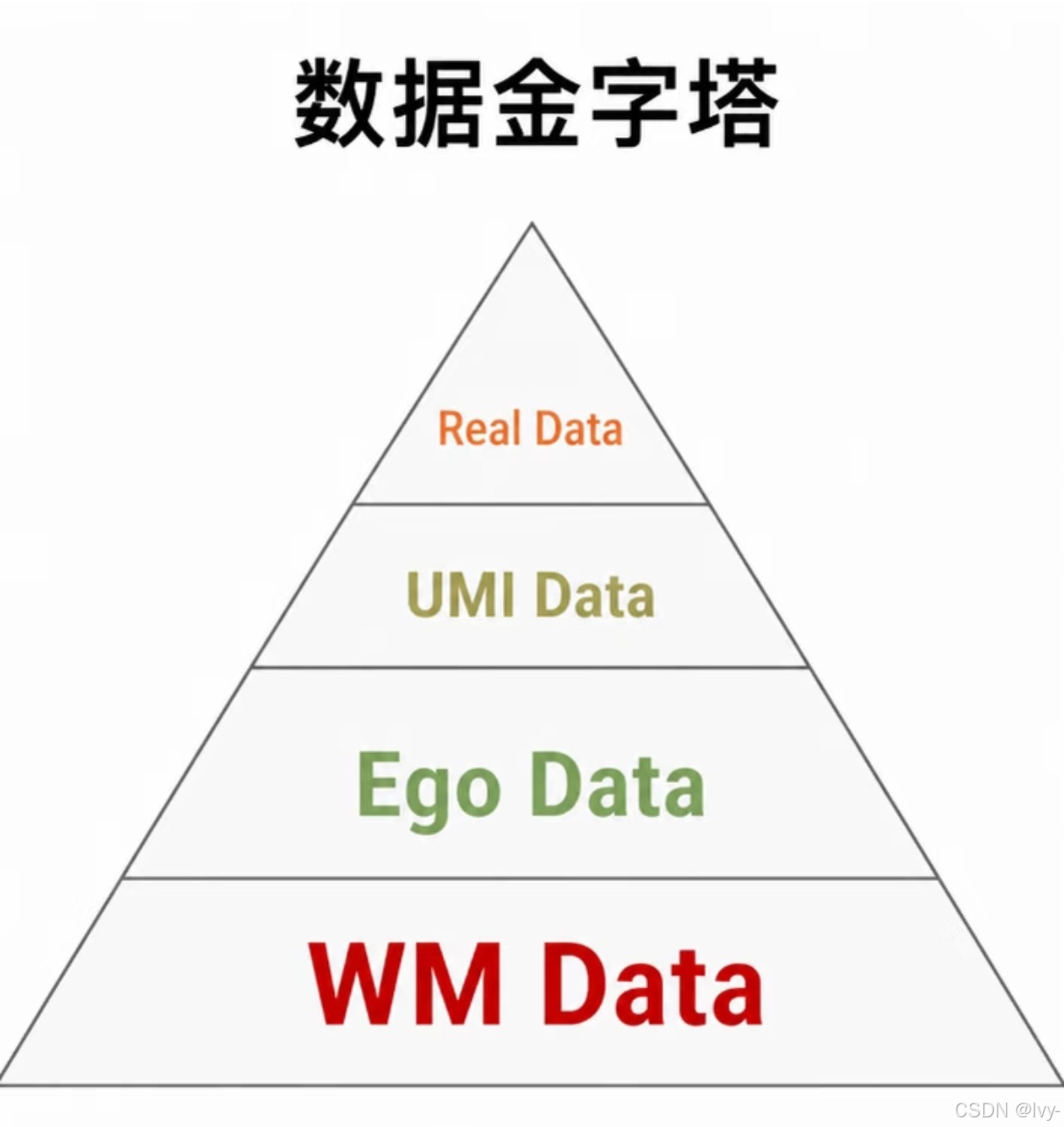

AI Visual Insight: The figure ranks the importance of data modalities in robot learning. Vision sits at the center, followed by hand pose and language annotation, while tactile sensing, full-body pose, audio, and smell decrease in importance. This reflects a practical reality: the modalities that can currently scale, align reliably, and map directly to control policies are still dominated by video and hand pose.

A scalable data stack must be built around video and hand pose

The current priority order can be summarized as follows: video first, hand pose second, language annotation third. Tactile sensing matters, but it is not yet standardized. Audio and smell are unlikely to become primary supervisory signals in the near term.

```python
modalities = ["video", "hand_pose", "language", "tactile"]

priority = sorted(modalities, key=lambda x: {
    "video": 4,      # Most stable, most general, and easiest to scale
    "hand_pose": 3,  # Directly constrains manipulation intent and geometry
    "language": 2,   # Useful for semantic labeling and task segmentation
    "tactile": 1,    # Important, but lacks a unified representation
}[x], reverse=True)
```

This code snippet expresses the current engineering priority of robot data modalities.

UMI shows that data collection and deployment are converging

UMI takes a highly pragmatic approach. Instead of insisting on reproducing the full human hand, it first collects high-quality state and action data through an interface that already resembles a robotic gripper, making the data distribution closer to the deployment endpoint.

The advantage of this approach is that it leaves almost no gap between the collected data and deployment, because the data collection device is itself an approximate deployment interface. It acts as a compromise between human data and teleoperation.

The future is not competition between three data routes, but fusion

Pure human data, teleoperation data, and UMI-style data will become increasingly difficult to separate. As long as controllers are fast enough and end-effectors become sufficiently hand-like, all three will gradually merge into a unified training stack.
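
One simple form such a unified stack could take is a single training loop that draws each sample from one of the three sources according to mixture weights. The sketch below only illustrates that idea. The source names and the weights are hypothetical, and the interview does not prescribe any particular mixture.

```python
import random


def sample_sources(weights, n, seed=0):
    """Draw n source names with probability proportional to their weights."""
    rng = random.Random(seed)
    names = list(weights)
    return rng.choices(names, weights=[weights[k] for k in names], k=n)


# Hypothetical mixture: mostly cheap human data, less robot-specific data
mixture = {"human_egocentric": 0.6, "umi_style": 0.3, "teleoperation": 0.1}
batch_sources = sample_sources(mixture, n=8)
print(batch_sources)
```

For this to work, all three sources need a shared observation and action format, which is where faster controllers and more hand-like end-effectors come in.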

The real bottleneck in embodied AI is full-stack capability, not a single model

The interview repeatedly emphasizes the full stack. This does not mean every component must be built in-house. It means teams must understand which parts of the pipeline affect final outcomes.

In particular, evaluation, training loops, data filtering, and vendor data quality assessment cannot remain black boxes if the goal is stable iteration.

The long-term vision is human-level Behavior Cloning

The endgame of this direction looks a lot like language models: first achieve human-level behavior cloning, then talk about superhuman capability. The upper bound is not a single successful grasp, but a level of physical-world interaction where it becomes difficult to tell whether the agent is human or robotic.

At the same time, the industry still lacks data infrastructure at least 100 times larger than what exists today. Moving from 100,000–200,000 hours to 100 million hours will not come from papers alone. It will require coordinated progress in data collection devices, standardized modalities, data supply chains, and hardware platforms.
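
The size of that gap can be worked out directly from the article's own figures. The hour counts below come from the text. The 2,000 collection hours per person per year is a hypothetical assumption, added only to give a sense of the labor involved.

```python
target_hours = 100_000_000
current_low, current_high = 100_000, 200_000

gap_low = target_hours / current_high   # 500.0
gap_high = target_hours / current_low   # 1000.0

# Hypothetical: one full-time collector records about 2,000 hours per year
hours_per_collector_year = 2_000
collector_years = target_hours / hours_per_collector_year  # 50000.0

print(f"data gap: {gap_low:.0f}x to {gap_high:.0f}x")
print(f"collector-years needed at that rate: {collector_years:.0f}")
```

In raw hours the gap is therefore 500 to 1,000 times, consistent with the "at least 100 times" estimate for infrastructure. Closing it depends on passive, wearable collection rather than dedicated operators alone.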

FAQ provides structured answers to the core questions

Q1: Why has Behavior Cloning become important again?

A: Because high-quality demonstration data and more stable perception-to-control pipelines have significantly reduced BC’s error accumulation problem, making it a strong baseline for real-world robot tasks.

Q2: Why is first-person human data more valuable than third-person video?

A: Because first-person viewpoints are much closer to the robot’s deployment-time observation distribution, and they combine more naturally with hand pose, IMU, and VIO to generate supervised action labels.

Q3: What is the biggest bottleneck in robot learning today?

A: It is not a single algorithm. It is the full-stack capability formed by hardware, state estimation, data quality control, and the training closed loop.

Core Summary: This article reconstructs the key arguments from a technical interview on robot learning, focusing on four major threads: Behavior Cloning, Human Data, EgoMimic, and UMI. It explains why high-quality human data, first-person perception, and full-stack hardware integration are becoming the real bottlenecks and opportunities in embodied intelligence.