The core of Linux thread synchronization is not just thread safety. On top of mutual exclusion, it establishes when a thread should access shared state. This article focuses on condition variables, blocking queues, and the producer–consumer model, explaining the atomic behavior of pthread_cond_wait, why a while loop protects against spurious wakeups, and how semaphore-based designs evolve from this foundation.

Keywords: Linux thread synchronization, condition variables, producer–consumer.

The technical specification snapshot is straightforward

| Parameter | Description |
|---|---|
| Language | C, C++11 |
| Threading Library | POSIX Threads, std::thread |
| Core Protocols/Standards | POSIX pthread, POSIX semaphore |
| Typical Compiler Flags | `-pthread` (or the older link-time form `-lpthread`) |
| Core Dependencies | `<pthread.h>`, `<condition_variable>`, `<semaphore.h>`, `<queue>` |
| Article Type | Conceptual analysis + source code implementation |
| Applicable Scenarios | Multithreaded coordination, task queues, backend services |

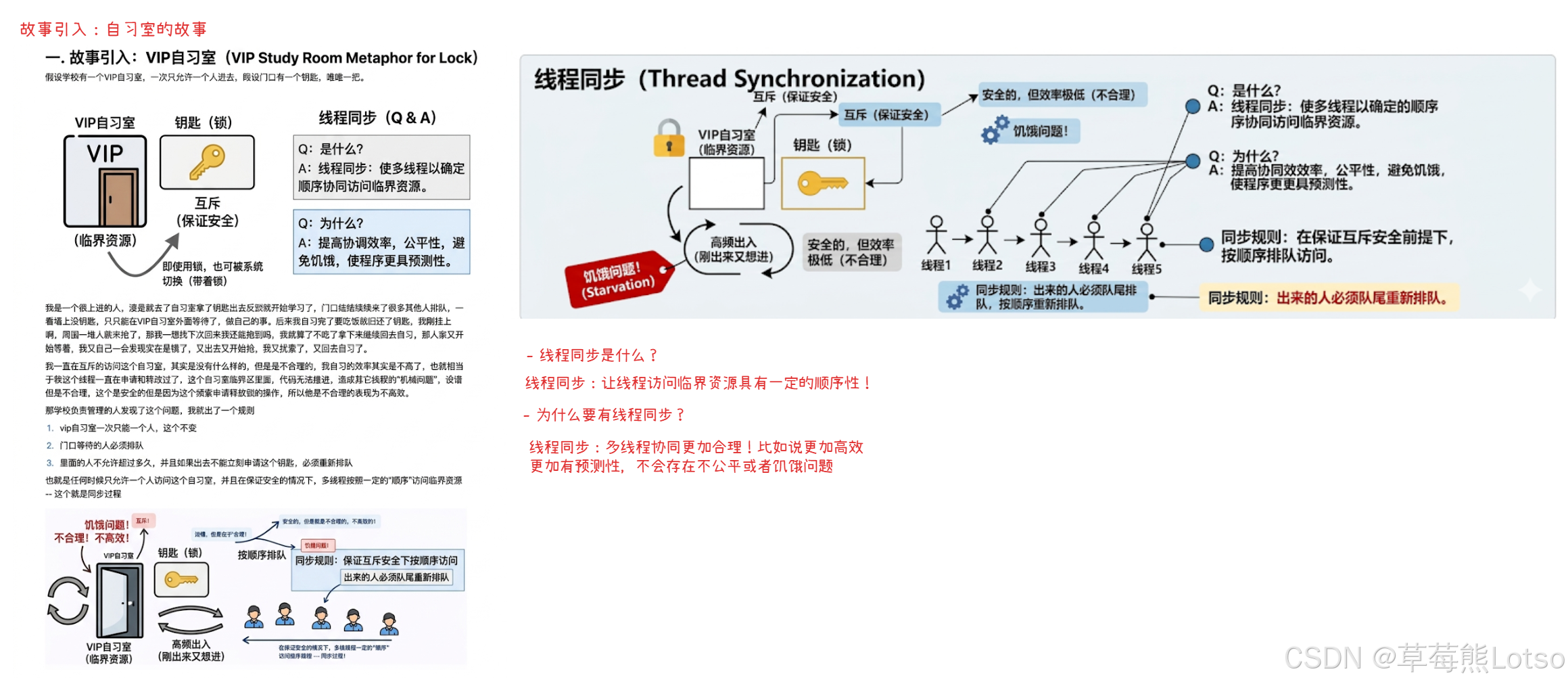

Mutexes guarantee safety, but not coordination order

A mutex solves one question: which thread can enter the critical section at a given moment? It does not solve another equally important question: should a thread enter now?

That means code can be thread-safe and still remain inefficient, or even suffer from starvation.

A typical case is when one thread repeatedly acquires the lock while other threads rarely get scheduled. Shared data remains intact, but system throughput and fairness degrade significantly. The value of thread synchronization lies in building a predictable access order.

AI Visual Insight: The image shows multiple execution units competing for the same critical resource. Its key point is that “holding the lock” does not mean “this is the right time to run.” Mutual exclusion guarantees exclusive access, but it does not enforce ordered entry based on resource state.

The definition of thread synchronization must be grounded in conditions

Synchronization essentially means this: when the resource state does not satisfy the required condition, put the thread to sleep; when the condition becomes true, wake the thread so it can continue.

This mechanism avoids busy waiting and reduces wasted CPU cycles.

    pthread_mutex_lock(&mutex);
    while (!ready) {                       // Wait while the condition is not satisfied
        pthread_cond_wait(&cond, &mutex);  // Atomically release the lock and suspend the thread
    }
    // Access the critical resource only after the condition becomes true
    pthread_mutex_unlock(&mutex);

This code demonstrates the smallest complete synchronization loop: check the condition, wait for the condition, and proceed when it is satisfied.
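To see both sides of that loop working together, here is a minimal sketch that pairs the waiter with a thread that changes the state and signals. The `Shared` struct and the function names `wait_for_ready` and `publish_and_wait` are hypothetical, chosen only for this illustration.

```cpp
#include <pthread.h>
#include <cassert>

// Hypothetical shared state for this sketch; every field is guarded by `mutex`.
struct Shared {
    pthread_mutex_t mutex;
    pthread_cond_t cond;
    bool ready;    // the condition the waiter sleeps on
    int payload;   // data published together with the condition
    int received;  // what the waiter observed
};

// Waiter: sleeps until `ready` becomes true, then reads the payload.
static void* wait_for_ready(void* arg) {
    Shared* s = static_cast<Shared*>(arg);
    pthread_mutex_lock(&s->mutex);
    while (!s->ready) {
        pthread_cond_wait(&s->cond, &s->mutex);
    }
    assert(s->ready);  // the mutex is held and the condition holds here
    s->received = s->payload;
    pthread_mutex_unlock(&s->mutex);
    return nullptr;
}

// Starts one waiter, publishes `value`, and returns what the waiter read.
int publish_and_wait(int value) {
    Shared s;
    pthread_mutex_init(&s.mutex, nullptr);
    pthread_cond_init(&s.cond, nullptr);
    s.ready = false;
    s.payload = 0;
    s.received = -1;

    pthread_t tid;
    int rc = pthread_create(&tid, nullptr, wait_for_ready, &s);
    assert(rc == 0);
    (void)rc;

    pthread_mutex_lock(&s.mutex);
    s.payload = value;             // change the state first
    s.ready = true;
    pthread_cond_signal(&s.cond);  // then notify, still holding the mutex
    pthread_mutex_unlock(&s.mutex);

    pthread_join(tid, nullptr);
    int received = s.received;
    pthread_cond_destroy(&s.cond);
    pthread_mutex_destroy(&s.mutex);
    return received;
}
```

Calling `publish_and_wait(42)` should return 42 whether the waiter reaches the loop before or after the signal, because the waiter's decision depends on `ready`, not on the signal itself.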

Condition variables are the core tool for Linux thread synchronization

A condition variable does not protect the resource itself. It expresses whether the resource is in a state that allows access.

That is why it must work together with a mutex: the mutex protects state, while the condition variable coordinates waiting and waking.

pthread_cond_wait is the center of this mechanism. It does not simply sleep. Instead, it releases the mutex and places the thread on the wait queue as a single atomic step. After another thread wakes it, it must reacquire the mutex before returning.

AI Visual Insight: The image illustrates the timing relationship between a condition variable and a mutex. A thread acquires the lock and checks the condition first. If the condition is not satisfied, it enters the wait queue and automatically releases the lock inside wait. After wakeup, it competes for the lock again before continuing. This atomic design is exactly what prevents lost wakeups.

The core condition-variable APIs are few, but their semantics are powerful

    pthread_cond_t cond = PTHREAD_COND_INITIALIZER; // Static initialization
    pthread_cond_init(&cond, nullptr);               // Dynamic initialization (an alternative to the static form; use one, not both)
    pthread_cond_wait(&cond, &mutex);                // Release the mutex and block until woken; the caller re-checks the condition
    pthread_cond_signal(&cond);                      // Wake at least one waiting thread
    pthread_cond_broadcast(&cond);                   // Wake all waiting threads

These APIs are enough to cover most thread-coordination scenarios. The challenge is not the number of APIs, but using them at the correct moment.
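As a rough illustration of the full lifecycle (dynamic initialization, waiting, broadcasting, and destruction), the sketch below lets several threads wait at a gate and releases them all with one broadcast. `Gate`, `wait_at_gate`, `release_all`, and the limit of 16 waiters are assumptions made for this example.

```cpp
#include <pthread.h>
#include <cassert>

// Hypothetical gate: threads wait until `open` becomes true.
struct Gate {
    pthread_mutex_t mutex;
    pthread_cond_t cond;
    bool open;   // the condition, guarded by `mutex`
    int passed;  // number of threads that got through
};

static void* wait_at_gate(void* arg) {
    Gate* g = static_cast<Gate*>(arg);
    pthread_mutex_lock(&g->mutex);
    while (!g->open) {
        pthread_cond_wait(&g->cond, &g->mutex);
    }
    ++g->passed;
    pthread_mutex_unlock(&g->mutex);
    return nullptr;
}

// Starts `waiters` threads, opens the gate once, and reports how many passed.
int release_all(int waiters) {
    assert(waiters > 0 && waiters <= 16);
    Gate g;
    pthread_mutex_init(&g.mutex, nullptr);  // dynamic initialization
    pthread_cond_init(&g.cond, nullptr);
    g.open = false;
    g.passed = 0;

    pthread_t tids[16];
    for (int i = 0; i < waiters; ++i) {
        int rc = pthread_create(&tids[i], nullptr, wait_at_gate, &g);
        assert(rc == 0);
        (void)rc;
    }

    pthread_mutex_lock(&g.mutex);
    g.open = true;
    pthread_cond_broadcast(&g.cond);  // every current waiter should proceed
    pthread_mutex_unlock(&g.mutex);

    for (int i = 0; i < waiters; ++i) {
        pthread_join(tids[i], nullptr);
    }
    int passed = g.passed;
    pthread_cond_destroy(&g.cond);    // release resources after all waiters left
    pthread_mutex_destroy(&g.mutex);
    return passed;
}
```

Broadcast fits here because the state change applies to every waiter. When only one waiter can make progress per state change, pthread_cond_signal is usually the cheaper choice.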

wait must be bound to a mutex, or signals can be lost

Many developers assume that “unlock first, then wait” should still work. The problem is that a timing window appears between unlocking and waiting.

If another thread updates the condition and sends a signal during that window, the current thread may not have entered the wait queue yet. It then misses the notification permanently.

This is the classic lost wakeup problem. The value of pthread_cond_wait is precisely that it packages “release the lock” and “enter the wait state” into one atomic operation, removing the race window at its source.

    // Incorrect example: unlocking and waiting are split, creating a lost-signal race window
    pthread_mutex_lock(&mutex);
    while (!ready) {
        pthread_mutex_unlock(&mutex);      // Incorrect: opens a window in which ready can be set and signaled
        pthread_cond_wait(&cond, &mutex);  // The signal may already have been missed; waiting without holding a default mutex is also undefined behavior
        pthread_mutex_lock(&mutex);        // Incorrect: wait already reacquired the mutex before returning
    }
    pthread_mutex_unlock(&mutex);

This incorrect code highlights a key point: the hard part of synchronization is not whether you know the API, but whether you understand its atomicity boundaries.
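The correct pattern tolerates a signal that arrives before the waiter does, because a condition variable keeps no memory of past signals and the waiter relies on the predicate instead. The following sketch makes that visible by forcing the signal to happen first; `Flag`, `set_ready`, and `waits_after_early_signal` are hypothetical names.

```cpp
#include <pthread.h>
#include <cassert>

// Hypothetical state: `ready` is the predicate, guarded by `mutex`.
struct Flag {
    pthread_mutex_t mutex;
    pthread_cond_t cond;
    bool ready;
};

// Signaler: sets the predicate and signals while holding the mutex.
static void* set_ready(void* arg) {
    Flag* f = static_cast<Flag*>(arg);
    pthread_mutex_lock(&f->mutex);
    f->ready = true;
    pthread_cond_signal(&f->cond);  // nobody is waiting yet, so this wakes no one
    pthread_mutex_unlock(&f->mutex);
    return nullptr;
}

// Returns how many times the late waiter had to call pthread_cond_wait.
int waits_after_early_signal() {
    Flag f;
    pthread_mutex_init(&f.mutex, nullptr);
    pthread_cond_init(&f.cond, nullptr);
    f.ready = false;

    pthread_t tid;
    int rc = pthread_create(&tid, nullptr, set_ready, &f);
    assert(rc == 0);
    (void)rc;
    pthread_join(tid, nullptr);  // the signal has been sent before we check

    int waits = 0;
    pthread_mutex_lock(&f.mutex);
    while (!f.ready) {           // predicate is already true, so the loop is skipped
        ++waits;
        pthread_cond_wait(&f.cond, &f.mutex);
    }
    pthread_mutex_unlock(&f.mutex);

    pthread_cond_destroy(&f.cond);
    pthread_mutex_destroy(&f.mutex);
    return waits;
}
```

The function returns 0: the early signal was not "stored" anywhere, yet nothing was lost, because the waiter checked `ready` under the mutex before deciding to sleep.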

Using while instead of if is a hard rule for handling spurious wakeups

A return from a condition wait does not guarantee that the condition actually holds. The wakeup may be spurious (for example, after signal delivery), or another thread woken by the same signal or broadcast may have acquired the mutex first and consumed the state.

If you use only if, the thread may continue even though the condition is still false.

    pthread_mutex_lock(&mutex);
    while (queue.empty()) {                // Always re-check the condition in a loop
        pthread_cond_wait(&cond, &mutex);
    }
    auto data = queue.front();             // Access the resource only after confirming the condition
    queue.pop();
    pthread_mutex_unlock(&mutex);

This code ensures that every time the thread returns from wait, it validates the state again and avoids consuming from an empty queue.
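In C++11, the same rule is built into the predicate overload of `std::condition_variable::wait`, which loops internally until the predicate returns true. The sketch below is one way to express the consumer side with it; the function name `pop_with_predicate` and the value 7 are arbitrary choices for the example.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <queue>
#include <thread>

// Pops one value that a helper thread pushes; returns the popped value.
int pop_with_predicate() {
    std::mutex m;
    std::condition_variable cv;
    std::queue<int> q;  // guarded by m

    std::thread producer([&] {
        {
            std::lock_guard<std::mutex> lock(m);
            q.push(7);
        }
        cv.notify_one();
    });

    std::unique_lock<std::mutex> lock(m);
    // Behaves like: while (q.empty()) cv.wait(lock);
    cv.wait(lock, [&] { return !q.empty(); });
    assert(!q.empty());  // the predicate holds whenever this overload returns
    int value = q.front();
    q.pop();
    lock.unlock();

    producer.join();
    return value;
}
```

Because the loop lives inside the library call, this form makes it harder to write the `if` variant by accident.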

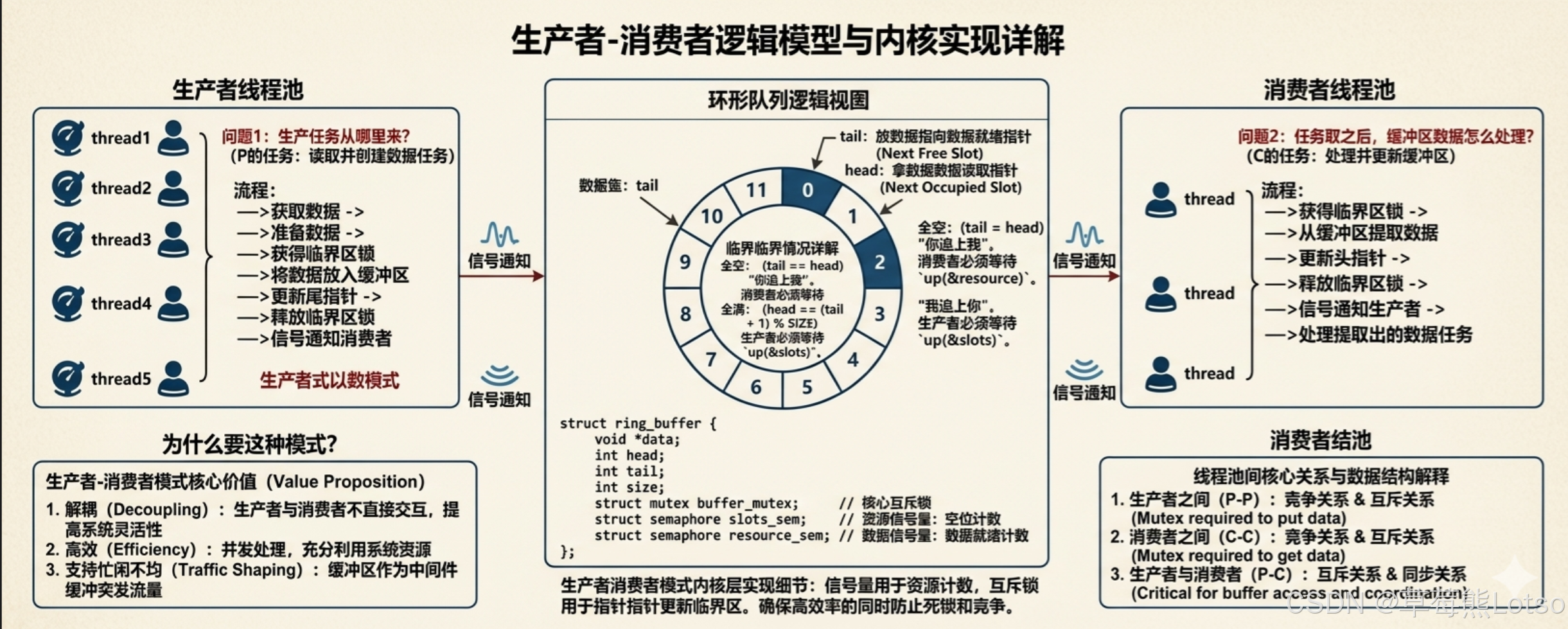

The producer–consumer model is the classic practical form of thread synchronization

The producer–consumer model is fundamentally about role separation + a buffer as intermediary + condition-driven coordination.

Producers only push data. Consumers only pull data. The buffer decouples the two sides.

This pattern remains classic because it solves three problems at once: decoupling between threads, imbalance in processing rates, and efficient concurrent execution. In real systems, task queues, log queues, and thread-pool work queues all follow this pattern.

AI Visual Insight: The image presents the three-stage structure of producers, buffer, and consumers. Technically, it highlights two relationships: mutual exclusion among peers around the shared queue, and synchronization between different roles around the “empty/full” conditions. That is the core abstraction of the model.

A blocking queue is the most direct engineering implementation

A blocking queue has two conditions: when the queue is empty, consumers block; when the queue is full, producers block.

A common design uses one mutex to protect the queue and two condition variables to represent “not empty” and “not full.”

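    #include <pthread.h>
    #include <cstddef>
    #include <queue>

    template <class T>
    class BlockQueue {
    public:
        explicit BlockQueue(std::size_t cap) : _cap(cap) {
            pthread_mutex_init(&_mutex, nullptr);
            pthread_cond_init(&_full, nullptr);
            pthread_cond_init(&_empty, nullptr);
        }
        ~BlockQueue() {
            pthread_cond_destroy(&_empty);
            pthread_cond_destroy(&_full);
            pthread_mutex_destroy(&_mutex);
        }
        BlockQueue(const BlockQueue&) = delete;  // The pthread objects must not be copied

        void Push(const T& in) {
            pthread_mutex_lock(&_mutex);
            while (_queue.size() == _cap) {       // Wait if the queue is full
                pthread_cond_wait(&_full, &_mutex);
            }
            _queue.push(in);                      // Enqueue produced data
            pthread_cond_signal(&_empty);         // Notify consumers that data is available
            pthread_mutex_unlock(&_mutex);
        }

        void Pop(T* out) {
            pthread_mutex_lock(&_mutex);
            while (_queue.empty()) {              // Wait if the queue is empty
                pthread_cond_wait(&_empty, &_mutex);
            }
            *out = _queue.front();                // Read the front element
            _queue.pop();
            pthread_cond_signal(&_full);          // Notify producers that space is available
            pthread_mutex_unlock(&_mutex);
        }

    private:
        std::queue<T> _queue;    // Shared buffer, protected by _mutex
        std::size_t _cap;        // Maximum number of queued elements
        pthread_mutex_t _mutex;  // Protects _queue
        pthread_cond_t _full;    // Producers wait here while the queue is full
        pthread_cond_t _empty;   // Consumers wait here while the queue is empty
    };

This code implements a minimal usable blocking queue that works for single-producer/single-consumer and multi-producer/multi-consumer scenarios.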
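For comparison, the same one-mutex, two-condition design can be sketched with the C++11 standard library and driven by several producers and consumers. Everything named here (`BoundedQueue`, `run_pipeline`, the capacity of 4, and the use of 0 as a stop sentinel) is an assumption for the example, not part of the queue described above.

```cpp
#include <cassert>
#include <condition_variable>
#include <cstddef>
#include <mutex>
#include <queue>
#include <thread>
#include <vector>

template <class T>
class BoundedQueue {
public:
    explicit BoundedQueue(std::size_t cap) : cap_(cap) { assert(cap > 0); }

    void Push(const T& in) {
        std::unique_lock<std::mutex> lock(mutex_);
        not_full_.wait(lock, [this] { return queue_.size() < cap_; });
        queue_.push(in);
        not_empty_.notify_one();  // one consumer can now make progress
    }

    T Pop() {
        std::unique_lock<std::mutex> lock(mutex_);
        not_empty_.wait(lock, [this] { return !queue_.empty(); });
        T out = queue_.front();
        queue_.pop();
        not_full_.notify_one();   // one producer can now make progress
        return out;
    }

private:
    std::size_t cap_;
    std::queue<T> queue_;                // guarded by mutex_
    std::mutex mutex_;
    std::condition_variable not_empty_;  // consumers wait on this
    std::condition_variable not_full_;   // producers wait on this
};

// Each producer pushes 1..items; consumers add up what they pop.
// The value 0 is a hypothetical sentinel telling a consumer to stop.
long run_pipeline(int producers, int consumers, int items) {
    BoundedQueue<int> queue(4);
    std::mutex total_mutex;
    long total = 0;

    std::vector<std::thread> consumer_threads;
    for (int c = 0; c < consumers; ++c) {
        consumer_threads.emplace_back([&queue, &total_mutex, &total] {
            long local = 0;
            for (;;) {
                int value = queue.Pop();
                if (value == 0) break;
                local += value;
            }
            std::lock_guard<std::mutex> lock(total_mutex);
            total += local;
        });
    }

    std::vector<std::thread> producer_threads;
    for (int p = 0; p < producers; ++p) {
        producer_threads.emplace_back([&queue, items] {
            for (int i = 1; i <= items; ++i) queue.Push(i);
        });
    }

    for (auto& t : producer_threads) t.join();
    for (int c = 0; c < consumers; ++c) queue.Push(0);  // one sentinel per consumer
    for (auto& t : consumer_threads) t.join();
    return total;
}
```

With 3 producers each pushing 1 through 100, the consumers together should add up 3 × 5050 = 15150. The sentinels are pushed only after all producers have finished, so the FIFO order guarantees that every real item is consumed before any consumer stops.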

A two-condition-variable design is more efficient than a single-condition-variable design

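If you use only one condition variable, a wakeup may target the wrong role. For example, a consumer that has just popped an item signals to announce free space, but the signal wakes another consumer even though the queue is still empty. That consumer re-checks, finds nothing, and goes back to sleep, which is pointless contention. To stay correct, a single-condition design typically has to fall back on pthread_cond_broadcast, which wakes every waiter on each state change.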

Two condition variables split the wait queues and significantly reduce unnecessary wakeups.

Further engineering optimizations include waiter counters and low/high watermark control. These techniques do not change correctness. They reduce system-call frequency and thread-switch overhead.
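A waiter counter can be sketched as follows: the consumer records that it is about to sleep, and the producer skips pthread_cond_signal when nobody is waiting. This is only one possible shape of the idea; `CountedQueue`, `waiting_consumers_`, and `signals_sent_` are hypothetical names, and the queue is left unbounded to keep the example short.

```cpp
#include <pthread.h>
#include <cassert>
#include <queue>

class CountedQueue {
public:
    CountedQueue() {
        pthread_mutex_init(&mutex_, nullptr);
        pthread_cond_init(&not_empty_, nullptr);
    }
    ~CountedQueue() {
        pthread_cond_destroy(&not_empty_);
        pthread_mutex_destroy(&mutex_);
    }
    CountedQueue(const CountedQueue&) = delete;
    CountedQueue& operator=(const CountedQueue&) = delete;

    void Push(int value) {
        pthread_mutex_lock(&mutex_);
        queue_.push(value);
        if (waiting_consumers_ > 0) {  // signal only if someone may be asleep
            pthread_cond_signal(&not_empty_);
            ++signals_sent_;
        }
        pthread_mutex_unlock(&mutex_);
    }

    int Pop() {
        pthread_mutex_lock(&mutex_);
        while (queue_.empty()) {
            ++waiting_consumers_;      // counted under the mutex, before sleeping
            pthread_cond_wait(&not_empty_, &mutex_);
            --waiting_consumers_;
        }
        assert(!queue_.empty());
        int value = queue_.front();
        queue_.pop();
        pthread_mutex_unlock(&mutex_);
        return value;
    }

    // Number of pthread_cond_signal calls actually issued so far.
    int signals_sent() {
        pthread_mutex_lock(&mutex_);
        int n = signals_sent_;
        pthread_mutex_unlock(&mutex_);
        return n;
    }

private:
    pthread_mutex_t mutex_;
    pthread_cond_t not_empty_;
    std::queue<int> queue_;      // guarded by mutex_
    int waiting_consumers_ = 0;  // guarded by mutex_
    int signals_sent_ = 0;       // guarded by mutex_
};
```

The counter is updated only while the mutex is held, so a producer can never observe zero waiters while a consumer is actually blocked. A stale nonzero count merely causes one extra, harmless signal.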

Semaphores and ring buffers are the next step on the performance path

Condition variables are well suited to expressing who should be awakened when state changes.

Semaphores behave more like resource counters. They are a more natural fit for expressing how many slots or tasks are currently available. Once the buffer model moves toward a fixed-capacity array, semaphores often become the better abstraction.

A ring buffer combined with semaphores can count writable slots and readable items separately, allowing production and consumption to advance in parallel on different slots. This reduces the serialization caused by locking the entire queue.

    sem_t data_sem; // Number of items available for consumption
    sem_t room_sem; // Number of empty slots available for writing
    sem_init(&data_sem, 0, 0); // pshared = 0: shared among threads of one process; no items yet
    sem_init(&room_sem, 0, N); // N is the ring buffer capacity: all slots start empty

This initialization upgrades synchronization from boolean state management to resource-count management.
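Building on those two counters, a single-producer/single-consumer ring buffer might look like the sketch below. It assumes exactly one thread calls `ring_put` and one calls `ring_get`, which is why the indices need no mutex; with multiple producers or consumers, each index would need its own lock. `Ring`, `kSlots`, `wait_sem`, and `run_ring` are hypothetical names.

```cpp
#include <pthread.h>
#include <semaphore.h>
#include <cassert>
#include <cerrno>
#include <cstddef>

constexpr std::size_t kSlots = 4;  // hypothetical capacity (the N in the text)

struct Ring {
    int slots[kSlots];
    std::size_t head = 0;  // next slot to read, touched only by the consumer
    std::size_t tail = 0;  // next slot to write, touched only by the producer
    sem_t data_sem;        // number of readable items
    sem_t room_sem;        // number of writable slots
};

// sem_wait can fail with EINTR when a signal handler runs; retry in that case.
static void wait_sem(sem_t* s) {
    while (sem_wait(s) == -1 && errno == EINTR) {
    }
}

void ring_put(Ring* r, int value) {
    wait_sem(&r->room_sem);             // block until a slot is free
    r->slots[r->tail] = value;
    r->tail = (r->tail + 1) % kSlots;
    sem_post(&r->data_sem);             // publish one more item
}

int ring_get(Ring* r) {
    wait_sem(&r->data_sem);             // block until an item exists
    int value = r->slots[r->head];
    r->head = (r->head + 1) % kSlots;
    sem_post(&r->room_sem);             // hand the slot back
    return value;
}

struct ProducerArgs {
    Ring* ring;
    int count;
};

static void* produce(void* arg) {
    ProducerArgs* a = static_cast<ProducerArgs*>(arg);
    for (int i = 1; i <= a->count; ++i) ring_put(a->ring, i);
    return nullptr;
}

// One producer writes 1..count; the caller consumes and returns the sum.
long run_ring(int count) {
    Ring r;
    sem_init(&r.data_sem, 0, 0);
    sem_init(&r.room_sem, 0, kSlots);

    ProducerArgs args{&r, count};
    pthread_t tid;
    int rc = pthread_create(&tid, nullptr, produce, &args);
    assert(rc == 0);
    (void)rc;

    long sum = 0;
    for (int i = 0; i < count; ++i) sum += ring_get(&r);

    pthread_join(tid, nullptr);
    sem_destroy(&r.data_sem);
    sem_destroy(&r.room_sem);
    return sum;
}
```

The producer and consumer never touch the same slot at the same time: `room_sem` keeps the writer from overrunning unread data, and `data_sem` keeps the reader from getting ahead of the writer.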

The conclusions developers should master are very clear

First, mutual exclusion solves safety, while synchronization solves ordering.

Second, the value of pthread_cond_wait lies in atomically releasing the lock and waiting.

Third, you must hold the mutex before waiting on a condition variable, and you must re-check the condition after the wait returns.

Fourth, the producer–consumer model is the most important synchronization template.

Once you fully internalize these principles, thread pools, connection pools, and task schedulers become recognizable as variations of the same synchronization ideas applied to different business objects.

FAQ in structured Q&A form

Q1: Why must pthread_cond_wait take a mutex?

Because it must atomically perform “release the lock + enter the wait queue.” If these actions are separated, signals can be lost and the thread may sleep forever.

Q2: Why must the condition check use while instead of if?

Because when wait returns, the condition may still be false. Spurious wakeups or contention may invalidate the condition again. while forces the thread to re-check the state and preserves correctness.

Q3: Why are two condition variables commonly used in the producer–consumer model?

One represents “queue not empty,” and the other represents “queue not full.” This allows precise wakeups for the correct role, reduces false wakeups and useless contention, and performs better than a single-condition-variable design.

AI Readability Summary: This article systematically reconstructs the core concepts of Linux thread synchronization, focusing on condition variables, the coordination model between mutexes and waits, blocking queue implementations, and the producer–consumer pattern. It emphasizes the atomicity of wait, the role of while in defending against spurious wakeups, the efficiency of a two-condition-variable design, and the performance evolution toward semaphores and ring buffers.