RAGFlow v0.25.0 focuses on enterprise-grade RAG platform upgrades, strengthening document parsing, datasource synchronization, Agent publishing, multi-model integration, and security governance to solve stability, compatibility, and operability challenges in production environments. Keywords: RAGFlow, Agent, document parsing.

Technical Specification Snapshot

| Parameter | Details |
|---|---|
| Project Name | RAGFlow |
| Version | v0.25.0 |
| Core Positioning | Open-source RAG and Agent platform |
| Primary Languages | Python, Go, TypeScript (as indicated by module updates) |
| Key Capabilities | Document parsing, datasource synchronization, Agent orchestration, multi-model integration |
| License | Open-source project; the original material does not explicitly list the license |
| GitHub Stars | Not provided in the source material |
| Core Dependencies | Jinja2 SandboxedEnvironment, OCR parsing pipeline, Redis, SQLite, MySQL, PostgreSQL, OceanBase |

This release is fundamentally a platform-layer refactor

RAGFlow v0.25.0 is not a one-off feature patch. It is a systematic upgrade that spans ingestion, parsing, inference, execution, and governance. It pushes the RAG platform from merely usable to production-ready.

For teams, the most immediate value appears in three areas: document ingestion into the knowledge base is more reliable, Agent execution is more secure, and model and storage backend choices are more flexible. Together, these changes significantly improve confidence in enterprise adoption.

```bash
# Clone the project source code
git clone https://github.com/infiniflow/ragflow.git
cd ragflow

# Inspect the version tag
git tag | grep v0.25.0
```

These commands help you locate the source code and version tag so you can review the exact changes alongside the release notes.

The parsing pipeline has evolved from an import utility into a tunable system

The most notable upgrade in this release appears in the ingestion pipeline. The project adds seven built-in templates and aligns them with native parsers, which means different document types can finally be handled with more granular strategies.

More importantly, preprocess capabilities are now formally integrated into pipeline configuration. Preprocessing is no longer just a UI button. It is now part of a parameterized and extensible pipeline system, making it suitable for cleaning, normalization, and pre-parse correction.

The new parsing pipeline capabilities address three categories of problems

The first category is parsing accuracy, including markdown parser fixes, additional Word parser documentation, and VLM parsing toggle control. The second category is configuration flexibility, such as support for the ONE chunking method and preprocess parameters. The third category is maintainability, including parser log display fixes and template refactoring.

This indicates that RAGFlow is transforming chunking and parsing from a black-box workflow into an observable and controllable pipeline.

```yaml
pipeline:
  parser: docx                # Specify the document parser
  preprocess:
    enabled: true             # Enable the preprocessing stage
    normalize_heading: true   # Normalize heading structure
  chunking:
    method: one               # Use a single chunking strategy
```

This configuration shows the pipeline-first design emphasized in v0.25.0: preprocessing, parsing, and chunking are modeled explicitly.
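
To make the staged design more concrete, here is a minimal Python sketch of a preprocess step followed by a pluggable chunking step. Everything in it is an assumption for illustration: `PipelineConfig`, `normalize_headings`, `CHUNKERS`, and `run_pipeline` are hypothetical names, not RAGFlow's API, and the sketch assumes the ONE method means the whole document becomes a single chunk.

```python
import re
from dataclasses import dataclass

# Matches a Markdown-style heading: 1-6 '#' characters, then the title text.
HEADING = re.compile(r"^(#{1,6})(?!#)\s*(\S.*?)\s*$")


@dataclass
class PipelineConfig:
    """Hypothetical settings object; not RAGFlow's real schema."""
    normalize_heading: bool = True
    chunk_method: str = "one"


def normalize_headings(text: str) -> str:
    """Preprocess step: rewrite headings as '<hashes> <title>' with one space."""
    lines = []
    for line in text.splitlines():
        match = HEADING.match(line)
        lines.append(f"{match.group(1)} {match.group(2)}" if match else line.rstrip())
    return "\n".join(lines)


def chunk_one(text: str) -> list:
    """Assumed meaning of the ONE method: the whole document is one chunk."""
    return [text]


def chunk_by_paragraph(text: str) -> list:
    """Alternative strategy for comparison: split on blank lines."""
    return [part for part in text.split("\n\n") if part.strip()]


CHUNKERS = {"one": chunk_one, "paragraph": chunk_by_paragraph}


def run_pipeline(raw: str, config: PipelineConfig) -> list:
    """Run preprocess and chunking as explicit, separately configurable stages."""
    text = normalize_headings(raw) if config.normalize_heading else raw
    return CHUNKERS[config.chunk_method](text)
```

Because each stage is an ordinary function selected by configuration, swapping `chunk_method` or disabling `normalize_heading` changes behavior without touching the other stages, which is the property the pipeline-first design is aiming for.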

Datasource capabilities are beginning to support continuous synchronization scenarios

New datasource support for Seafile, RSS, and DingTalk AI Sheet signals that RAGFlow is moving beyond file uploads toward collaborative platforms and subscription-based content ingestion. For enterprise knowledge bases, this is more important than one-time import.

Even more valuable is support for synchronizing deleted files. Many knowledge base systems previously handled only incremental additions and could not detect source-side deletions, which led to stale or polluted indexes. Now, deletions at the source can be synchronized into the knowledge base, substantially improving data consistency.

Incremental sync and file governance are being strengthened together

Updates across Google Drive, Jira, MySQL, PostgreSQL, and WebDAVConnector show that connector development is moving from “can connect” to “can run reliably over time.” Re-chunking, file type validation, folder uploads, and file list API refactoring all follow this direction.

```python
def sync_datasource(event):
    if event.deleted:
        remove_from_index(event.file_id)  # Clean up the index after a source-side deletion
    else:
        chunks = re_chunk(event.content)  # Re-chunk after content changes
        upsert_chunks(chunks)  # Write back to the knowledge base
```

This pseudocode captures the updated synchronization logic: the system must handle not only additions, but also deletions and re-chunking.
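
The same logic can be written as a self-contained, runnable sketch. It assumes the knowledge base is a plain in-memory dictionary and that source-side changes are detected by comparing two listings of the source. `SyncEvent`, `diff_snapshots`, and the fixed-width `re_chunk` are hypothetical stand-ins for illustration, not RAGFlow's connector code.

```python
from dataclasses import dataclass


@dataclass
class SyncEvent:
    file_id: str
    content: str = ""
    deleted: bool = False


def re_chunk(content: str, size: int = 20) -> list:
    """Toy fixed-width chunker standing in for a real chunking method."""
    return [content[i:i + size] for i in range(0, len(content), size)]


def sync_datasource(index: dict, event: SyncEvent) -> None:
    """Apply one source-side change to the in-memory index."""
    if event.deleted:
        index.pop(event.file_id, None)  # Idempotent removal of stale chunks
    else:
        index[event.file_id] = re_chunk(event.content)  # Replace old chunks


def diff_snapshots(previous: dict, current: dict) -> list:
    """Compare two {file_id: content} listings and emit the resulting events."""
    events = [SyncEvent(fid, deleted=True)
              for fid in sorted(previous.keys() - current.keys())]
    events += [SyncEvent(fid, content)
               for fid, content in current.items()
               if previous.get(fid) != content]
    return events
```

Running `diff_snapshots` on consecutive listings and feeding each event to `sync_datasource` keeps the index aligned with the source: files that disappear are removed, and files whose content changed are re-chunked rather than appended to.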

Document parsing optimization now focuses on large-file stability

The key change for DOCX parsing is lazy image loading: images are loaded only when they are needed rather than all at once. This reduces peak memory usage without changing the parsed output and makes large documents easier to handle, especially image-heavy Word and Excel files.
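
As a rough illustration of why lazy loading lowers peak memory, the sketch below reads embedded images from a DOCX file one at a time with a generator. It relies only on the fact that a DOCX file is a ZIP archive with media stored under `word/media/`. The function name `iter_docx_images` is hypothetical, and this is not a description of how RAGFlow implements the feature.

```python
import zipfile
from typing import BinaryIO, Iterator, Tuple, Union


def iter_docx_images(docx: Union[str, BinaryIO]) -> Iterator[Tuple[str, bytes]]:
    """Yield (archive_name, image_bytes) pairs, loading one image per step."""
    with zipfile.ZipFile(docx) as archive:
        for name in archive.namelist():
            if name.startswith("word/media/"):
                # Bytes are read only when the caller asks for the next item,
                # so at most one image is held by this function at a time.
                yield name, archive.read(name)
```

A caller that processes each image and discards it before requesting the next one never holds the full set in memory, whereas building a list of all images up front would scale peak memory with the total image size.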

At the same time, PDF, OCR, and HTML/Markdown parsing all receive targeted fixes, including automatic OCR fallback for garbled PDFs, corrected absolute page indexing, fixed duplicate table extraction, and state recovery after transient errors.
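
The release notes do not say how garbled PDF text is detected, so the following is only one plausible sketch of an OCR fallback. It assumes a simple heuristic: if too many characters in the extracted text layer are replacement, private-use, unassigned, or control characters, the text layer is discarded and OCR is used instead. The names `garbled_ratio` and `extract_page_text`, and the `0.3` threshold, are assumptions.

```python
import unicodedata
from typing import Callable

SUSPECT_CATEGORIES = {"Co", "Cn", "Cc"}  # private-use, unassigned, control


def garbled_ratio(text: str) -> float:
    """Return the share of non-whitespace characters that look like debris."""
    chars = [c for c in text if not c.isspace()]
    if not chars:
        return 1.0  # An empty text layer is treated as unusable
    bad = sum(
        1 for c in chars
        if c == "\ufffd" or unicodedata.category(c) in SUSPECT_CATEGORIES
    )
    return bad / len(chars)


def extract_page_text(text_layer: str,
                      run_ocr: Callable[[], str],
                      threshold: float = 0.3) -> str:
    """Prefer the embedded text layer; fall back to OCR when it looks garbled."""
    if garbled_ratio(text_layer) > threshold:
        return run_ocr()  # OCR is invoked lazily, only when needed
    return text_layer
```

Passing OCR as a callable keeps the expensive path off the common case: clean pages return immediately, and only pages that fail the heuristic pay the OCR cost.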

AI Visual Insight: This image shows the version banner area on the RAGFlow release page. Its main purpose is to reinforce version identity and release context. For technical readers, it signals that this article focuses on a version-level update rather than a single-feature tutorial.

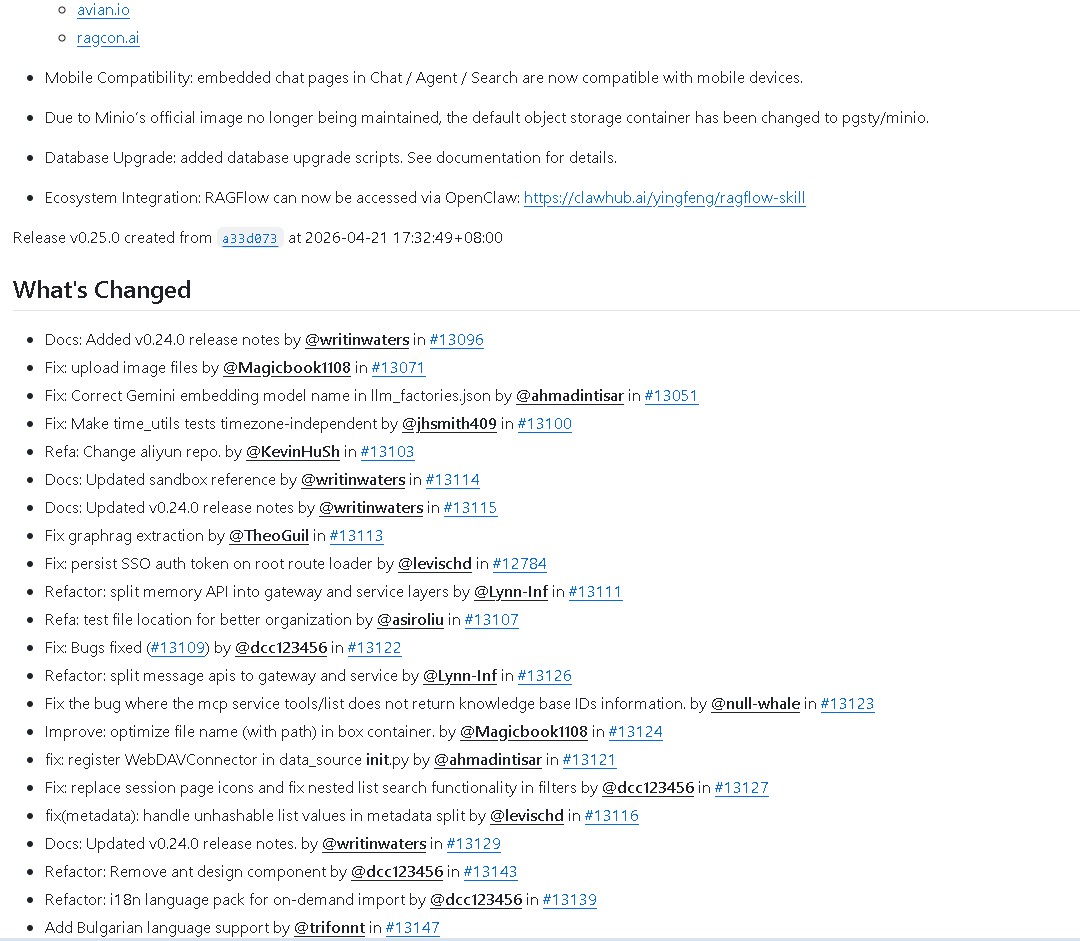

AI Visual Insight: This image reflects the article’s mid-page visual content, typically used to present the release update interface or a feature overview. It emphasizes the broad scope of this release across parsing, Agents, models, and UI subsystems.

AI Visual Insight: This image corresponds to another visual from the release notes. Technically, it serves as a supplementary visual anchor for the release, helping readers understand the density of module updates and the idea of parallel evolution across multiple subsystems.

The Agent module is beginning to support a complete release lifecycle

Agent functionality is another major theme in v0.25.0. The addition of agent publishing capability and published agent version control means Agents are no longer just experimental artifacts. They are becoming deployable, traceable, and rollback-ready application units.

At the same time, embedded page compatibility, mobile adaptation, log export, user_id tracking, and citation display fixes complete the workflow from development to production to audit.
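
To show what "deployable, traceable, and rollback-ready" can mean in practice, here is a minimal sketch of a published-agent record with immutable versions and a movable "live" pointer. The classes `AgentVersion` and `PublishedAgent` are hypothetical and only model the idea; the source does not describe RAGFlow's actual data model for published agents.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentVersion:
    number: int
    definition: dict  # Snapshot of the agent's configuration at publish time


@dataclass
class PublishedAgent:
    name: str
    versions: list = field(default_factory=list)
    live: int = 0  # 0 means nothing has been published yet

    def publish(self, definition: dict) -> int:
        """Store a new snapshot and make it the live version."""
        version = AgentVersion(len(self.versions) + 1, dict(definition))
        self.versions.append(version)
        self.live = version.number
        return version.number

    def rollback(self, number: int) -> None:
        """Point the live marker at an earlier version without deleting history."""
        if not 1 <= number <= len(self.versions):
            raise ValueError(f"unknown version {number}")
        self.live = number

    def current(self) -> dict:
        """Return the definition that is currently being served."""
        if self.live == 0:
            raise LookupError("agent has no published version")
        return self.versions[self.live - 1].definition
```

Keeping every published snapshot and moving only the `live` pointer is what makes rollback cheap and auditable: history is never rewritten, and it is always clear which version served a given request.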

Sandboxed execution and data analysis templates raise the ceiling for executable Agents

The addition of sandboxed code execution and chart generation allows Agents to handle more complex data analysis tasks. With the introduction of a Data Analysis Agent template, RAGFlow is no longer limited to question-answering knowledge assistants. It is evolving toward executable intelligent agents.

The introduction of Jinja2 SandboxedEnvironment is especially important. It directly constrains template rendering risk and serves as a core mechanism in this release’s security hardening.

```python
from jinja2.sandbox import SandboxedEnvironment

env = SandboxedEnvironment()  # Use a sandboxed environment to restrict template execution
template = env.from_string("{{ user_query }}")  # Safely render user input
result = template.render(user_query="sales trend")
```

This code shows why Agent template rendering is safer in the new version: it runs in a restricted environment by default.

Multilingual, RTL, and multi-model support demonstrate global readiness

At the UI layer, RAGFlow adds Arabic, Bulgarian, and Turkish, along with RTL layout support. This is not a simple translation pass. It requires systematic changes to the layout engine, component copy, and language pack loading.

At the model layer, the platform adds support for Claude, ZhipuAI, Mistral, Yandex, Jina embeddings, Qwen3, GPT-4.1, GPT-4o-mini, DeepSeek OCR, and nv-embed, significantly improving model pluggability.
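
Model pluggability is easiest to picture as a lookup from task to model. The sketch below is a hypothetical registry, not RAGFlow's configuration format: `ModelChoice` and `ModelRegistry` are invented names, and the provider and model strings in the usage note are placeholder labels based on the names listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelChoice:
    provider: str
    model: str


class ModelRegistry:
    """Maps a task name (for example 'embedding' or 'ocr') to one model."""

    def __init__(self) -> None:
        self._by_task = {}

    def register(self, task: str, choice: ModelChoice) -> None:
        """Bind or rebind a task to a model without touching other tasks."""
        self._by_task[task] = choice

    def resolve(self, task: str) -> ModelChoice:
        """Look up the model for a task, failing loudly if none is configured."""
        if task not in self._by_task:
            raise KeyError(f"no model registered for task '{task}'")
        return self._by_task[task]
```

For example, `registry.register("ocr", ModelChoice("deepseek", "deepseek-ocr"))` followed by `registry.resolve("ocr")` returns that choice, and swapping the OCR model later is a single `register` call that leaves the embedding and chat models untouched.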

Storage, API, and security fixes jointly support enterprise deployment

Support for OceanBase, SQLite batch updates, Redis locks, Redis configuration isolation, and exposed incremental sync fields shows that the underlying system is preparing for high concurrency and complex deployment environments. Refactoring across the API, CLI, and Go services further indicates that the platform architecture is still converging toward a cleaner design.

On the security side, stricter file type and URL validation, restricted template rendering, tighter sandbox behavior controls, and fixes for permissions and access control are all baseline capabilities required before enterprise rollout.
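
File type and URL validation of this kind can be sketched with the Python standard library. The allow-list, the function names `is_allowed_file` and `is_safe_url`, and the specific rules are assumptions for illustration; the source does not specify which checks RAGFlow applies.

```python
import ipaddress
from pathlib import PurePosixPath
from urllib.parse import urlsplit

# Assumed allow-list; a real deployment would define its own.
ALLOWED_EXTENSIONS = {".pdf", ".docx", ".xlsx", ".md", ".html"}


def is_allowed_file(filename: str) -> bool:
    """Accept a file only if its final extension is on the allow-list."""
    return PurePosixPath(filename).suffix.lower() in ALLOWED_EXTENSIONS


def is_safe_url(url: str) -> bool:
    """Reject non-HTTP schemes and literal private or loopback addresses."""
    try:
        parts = urlsplit(url)
        host = parts.hostname
    except ValueError:
        return False  # Malformed URL, for example a broken IPv6 literal
    if parts.scheme not in {"http", "https"} or not host:
        return False
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        # Host is a name, not an IP literal. This sketch does not resolve DNS,
        # so a stricter check would also validate the resolved addresses.
        return host != "localhost"
    return not (address.is_private or address.is_loopback or address.is_link_local)
```

An allow-list is used for extensions because it fails closed: an unknown file type is rejected by default. The URL check targets the common case of a datasource URL pointing at internal services, and the comment marks the DNS gap that a production check would need to close.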

```json
{
  "focus": ["sandbox security", "file validation", "access control"],
  "impact": "reduce risk in production RAG and Agent workloads"
}
```

This structured summary captures the primary focus areas and practical value of the security upgrades in this release.

This update deserves close attention from three kinds of teams

The first group is teams building enterprise knowledge bases. They will directly benefit from improvements in the parsing pipeline, datasource synchronization, and OCR fallback. The second group is teams delivering Agent-based applications. They will care most about publishing, version control, and sandboxed execution. The third group is teams deploying across regions. They will focus on multilingual support, RTL layouts, and multi-model compatibility.

From a product evolution perspective, the strongest signal in v0.25.0 is not how many features were added. It is that RAGFlow is moving toward a complete platform: ingestion, indexing, execution, auditing, security, and deployment are gradually forming an end-to-end closed loop.

FAQ

What capabilities in RAGFlow v0.25.0 should you validate first?

Prioritize the ingestion pipeline, datasource deletion synchronization, and Agent sandboxed execution. These three areas have the most direct impact on knowledge correctness, runtime security, and production stability.

Why is this release considered more suitable for enterprise deployment?

Because it simultaneously strengthens continuous datasource synchronization, database backend compatibility, Redis concurrency control, access control fixes, and template sandbox security. These are all hard requirements in enterprise environments.

What is the practical value of multi-model support in a RAG system?

It allows teams to choose vectorization, reranking, OCR, and inference models by task. Developers can make more precise trade-offs across cost, quality, language capability, and deployment environment.

Core Summary: RAGFlow v0.25.0 is a major platform-oriented release covering parsing pipelines, datasource integration, Agent publishing and sandboxed execution, multilingual UI support, expanded model ecosystem support, storage backend compatibility, and security fixes. Its main focus is to improve production readiness, enterprise deployment capability, and end-to-end retrieval pipeline integrity.