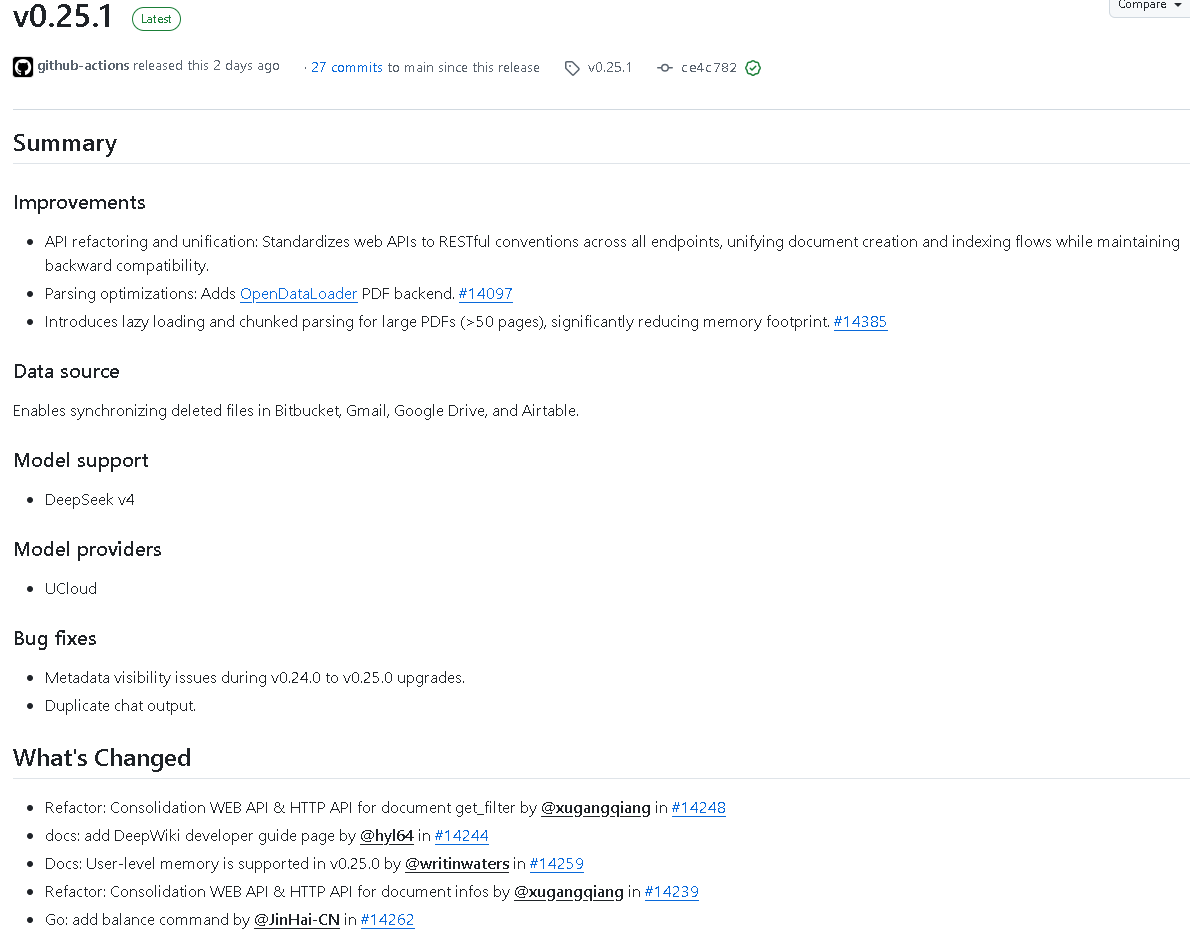

RAGFlow v0.25.1 focuses on three core improvements: API unification, faster PDF parsing, and stronger connector deletion sync. It addresses three common pain points: inconsistent interface design, unstable large-document processing, and unsynchronized deletions from external knowledge sources. Keywords: RAGFlow, PDF parsing, REST API.

Technical Specifications Snapshot

| Parameter | Details |
|---|---|
| Project | infiniflow/ragflow |
| Version | v0.25.1 |
| Primary Languages | Python, Go |
| Interface Protocols | HTTP / RESTful API / SSE |
| Core Focus | API refactoring, document parsing, connector synchronization, model expansion |
| PDF Capabilities | OpenDataLoader backend, lazy loading, chunked parsing |
| Key Dependency Updates | lxml 6.1.0, grpc 1.79.3 |
| Repository | github.com/infiniflow/ragflow |

AI Visual Insight: The image highlights the core summary section of the release page, which typically includes the version number, release date, and high-priority changes. It helps you quickly determine whether this iteration is an architectural update rather than a routine patch release.


This release is fundamentally an architectural convergence

RAGFlow v0.25.1 is not a collection of isolated fixes. It is a systematic upgrade centered on two goals: unified API semantics and a more stable document processing pipeline. For teams maintaining private knowledge bases, enterprise retrieval systems, and Agent workflows over the long term, this kind of release often delivers more value than a single new feature.

Three changes matter most. First, APIs for documents, chat, search, chunks, MCP, and Agents continue to move toward a RESTful design. Second, large PDF parsing now uses lazy loading and chunked processing. Third, multiple connectors now support deletion sync, reducing stale data in the knowledge base.

API unification directly reduces secondary development costs

The old problem was usually not a lack of APIs. It was inconsistent naming, routing style, and parameter semantics across APIs. In v0.25.1, document creation, upload-and-parse, metadata updates, deletion, thumbnails, and run operations are being standardized. That makes it easier to normalize SDKs, gateways, auditing, and permission layers.

```text
# The document workflow is converging toward consistent REST routes
GET    /api/v1/documents            # List documents
POST   /api/v1/documents            # Create a document
POST   /api/v1/documents/upload     # Upload and parse a document
PATCH  /api/v1/documents/{id}/meta  # Update metadata
DELETE /api/v1/documents/{id}       # Delete a document
```

This example shows the core direction of v0.25.1: consolidating fragmented endpoints into predictable resource-oriented APIs.
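To make the routes concrete, here is a minimal client-side sketch that builds requests for them without sending anything. The base URL, the bearer-token header, the `build_request` helper, and the metadata payload shape are all assumptions for illustration; confirm exact paths, payloads, and authentication against the v0.25.1 API reference.

```python
import json
import urllib.request

BASE_URL = "http://ragflow.example.com"  # assumption: replace with your deployment
API_KEY = "YOUR_API_KEY"                 # placeholder credential


def build_request(method, path, payload=None):
    """Build (but do not send) a request for a resource-oriented route."""
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    headers = {"Authorization": f"Bearer {API_KEY}"}  # assumed auth scheme
    if data is not None:
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(
        BASE_URL + path, data=data, headers=headers, method=method
    )


doc_id = "doc-123"  # hypothetical document ID
requests_to_send = [
    build_request("GET", "/api/v1/documents"),
    # Hypothetical payload shape for the metadata update
    build_request("PATCH", f"/api/v1/documents/{doc_id}/meta", {"meta": {"team": "legal"}}),
    build_request("DELETE", f"/api/v1/documents/{doc_id}"),
]

for req in requests_to_send:
    print(req.get_method(), req.full_url)
```

Because every route follows the same resource pattern, one helper covers listing, updating, and deleting. That is the practical payoff for SDK and gateway code.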

PDF processing is meaningfully stronger in this release

In RAG systems, PDFs are not attachments. They are often the primary data source. RAGFlow v0.25.1 introduces an OpenDataLoader PDF parser backend and enables lazy loading and chunked parsing for PDFs over 50 pages. The goal is to reduce memory pressure and parsing failures for large files.

More importantly, the release fixes failures in PDFs over 300 pages that were caused by a hardcoded page-limit constraint. This shows that the team is not only improving performance, but also removing hidden upper bounds that affect production reliability.
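The idea behind lazy, chunked parsing can be sketched with a generator that yields page ranges on demand instead of materializing the whole document. This is a conceptual illustration, not RAGFlow's internal implementation: the `iter_page_chunks` name and the chunk size of 50 are assumptions (the source only states that the behavior applies to PDFs over 50 pages).

```python
from typing import Iterator, Tuple


def iter_page_chunks(total_pages: int, chunk_size: int = 50) -> Iterator[Tuple[int, int]]:
    """Lazily yield 1-based, inclusive (start, end) page ranges."""
    if total_pages < 0 or chunk_size <= 0:
        raise ValueError("total_pages must be >= 0 and chunk_size must be > 0")
    for start in range(1, total_pages + 1, chunk_size):
        # The last range is clipped to the document length
        yield start, min(start + chunk_size - 1, total_pages)


# A 320-page document is processed as independent ranges with no fixed upper bound
chunks = list(iter_page_chunks(320, chunk_size=50))
print(len(chunks), chunks[0], chunks[-1])
```

Since the loop derives its ranges from the actual page count, there is no hardcoded ceiling to trip over. A parser consuming these ranges one at a time only needs memory for the current chunk.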

AI Visual Insight: The image emphasizes the optimization points in the PDF parsing pipeline. These typically map to backend parser switching, paginated loading, chunk-level segmentation strategies, and failure recovery logic, reflecting a shift from one-shot processing to streaming-style ingestion.


The recommended integration strategy for large PDFs has changed

For very long manuals, annual reports, and contract collections, chunked parsing should now take priority over loading the entire file at once. Parsing latency may increase slightly, but the tradeoff is lower peak memory usage and more stable job completion. That tradeoff is especially important for bulk ingestion.

```python
def parse_large_pdf(file_path, pages):
    """Pick a parsing strategy from the page count (illustrative, not RAGFlow's API)."""
    if pages > 50:
        mode = "chunked"  # Enable chunked parsing for large files
        lazy = True       # Avoid loading all pages at once
    else:
        mode = "full"
        lazy = False
    return {"mode": mode, "lazy_loading": lazy}
```

This code reflects the strategic shift in v0.25.1: dynamically choose the parsing path based on document size.

Deletion sync closes a critical consistency gap in knowledge bases

Many RAG projects do not fail at ingestion. They fail when deleted files continue to appear in retrieval results. This release strengthens deleted-file synchronization for Bitbucket, Gmail, Google Drive, Airtable, GitLab, Dropbox, and Discord. That matters a great deal in real enterprise environments.

If a source file has been deleted externally but its vectors and chunks still remain, retrieval results will continue to contaminate generated answers. RAGFlow v0.25.1 also adds pruning of deleted document chunks from retrieval, which means deletion sync now extends into the retrieval pipeline instead of stopping at connector-level state alignment.
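Retrieval-time pruning can be pictured as a filter applied to search hits before they reach the generator. The sketch below assumes each hit is a dict carrying a `doc_id` field and that a set of deleted document IDs is available; both are illustrative assumptions rather than RAGFlow's actual data model.

```python
def prune_deleted_chunks(retrieved_chunks, deleted_doc_ids):
    """Drop chunks whose parent document is known to be deleted, preserving order."""
    deleted = set(deleted_doc_ids)
    return [chunk for chunk in retrieved_chunks if chunk["doc_id"] not in deleted]


# Hypothetical retrieval hits
hits = [
    {"doc_id": "a", "text": "current policy", "score": 0.91},
    {"doc_id": "b", "text": "retired policy", "score": 0.88},
    {"doc_id": "a", "text": "appendix", "score": 0.75},
]

kept = prune_deleted_chunks(hits, deleted_doc_ids=["b"])
print([chunk["text"] for chunk in kept])
```

Filtering at retrieval time acts as a safety net: even if index cleanup lags behind the connector, chunks from deleted documents never reach the prompt.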

Connector upgrades require operations strategies to evolve as well

Once deletion sync is enabled, extend your process from incremental pull alone to three steps: incremental pull, deletion auditing, and retrieval cleanup validation. Otherwise, stale results can persist: the connector registers the deletion, but the index is never cleaned and the cache is never invalidated.

```yaml
sync_policy:
  connector: google_drive
  enable_deleted_file_sync: true   # Enable deletion sync
  prune_retrieval_chunks: true     # Remove residual chunks from retrieval
  skip_unsupported_files: true     # Skip unsupported files
```

This configuration example shows the shift in connector synchronization from “only ingest new content” to full lifecycle consistency management.
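A deletion audit can be as simple as a set comparison between what the source currently holds and what the index still contains. The `audit_deletions` helper and the ID lists below are hypothetical; in practice you would obtain them from the connector's listing and from your document index.

```python
def audit_deletions(source_ids, indexed_ids):
    """Compare source-side file IDs with indexed document IDs."""
    source, indexed = set(source_ids), set(indexed_ids)
    return {
        # Indexed but gone from the source: candidates for cleanup
        "stale_in_index": sorted(indexed - source),
        # Present in the source but not indexed: candidates for ingestion
        "missing_from_index": sorted(source - indexed),
    }


report = audit_deletions(
    source_ids=["f1", "f2", "f4"],
    indexed_ids=["f1", "f2", "f3"],
)
print(report)
```

A non-empty `stale_in_index` list after a sync run is a signal that deletion propagation or index cleanup needs attention.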

AI Visual Insight: The image typically summarizes newly added providers, fix lists, and module coverage. It helps assess whether the release also affects the model integration layer, retrieval layer, frontend interaction layer, and backend service layer.


The model and server ecosystem continues to expand

RAGFlow v0.25.1 adds or extends support for DeepSeek v4, UCloud, Astraflow, MiniMax, Gitee, SiliconFlow, Aliyun, Google, Volcengine, and Moonshot. This shows that RAGFlow is continuing to improve compatibility in its model abstraction layer.

The value of this expansion is not only that you can connect more models. It also gives enterprises more flexibility to switch providers based on cost, geography, compliance, and inference type. Combined with the refactoring from model type to model class, future model governance should become more stable.

Stability fixes cover the areas most likely to break during upgrades

This release also fixes metadata visibility, duplicate chat outputs, upload-stream truncation, compatibility between search id and _id, user_id retrieval support, GraphRAG entity merge race conditions, and SSRF protection.

For upgrade users, the most important areas to watch are metadata migration, search filter compatibility, and upload pipeline stability. These directly determine whether you will run into hidden issues after the upgrade, such as “search returns results but not complete metadata” or “upload succeeds but content is corrupted.”
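While validating search compatibility, client code that reads search hits can defensively accept either identifier key. This is a client-side guard written under the assumption that hits are dicts exposing `id` or `_id`; it is not the fix shipped in the release, and `get_doc_id` is a hypothetical helper.

```python
def get_doc_id(hit):
    """Return the document identifier from a hit that uses either 'id' or '_id'."""
    for key in ("id", "_id"):
        value = hit.get(key)
        if value:
            return value
    raise KeyError("hit has neither 'id' nor '_id'")


# Hypothetical hits in both shapes
print(get_doc_id({"id": "doc-1", "score": 0.9}))
print(get_doc_id({"_id": "doc-2", "score": 0.8}))
```

Raising on a missing identifier surfaces malformed hits during regression testing instead of letting them pass silently into downstream code.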

Upgrade guidance for v0.25.1 should be more engineering-driven

If you are deploying from scratch, validate API routes, large PDF samples, and connector deletion sync first. If you are upgrading from v0.24.x or v0.25.0, focus on metadata config, dataset page rendering, search message with user_id, and GraphRAG-related tasks.

Run a small regression first, then shift traffic in batches. Historical datasets deserve special attention. You should verify that metadata parsing and filtering behavior still match expectations from the previous version.

```python
checklist = [
    "Validate REST API route compatibility",          # Check whether legacy endpoints still work
    "Sample-test 50-page and 300+ page PDFs",         # Verify large-file parsing stability
    "Inspect retrieval results after deletion sync",  # Confirm stale chunks are removed
    "Regression-test metadata and user_id search",    # Avoid query anomalies after upgrade
]

for item in checklist:
    print(item)
```

This script compresses upgrade validation into four high-value checks.
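The metadata regression check can be automated by snapshotting per-document metadata before and after the upgrade and diffing the two. The sketch below assumes each snapshot is a dict mapping document IDs to metadata dicts; how you export those snapshots depends on your deployment, and `diff_metadata` is a hypothetical helper.

```python
def diff_metadata(before, after):
    """Return documents whose metadata differs between two snapshots."""
    changed = {}
    for doc_id in sorted(set(before) | set(after)):
        old, new = before.get(doc_id), after.get(doc_id)
        if old != new:
            changed[doc_id] = {"before": old, "after": new}
    return changed


# Hypothetical snapshots taken before and after the upgrade
before = {"d1": {"author": "kim"}, "d2": {"year": 2023}}
after = {"d1": {"author": "kim"}, "d2": {}}

print(diff_metadata(before, after))
```

An empty result means metadata survived the upgrade unchanged for the sampled documents. Any entry in the result is a concrete document to investigate before shifting more traffic.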

FAQ

Q1: What is the most important upgrade in v0.25.1?

A1: API unification and large PDF parsing optimization. The first reduces integration complexity, and the second directly improves document ingestion reliability in RAG systems.

Q2: Which teams should upgrade as soon as possible?

A2: Teams that depend on large-scale PDF ingestion, need synchronization across multiple connectors, or are integrating Agents or GraphRAG will benefit the most.

Q3: What is easiest to overlook during the upgrade?

A3: Metadata migration, retrieval cleanup after deletion sync, and validation of legacy API compatibility. These three often affect production stability more than the feature changes themselves.

Core summary

RAGFlow v0.25.1 focuses on three priorities: unifying Web/HTTP/REST interfaces, optimizing the large-PDF parsing pipeline, and strengthening deletion sync across multiple connectors. This article distills its API refactoring, OpenDataLoader PDF backend, model expansion, GraphRAG fixes, and upgrade risk considerations.