This article focuses on the dual-source displacement calibration problem in slope warning systems. The core task is to align fiber-optic displacement sensor data with vibrating-wire reference data and build a cross-validated time-varying regression model. It addresses warning distortion caused by heavy noise, abrupt outliers, and nonlinear drift. Keywords: slope warning, LOWESS, multi-source monitoring.

Technical specification snapshot

| Parameter | Details |
|---|---|
| Scenario | 2026 May Day Mathematical Modeling Contest, Problem C: Slope Warning |
| Data Type | Dual-source displacement time series A/B |
| Core Methods | Hampel filtering, linear interpolation, LOWESS, rolling cross-validation |
| Language | Python |
| License | The source page is marked CC BY-SA 4.0 |
| Stars | Not provided |
| Core Dependencies | numpy, pandas, statsmodels, scikit-learn, matplotlib |

This solution targets a real calibration problem in slope monitoring

At its core, this problem is not just about fitting a regression model. It is about recovering a trustworthy displacement reference series under heavy noise, asynchronous sampling, and sensor zero drift. Series A comes from a new fiber-optic displacement sensor, while series B serves as the validated reference baseline.

The modeling goal is to find a mapping function so that the calibrated A series matches B as closely as possible, then use cross-validation to prove that the model generalizes beyond the training set. Compared with static linear fitting, this type of problem is better handled by a local, time-varying, and interpretable nonparametric regression framework.

LOWESS is more suitable than global linear regression

Slope displacement is affected by temperature, construction disturbance, and device drift. In practice, the error is usually not a constant offset. Instead, it changes jointly with time and displacement magnitude. LOWESS uses locally weighted least squares to fit a local linear relationship in each neighborhood, which makes it more stable when absorbing nonlinear bias.
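For reference, the local problem LOWESS solves at a target point $x_0$ can be written as below. This is the standard Cleveland formulation with tricube weights and a local linear model. Here $x_i$ are the A readings, $y_i$ the B readings, and the neighborhood holds roughly $q \approx \text{frac} \cdot n$ points, so `frac` in the code sets $q/n$. statsmodels' `lowess` also reweights the fit over `it` robustifying iterations (3 by default) using bisquare weights on the residuals, which is what dampens isolated spikes in $y$.

$$
(\hat\beta_0, \hat\beta_1) = \arg\min_{\beta_0, \beta_1} \sum_{i=1}^{n} w_i(x_0)\,\bigl(y_i - \beta_0 - \beta_1 (x_i - x_0)\bigr)^2, \qquad \hat y(x_0) = \hat\beta_0
$$

$$
w_i(x_0) = \Bigl(1 - \Bigl|\tfrac{x_i - x_0}{d_q(x_0)}\Bigr|^3\Bigr)^3 \ \text{ if } |x_i - x_0| < d_q(x_0), \qquad w_i(x_0) = 0 \ \text{ otherwise}
$$

Here $d_q(x_0)$ is the distance from $x_0$ to its $q$-th nearest $x_i$. Only points inside that neighborhood influence $\hat y(x_0)$, which is why the fitted curve can bend where the bias changes.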

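```python
import numpy as np
from statsmodels.nonparametric.smoothers_lowess import lowess

def calibrate_lowess(x, y, frac=0.2):
    """Fit a LOWESS curve of reference series B (y) on fiber-optic series A (x)."""
    # x: fiber-optic displacement sensor data (A); y: reference vibrating-wire data (B)
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # frac sets the share of points in each local window; a smaller value gives a more flexible fit
    # return_sorted=False returns the fitted values in the original sample order
    return lowess(endog=y, exog=x, frac=frac, return_sorted=False)

# y_hat = calibrate_lowess(x, y) is series A calibrated onto the scale of B
```

This code uses LOWESS to build a nonlinear calibration mapping from series A to series B. Because A is the only regressor, the fitted curve captures bias that depends on displacement magnitude; drift that depends purely on elapsed time is absorbed only to the extent that it correlates with A.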
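With `return_sorted=False`, the snippet above only returns fitted values at the training points. To calibrate readings that were not part of the fit, such as a later test window, one option is to keep the fitted curve as sorted nodes and interpolate between them. The sketch below assumes linear interpolation between LOWESS nodes; `fit_calibration_curve` and `apply_calibration` are hypothetical helper names.

```python
import numpy as np
from statsmodels.nonparametric.smoothers_lowess import lowess

def fit_calibration_curve(x_train, y_train, frac=0.2):
    """Fit LOWESS of reference B on sensor A and return the curve as sorted, unique nodes."""
    curve = lowess(endog=y_train, exog=x_train, frac=frac, return_sorted=True)
    xs, ys = curve[:, 0], curve[:, 1]
    # Average fitted values at tied x so the nodes are strictly increasing for np.interp
    xs_u, inv = np.unique(xs, return_inverse=True)
    ys_u = np.bincount(inv, weights=ys) / np.bincount(inv)
    return xs_u, ys_u

def apply_calibration(x_new, xs, ys):
    """Map new A readings onto the B scale by linear interpolation between curve nodes.

    Readings outside the training range are clamped to the end nodes (np.interp default).
    """
    return np.interp(np.asarray(x_new, dtype=float), xs, ys)
```

Clamping means a test reading larger than anything seen in training is mapped to the last fitted value. Slope displacement usually grows monotonically, so the training window should cover the displacement range being calibrated.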

Data preprocessing must start with time alignment and anomaly cleaning

Raw monitoring series usually have three common issues: inconsistent sampling timestamps, local spike anomalies, and missing points. If you fit the model directly on the raw data, it may learn measurement noise as if it were a structural relationship, which then distorts downstream warning thresholds.

The first step is time alignment. Resample both sensor streams A and B onto the same time grid. Linear interpolation is sufficient for most engineering monitoring scenarios. The second step is outlier handling. Prefer Hampel filtering over mean filtering because it is more robust to spikes.

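```python
import numpy as np
import pandas as pd

def to_grid(times, values, grid):
    """Interpolate one sensor stream onto a shared time grid, linearly in elapsed time."""
    s = pd.Series(np.asarray(values, dtype=float), index=pd.DatetimeIndex(times)).sort_index()
    # Add the grid timestamps, interpolate across them, then keep only the grid points
    return s.reindex(s.index.union(grid)).interpolate(method="time").reindex(grid)

def align_pair(a_time, a_values, b_time, b_values, freq="1h"):
    """Resample series A and B onto one hourly grid restricted to their overlapping window."""
    a_time, b_time = pd.DatetimeIndex(a_time), pd.DatetimeIndex(b_time)
    t_min = max(a_time.min(), b_time.min()).ceil(freq)
    t_max = min(a_time.max(), b_time.max()).floor(freq)
    idx = pd.date_range(start=t_min, end=t_max, freq=freq)
    x = to_grid(a_time, a_values, idx).to_numpy()  # fiber-optic series A
    y = to_grid(b_time, b_values, idx).to_numpy()  # vibrating-wire reference series B
    return idx, x, y  # x and y feed the downstream calibration model
```

This code aligns the dual-source monitoring series on a common time axis. Reindexing the raw series directly onto the grid would return NaN wherever the original timestamps do not coincide with grid points, so the grid timestamps are merged in before interpolating.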

Hampel filtering is well suited for cleaning abrupt jump points

The core of the Hampel filter is the local median and MAD. It does not rely on a normality assumption and can effectively handle single-point anomalies caused by blasting, electromagnetic interference, or device jitter. For engineering time series such as slope monitoring, robustness matters more than smoothness.
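A minimal Hampel filter sketch follows. A point is flagged when its distance from the centered rolling median exceeds `n_sigmas` × 1.4826 × MAD, where MAD is the median absolute deviation of the same window, and flagged points are replaced by that median. The function name and the defaults `window=7`, `n_sigmas=3.0` are assumptions for illustration, not values taken from the source.

```python
import numpy as np
import pandas as pd

def hampel_filter(series: pd.Series, window: int = 7, n_sigmas: float = 3.0):
    """Hampel filter: replace points far from the local median with that median.

    Returns (cleaned_series, outlier_mask).
    """
    k = 1.4826  # scales MAD to a standard-deviation estimate for Gaussian noise
    roll = series.rolling(window, center=True, min_periods=1)
    med = roll.median()
    # MAD of each window, computed around that window's own median
    mad = roll.apply(lambda w: np.median(np.abs(w - np.median(w))), raw=True)
    outliers = (series - med).abs() > n_sigmas * k * mad
    cleaned = series.where(~outliers, med)
    return cleaned, outliers
```

The 1.4826 factor only makes the threshold readable in "standard deviations"; the median and MAD themselves stay robust whatever the noise distribution, which is why a single blasting spike barely moves them.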

The evaluation framework must reflect generalization instead of single-point fit accuracy

Because the data is sequential, randomly shuffled K-fold validation is inappropriate: it lets the model train on samples recorded after the test samples, leaking future information. A more appropriate approach is rolling time-window cross-validation: train on a historical window and test on the window that immediately follows.
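Rolling-window validation of the LOWESS calibration can be sketched with scikit-learn's `TimeSeriesSplit`, which always places the test indices after the training indices; setting `max_train_size` turns its default expanding window into a sliding one. The helper name `rolling_cv_rmse`, the fold count, and the use of linear interpolation to evaluate the fitted curve at test readings are assumptions of this sketch.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit
from statsmodels.nonparametric.smoothers_lowess import lowess

def rolling_cv_rmse(x, y, n_splits=5, train_size=None, frac=0.2):
    """Rolling-origin evaluation of a LOWESS calibration of B (y) on A (x).

    Each fold trains only on samples that precede its test window in time.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # max_train_size turns the default expanding window into a sliding window of past data
    splitter = TimeSeriesSplit(n_splits=n_splits, max_train_size=train_size)
    scores = []
    for train_idx, test_idx in splitter.split(x):
        # Sorted (x, fitted y) pairs from the training window only
        curve = lowess(endog=y[train_idx], exog=x[train_idx], frac=frac)
        # Evaluate the curve at test readings; np.interp clamps outside the training range
        y_pred = np.interp(x[test_idx], curve[:, 0], curve[:, 1])
        scores.append(float(np.sqrt(np.mean((y[test_idx] - y_pred) ** 2))))
    return np.array(scores)
```

Reporting the per-fold RMSE array, rather than only its mean, shows whether the calibration degrades in later windows, which is the failure mode that matters for drifting sensors.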

The core metrics should include at least RMSE, R², and MAPE. To further suppress overfitting, you can also add AICc or an equivalent degrees-of-freedom constraint. The source material reports test performance of about RMSE = 0.0032 and R² = 0.9987, indicating high calibration accuracy.
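One way to impose the degrees-of-freedom constraint on a smoother such as LOWESS is the improved AIC of Hurvich, Simonoff and Tsai (1998). It assumes a linear smoother, $\hat{\mathbf y} = H\mathbf y$ (LOWESS without robustness iterations has this form), and uses $\operatorname{tr}(H)$ as the effective number of parameters. Smaller values are better, so `frac` can be chosen by minimizing it over a grid of candidates:

$$
\mathrm{AIC}_C = \log \hat\sigma^2 + \frac{1 + \operatorname{tr}(H)/n}{1 - \bigl(\operatorname{tr}(H) + 2\bigr)/n}, \qquad \hat\sigma^2 = \frac{1}{n}\sum_{i=1}^{n} (y_i - \hat y_i)^2
$$

A smaller `frac` lowers $\hat\sigma^2$ but raises $\operatorname{tr}(H)$, so the second term penalizes windows narrow enough to start fitting noise.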

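```python
import numpy as np
from sklearn.metrics import mean_squared_error, r2_score

def calibration_metrics(y_true, y_pred):
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    rmse = np.sqrt(mean_squared_error(y_true, y_pred))  # root mean squared error
    r2 = r2_score(y_true, y_pred)  # coefficient of determination
    # Mean absolute percentage error, skipping samples where the reference reading is zero
    nz = y_true != 0
    mape = np.mean(np.abs((y_true[nz] - y_pred[nz]) / y_true[nz])) * 100
    return rmse, r2, mape
```

This code quantifies the calibration model's error and explanatory power on the test set. MAPE is undefined where the reference reading is exactly zero, so those samples are excluded; displacements close to zero can still inflate it.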

Model outputs must be validated with residual diagnostics to confirm that no structural information is missing

If the residuals still show significant autocorrelation, the model has not truly learned the drift mechanism. In that case, even a low RMSE is not enough to justify operational warning release. In engineering practice, Q-Q plots and the Ljung-Box test are commonly combined for dual validation.

The source material reports p-values greater than 0.05 across multiple lag orders, which suggests that the residual series is close to white noise and that the model has extracted the useful structure reasonably well. For both competition modeling and real engineering deployment, this is stronger evidence than a single accuracy metric.

The figures reveal the gap between a presentation-oriented paper and a reproducible experiment

AI Visual Insight: This figure shows the thumbnail of the paper’s first page or abstract page. It typically contains the research background, problem restatement, and the entry point to the modeling framework. It suggests that the solution decomposes the slope warning task into three layers: data calibration, stage identification, and warning modeling.

AI Visual Insight: This figure appears to show the table of contents or a structural overview. It indicates that the paper is organized by problem sections and emphasizes a complete workflow from data cleaning to algorithm design and result validation, which is well suited for rapid review of competition-style technical reports.

AI Visual Insight: This figure most likely presents displacement time-series curves, fitted curves, or comparison plots. It is useful for observing the local deviation patterns between series A and B and for identifying zero drift, nonlinear stretching, or abrupt change points.

AI Visual Insight: This figure likely shows error analysis, residual distributions, or cross-validation results. Its technical value lies in verifying whether the post-calibration error converges and whether systematic bias still remains.

AI Visual Insight: This figure may correspond to the model formula page, parameter descriptions, or stage segmentation results. It reflects the author’s attempt to formalize empirical slope deformation behavior into computable piecewise or time-varying functions.

AI Visual Insight: This figure may be an integrated visualization page, such as a metric table, stage nodes, or a multi-panel layout. It is suitable for showing the consistency of the model’s final outputs across different dimensions.

AI Visual Insight: This figure may include test samples, tabulated results, or case analysis. Its technical value is that it maps the abstract model onto specific monitoring points and concrete numerical validation.

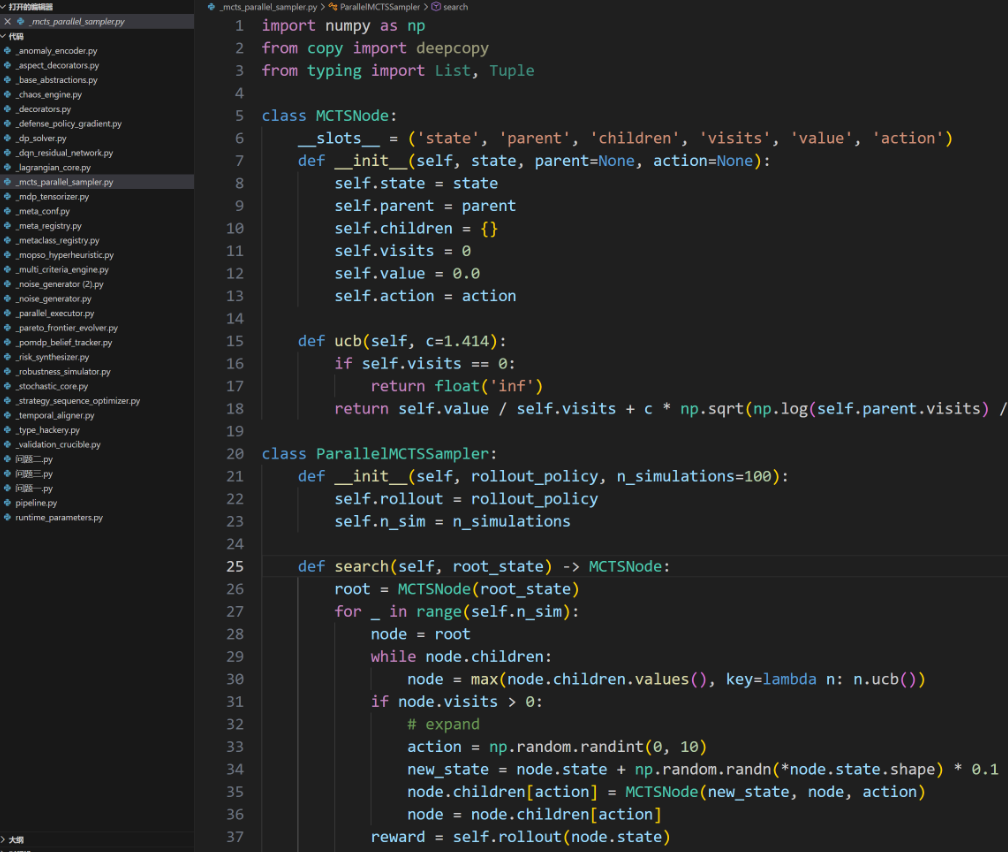

AI Visual Insight: This figure may show an appendix, code structure, or supplementary notes, indicating that the original solution provides not only modeling conclusions but also implementation and reproducibility materials.

A complete slope warning system should extend this work with stage identification

The original article focuses on Problem 1, sensor calibration, but a complete slope warning workflow should also identify the classic three deformation stages: slow, accelerating, and rapid. A practical approach is to compute velocity and acceleration on the calibrated displacement series, then use PELT or Bayesian change-point detection to locate stage transition nodes.
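As an illustration of the PELT option, here is a minimal implementation (Killick et al., 2012) for shifts in mean with a squared-error segment cost, intended to run on a velocity series such as `np.gradient(displacement, dt)`. The function name `pelt_mean` and the penalty value are assumptions; `penalty` is a tuning parameter, and larger values yield fewer change points. Libraries such as `ruptures` provide tested PELT implementations with other cost models.

```python
import numpy as np

def pelt_mean(signal, penalty):
    """Minimal PELT for shifts in mean using a squared-error segment cost.

    Returns the sorted indices where a new segment starts.
    """
    y = np.asarray(signal, dtype=float)
    n = len(y)
    cs = np.concatenate(([0.0], np.cumsum(y)))        # prefix sums give O(1) segment costs
    cs2 = np.concatenate(([0.0], np.cumsum(y ** 2)))

    def cost(s, t):
        # Sum of squared deviations from the mean of y[s:t]
        seg_sum = cs[t] - cs[s]
        return (cs2[t] - cs2[s]) - seg_sum ** 2 / (t - s)

    best = np.empty(n + 1)   # best[t]: optimal penalized cost of y[:t]
    best[0] = -penalty       # so the first segment is not charged a penalty
    last = np.zeros(n + 1, dtype=int)
    candidates = [0]
    for t in range(1, n + 1):
        totals = [best[s] + cost(s, t) + penalty for s in candidates]
        i = int(np.argmin(totals))
        best[t], last[t] = totals[i], candidates[i]
        # PELT pruning: drop start points that can no longer be optimal
        candidates = [s for s, v in zip(candidates, totals) if v - penalty <= best[t]]
        candidates.append(t)
    # Backtrack from the end to recover the change points
    cps, t = [], n
    while t > 0:
        t = last[t]
        if t > 0:
            cps.append(int(t))
    return sorted(cps)
```

A mean-shift cost on velocity matches the stage idea directly: each deformation stage is modeled as a segment with its own typical velocity, and the returned indices are candidate stage transition nodes.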

Once the transition points are identified reliably, you can build proactive warning rules, such as velocity staying above a threshold for several consecutive samples, a sudden rise in acceleration, or simultaneous anomalies in pore pressure and rainfall. At that point, the model evolves from data correction into decision support.
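The first rule type, velocity persistently above a threshold, can be sketched as follows for evenly spaced samples. `velocity_alerts`, `v_threshold` and `min_consecutive` are hypothetical; real thresholds would come from the stage analysis and site-specific criteria, which the source does not specify.

```python
import numpy as np

def velocity_alerts(displacement, dt, v_threshold, min_consecutive=3):
    """Flag samples where velocity has stayed above v_threshold for at least
    min_consecutive consecutive samples (evenly spaced data assumed)."""
    d = np.asarray(displacement, dtype=float)
    v = np.gradient(d, dt)                 # finite-difference velocity
    above = v > v_threshold
    run = np.zeros(len(d), dtype=int)      # length of the current run of exceedances
    for i, flag in enumerate(above):
        if flag:
            run[i] = run[i - 1] + 1 if i > 0 else 1
    return run >= min_consecutive
```

Requiring several consecutive exceedances trades a short detection delay for robustness against single noisy velocity estimates, which finite differencing tends to amplify.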

A practical end-to-end modeling pipeline

- Perform time alignment and fill missing values.

- Apply Hampel filtering to detect and replace outliers.

- Use LOWESS to calibrate A to B.

- Evaluate RMSE, R², and MAPE with rolling validation.

- Run residual white-noise diagnostics.

- Perform change-point detection and design warning thresholds on the calibrated series.

FAQ

Q1: Why not directly use polynomial regression to calibrate A and B?

Polynomial regression can fit nonlinear relationships, but it is still a global function: every coefficient depends on all of the data. High-degree polynomials tend to oscillate near the ends of the data range and cannot adapt to drift that changes over time. LOWESS fits locally, so it is better suited to nonstationary bias in engineering time series.

Q2: What is the advantage of rolling time-window cross-validation over standard K-fold validation?

It preserves temporal causality and prevents future data from leaking into the training set. For monitoring and warning problems, this is a basic requirement for evaluating generalization.

Q3: If calibration accuracy is already very high, can we issue warnings directly?

No. Calibration only makes the monitoring values more trustworthy. Warning release still requires stage identification, threshold design, multi-source factor fusion, and residual stability validation. All of these are necessary.

Core Summary: This article reconstructs the core modeling pipeline for slope warning systems. It focuses on dual-source displacement calibration, LOWESS-based time-varying regression, cross-validation, and residual diagnostics, then distills a high-accuracy warning methodology for multi-source monitoring scenarios while adding figure interpretation, metric snapshots, and a developer-focused FAQ.