This article focuses on three mainstream 3D imaging approaches—Stereo Vision, Structured Light, and ToF—to help developers navigate trade-offs among accuracy, range, interference resistance, and cost. Core takeaway: choose Structured Light for high-precision short-range tasks, ToF for dynamic mid- to long-range sensing, and Stereo Vision for bright outdoor, cost-sensitive deployments. Keywords: 3D Imaging, ToF, Structured Light.

The technical specification snapshot highlights the core differences

| Parameter | Stereo Vision | Structured Light | Time of Flight (ToF) |
|---|---|---|---|
| Core language / algorithm stack | C++ / Python / OpenCV | C++ / FPGA / ISP | C++ / Embedded / ISP |
| Physical principle | Disparity-based triangulation | Encoded projection triangulation | Time-of-flight ranging |
| Primary communication interface | MIPI / USB / GMSL | MIPI / I2C / USB | MIPI / CSI-2 / I2C |
| Typical range | 0.3–25 m | 0.1–10 m | 0.02–50 m |
| Accuracy characteristics | Degrades rapidly with distance | Best millimeter-level accuracy at short range | Stable at mid to long range |
| Environmental adaptability | Strong in bright light, weak on low-texture surfaces | Strong indoors, weak outdoors | More balanced indoors and outdoors |
| Typical core dependencies | OpenCV, stereo matching, calibration | VCSEL, DOE, IR camera | VCSEL, SPAD/APD, TDC/ISP |
| Representative vendors | ZED, DJI | Apple, Orbbec | Sony, Infineon, ST, ADI |
| Open-source activity (approx.) | High | Medium | Medium |

The essential difference among the three 3D imaging approaches is how they obtain depth

Stereo Vision relies on the pixel offset, or disparity, between two cameras observing the same target. Structured Light actively projects a known pattern and then measures how that pattern deforms. ToF directly measures either the round-trip travel time of light or its phase shift. At a fundamental level, these represent passive vision, active triangulation, and active time measurement.

For engineering decisions, start with the scenario and then validate against metrics. If your application requires high precision at short range—such as smartphone face unlock or part scanning—Structured Light is usually the most reliable option. If the target moves quickly or the sensing range must be longer, ToF is often a better fit. If the design is cost-sensitive and must work in strong outdoor light, Stereo Vision is generally more practical.

A minimal selection rule can quickly eliminate the wrong option

```python
# Perform an initial 3D imaging method screening based on project constraints
def select_3d_method(distance_m, need_mm_precision, outdoor, low_cost):
    if need_mm_precision and distance_m <= 3:
        return "Structured Light"  # Prioritize millimeter-level accuracy at short range
    if distance_m >= 5 and not low_cost:
        return "ToF"  # Prioritize mid-/long-range and dynamic scenes
    if outdoor or low_cost:
        return "Stereo Vision"  # Prioritize bright-light environments and cost-sensitive projects
    return "ToF"  # Default to the balanced option
```

This code compresses a complex requirement set into an executable first-pass selection rule.

ToF is better suited to mid- to long-range and dynamic depth sensing

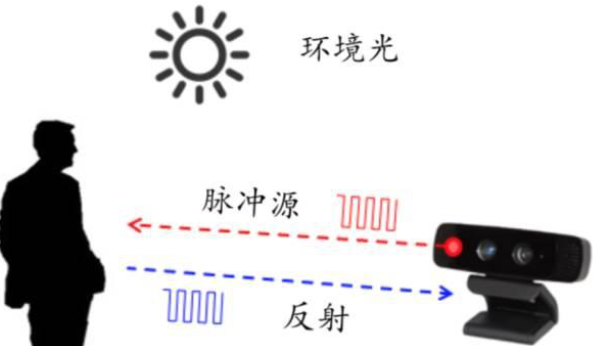

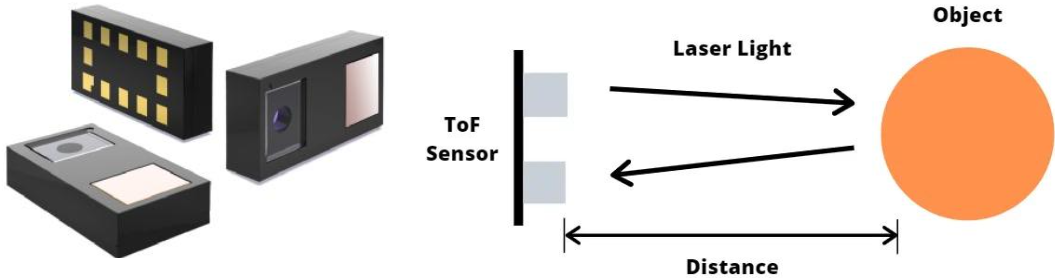

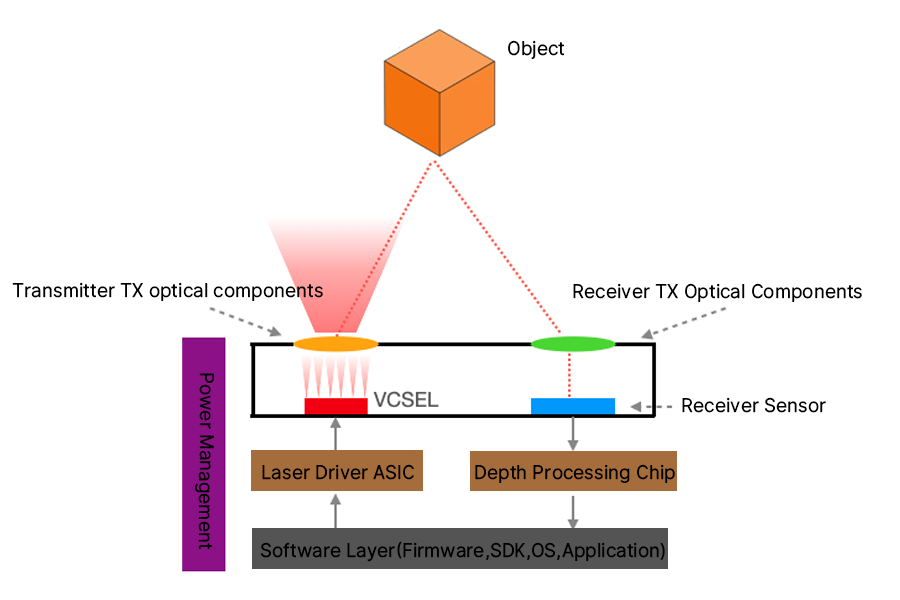

ToF works by emitting infrared light or a laser pulse and then measuring the return time to calculate distance. A typical system includes a transmitter, receiver, power management, and a software stack. The transmitter commonly uses a VCSEL, while the receiver may use SPAD, APD, or a dedicated pixel array.
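
As a rough numerical illustration of the return-time measurement described above, the sketch below converts a round-trip time into distance with d = c·t/2 and inverts the relation to show how fine the timing must be for a given depth resolution. The function names are hypothetical, and the model is idealized: it ignores electronics delay, pulse width, and noise.

```python
# Minimal sketch: round-trip time <-> distance for a pulsed ToF measurement.
# Function names are illustrative and not taken from any vendor SDK.

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def distance_from_round_trip(time_s: float) -> float:
    """Distance to the target given the round-trip travel time in seconds."""
    return SPEED_OF_LIGHT_M_S * time_s / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Round-trip travel time in seconds for a target at distance_m."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_S


if __name__ == "__main__":
    # A 20 ns round trip corresponds to roughly 3 m.
    print(f"{distance_from_round_trip(20e-9):.3f} m")
    # Resolving 1 cm of depth means resolving about 67 ps of round-trip time.
    print(f"{round_trip_for_distance(0.01) * 1e12:.1f} ps")
```

The second figure hints at why the receiver and timing electronics matter so much in a ToF design: centimeter-level depth resolution already implies timing on the order of tens of picoseconds.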

AI Visual Insight: This diagram shows the closed-loop ranging path in which active light is emitted, reflected by the target surface, and captured by the receiver. It emphasizes the timing relationship among emission, reflection, reception, and distance calculation, which is foundational to understanding a ToF system.

AI Visual Insight: This image illustrates the processing pipeline that fuses depth data with 2D imagery. It shows that ToF does more than output a single distance value—it can generate a depth map that feeds into the ISP or higher-level vision algorithms.

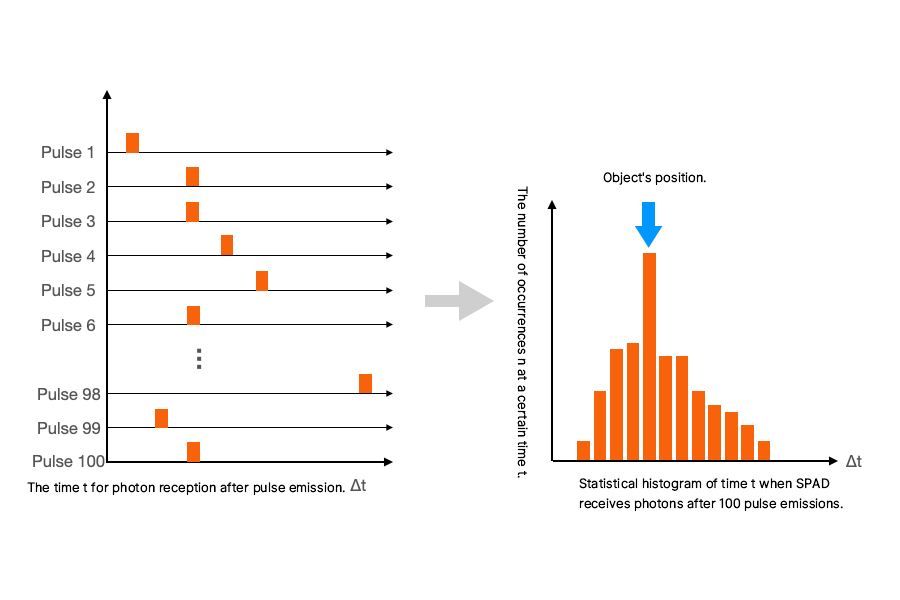

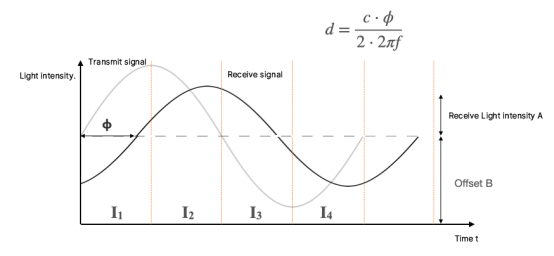

dToF and iToF define the accuracy boundary and industrial maturity of ToF

dToF directly measures photon travel time and typically depends on SPAD and high-precision time-to-digital conversion. It offers strong interference resistance and suits longer-range sensing, but chip design is complex and cost is higher. iToF measures distance by emitting modulated light and solving for phase difference. It is more mature in manufacturing and easier to scale in consumer electronics, but range and accuracy are more constrained.
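
The range constraint of iToF can be illustrated with the usual continuous-wave relations: distance d = c·φ / (4π·f_mod), and an unambiguous range of c / (2·f_mod) before the phase wraps. The sketch below is an idealized model, not a description of any particular sensor; the function names and the example modulation frequencies are assumptions chosen for illustration.

```python
# Minimal sketch: phase shift -> distance for continuous-wave iToF.
# Names and example frequencies are illustrative assumptions.
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def itof_distance(phase_rad: float, mod_freq_hz: float) -> float:
    """Distance implied by a phase shift in [0, 2*pi) at one modulation frequency."""
    return SPEED_OF_LIGHT_M_S * phase_rad / (4.0 * math.pi * mod_freq_hz)


def unambiguous_range(mod_freq_hz: float) -> float:
    """Largest distance measurable before the phase wraps past 2*pi."""
    return SPEED_OF_LIGHT_M_S / (2.0 * mod_freq_hz)


if __name__ == "__main__":
    for freq_hz in (20e6, 100e6):
        print(f"{freq_hz / 1e6:.0f} MHz -> unambiguous range "
              f"{unambiguous_range(freq_hz):.2f} m")
    # A quarter-cycle phase shift at 20 MHz
    print(f"{itof_distance(math.pi / 2, 20e6):.3f} m")
```

A lower modulation frequency extends the unambiguous range, while a higher one gives finer distance resolution per unit of phase, which is one reason range and accuracy pull against each other in iToF.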

AI Visual Insight: This diagram breaks down the key hardware layers of a ToF module, including laser emission, receiving optics, sensor, processing chip, and power subsystem. It makes clear that ToF is not a single chip but a complete optoelectronic system.

AI Visual Insight: This figure uses a photon arrival-time histogram to show how dToF measures distance. The peak position corresponds to target distance and highlights the importance of single-photon counting and time resolution.
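
The histogram idea can be sketched in a few lines. The example below is a simplified illustration rather than a production dToF pipeline: it assumes a uniform bin width, estimates the ambient-light floor with a median, and takes the strongest remaining bin as the echo. The function name, the 100 ps bin width, and the synthetic data are all assumptions.

```python
# Minimal sketch: estimate distance from a photon arrival-time histogram.
# The function name, bin width, and synthetic data are illustrative assumptions.
import random
from statistics import median

SPEED_OF_LIGHT_M_S = 299_792_458.0


def distance_from_histogram(counts, bin_width_s):
    """Return the distance implied by the strongest histogram peak.

    counts[i] is the photon count in time bin i, which covers round-trip
    times from i * bin_width_s up to (i + 1) * bin_width_s.
    """
    floor = median(counts)  # crude estimate of the ambient-light background
    signal = [max(c - floor, 0.0) for c in counts]
    peak = max(range(len(signal)), key=signal.__getitem__)
    round_trip_s = (peak + 0.5) * bin_width_s  # use the bin centre
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


if __name__ == "__main__":
    random.seed(0)
    counts = [random.randint(0, 10) for _ in range(1024)]  # ambient background
    counts[200] += 80  # synthetic echo placed in bin 200
    print(f"{distance_from_histogram(counts, 100e-12):.3f} m")
```

Real systems typically refine the peak position below one bin and handle multiple echoes, which this sketch deliberately leaves out.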

AI Visual Insight: This image shows the phase offset between the transmitted modulation wave and the received echo. It demonstrates that iToF is fundamentally a phase-solving method rather than a direct timing method, which is why it depends heavily on modulation frequency and demodulation quality.

The mainstream ToF supply chain now spans consumer electronics to industrial automation

The industry can be roughly divided into three groups. The first includes consumer-grade module vendors and smartphone solution providers such as Samsung, Infineon, and Sony. The second includes industrial and robotics suppliers such as ADI, TI, and ST. The third includes emerging China-based ToF chip vendors such as Juxin Micro, Guangwei Technology, Lingming Photonics, Orbbec Intelligence, and Xinshi Vision.

Among them, Sony IMX459 targets high-resolution SPAD depth sensing, the Infineon REAL3 series covers VGA-class applications, and the ST VL53L4ED is more focused on single-point and short-range ranging. Solutions such as Lingming Photonics ADS6401, Juxin Micro SIF2610, and OPNOUS OPN8018 show that China-based ToF vendors are iterating quickly toward higher resolution, lower power consumption, and stronger ambient light immunity.

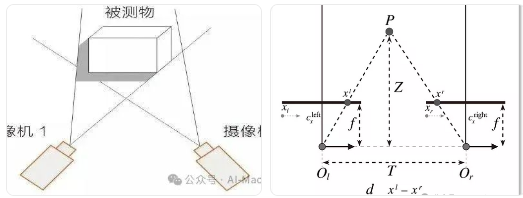

Stereo Vision depends on texture and compute, but it leads in bright light and cost

Stereo Vision uses two synchronized cameras to capture the same scene, matches corresponding points between the left and right images, and then reconstructs depth through triangulation. Because it does not require an active light source, it is more robust under outdoor sunlight and makes bill-of-materials cost easier to control.

AI Visual Insight: This diagram shows the geometric relationship among the left-right camera baseline, focal length, target projection, and disparity. It clearly explains why Stereo Vision depth accuracy drops rapidly as distance increases.

Its limitations are also clear. Textureless surfaces, repetitive patterns, and occlusion boundaries can all cause matching failures. In addition, a larger baseline improves long-range accuracy but increases device size, which becomes a real constraint for drones, automotive systems, and portable devices.
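
The baseline trade-off can be made concrete with the standard rectified-stereo relations Z = f·B / d and ΔZ ≈ Z²·Δd / (f·B), where f is the focal length in pixels, B the baseline, and d the disparity. The sketch below is illustrative only: the 700 px focal length, the quarter-pixel matching error, and the function names are assumptions rather than values from any specific camera.

```python
# Minimal sketch: depth and depth error for an ideal rectified stereo pair.
# Focal length, matching error, and function names are illustrative assumptions.


def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth in meters from disparity in pixels: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a valid match")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_err_px: float = 0.25) -> float:
    """Approximate depth error: dZ ~= Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_err_px / (focal_px * baseline_m)


if __name__ == "__main__":
    focal_px = 700.0  # assumed focal length in pixels
    for baseline_m in (0.06, 0.12):
        for depth_m in (1.0, 5.0, 10.0):
            err_m = depth_error(depth_m, focal_px, baseline_m)
            print(f"B={baseline_m:.2f} m, Z={depth_m:4.1f} m -> error ~ {err_m * 100:.1f} cm")
```

Under these assumptions, doubling the baseline halves the depth error at any given distance, which is the accuracy benefit that has to be weighed against the larger enclosure.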

Stereo Vision implementations usually require calibration and stereo matching

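```python
import cv2
import numpy as np

# Load left and right images as grayscale (assumed to be rectified already)
left = cv2.imread("left.png", cv2.IMREAD_GRAYSCALE)
right = cv2.imread("right.png", cv2.IMREAD_GRAYSCALE)
if left is None or right is None:
    raise FileNotFoundError("left.png or right.png could not be read")

# Create an SGBM matcher
stereo = cv2.StereoSGBM_create(
    minDisparity=0,
    numDisparities=128,  # Disparity search range (must be divisible by 16)
    blockSize=5,  # Matching window size (odd number)
)

# compute() returns fixed-point disparities scaled by 16
disp = stereo.compute(left, right).astype(np.float32) / 16.0

# Approximate depth as inversely proportional to disparity; invalid pixels (disp <= 0) stay 0
valid = disp > 0
depth_proxy = np.zeros_like(disp)
depth_proxy[valid] = 1.0 / disp[valid]
```

This code demonstrates the core computation flow of a stereo system, from the left-right image pair to the disparity map.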

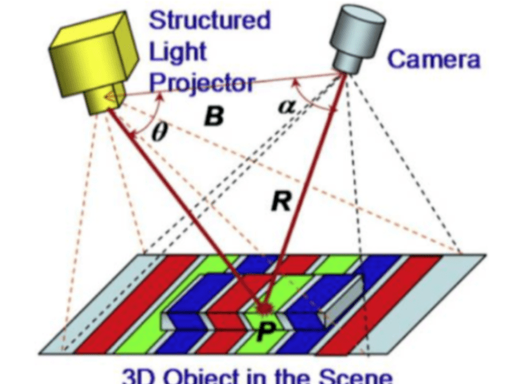

Structured Light is the strongest option for short-range precision reconstruction

Structured Light projects speckle, stripe, or encoded patterns through a VCSEL or projector, then uses a camera to observe pattern distortion and recover depth via triangulation. Because the projected pattern is known in advance, the system can actively add texture to low-texture surfaces. As a result, it usually outperforms Stereo Vision on targets such as white walls, skin, and smooth workpieces.

AI Visual Insight: This figure shows the triangulation geometry among the projector, target surface, and receiving camera. The key idea is that surface height is reconstructed from pattern deformation, enabling high-precision 3D reconstruction.

However, Structured Light depends heavily on the visibility of the projected pattern. Infrared background radiation in sunlight can overwhelm the pattern signal, which makes outdoor usability poor. It is best suited to high-precision identification, Face ID, gesture tracking, and industrial scanning within roughly 0.5 to 3 meters.

A horizontal comparison of the three approaches can be reduced to four dimensions

Environmental adaptability determines whether a system can truly ship

Stereo Vision struggles most with low texture and low light. Structured Light struggles most with strong ambient light. ToF is more vulnerable to multipath reflections, glass, and mirror-like surfaces that introduce ghost artifacts. If the scene includes highly absorptive black materials, reflective metals, or transparent objects, you should also evaluate IR wavelength, optical filter design, and algorithmic compensation.

Accuracy and range have clear boundary conditions

Structured Light is the short-range accuracy leader and commonly delivers millimeter-level precision or better. Stereo Vision can also perform well at short range, but its depth error grows roughly with the square of distance. ToF is more stable beyond 5 meters and is particularly well suited to real-time dynamic ranging and spatial perception.
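
The quadratic behavior of Stereo Vision follows directly from triangulation. A short derivation, assuming an ideal rectified pinhole pair with focal length $f$ (in pixels), baseline $B$, disparity $d$, and a matching error $\Delta d$ (these symbols are introduced here for illustration):

```latex
Z = \frac{fB}{d}
\quad\Rightarrow\quad
\frac{\partial Z}{\partial d} = -\frac{fB}{d^{2}} = -\frac{Z^{2}}{fB}
\quad\Rightarrow\quad
|\Delta Z| \approx \frac{Z^{2}}{fB}\,|\Delta d|
```

For a fixed baseline and matching accuracy, doubling the distance therefore roughly quadruples the depth error.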

Compute, power, and cost are system-level trade-offs

Stereo Vision has the lowest hardware cost but the heaviest algorithms. Structured Light adds a projection module, placing it in the middle for both power and cost. ToF is highly modular and algorithmically simpler, but its high-speed emission and reception chain usually increases both power consumption and chip cost.

```python
comparison = {
    "Stereo Vision": {"Power": "Low", "Compute": "High", "Cost": "Low"},
    "Structured Light": {"Power": "Medium", "Compute": "Medium", "Cost": "Medium to High"},
    "ToF": {"Power": "High", "Compute": "Low", "Cost": "High"},
}

for name, meta in comparison.items():
    print(name, meta)  # Output the system trade-offs of the three approaches
```

This code compresses the engineering constraints of the three approaches into structured data that is easier to use in decision-making.

Application-oriented conclusions matter more than a pile of parameters

If your project involves smartphone facial recognition, smart lock liveness detection, or desktop scanning, prioritize Structured Light. If your project involves warehouse volume measurement, AR spatial mapping, robot obstacle avoidance, or mid-range dynamic tracking, prioritize ToF. If your project involves outdoor navigation, agricultural robots, or low-cost autonomous driving perception front ends, prioritize Stereo Vision.

The selection rule can be remembered in one sentence

Choose Structured Light for short-range high precision, ToF for mid- to long-range high-frame-rate sensing, and Stereo Vision for bright outdoor, low-cost deployment. If the scene is complex and the budget allows it, more systems are adopting fused approaches such as RGB + ToF or Stereo Vision + active illumination to compensate for the weaknesses of any single technology.

FAQ

1. Why do smartphones often use Structured Light instead of Stereo Vision for front-facing 3D face recognition?

Because Structured Light provides high accuracy at close range from 0.3 to 1 meter, works well on low-texture human faces, and can be miniaturized into a compact module suitable for stable facial modeling and liveness detection.

2. Why are robots and AR devices increasingly adopting ToF?

Because ToF can output depth directly, supports high frame rates, offers better robustness in dynamic scenes, and performs better in low-texture environments. That makes it well suited to real-time obstacle avoidance, mapping, and spatial localization.

3. Has Stereo Vision already been replaced by ToF or Structured Light?

No. Stereo Vision still offers clear advantages in strong outdoor light, low BOM cost, no active illumination requirement, and large-scene perception. It remains especially suitable for automotive systems, drones, and low-power edge vision platforms.

Core summary: This article reviews the three mainstream 3D imaging approaches (Stereo Vision, Structured Light, and ToF), covering working principles, key components, dToF/iToF differences, environmental adaptability, cost and compute trade-offs, and representative vendors. It offers a practical decision framework for robotics, AR/VR, smartphone, and autonomous driving projects.