[AI Readability Summary] GPT-Image-2 is reshaping how teams create PPT visuals and infographics. It performs well in Chinese typography, knowledge cards, product posters, and chart mockups, significantly lowering the design barrier. However, it still has limitations, including small-text errors, unstable reproducibility, and non-editable chart output. Keywords: GPT-Image-2, PPT visuals, data visualization

The technical specification snapshot captures the model’s positioning

| Parameter | Details |
|---|---|
| Model/Subject | Hands-on evaluation of the GPT-Image-2 image generation model |
| Primary Capabilities | PPT visuals, knowledge cards, product posters, long-form infographics, data chart mockups |
| Interaction Method | Natural language prompts |
| Inference Characteristics | Single-pass generation with planning and self-check tendencies |
| Language Performance | Significantly improved Chinese text rendering |
| Collaboration Tools | PowerPoint, Excel, G2, ggplot2 |
| Core Dependencies | Image generation models, PPT workflows, visualization libraries |

GPT-Image-2 has already changed how PPT visuals are produced

The original hands-on conclusion is clear: creating PPT visuals is no longer just about searching for assets and manually assembling layouts. GPT-Image-2 behaves more like a generation engine that can directly produce visual drafts, especially for training decks, project updates, product overview slides, and promotional content.

Its key difference from earlier image models is not simply that it can generate images. It also demonstrates a stronger understanding of Chinese text, layout structure, and information organization. The source cites research that frames it as a standalone system closer to “GPT for images” than to a traditional two-stage pipeline.

```text
Input: topic description + style constraints + text content + page objective
Process: model plans composition → generates visuals → checks local errors → iterates fixes
Output: promotional graphics, knowledge cards, or chart mockups suitable for insertion into PPT
```

This workflow explains its core value: it shifts early-stage draft work, which previously required designer involvement, into the prompt design phase.

Knowledge-focused PPT visuals benefit first from the model’s layout understanding

In knowledge-heavy scenarios, users often start with only a course paragraph, a few bullet points, or a training outline. GPT-Image-2 stands out because it can automatically organize that text into presentation-ready information cards, rather than merely producing a decorative image.

For topics such as large language model training workflows, tea production processes, or city travel guides, the model does more than generate illustrations. It also proactively fills in common information structures, such as steps, categories, caveats, and visual hierarchy. That makes it well suited to producing the main visual for each PPT slide.

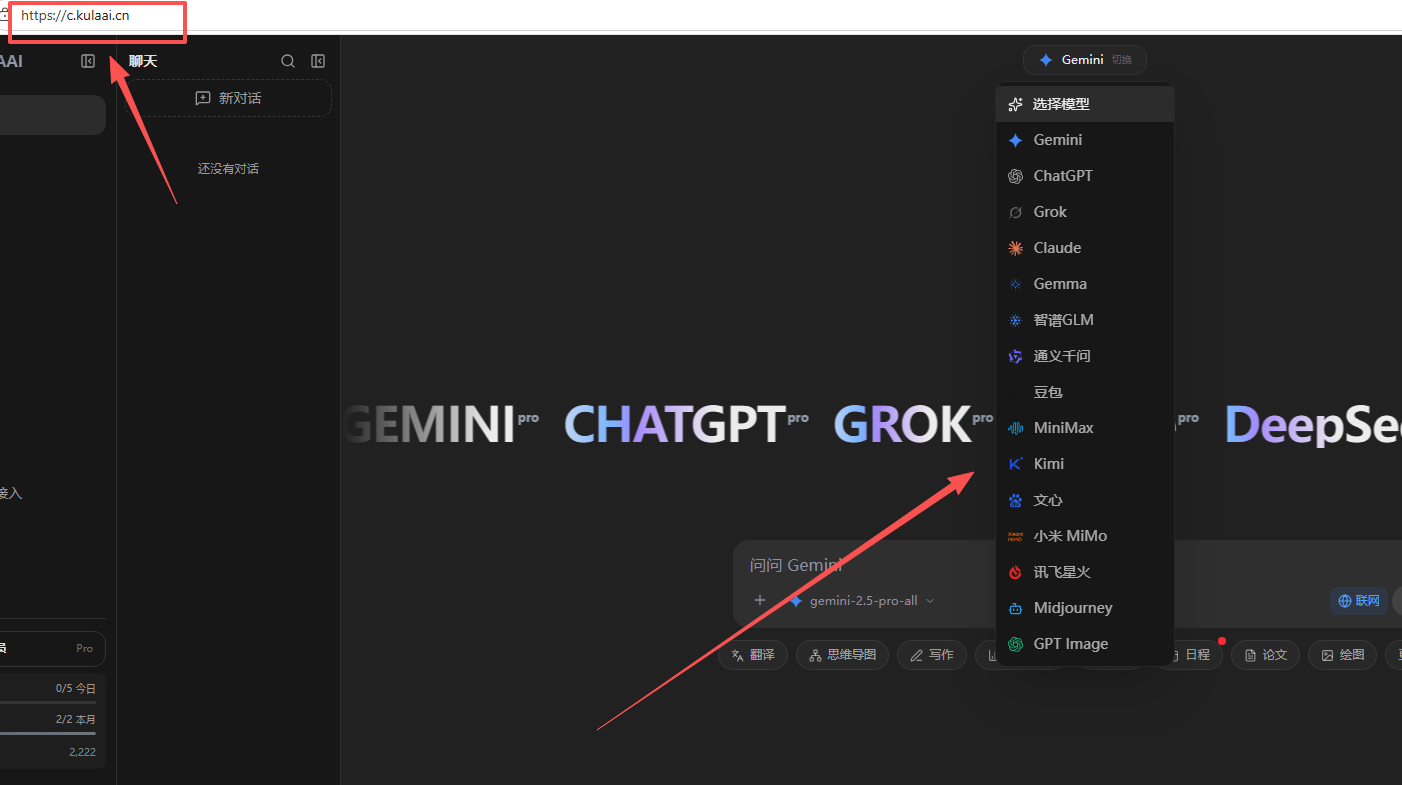

AI Visual Insight: This image shows hands-on examples used by the author to validate GPT-Image-2 output quality, with emphasis on dense Chinese typography, card-based information organization, and multi-module parallel layout capabilities. If the image contains multi-column structures or title-based sections, it usually indicates that the model has already reached relatively strong stability in long-text visual segmentation, hierarchical heading generation, and knowledge-point clustering.

A high-quality prompt matters more than stacking style adjectives

Instead of piling on adjectives like “premium,” “Apple-style,” or “minimalist,” define the page objective clearly. The most effective prompts usually include five elements: subject, purpose, audience, layout, and constraints.

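```python
# Keys and values are the original Chinese prompt; English glosses are in the comments.
prompt = {
    # Topic (define it clearly): "Introduction to the LLM training workflow"
    "主题": "大语言模型训练流程介绍",
    # Purpose (delivery scenario): "Single-slide visual for a training PPT"
    "用途": "用于培训PPT单页配图",
    # Audience: "Product managers and new engineers"
    "受众": "产品经理和研发新人",
    # Style (visual language): "Minimalist, tech feel, clear Chinese typography"
    "风格": "极简、科技感、中文排版清晰",
    # Structure (information framework): "Title + 4 steps + bottom summary"
    "结构": "标题+4个步骤+底部总结",
    # Constraints (reduce text errors): "Avoid small text; make key terms stand out"
    "限制": "避免小字,确保重点词醒目",
}
```

The function of this structured prompt is to turn “generate an image” into “generate a usable page asset.”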
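The five elements named above (subject, purpose, audience, layout, constraints) can be checked before the prompt is sent anywhere. The following is a minimal sketch under assumptions: the helper name `render_prompt`, the English key names, and the one-pair-per-line output format are hypothetical and not taken from the source, and the resulting string would still need to be passed to whichever image tool you use.

```python
# Hypothetical key names for the five elements named in the text.
REQUIRED_KEYS = ["subject", "purpose", "audience", "layout", "constraints"]


def render_prompt(fields: dict[str, str]) -> str:
    """Flatten structured prompt fields into one text prompt.

    Raises ValueError if any required element is missing or blank.
    """
    missing = [key for key in REQUIRED_KEYS if not fields.get(key, "").strip()]
    if missing:
        raise ValueError(f"Missing prompt elements: {missing}")
    # Keep the dict's insertion order; emit one "key: value" pair per line.
    return "\n".join(f"{key}: {value.strip()}" for key, value in fields.items())


example = {
    "subject": "Introduction to the LLM training workflow",
    "purpose": "Single-slide visual for a training PPT",
    "audience": "Product managers and new engineers",
    "layout": "Title + 4 steps + bottom summary",
    "constraints": "Avoid small text; make key terms stand out",
}
prompt_text = render_prompt(example)
print(prompt_text)
```

Failing early on a missing element keeps incomplete prompts from producing decorative but unusable drafts. Optional fields such as style can be added to the dict without changing the check.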

Data chart visualization is usable, but it is not a replacement for professional charting

One of the source article’s most valuable judgments is that it does not overstate AI charting. GPT-Image-2 produces visibly better chart mockups than many traditional AI image tools for common visuals such as trend lines, pie charts, and bar charts, especially in color, spacing, and overall polish.

But its output is still an image, not a data object. That means you cannot continue editing axes, legends, or series values the way you can in Excel, G2, or ggplot2. For formal reporting, this is a critical boundary.

The right approach is AI for visual direction, professional libraries for final delivery

In a real workflow, the recommended approach is to let GPT-Image-2 generate the chart direction first, then recreate it in a professional tool as an editable version. This preserves the aesthetic benefit while preventing data errors from flowing directly into deliverables.

```python
sales = [120, 168, 210, 260]
quarters = ["Q1", "Q2", "Q3", "Q4"]

# Rebuild the chart with a professional library instead of submitting the AI image directly
chart_spec = {
    "x": quarters,  # Horizontal axis: quarters
    "y": sales,  # Vertical axis: sales volume
    "type": "bar",  # Choose a bar chart
    "title": "季度销售趋势",  # "Quarterly sales trend"; the title must be manually verified
    "color": "#4F46E5",  # Keep branding colors consistent
}
```

The core idea in this snippet is straightforward: AI handles inspiration, while production-grade tools handle accurate delivery.
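To hand the verified data to an editable tool, the series behind the chart can be exported in a form that Excel opens directly. This is a minimal sketch, assuming a spec with `x` and `y` keys like the one above; the function name `spec_to_csv` and the column headers are hypothetical, and the chart itself would still be built in Excel, G2, or ggplot2 from this data.

```python
import csv
import io


def spec_to_csv(spec: dict) -> str:
    """Serialize the x/y series of a chart spec as CSV text."""
    x_values, y_values = spec["x"], spec["y"]
    if len(x_values) != len(y_values):
        raise ValueError("x and y must have the same number of points")
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["category", "value"])  # assumed column headers
    writer.writerows(zip(x_values, y_values))
    return buffer.getvalue()


spec = {"x": ["Q1", "Q2", "Q3", "Q4"], "y": [120, 168, 210, 260], "type": "bar"}
csv_text = spec_to_csv(spec)
print(csv_text)
```

The length check catches the most basic transcription error, a missing or extra data point, before the numbers reach a deliverable. When saving the text to a file for Excel, `utf-8-sig` encoding is a common choice so that non-ASCII labels display correctly.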

Product posters and showcase visuals are becoming a high-frequency deployment scenario

Product posters are a strong fit for GPT-Image-2 because this use case values visual persuasion more than strict data editability. The source evaluation shows that even casually shot product photos with poor lighting and cluttered backgrounds can be reconstructed into visuals much closer to commercial poster quality.

This capability is especially effective for e-commerce detail pages, PPT product overview slides, and pitch presentation pages. You can upload a real product photo first, then use a concise prompt to align it with the product’s intended brand tone and quickly generate a consistent promotional visual.

But realism does not equal truth, so review must be part of the workflow

The source also identifies an important risk: the model may automatically invent selling points, brand details, or even product information that does not exist. In industries such as food, hardware, and automotive, this kind of plausible hallucination can lead directly to factual errors.

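```python
def review_ai_image(checklist):
    """Return the fields that have not been manually confirmed."""
    # Unverified information must not enter formal materials
    return [item["字段"] for item in checklist if item["人工确认"] is not True]


# "字段" = field name, "人工确认" = manually confirmed
unverified = review_ai_image([
    {"字段": "产品名称", "人工确认": True},  # Product name
    {"字段": "卖点文案", "人工确认": True},  # Selling-point copy
    {"字段": "价格信息", "人工确认": False},  # Pricing information
])

if unverified:
    print("Blocked, needs manual review:", unverified)
```

The purpose of this code is to emphasize one rule: every critical field on a commercial visual must be manually verified.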
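Beyond a per-field confirmation flag, reviewers could also compare the copy on a generated poster against an approved fact sheet, since the model may invent selling points. A minimal sketch, assuming the poster text has already been transcribed by hand into a list of claims; `find_unapproved_claims` and the sample data are hypothetical.

```python
def find_unapproved_claims(poster_claims: list[str], approved_claims: set[str]) -> list[str]:
    """Return poster claims that do not appear in the approved fact sheet."""
    normalized = {claim.strip().lower() for claim in approved_claims}
    return [c for c in poster_claims if c.strip().lower() not in normalized]


# Hypothetical sample data for illustration only.
approved = {"Stainless steel body", "500 ml capacity"}
poster = ["Stainless steel body", "500 ml capacity", "Keeps drinks hot for 48 hours"]

flagged = find_unapproved_claims(poster, approved)
print(flagged)  # ['Keeps drinks hot for 48 hours']
```

Exact matching after trimming and lowercasing is deliberately strict: a reworded claim is flagged too, which pushes the decision back to a human reviewer rather than letting a near match pass silently.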

Three current limitations define it as a productivity enhancer rather than a fully autonomous system

First, small text and edge annotations still fail easily, especially for disclaimers, contact details, and bottom-of-page notes on long visuals. Second, reproducibility remains unstable, and repeated generations from the same prompt can vary significantly. Third, the more realistic an image appears, the more it can amplify the risk of false information.

As a result, GPT-Image-2 is currently best positioned for visual first drafts, information mockups, and layout references, rather than unsupervised final output. The more important the business material, the more essential it is to embed the model into a human verification workflow.
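Because repeated generations from the same prompt can differ, a human verification workflow may benefit from recording which output was actually approved. The sketch below is one possible approach, not something described in the source: it fingerprints image bytes with SHA-256 so that an approved file can be recognized later. The function names, record fields, and placeholder bytes are hypothetical.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of the image content."""
    return hashlib.sha256(image_bytes).hexdigest()


def make_record(prompt: str, image_bytes: bytes, approved: bool) -> dict:
    """Bundle the prompt, the image fingerprint, and the review decision."""
    return {"prompt": prompt, "sha256": fingerprint(image_bytes), "approved": approved}


# Placeholder bytes stand in for two generations from the same prompt.
first = make_record("training slide visual", b"image-bytes-v1", approved=False)
second = make_record("training slide visual", b"image-bytes-v2", approved=True)

approved_hashes = {r["sha256"] for r in (first, second) if r["approved"]}


def is_approved(image_bytes: bytes) -> bool:
    """Check whether this exact image content was approved earlier."""
    return fingerprint(image_bytes) in approved_hashes


print(is_approved(b"image-bytes-v2"), is_approved(b"image-bytes-v1"))
```

A content hash identifies only byte-identical files, so a re-exported or resized copy would need to be reviewed and recorded again.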

The future points to automated visual production, but judgment still belongs to humans

From an industry perspective, image models are moving from creative demos to production infrastructure. This shift has major implications for PPT creation, content marketing, knowledge communication, and data storytelling.

What gets replaced is not design itself, but low-value, highly repetitive first-draft labor. The skill that becomes more valuable is judging whether an image is accurate, aligned with the business, and appropriate for decision-making contexts.

FAQ structured Q&A

1. Is GPT-Image-2 suitable for directly generating final data charts for formal reports?

It is not recommended as the final chart output. It works well for visual references and mockups, but formal deliverables should be rebuilt as editable charts in tools such as Excel, G2, or ggplot2.

2. Why is it more valuable than traditional AI image tools in PPT scenarios?

Because it does more than generate visuals. It is also better at Chinese text rendering, information hierarchy, and card-based layout, making it much closer to a page-ready content asset.

3. What should users watch out for most when using GPT-Image-2?

The top three risks are small-text errors, unstable outputs, and factual hallucinations. Any content involving data, pricing, branding, or compliance must be manually reviewed.

Core Summary: This article reconstructs the real capabilities of GPT-Image-2 in PPT visuals, infographics, and data chart generation based on hands-on testing. It focuses on Chinese text rendering, single-pass generation behavior, chart visualization strengths, and the limitations of non-editable output, while providing practical workflows for reporting, training, and e-commerce poster use cases.