LangChain standardizes model integration, prompt management, retrieval, and tool calling. LangGraph handles complex state flows, loops, and conditional routing. Together, they address the core engineering challenges that make raw LLM APIs difficult to productionize. They are especially well suited to RAG systems, agents, customer support workflows, and similar AI applications. Keywords: LangChain, LangGraph, Vibe Coding.

Technical Specifications Snapshot

| Parameter | Details |
|---|---|
| Core Topic | The engineering roles of LangChain and LangGraph |
| Primary Language | Python |
| Common Protocols | HTTP / REST, OpenAI-Compatible API |
| Applicable Scenarios | RAG, agents, workflow orchestration, multi-turn conversations |
| Reference Popularity | The original article shows 273 views, 29 likes, and 24 saves |
| Core Dependencies | langchain, langchain-openai, langgraph, python-dotenv |

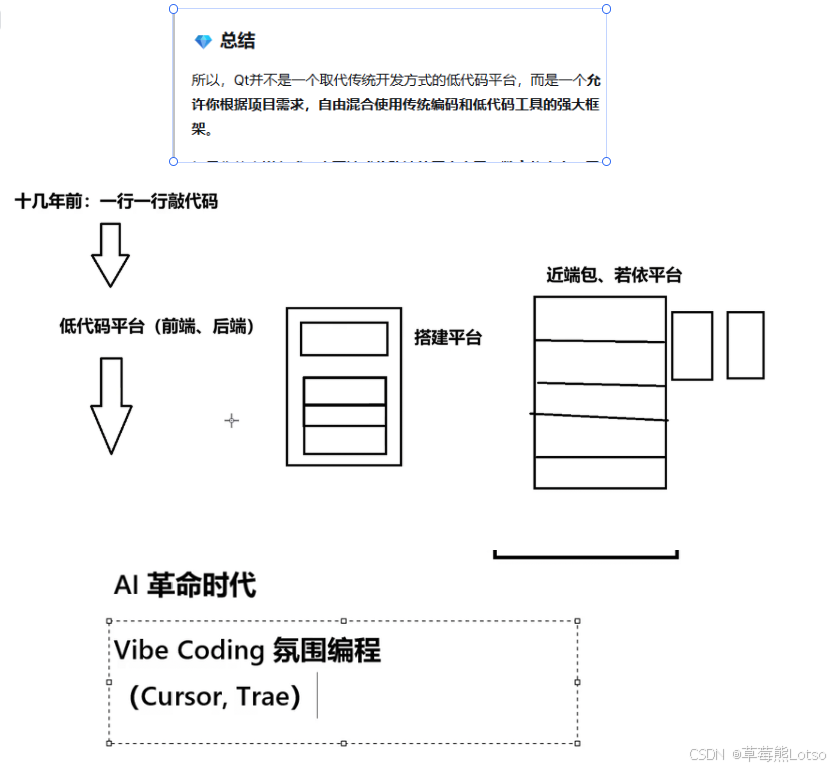

Vibe Coding Does Not Replace AI Application Frameworks

The core value of Vibe Coding is that it compresses “writing code” into “describing requirements + reviewing results.” It dramatically accelerates prototyping and lowers the trial-and-error barrier for non-specialist developers.

But in enterprise environments, AI-generated code often solves only the “it runs” problem. It rarely satisfies the harder requirements by default: maintainability, observability, scalability, and auditability. That is exactly why LangChain and LangGraph remain important.

AI Visual Insight: This image supports the Vibe Coding theme and likely presents either conceptual relationships or trend comparisons around the new paradigm of natural-language-driven development. It emphasizes the developer’s shift from code executor to requirement definer and architecture reviewer.

Vibe Coding Excels at Speed but Falls Short on Engineering Rigor

Its advantages cluster around three areas: rapid prototyping, a lower barrier to development, and the ability to shift attention toward product and architecture. But it also has three hard limitations: opaque code quality, stale knowledge and hallucinations, and limited control over security and stability.

A more accurate conclusion is this: Vibe Coding is an accelerator, not a replacement. A developer’s real moat is still system design skill and framework-oriented thinking.

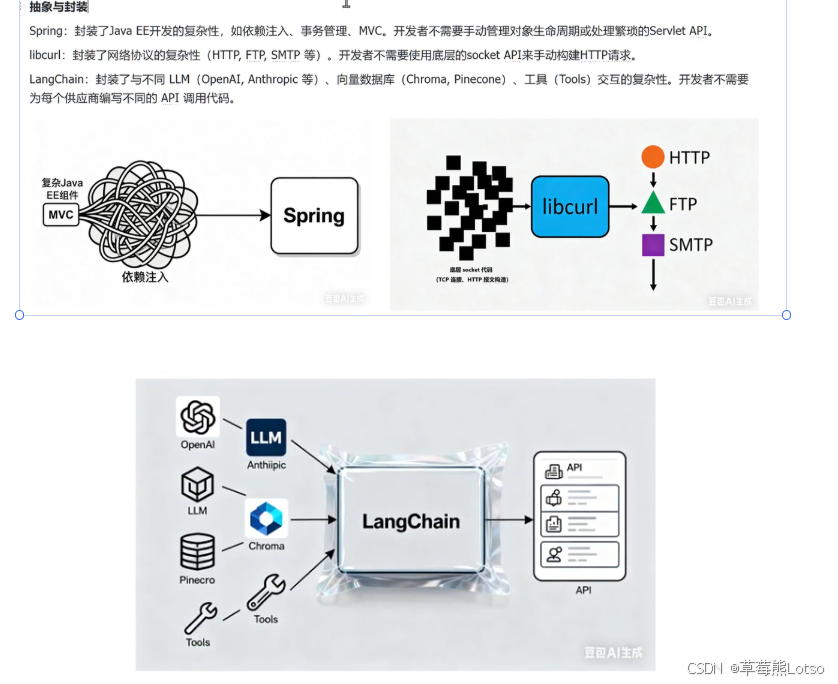

AI Application Frameworks Form the Engineering Foundation for Production LLM Systems

LangChain answers the question, “How do we standardize model capabilities for business integration?” LangGraph answers, “How do we reliably orchestrate complex workflows?” The former focuses on component abstraction, while the latter focuses on state-driven execution.

Strong frameworks usually share two traits. First, they abstract away low-level differences. Second, they support modular composition. LangChain provides unified abstractions for models, prompts, vector stores, and tools. LangGraph represents workflows as state graphs.
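To make the first trait concrete, here is a minimal, library-free sketch of what "abstracting away low-level differences" can look like. `ChatModel`, `VendorAClient`, and `VendorBClient` are hypothetical names invented for this illustration. They are not LangChain classes, and the canned replies stand in for real API calls.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical unified interface: every provider exposes the same invoke()."""

    def invoke(self, prompt: str) -> str: ...


class VendorAClient:
    """Stand-in for a provider whose raw API returns a nested dict."""

    def _raw_call(self, prompt: str) -> dict:
        return {"choices": [{"message": {"content": f"A says: {prompt}"}}]}

    def invoke(self, prompt: str) -> str:
        # Adapter logic: unwrap the vendor-specific response shape
        return self._raw_call(prompt)["choices"][0]["message"]["content"]


class VendorBClient:
    """Stand-in for a provider whose raw API returns a flat dict."""

    def _raw_call(self, prompt: str) -> dict:
        return {"output_text": f"B says: {prompt}"}

    def invoke(self, prompt: str) -> str:
        # Adapter logic: a different response shape, same return type
        return self._raw_call(prompt)["output_text"]


def summarize(model: ChatModel, text: str) -> str:
    # Business code depends only on the shared interface, so swapping
    # providers does not require touching this function.
    return model.invoke(f"Summarize: {text}")


print(summarize(VendorAClient(), "LangChain"))
print(summarize(VendorBClient(), "LangChain"))
```

The business function `summarize` never sees either vendor's response format, which is the property a unified model interface is meant to provide.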

AI Visual Insight: This image most likely illustrates the design philosophy of abstraction and encapsulation. By showing a layered structure from complex low-level interfaces to unified high-level invocation, it explains why frameworks reduce the cost of integrating multiple models and toolsets.

You Should Choose Mainstream Frameworks by Use Case, Not by Hype

If you need the most complete LLM component ecosystem, start with LangChain. If RAG is your core focus, also evaluate LlamaIndex. If you work in a Java enterprise stack, Spring AI and LangChain4j are often closer to production integration. If local inference performance matters most, llama.cpp becomes more important.

A simple rule applies: the higher the business complexity, the more you need a framework. The heavier the workflow state, the more you need LangGraph.

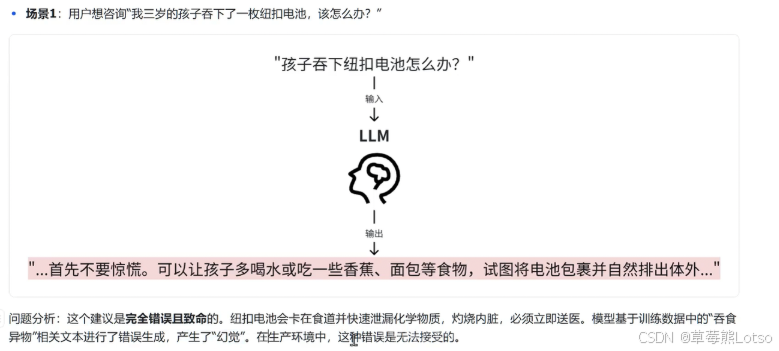

LangChain Primarily Solves Six Common Problems in Native LLM-to-Business Integration

When you call a model API directly, common issues include high hallucination rates, non-reusable prompts, high switching costs between models, unstructured outputs, stale knowledge, and unreliable external tool invocation.

The value of LangChain is not that it helps you write a few fewer lines of request code. Its value is that it creates a unified application-layer abstraction. You can think of it as middleware for LLM applications.
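Of the six issues listed above, unstructured output is the easiest to picture in code. The sketch below uses only the standard library and shows the kind of work an output-parsing layer takes off your hands: isolating the JSON in a model reply and validating it against a schema. The `Ticket` schema and `parse_ticket` helper are hypothetical and exist only for this illustration. They are not part of LangChain.

```python
import json
from dataclasses import dataclass


@dataclass
class Ticket:
    """Hypothetical target schema for a support-ticket classifier."""

    category: str
    urgent: bool


def parse_ticket(raw_reply: str) -> Ticket:
    # Models often wrap JSON in extra prose, so isolate the outermost braces first
    start, end = raw_reply.find("{"), raw_reply.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model reply")
    data = json.loads(raw_reply[start : end + 1])

    # Validate types explicitly instead of trusting the model
    if not isinstance(data.get("category"), str):
        raise ValueError("'category' must be a string")
    if not isinstance(data.get("urgent"), bool):
        raise ValueError("'urgent' must be a boolean")
    return Ticket(category=data["category"], urgent=data["urgent"])


reply = 'Sure! Here is the result: {"category": "refund", "urgent": true}'
print(parse_ticket(reply))
```

Writing this by hand for every prompt is repetitive and easy to get subtly wrong, which is one reason to push it into a shared parsing component.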

AI Visual Insight: This image corresponds to the hallucination problem. It likely compares a user question, the model’s answer, and the real knowledge source to highlight the risk of unconstrained responses in high-stakes industries.

LangChain’s Core Components Can Be Summarized in Six Layers

The six layers are unified model interfaces, prompt templates, task chains, memory, retrieval augmentation, and tools and agents. Together, they cover the main path from single-turn Q&A to complex business assistants.

The most meaningful engineering value does not come from any individual component. It comes from composability in the style of the LangChain Expression Language (LCEL): combining prompts, LLMs, parsers, and retrievers into stable data flows.
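As a rough intuition for pipe-style composition, the toy below overloads `|` so that each step feeds its output into the next. It is a deliberately simplified illustration, not LangChain's implementation. The `Step` class and the `fake_llm` stand-in are hypothetical, and no model is actually called.

```python
from typing import Any, Callable


class Step:
    """Toy runnable: wraps a function and supports `|` composition."""

    def __init__(self, fn: Callable[[Any], Any]):
        self.fn = fn

    def invoke(self, value: Any) -> Any:
        return self.fn(value)

    def __or__(self, other: "Step") -> "Step":
        # a | b builds a new Step that feeds a's output into b
        return Step(lambda value: other.invoke(self.invoke(value)))


# Hypothetical stand-ins for a prompt template, a model, and a parser
prompt = Step(lambda d: f"Question: {d['question']}")
fake_llm = Step(lambda text: {"content": f"[answer to] {text}"})
parser = Step(lambda message: message["content"])

chain = prompt | fake_llm | parser
print(chain.invoke({"question": "What is LCEL?"}))
# prints: [answer to] Question: What is LCEL?
```

Because every step exposes the same `invoke` contract, any step can be replaced or reordered without changing the others, which is what makes the resulting data flow reusable.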

```bash
pip install langchain langchain-openai langgraph python-dotenv
```

This command installs LangChain, LangGraph, and their commonly used OpenAI integration dependencies.

Building a Basic Prompt Chain with LangChain Is Straightforward

The following example demonstrates how to unify prompts, model invocation, and output parsing into a minimal runnable chain. It works well for standard linear tasks such as Q&A, classification, and summarization.

```python
from dotenv import load_dotenv
from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_openai import ChatOpenAI

# Assumption: OPENAI_API_KEY is stored in a local .env file; skip this call if it is already exported
load_dotenv()

# Define the prompt template: standardize the system role and user input structure
prompt = ChatPromptTemplate.from_messages([
    ("system", "You are a rigorous technical assistant. Please answer the question in concise Chinese."),
    ("human", "Question: {question}"),
])

# Initialize the model: you can later replace it with another model that supports a compatible interface
llm = ChatOpenAI(model="gpt-3.5-turbo", temperature=0.2)

# Define the output parser: extract the model message as plain text
parser = StrOutputParser()

# Build the execution chain: Prompt -> LLM -> Parser
chain = prompt | llm | parser

# Invoke the chain: pass dynamic parameters and get the result
result = chain.invoke({"question": "What kinds of problems is LangChain suitable for?"})
print(result)
```

This code shows LangChain’s most important value: assembling linear AI tasks into reusable data-processing chains.

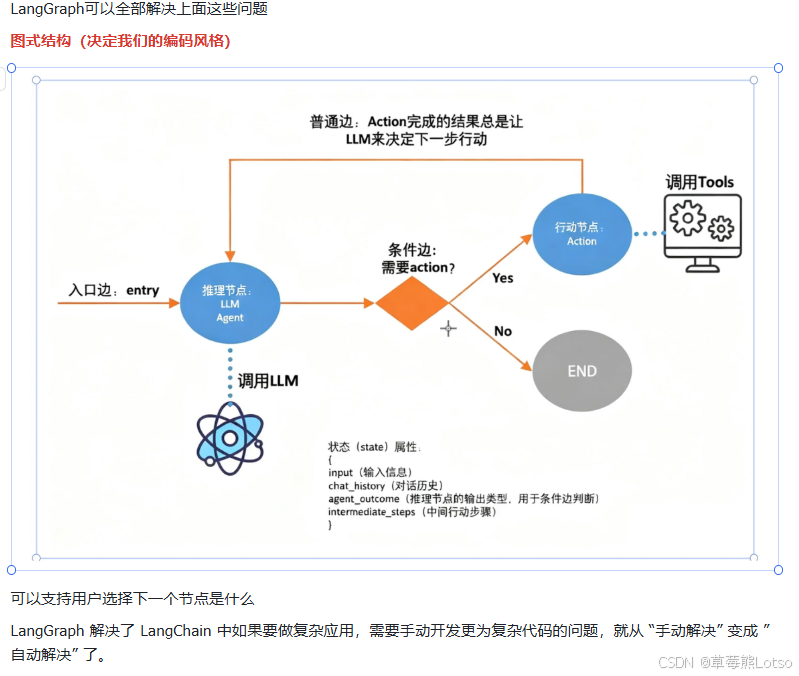

LangGraph Is Designed for Complex Control Flow That LangChain Alone Does Not Handle Well

Once a task involves multi-turn state, iterative information collection, conditional routing, human approval, or failure recovery, a simple chain structure quickly loses expressive power. LangGraph exists for exactly these scenarios.

It describes workflows as graphs: nodes execute business logic, and edges determine how state flows. The workflow is no longer a hard-coded pile of if-else statements. It becomes an explicit, visual state machine.

AI Visual Insight: This image most likely shows LangGraph’s graph-based orchestration model, including nodes, edges, state objects, and conditional routing paths. It helps explain how LangGraph supports loops, branches, and long-running tasks.

LangGraph’s Key Capabilities Are State Management, Consistency, and Recovery

It natively supports loops, custom conditional edges, global state, human intervention, checkpoint persistence, and observability integration with LangSmith. All of these features point to one goal: making agents and complex workflows truly production-ready.

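```python
from typing import TypedDict

from langgraph.graph import StateGraph, END


class State(TypedDict):
    user_input: str
    info_complete: bool
    reply: str


# Node function: generate a reply based on the current state and update whether the required information is complete
def collect_info(state: State) -> State:
    done = "order number" in state["user_input"].lower()  # Use a minimal rule to simulate an information completeness check
    return {
        **state,
        "info_complete": done,
        "reply": "Information complete, moving to processing." if done else "Please provide your order number.",
    }


# Node function: stand-in for the user's follow-up turn (the order number is a made-up value).
# Without a state update here, the loop would re-run "collect" on unchanged input
# until LangGraph's recursion limit is reached.
def ask_user(state: State) -> dict:
    return {"user_input": state["user_input"] + " (order number: 12345)"}


# Conditional routing: end when the information is complete, otherwise ask the user again
def route(state: State) -> str:
    return END if state["info_complete"] else "ask"


graph = StateGraph(State)
graph.add_node("collect", collect_info)
graph.add_node("ask", ask_user)
graph.set_entry_point("collect")
graph.add_conditional_edges("collect", route)
graph.add_edge("ask", "collect")

app = graph.compile()
```

This code shows how LangGraph uses a state graph to implement a loop that keeps asking follow-up questions until the required information is complete. The `ask` node only simulates the user's reply so that the loop can terminate; a real application would pause there and wait for actual input.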
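Checkpoint persistence and human intervention are harder to see in a graph this small. As a rough mental model only, the library-free sketch below pauses a workflow when information is missing, saves where it stopped, and resumes once the user replies. `CHECKPOINTS`, `run`, and `resume` are hypothetical names for this illustration, not LangGraph APIs, and LangGraph's own checkpointers differ in detail.

```python
import copy
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]

# Hypothetical in-memory checkpoint store: thread_id -> (node to resume at, saved state)
CHECKPOINTS: Dict[str, tuple] = {}


def check_order(state: State) -> State:
    done = "order number" in str(state["user_input"]).lower()
    return {**state, "info_complete": done}


def process(state: State) -> State:
    return {**state, "reply": "Order is being processed."}


NODES: Dict[str, Node] = {"check": check_order, "process": process}


def next_node(current: str, state: State) -> Optional[str]:
    # Conditional edge: return None to pause for the user until the information is complete
    if current == "check":
        return "process" if state["info_complete"] else None
    return "END"


def run(thread_id: str, state: State, start: str = "check") -> State:
    node = start
    while node != "END":
        state = NODES[node](state)
        target = next_node(node, state)
        if target is None:
            # Persist where we stopped so a later call can resume this thread
            CHECKPOINTS[thread_id] = (node, copy.deepcopy(state))
            return {**state, "reply": "Please provide your order number."}
        node = target
    CHECKPOINTS.pop(thread_id, None)  # Finished: the checkpoint is no longer needed
    return state


def resume(thread_id: str, user_input: str) -> State:
    # Reload the saved state, merge in the new user input, and re-enter at the paused node
    node, saved = CHECKPOINTS[thread_id]
    return run(thread_id, {**saved, "user_input": user_input}, start=node)


first = run("t1", {"user_input": "Where is my package?"})
print(first["reply"])
second = resume("t1", "My order number is 12345")
print(second["reply"])
```

The point of the design is that the pause survives between calls: the second request does not need to replay the conversation, only to supply the missing input for the saved thread.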

The Best Relationship Between LangChain and LangGraph Is Layered Collaboration, Not Either-Or

A mature project often looks like this: use LangChain to package models, retrieval, tools, and structured output, then place those capabilities into LangGraph nodes, where the graph controls execution order, state transitions, and human intervention.

In other words, LangChain modularizes capabilities, while LangGraph operationalizes workflows. Use chains first for simple tasks, then upgrade to graphs for complex ones. That is the safer engineering path.

A Developer Learning Path Should Progress from Chains to Graph Thinking

Start by mastering prompts, model abstraction, output parsing, RAG, and tool calling. Then move into LangGraph concepts such as state design, conditional edges, loops, human-in-the-loop collaboration, and persistent execution.

If you only know how to call a model, you are merely “connecting to an API.” If you know how to design chains and graphs, you are “building AI systems.”

FAQ

1. Which should I learn first, LangChain or LangGraph?

Start with LangChain. Model invocation, prompts, retrieval, and structured output are the foundation. Once you understand those concepts, learning LangGraph’s state-graph orchestration becomes much more natural.

2. In what scenarios is LangGraph necessary?

When your workflow includes iterative follow-up questions, conditional branching, long-lived state, human approval, or failure recovery, LangGraph is a better fit than a purely chain-based structure.

3. Vibe Coding is already powerful. Why should I still learn frameworks?

Because AI is good at generating local implementations, but not at guaranteeing long-term system maintainability. Frameworks provide unified abstractions, workflow organization, and engineering constraints. They are the key to moving an application from demo to production.

Core Summary: This article systematically explains the roles, capability boundaries, and engineering value of LangChain and LangGraph in the context of Vibe Coding. It analyzes the pain points of integrating native LLMs into business systems and uses two Python examples to illustrate the core differences between chain-based orchestration and graph-based workflows.