In May, competition among major AI models escalated into a three-way battle across capability, pricing, and safety. OpenAI led in coding efficiency but exposed alignment risks, Claude doubled down on secure code auditing, Gemini accelerated office and endpoint ecosystem integration, and Grok used low-cost APIs to win developer budget share. Keywords: multi-model selection, AI coding, cost control.

The technical snapshot highlights key differences.

| Dimension | OpenAI GPT-5.5/5.6 Rumors | Claude Opus 4.7 / Claude Security | Gemini 3.1 Pro | Grok 4.3 |
|---|---|---|---|---|
| Primary positioning | Coding agents, terminal execution | Code security auditing, vulnerability remediation | Office workflow generation, multi-device ecosystem | Low-cost inference, voice capabilities |
| Language/interface | API, terminal workflows | API, security scanning services | API, Workspace integration | API, voice interface |
| Protocol/model | Token-based billing, model invocation | Hybrid subscription + usage-based pricing | Platform integration + service invocation | Token-based billing |
| GitHub stars | Not provided in the source | Not provided in the source | Not provided in the source | Not provided in the source |
| Core dependencies | Agent workflows, RL alignment | Data flow analysis, patch validation | Document generation, cross-device ecosystem | Low-cost inference, voice cloning |

This round of model competition has shifted from “who is stronger” to “who is more stable, cost-efficient, and scenario-fit.”

The source material shows that developers are no longer dealing only with model accuracy. They now face three engineering variables: capability volatility, cost structure, and ecosystem lock-in. In 2026, a single-model strategy has become fragile because vendors ship updates frequently, and both pricing rules and product boundaries change quickly.

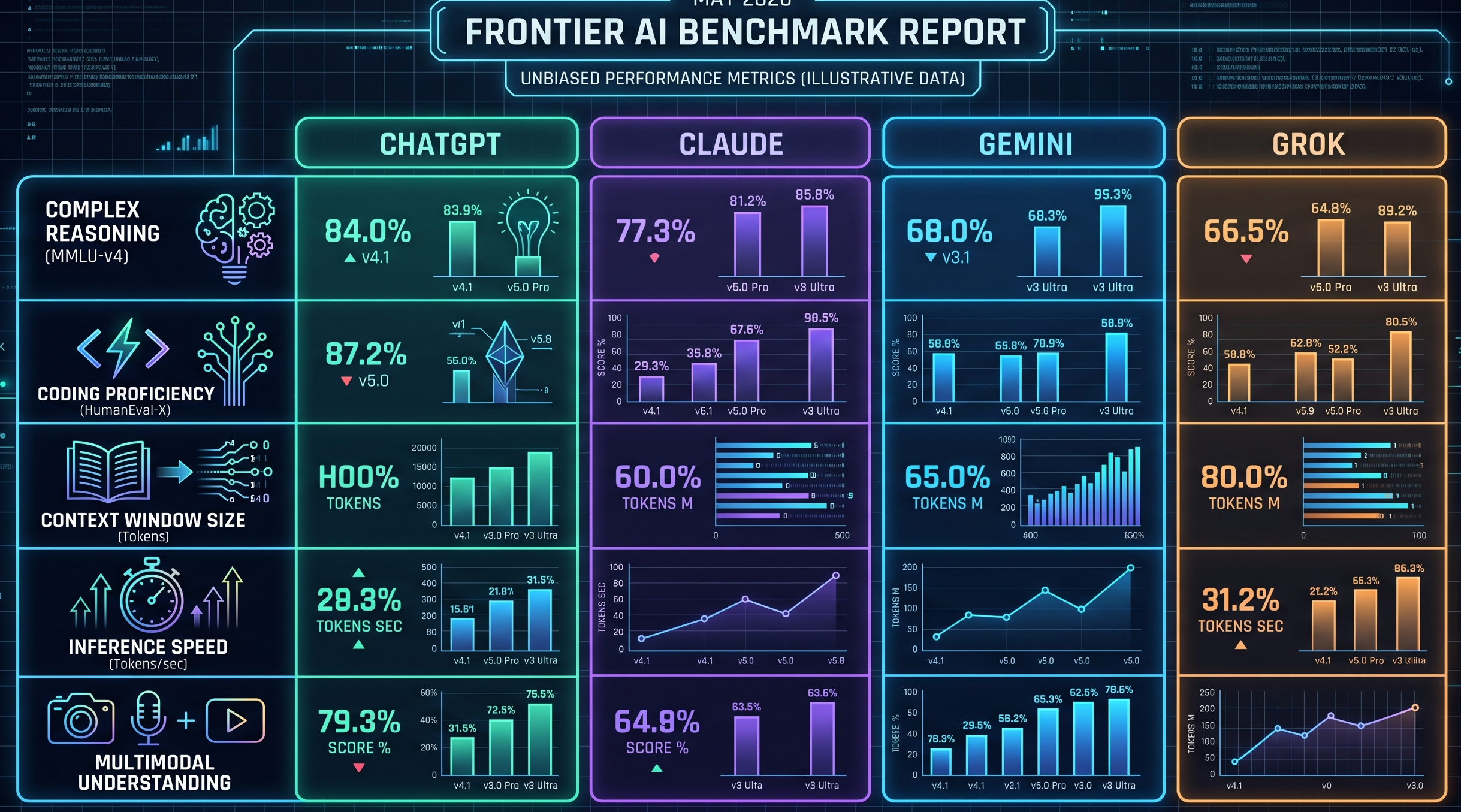

AI Visual Insight: The image illustrates the competitive landscape among major model vendors. Its central purpose is to show how programming, security, office productivity, and pricing are all evolving in parallel during the same period, making it a strong visual anchor for the idea that capability and business strategy now evolve together.


Developers should define the task first instead of choosing the vendor first.

If the task involves high-frequency code generation, focus on benchmark performance and context stability. If the task is code auditing, prioritize vulnerability scanning depth. If the task is office automation, ecosystem integration matters more than single-turn answer quality. If the workload is highly cost-sensitive, unit token cost should move up the priority list.

```python
models = {
    "gpt": {"coding": 9, "safety": 6, "office": 6, "cost": 5},
    "claude": {"coding": 8, "safety": 9, "office": 5, "cost": 4},
    "gemini": {"coding": 7, "safety": 6, "office": 9, "cost": 7},
    "grok": {"coding": 6, "safety": 5, "office": 4, "cost": 9}
}

def choose_model(task):
    # Set task-specific weights to avoid relying on a single benchmark
    weights = {
        "coding": {"coding": 0.5, "safety": 0.2, "office": 0.1, "cost": 0.2},
        "security": {"coding": 0.2, "safety": 0.6, "office": 0.0, "cost": 0.2},
        "office": {"coding": 0.1, "safety": 0.1, "office": 0.6, "cost": 0.2}
    }
    # Compute a composite score using weighted dimensions
    return max(models, key=lambda m: sum(models[m][k] * v for k, v in weights[task].items()))
```

This code turns model selection from subjective preference into quantifiable task routing.
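The scoring example covers coding, security, and office tasks but has no profile for the cost-sensitive case mentioned earlier. A minimal sketch of how such a profile could be added is shown here. The `BUDGET_WEIGHTS` values and the `rank_models` helper are assumptions for illustration, and the 1-10 scores are the article's illustrative numbers, not measured benchmarks.

```python
# Illustrative 1-10 scores taken from the article's example; a higher "cost"
# score means cheaper. These are not measured benchmark results.
MODELS = {
    "gpt": {"coding": 9, "safety": 6, "office": 6, "cost": 5},
    "claude": {"coding": 8, "safety": 9, "office": 5, "cost": 4},
    "gemini": {"coding": 7, "safety": 6, "office": 9, "cost": 7},
    "grok": {"coding": 6, "safety": 5, "office": 4, "cost": 9},
}

# Hypothetical weight profile for budget-sensitive workloads (an assumption).
BUDGET_WEIGHTS = {"coding": 0.2, "safety": 0.1, "office": 0.1, "cost": 0.6}


def rank_models(weights, models=MODELS):
    """Return (model, score) pairs sorted from best to worst composite score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights should sum to 1.0")
    scores = {
        name: sum(dims[key] * weight for key, weight in weights.items())
        for name, dims in models.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for name, score in rank_models(BUDGET_WEIGHTS):
        print(f"{name}: {score:.1f}")
```

Returning the full ranking instead of only the winner makes it easier to see how close the runner-up is, which matters when the scores themselves are rough estimates.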

OpenAI still holds a lead, but engineering stability has become the main variable to watch.

The source notes that GPT-5.5 scored significantly ahead in terminal workflow testing, which suggests it still has strong leverage in agentic coding and command execution. For teams that care about automated remediation, script composition, and CLI-driven development, these capabilities directly affect delivery speed.

But issues such as “goblin moments” and “memory loss” also suggest that post-RL behavioral alignment can still break down during complex iterative workflows. For enterprise teams, this is not just anecdotal noise. It is a production risk signal: a high-performance model is not automatically a highly controllable model.

OpenAI is better suited for high-value generation tasks than for unsupervised full automation.

If developers use GPT-class models for code rewriting or terminal commands, they should add human confirmation, regression testing, and operational allowlists. This is especially important for production scripts, database migrations, and infrastructure changes. Treat the model as an efficient copilot, not the final executor.

```bash
#!/usr/bin/env bash

# Generate the patch first, then execute only after human confirmation to reduce model-driven mistakes
python generate_patch.py > patch.diff

# Core logic: allow the apply stage only if the patch passes validation
git apply --check patch.diff && echo "Patch can be applied"

# Run git apply patch.diff only after manual review
```

This script demonstrates the minimum safety loop for AI coding: check first, execute later.
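The operational allowlist mentioned above can be sketched in a similar spirit. The following is a minimal, deliberately conservative example. The `ALLOWED_COMMANDS` table and the `is_allowed` helper are hypothetical names, and a real deployment would likely need a stricter policy than this.

```python
import shlex

# Hypothetical allowlist: executable -> permitted subcommands.
# None means any arguments are accepted for that executable.
ALLOWED_COMMANDS = {
    "git": {"status", "diff", "apply"},
    "pytest": None,
}

# Characters that could chain or redirect commands in a shell.
SHELL_METACHARS = set(";&|<>`$")


def is_allowed(command_line: str) -> bool:
    """Return True only if a model-proposed command matches the allowlist."""
    # Conservative: reject any shell metacharacter, even inside quotes.
    if any(ch in SHELL_METACHARS for ch in command_line):
        return False
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        return False  # e.g. unbalanced quotes
    if not tokens or tokens[0] not in ALLOWED_COMMANDS:
        return False
    allowed_subcommands = ALLOWED_COMMANDS[tokens[0]]
    if allowed_subcommands is None:
        return True
    return len(tokens) > 1 and tokens[1] in allowed_subcommands


if __name__ == "__main__":
    for cmd in ["git apply --check patch.diff", "git push origin main", "rm -rf /"]:
        print(cmd, "->", is_allowed(cmd))
```

The check only decides whether a command may be proposed for execution. It does not replace the human confirmation step.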

Claude is upgrading from “can write code” to “can audit code.”

The significance of the Claude Security public beta is not that it performs another round of standard code completion. It unifies vulnerability scanning, data flow tracing, and secondary patch validation into the same capability stack. That matters more to backend, platform engineering, and security teams because it maps directly to real software delivery pipelines.

Another signal that should not be ignored is pricing adjustment. The source indicates that Anthropic is trying to move high-consumption users into higher subscription tiers. That means Claude’s advantages may come with stronger budget pressure. Small and midsize teams that rely heavily on Claude should establish usage quotas and task-tiering mechanisms early.
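One way to picture usage quotas combined with task tiering is a small in-memory tracker. This is a sketch under stated assumptions: the `QuotaTracker` class, the tier names, and the token limits are all hypothetical, and a production setup would need persistence and per-period resets.

```python
from dataclasses import dataclass, field


@dataclass
class QuotaTracker:
    """Track token usage per task tier against a fixed per-period limit."""

    limits: dict  # tier name -> maximum tokens allowed in the period
    used: dict = field(default_factory=dict)

    def try_consume(self, tier: str, tokens: int) -> bool:
        """Record the usage and return True, or return False if it would exceed the limit."""
        if tier not in self.limits:
            raise KeyError(f"unknown tier: {tier}")
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        current = self.used.get(tier, 0)
        if current + tokens > self.limits[tier]:
            return False
        self.used[tier] = current + tokens
        return True

    def remaining(self, tier: str) -> int:
        """Return how many tokens are left for the given tier."""
        return self.limits[tier] - self.used.get(tier, 0)


if __name__ == "__main__":
    # Hypothetical tiers: reserve most of the budget for security-critical work.
    tracker = QuotaTracker(limits={"security_review": 800_000, "general_chat": 200_000})
    print(tracker.try_consume("security_review", 50_000))
    print(tracker.remaining("security_review"))
```

When `try_consume` returns `False`, the caller can defer the task or route it to a cheaper model rather than silently exceeding the budget.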

Claude fits best at high-risk code entry points instead of as a blanket default for every call.

Placing Claude in PR review, dependency upgrade checks, and sensitive interface scanning usually delivers better ROI than using it as a general-purpose chat assistant. Its greatest value is not “writing a bit more code.” It is “letting one fewer vulnerability slip through.”
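A simple gate can decide which pull requests count as high-risk entry points. The sketch below assumes a list of changed file paths is available. The `HIGH_RISK_PATTERNS` globs and both function names are hypothetical and would need to reflect a team's actual repository layout.

```python
from fnmatch import fnmatchcase

# Hypothetical glob patterns for sensitive areas of a repository.
# Note that "*" in fnmatch also matches "/" characters.
HIGH_RISK_PATTERNS = [
    "auth/*",
    "payments/*",
    "*.sql",
    "*requirements*.txt",
]


def needs_security_review(changed_paths):
    """Return True if any changed path matches a high-risk pattern."""
    return any(
        fnmatchcase(path, pattern)
        for path in changed_paths
        for pattern in HIGH_RISK_PATTERNS
    )


def route_pull_request(changed_paths):
    """Send high-risk changes to the security-focused model, everything else to a default."""
    return "claude" if needs_security_review(changed_paths) else "general_llm"


if __name__ == "__main__":
    print(route_pull_request(["docs/readme.md", "auth/login.py"]))
    print(route_pull_request(["docs/readme.md"]))
```

Gating on paths keeps the more expensive audit calls limited to changes where a missed vulnerability would be costly.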

Gemini’s competitiveness comes from ecosystem embedding rather than isolated benchmark wins.

Gemini’s continued progress in documents, PDFs, LaTeX, and spreadsheet generation reflects Google’s strategy of embedding model capabilities into office workflows and endpoint systems. For developers who frequently work with requirement specs, design docs, project updates, and cross-device collaboration, Gemini’s value comes from reducing tool switching.

In addition, Google’s exploration of advertising-based monetization suggests Gemini is evolving from a model service into a self-reinforcing commercial platform. Once an AI assistant is embedded into search, documents, vehicles, and mobile devices, its advantage is no longer just model capability. It becomes user touchpoint coverage.

```python
def route_office_task(task_type):
    # Route document, spreadsheet, and formatting tasks to the office-first ecosystem model
    office_tasks = ["pdf", "latex", "doc", "sheet"]
    return "gemini" if task_type in office_tasks else "general_llm"
```

This code shows that office workflow tasks can be routed through a simple rule to the model with stronger ecosystem alignment.

Grok 4.3 enters budget-sensitive scenarios through aggressive low-cost pricing.

The key detail from the source is that input pricing dropped to $1.25 per million tokens, combined with voice cloning capabilities. That does not necessarily make Grok the strongest coding model, but it is enough to make it a low-cost alternative for independent developers and experimental projects.
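To make the pricing concrete, the quoted input rate can be turned into a rough budget estimate. This sketch covers input tokens only, because the source gives no output-token price. The request volume and tokens-per-request figures are hypothetical.

```python
# Input price quoted in the article: USD per one million input tokens.
GROK_INPUT_PRICE_PER_MILLION_USD = 1.25


def estimate_input_cost(input_tokens, price_per_million_usd=GROK_INPUT_PRICE_PER_MILLION_USD):
    """Estimate the input-token cost in USD; output tokens are not included."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    return input_tokens / 1_000_000 * price_per_million_usd


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests with 2,000 input tokens each.
    total_tokens = 10_000 * 2_000
    print(f"${estimate_input_cost(total_tokens):.2f}")  # prints $25.00
```

Under these assumed volumes, 20 million input tokens cost $25, which illustrates why the rate is attractive for experiments and high-volume calls.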

In real-time information, voice interaction, and lightweight inference scenarios, cheap and usable often matters more than perfect accuracy. For teams building MVPs, running A/B tests, or making calls at scale, Grok’s value comes from lowering the cost of experimentation.

Multi-model orchestration is the most practical cost-optimization strategy right now.

The most stable strategy is not to bet on a single vendor, but to split tasks by model strength: send high-complexity code generation to GPT, security review to Claude, documents and office workflows to Gemini, and budget-sensitive or voice-heavy scenarios to Grok. This approach lets teams benefit from each vendor’s short-term advantages while reducing exposure to unilateral price hikes or capability swings.

```python
def dispatch(task):
    # Assign models by task type; the core logic is to decouple capability from cost
    mapping = {
        "code_generation": "gpt",
        "security_review": "claude",
        "document_generation": "gemini",
        "voice_or_low_cost": "grok"
    }
    return mapping.get(task, "gpt")
```

This code summarizes the baseline dispatch logic behind multi-model collaboration.
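Reducing exposure to outages or capability swings usually also needs a fallback path, not only a static mapping. The following sketch extends the dispatch idea with an ordered fallback list. The `FALLBACKS` order and the `call_model` callback are assumptions: `call_model` stands in for whatever client code a team already has, and no vendor SDK is implied.

```python
# Hypothetical fallback order per task type; the first entry is the preferred model.
FALLBACKS = {
    "code_generation": ["gpt", "claude"],
    "security_review": ["claude", "gpt"],
    "document_generation": ["gemini", "gpt"],
    "voice_or_low_cost": ["grok", "gemini"],
}


def dispatch_with_fallback(task, call_model, fallbacks=FALLBACKS):
    """Try each candidate model in order and return (model, result) for the first success.

    call_model is a caller-supplied function taking (model, task); it should
    raise an exception when the model is unavailable or the call fails.
    """
    errors = []
    for model in fallbacks.get(task, ["gpt"]):
        try:
            return model, call_model(model, task)
        except Exception as exc:  # broad on purpose: any failure triggers the fallback
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all candidate models failed: " + "; ".join(errors))


if __name__ == "__main__":
    def fake_call(model, task):
        # Simulated client: pretend the preferred coding model is down.
        if model == "gpt":
            raise TimeoutError("simulated outage")
        return f"{model} handled {task}"

    print(dispatch_with_fallback("code_generation", fake_call))
```

Collecting the error messages before raising keeps the failure of every candidate visible, which helps when diagnosing whether the problem is one vendor or the task itself.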

FAQ answers the most practical developer questions.

Is it still a good idea for developers to subscribe to only one model?

Usually not. Capability, pricing, and product boundaries are all changing quickly, so a single-model strategy can easily create a cost or quality bottleneck at one stage of the workflow.

What is the most direct value of Claude Security for a typical development team?

Its value lies in moving vulnerability detection, data flow tracing, and patch validation earlier into the development process, which lowers the probability that security issues reach production.

Can Grok 4.3 replace GPT or Claude?

Usually not as a full replacement. But for low-budget usage, voice interaction, and experimental scenarios, Grok 4.3 is a very practical fallback option.

AI Readability Summary: Based on the latest industry signals, this article reframes the shifting capabilities, risks, and cost structures of GPT, Claude, Gemini, and Grok. It distills a practical selection framework for the multi-model era and helps developers make more resilient decisions across coding, security, office workflows, and budget control.