The core value of GPT-5.5 lies in stronger agent execution, higher reasoning efficiency, and ultra-long context handling. For users in mainland China, however, the main pain points are the lack of direct official access, the high ban risk of shared accounts, and the instability of free mirror sites. This article outlines stable usage paths and selection guidance from a compliance-aware perspective.

Keywords: GPT-5.5, aggregation platforms, Agent

The technical specification snapshot highlights GPT-5.5 availability and access strategy

| Parameter | Information |
|---|---|
| Model/Topic | GPT-5.5 availability and access strategy in China |
| Core Capabilities | Agent execution, long context, cost-efficiency optimization |
| Language | Chinese |
| Protocol/Access Method | Official API relay, web-based aggregation entry |
| Stars | Not provided in the source |
| Core Dependencies | Browser, aggregation platform account, stable API channel |
| Target Audience | Developers, writers, and office productivity users |

GPT-5.5 capability gains have moved from chat to real workflows

The focus of GPT-5.5 is no longer just “sounding more human” in conversation. It is about “completing tasks more like an assistant.” The source material emphasizes agents and real-world workflows, which means the model is better suited for task decomposition, tool use, and result validation rather than single-turn Q&A alone.

From a developer’s perspective, this type of model is a better fit for code generation, research organization, document summarization, and process-driven office work. Its value lies less in individual responses and more in end-to-end task completion.

Three technical signals deserve the most attention

First, agent capability has improved significantly. The source cites an 82.7% score on Terminal-Bench 2.0, suggesting the model behaves more like an executor of command-line and multi-step tasks than a static question-answering interface.

Second, token usage is more efficient. Even if the per-token price rises, the total task cost may not increase if the model reduces repeated prompting and retries. This is the total cost of ownership that engineering teams care about most.
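The cost argument above can be made concrete with a small calculation. The sketch below is illustrative only: the prices, token counts, and retry counts are hypothetical numbers chosen to show the arithmetic, and `TaskProfile` and `task_cost` are names invented for this example, not figures or APIs from the source.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    price_per_1k_tokens: float  # hypothetical price, in arbitrary currency units
    tokens_per_attempt: int     # hypothetical tokens consumed per attempt
    attempts: int               # prompts plus retries needed to finish the task


def task_cost(p: TaskProfile) -> float:
    """Total cost of finishing one task, retries included."""
    return p.price_per_1k_tokens * p.tokens_per_attempt / 1000 * p.attempts


# Illustrative numbers only; none of these come from the source.
older = TaskProfile(price_per_1k_tokens=0.010, tokens_per_attempt=8000, attempts=3)
newer = TaskProfile(price_per_1k_tokens=0.015, tokens_per_attempt=6000, attempts=1)

print(f"older model: {task_cost(older):.3f}")
print(f"newer model: {task_cost(newer):.3f}")
```

With these assumed inputs, the model with the higher per-token price still finishes the task for less, because it needs fewer tokens and no retries. Real numbers would have to come from measured usage.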

Third, the context window has expanded to the 2 million token range. That directly affects heavyweight tasks such as long-document retrieval, cross-section summarization, and repository-scale code analysis.
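Before sending a large corpus in one request, it can help to estimate whether it fits in the window at all. The following is a rough sketch, assuming about four characters per token, which is a common rule of thumb for English text and not an exact tokenizer count; Chinese text usually needs more tokens per character. The output reserve and the function names are also assumptions made for this example.

```python
CONTEXT_WINDOW_TOKENS = 2_000_000  # figure quoted in the article
CHARS_PER_TOKEN = 4                # rough heuristic (assumption); real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN + 1


def fits_in_context(documents: list[str], reserve_for_output: int = 50_000) -> bool:
    """Return True if all documents plus an output reserve fit in the window."""
    total = sum(estimate_tokens(d) for d in documents)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


docs = ["a" * 400_000, "b" * 1_200_000]  # two synthetic documents
print(fits_in_context(docs))
```

For production use, the estimate should be replaced by the token count reported by the actual tokenizer or API.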

    features = {
        "agent": "Supports complex task decomposition and tool use",  # Core: shift from conversation to execution
        "efficiency": "Uses fewer tokens to complete similar tasks",  # Core: reduce repeated interaction costs
        "context": 2_000_000,  # Core: support ultra-long text understanding
    }

    for k, v in features.items():
        print(f"{k}: {v}")

This code snippet summarizes the three key GPT-5.5 capabilities in a structured dictionary.

The main challenge for mainland China users is not model quality but access stability

The source arrives at a clear conclusion: the question is not whether GPT-5.5 is worth using, but how to keep it available consistently. For users in mainland China, the primary obstacles are the lack of direct official availability and the high volatility of unofficial channels.

Free mirror sites usually introduce three categories of problems: opaque API key sources, service interruptions that can happen at any time, and no reliable history or session traceability. For developers, these issues directly break workflow continuity.

Another common risk is the banning of shared Pro accounts. The source notes that Claude Pro and ChatGPT Plus have relatively high ban rates under gray-market sharing models. The root causes are usually abnormal account behavior, suspicious IP activity, or concurrent multi-user access.

Being usable once does not mean it is suitable for long-term production

Development environments fear three things most: broken access, lost context, and disappearing service after payment. Getting something to work once does not mean it is fit for long-term use. In coding, writing, and knowledge-base scenarios, stability matters more than leaderboard scores.

Therefore, when evaluating an access option, prioritize stability, billing transparency, and model coverage over marketing language on the homepage.

    # Access option evaluation checklist
    checklist=(
      "Does it support direct access from mainland China?"
      "Does it offer daily or monthly billing?"
      "Does it explain model sources and limitations?"
      "Does it support file upload and web search?"
    )

    # Print one question per line
    printf '%s\n' "${checklist[@]}"

This script shows the most basic checks for screening an aggregation platform.

Membership-based aggregation platforms are currently the more realistic middle-ground solution

Based on the source’s hands-on conclusions, membership-based aggregation platforms are more reliable than free sites not because they are inherently “more advanced,” but because they can cover real operating costs. High-quality model access, account pool maintenance, IP rotation, and load balancing all require sustained investment.

These platforms usually expose two typical access layers. One is an official API relay, which is better suited to ChatGPT-like models. The other is a standard account-pool integration, which is more suitable for supplementary models such as Claude and Grok. The former prioritizes stability, while the latter prioritizes coverage.
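The two access layers can be pictured as a simple lookup from model to layer. This is a minimal sketch based only on the description above: the enum, the dictionary keys, and the choice to default unknown models to the account pool are all assumptions for illustration, not how any particular platform is implemented.

```python
from enum import Enum


class AccessLayer(Enum):
    API_RELAY = "official API relay"            # prioritizes stability
    ACCOUNT_POOL = "account-pool integration"   # prioritizes coverage


# Mapping follows the article's description; the keys are illustrative labels.
MODEL_LAYER = {
    "chatgpt": AccessLayer.API_RELAY,
    "claude": AccessLayer.ACCOUNT_POOL,
    "grok": AccessLayer.ACCOUNT_POOL,
}


def access_layer_for(model: str) -> AccessLayer:
    """Look up the access layer; unknown models default to the account pool (assumption)."""
    return MODEL_LAYER.get(model.lower(), AccessLayer.ACCOUNT_POOL)


print(access_layer_for("ChatGPT").value)
```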

The engineering logic behind membership platforms is a closed cost loop

If a platform is completely free, it is difficult for it to sustain high-frequency usage and ongoing operations. By contrast, daily trials and monthly renewals align better with the business logic of service infrastructure while also giving users more controllable risk.

The source’s practical ranking can be summarized as follows: ChatGPT is the most stable primary option, standard Claude accounts work well for editing and writing, and Grok is better treated as a backup. For daily work and development, this conclusion is already highly practical.

| Model | Stability | Speed | Best For |
|---|---|---|---|
| ChatGPT (GPT-5.5) | High | Relatively fast | Primary conversations, coding, general-purpose tasks |
| Claude (standard account) | Medium-high | Relatively fast | Writing polish, long-form expression |
| Grok | Medium-low | Average | Backup use |
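The ranking above translates naturally into a fallback order. The sketch below is one possible way to encode it, assuming a caller-supplied availability check; `FALLBACK_ORDER`, `pick_model`, and the task labels are hypothetical names, and putting Claude first for writing is an interpretation of the table rather than a rule stated by the source.

```python
from typing import Callable, Optional

# Preference order derived from the ranking; the model keys are illustrative labels.
FALLBACK_ORDER = {
    "writing": ["claude", "chatgpt", "grok"],
    "default": ["chatgpt", "claude", "grok"],
}


def pick_model(task: str, is_available: Callable[[str], bool]) -> Optional[str]:
    """Return the first available model for the task, or None if all are down."""
    for model in FALLBACK_ORDER.get(task, FALLBACK_ORDER["default"]):
        if is_available(model):
            return model
    return None


# Simulated availability check; a real one would probe the platform.
down = {"chatgpt"}
print(pick_model("coding", lambda m: m not in down))
```

Keeping the order in data rather than in branching code makes it easy to adjust when a platform's stability changes.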

When choosing an aggregation entry point, validate low-cost trial mechanisms first

The source notes that some entry points support day-based purchases, which is more reasonable than paying annually upfront. For any aggregation platform, first-time access should follow the principle of “validate cheaply, then scale gradually.”

Start by verifying four capabilities: direct access, stable model switching, file upload support, and session context retention. These four factors directly determine whether the platform can support real tasks.
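The four checks can be recorded as a small pass/fail structure during a one-day trial. This is a minimal sketch: the field names mirror the four capabilities listed above, while `TrialResult`, `failed_checks`, and `passes_trial` are names invented for this example. The values are filled in by hand after testing; nothing here probes a real platform.

```python
from dataclasses import dataclass


@dataclass
class TrialResult:
    direct_access: bool
    stable_model_switching: bool
    file_upload: bool
    context_retention: bool


def failed_checks(result: TrialResult) -> list[str]:
    """List the names of the checks that did not pass."""
    return [name for name, passed in vars(result).items() if not passed]


def passes_trial(result: TrialResult) -> bool:
    """A platform passes only if all four checks succeed."""
    return not failed_checks(result)


trial = TrialResult(direct_access=True, stable_model_switching=True,
                    file_upload=False, context_retention=True)
print(passes_trial(trial), failed_checks(trial))
```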

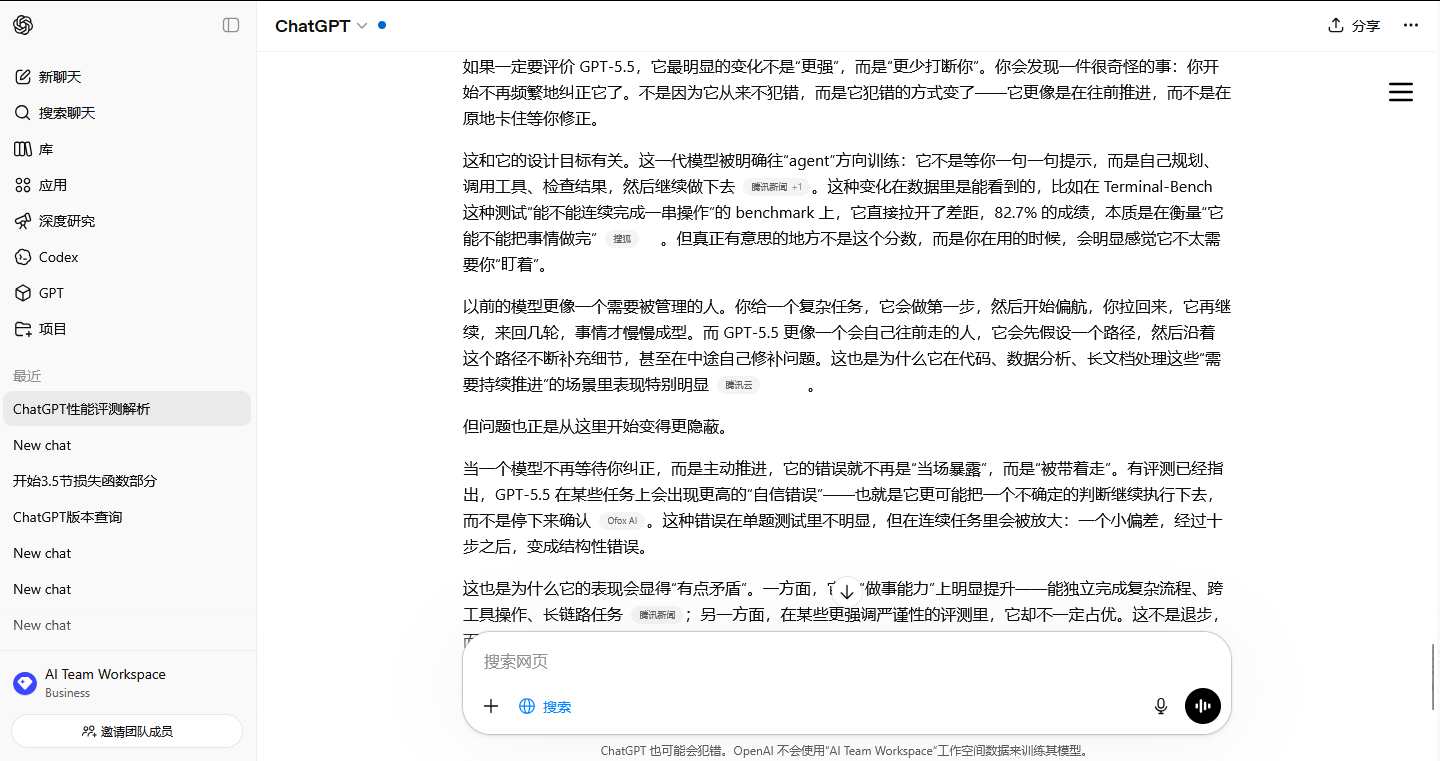

AI Visual Insight: This screenshot shows the interface of an aggregated AI chat platform. The core signals include multi-model entry points, a unified chat panel, and a web-based access pattern designed for users in mainland China. From a technical perspective, it reflects capabilities such as a unified frontend wrapper, multi-model routing and dispatch, and integrated file and context session handling. This suggests the platform’s value lies less in the native experience of any single model and more in multi-model orchestration and low-friction access.

Pitfall avoidance rules should be written as explicit operating constraints

Do not trust marketing claims such as “unlimited and fully stable Claude Pro.” Also avoid buying annual plans upfront, and do not submit sensitive real-name information unless it is absolutely necessary.

A safer strategy is to buy one day first for testing and then move to a monthly subscription if the platform passes. Prefer plans you can stop at any time, and treat aggregation platforms as replaceable working tools rather than long-term assets.

    def choose_plan(has_trial: bool, billing_cycle: str, need_realname: bool) -> str:
        if need_realname:
            return "Reject"  # Core: unnecessary sensitive information requirements are a risk signal
        if has_trial and billing_cycle in ("day", "month"):
            return "Worth testing"  # Core: validate with a small spend before long-term use
        return "Proceed cautiously"

This code condenses platform screening logic into an executable decision rule.

In 2026, a better strategy will be combining foreign flagship models with domestic models

If your usage is light, day-based access is enough. If you write code, create content, or conduct research every day, a monthly subscription to a stable aggregation entry point is more appropriate. If you work with enterprise-scale long documents, you should combine GPT-5.5 with domestic models.

The source mentions domestic models such as Kimi, Zhipu Qingyan, and DeepSeek. The value of this combined strategy is clear: foreign flagship models handle complex reasoning and workflows, while domestic models provide compliance coverage, Chinese-language optimization, and cost control.
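The combined strategy can be expressed as a simple routing rule. The sketch below is an illustration under stated assumptions: the two input flags, the rule order (compliance first, then reasoning complexity), and the default to domestic models for routine work are choices made for this example, not a policy given by the source.

```python
def route_task(compliance_sensitive: bool, needs_complex_reasoning: bool) -> str:
    """Pick a model family for a task; the rule order is an assumption."""
    if compliance_sensitive:
        return "domestic"   # compliance coverage takes priority
    if needs_complex_reasoning:
        return "flagship"   # complex reasoning and multi-step workflows
    return "domestic"       # routine work defaults to the cost-controlled option


print(route_task(compliance_sensitive=False, needs_complex_reasoning=True))
```

A team would likely extend the inputs with document language and budget, but the principle of checking constraints before capability stays the same.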

FAQ

Q1: What is the most valuable GPT-5.5 capability for developers?

A1: It is not simply better answer accuracy. The biggest value is stronger task decomposition, tool use, and long-context handling, which makes it well suited to code, documentation, and automated workflows.

Q2: Why is it not recommended to rely directly on free mirror sites?

A2: Free mirrors often suffer from opaque API sourcing, frequent outages, and uncontrollable data and context handling. They are not suitable for production environments.

Q3: What is the safer current access approach for users in mainland China?

A3: Prioritize membership-based aggregation platforms that support day-based trials, monthly renewal, direct access from mainland China, and relatively transparent model sourcing. Then combine them with domestic models to create a dual-path backup strategy.

Core Summary: This article surveys how GPT-5.5 can be used in mainland China after its release. It focuses on three upgrades (agent capability, long context, and cost efficiency) and analyzes practical access paths and risk-avoidance principles, given that direct official access is unavailable and shared accounts face a high ban rate.