In 2025, drones have entered a phase of ubiquitous intelligence and system-level convergence. Core capabilities are shifting from single-aircraft automation to swarm collaboration, autonomous decision-making, and integrated air-ground networking. This article maps five major technical threads (route planning, reinforcement learning, 6G networking, multimodal perception, and large-model integration) to explain how the low-altitude economy can scale.

Keywords: drones, low-altitude economy, swarm intelligence.

Technical Specifications at a Glance

| Parameter | Details |
|---|---|
| Research Focus | Unmanned Aerial Vehicles (UAVs) and the Low-Altitude Economy (LAE) |
| Core Technology Domains | Route planning, intelligent decision-making, communication networking, multi-UAV collaboration, multimodal perception, LLM/VLM integration |
| Related Protocols / Networks | 5G-A, 6G, integrated air-space-ground communications, distributed control |
| Languages and Methods | Reinforcement learning, deep learning, graph neural networks, model predictive control, neuro-symbolic reasoning |
| Representative Platforms | Quadrotors, high-speed autonomous flight platforms, collaborative combat UAVs, industrial inspection platforms |
| GitHub Stars | The source article is a survey and does not provide open-source repository star data |
| Core Dependencies | LiDAR, IMU, GPS, stereo cameras, edge computing, intelligent reflecting surfaces, LLMs/VLMs |

Drones in 2025 are shifting from simply flying to understanding missions

The defining change in 2025 is not just incremental improvement in flight-control precision. Drones are beginning to acquire system-level understanding. Their objectives are no longer limited to obstacle avoidance and point-to-point cruising. Instead, they are moving toward end-to-end closed-loop execution for complex tasks such as delivery, inspection, search and rescue, and battlefield collaboration.

This phase is defined by a three-layer convergence. The perception layer relies more heavily on multimodal inputs. The decision layer increasingly depends on reinforcement learning and distributed optimization. The execution layer emphasizes swarm coordination and real-time communication. As a result, the low-altitude economy is moving from pilot validation to scaled operations.

A simplified autonomous decision pipeline for UAVs looks like this

```python
# fuse_modalities, task_aware_planner, and execute_controller are
# placeholder names for project-specific components.
class UAVAgent:
    def perceive(self, sensors):
        # Fuse multimodal data from LiDAR, vision, IMU, and other sources
        state = fuse_modalities(sensors)
        return state

    def plan(self, state, mission):
        # Generate a trajectory based on mission objectives
        route = task_aware_planner(state, mission)
        return route

    def act(self, route):
        # Control the aircraft to follow the trajectory stably
        execute_controller(route)
```

This code captures the primary integrated chain from perception to planning and control in modern UAV systems.

Route planning is evolving from geometric shortest paths to task-optimal solutions

Traditional path planning focused mainly on one question: how to avoid obstacles. In 2025, the more important question is how to complete the mission while achieving global optimality. That means the objective function has expanded from collision-free feasibility to joint optimization across energy consumption, latency, charging, collaborative efficiency, and mission value.

A team from the University of Hong Kong demonstrated a high-speed autonomous flight platform that combines a high thrust-to-weight airframe with lightweight 3D LiDAR to achieve high-speed traversal in unknown environments. Its significance lies not only in flying faster, but also in providing the high-quality real-time perception needed for downstream swarm trajectory optimization.

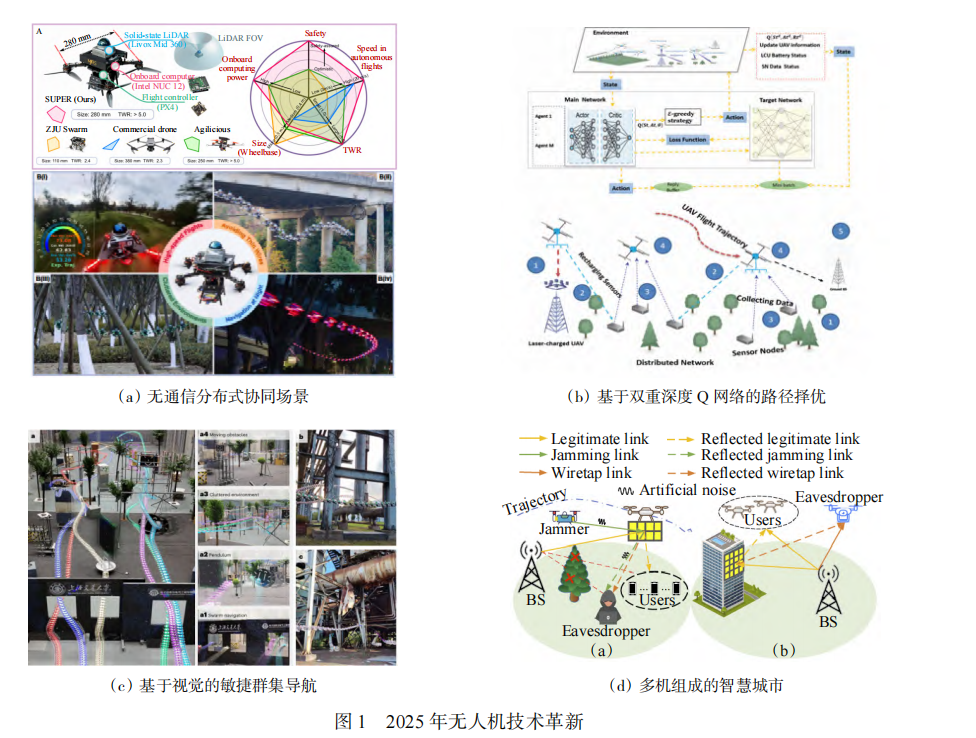

AI Visual Insight: The image presents a representative technology map for UAV route planning in 2025, typically including high-speed autonomous flight platforms, joint charging and path optimization frameworks, end-to-end learned navigation systems, and emerging communication support architectures. The key message is that lightweight hardware, real-time perception, task-constrained optimization, and learning-based control have been integrated into a single technical closed loop.

The core of task-oriented planning is not the shortest path, but maximum overall utility

```python
def score_route(distance, energy, risk, reward):
    # Score a route by combining distance, energy use, risk, and mission reward
    return reward - 0.3 * distance - 0.4 * energy - 0.3 * risk
```

This type of objective function shows how path planning is shifting from a feasibility problem to a utility optimization problem.
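As a minimal sketch of utility-based selection, the example below applies the same weighted form as `score_route` to a few candidate routes and picks the one with the highest utility. The `Route` dataclass, the candidate values, and the assumption that every input is already normalized to the range 0 to 1 are illustrative and do not come from the source survey.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    distance: float  # all fields assumed normalized to [0, 1]
    energy: float
    risk: float
    reward: float


def utility(route, w_distance=0.3, w_energy=0.4, w_risk=0.3):
    """Mission reward minus weighted distance, energy, and risk costs."""
    return (route.reward
            - w_distance * route.distance
            - w_energy * route.energy
            - w_risk * route.risk)


def best_route(routes):
    """Return the candidate with the highest utility."""
    return max(routes, key=utility)


# Hypothetical candidates: the geometrically shortest route is also the riskiest.
candidates = [
    Route("shortest", distance=0.2, energy=0.5, risk=0.9, reward=0.6),
    Route("safe_detour", distance=0.5, energy=0.6, risk=0.1, reward=0.6),
    Route("via_charger", distance=0.7, energy=0.3, risk=0.2, reward=0.8),
]
chosen = best_route(candidates)
print(chosen.name)
```

With these made-up numbers the longest route wins because its lower energy cost and higher mission reward outweigh the extra distance, which is the point of optimizing for utility rather than path length.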

Intelligent decision-making is becoming the dividing line for UAV systems

The upgrade in UAV intelligence is unfolding in two main directions. The first is rapid single-agent adaptation in unknown environments, such as deep meta-reinforcement learning for search-and-rescue scenarios. The second is multi-agent resource coordination under decentralized conditions, such as joint deployment and task offloading in mobile edge computing networks.

State estimation is also the foundation of reliable decision-making. Through tightly coupled fusion of IMU, dual LiDAR, stereo cameras, and GPS, UAVs can maintain high-precision localization even in weak-GPS or GPS-denied environments. This directly determines whether autonomous systems can move from laboratory prototypes into real-world deployments.
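To make the role of fusion during GPS dropouts concrete, here is a deliberately simplified sketch: a one-dimensional, scalar Kalman-style filter that dead-reckons from IMU acceleration and corrects with GPS fixes when they are available. This is a loosely coupled toy, not the tightly coupled multi-sensor estimator described above, and the function names and all noise values are assumptions chosen for illustration.

```python
def predict(x, v, p, accel, dt, q):
    """Dead-reckon position x and velocity v from IMU acceleration; inflate variance p."""
    x = x + v * dt + 0.5 * accel * dt * dt
    v = v + accel * dt
    return x, v, p + q


def correct(x, p, z, r):
    """Blend a GPS position fix z (variance r) into the estimate with a scalar Kalman gain."""
    k = p / (p + r)
    return x + k * (z - x), (1.0 - k) * p


# Hypothetical values: 1 m/s cruise, 10 Hz updates, GPS lost at steps 2 and 3.
x, v, p = 0.0, 1.0, 1.0
dt, q, r = 0.1, 0.01, 0.25
gps_fixes = [0.1, 0.2, None, None, 0.5]  # None marks a missing fix
variances = []
for z in gps_fixes:
    x, v, p = predict(x, v, p, accel=0.0, dt=dt, q=q)
    if z is not None:
        x, p = correct(x, p, z, r)
    variances.append(p)

print(variances)
```

The position variance grows during the two dropout steps and shrinks again once a fix returns. A real system would bound that growth with additional sensors such as LiDAR or stereo vision.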

Distributed collaborative control emphasizes global consistency under local perception

```python
def consensus_update(x_i, neighbors, step=0.1):
    # Move x_i toward its neighbors' states without mutating the input;
    # repeated across the swarm, this converges to a shared state
    return x_i + step * sum(x_j - x_i for x_j in neighbors)
```

This logic is a foundational abstraction for drone formations, consensus control, and swarm coordination.
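The following sketch shows how a local update rule of this kind produces global agreement. It runs a synchronous consensus iteration on four agents arranged in a ring, where each agent sees only its two neighbors. The topology, initial values, step size, and the `consensus_step` helper are all illustrative assumptions.

```python
def consensus_step(states, neighbors, step=0.1):
    """One synchronous update: every agent moves toward its neighbors' current states."""
    return [
        x_i + step * sum(states[j] - x_i for j in neighbors[i])
        for i, x_i in enumerate(states)
    ]


# Hypothetical ring of four agents; each one communicates with two neighbors only.
neighbors = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
states = [0.0, 10.0, 20.0, 30.0]  # e.g. initial altitude estimates

for _ in range(200):
    states = consensus_step(states, neighbors)

print(states)
```

Because the links are symmetric, the average of the states is preserved and every agent converges to the initial mean of 15. The step size must stay small relative to the number of neighbors, otherwise the iteration can oscillate or diverge.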

Communication networking has evolved from basic connectivity to self-optimization

In the era of 5G-A and 6G, UAV communication networks are no longer just link assurance systems. They have become part of mission execution itself. Intelligent reflecting surfaces, distributed self-triggered control, and knowledge-enabled learning are turning aerial networks into infrastructure that can sense, adapt, and collaborate.

The main challenges include incomplete channel information, highly dynamic topologies, energy constraints, and security risks. The corresponding technical directions include co-design of communication and control, certificateless coded signatures for stronger security, and distributed learning for improved network adaptability.
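One way to picture communication-aware control is an event-triggered broadcast rule, in which an agent transmits its state only when it has drifted far enough from the last value it sent. This is a simpler relative of the self-triggered control mentioned in this section, not the same scheme, and the function name, threshold, and sample values below are hypothetical.

```python
def event_triggered_broadcasts(samples, threshold):
    """Return the sample indices at which the agent would broadcast its state."""
    sent = []
    last_sent = None
    for k, x in enumerate(samples):
        # Transmit the first sample, then only when the drift exceeds the threshold.
        if last_sent is None or abs(x - last_sent) > threshold:
            sent.append(k)
            last_sent = x
    return sent


# Hypothetical state samples from one UAV
samples = [0.0, 0.1, 0.2, 0.6, 0.7, 0.65, 1.3, 1.35]
sent = event_triggered_broadcasts(samples, threshold=0.4)
print(sent)
```

In this toy trace only three of the eight samples are transmitted, which illustrates how triggering rules trade a bounded state error for lower channel usage and energy consumption.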

Multi-UAV collaboration and multimodal perception are defining the next drone operating system

The core of multi-UAV collaboration is no longer simple formation flight. It is integrated swarm-level orchestration across perception, decision-making, planning, and control. Planners at the thousand-drone scale, lightweight semantic map sharing, consensus-based target detection, and graph neural network pursuit strategies show that collaboration is moving from pure control theory into full system engineering.

Multimodal perception addresses the sensing limits of a single UAV. Once vision, LiDAR, AIS, health-monitoring sensors, and edge computing are combined, drones begin to acquire all-weather, cross-terrain, and low-power situational awareness. The value of collaborative multi-UAV SLAM becomes especially clear when satellite navigation is unavailable but high-precision operations must continue.

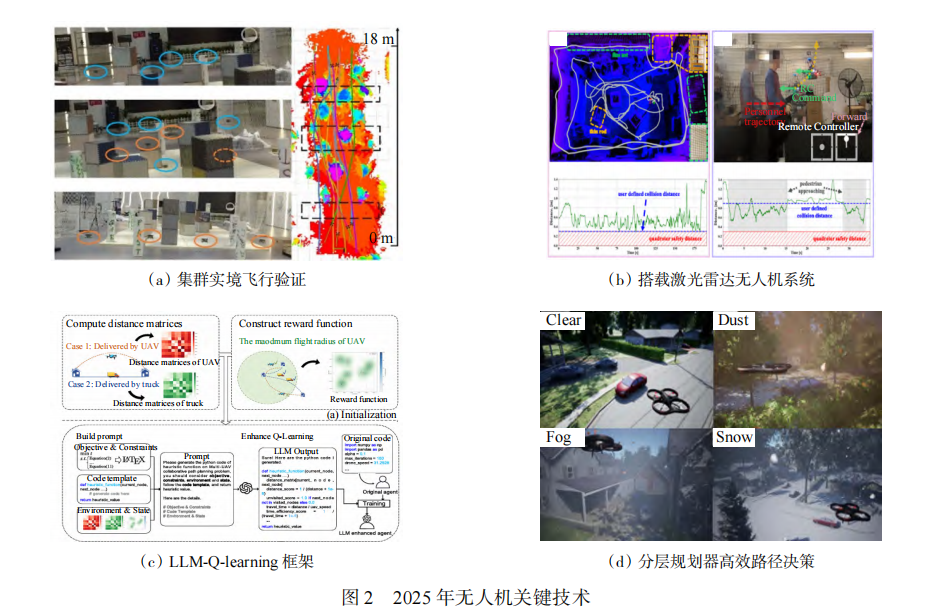

AI Visual Insight: The image highlights key scenarios in multi-UAV collaboration and multimodal perception, including large-scale swarm planning, inspection in complex terrain, LLM-assisted task scheduling, and neuro-symbolic search. It shows that UAV development has advanced beyond isolated algorithm validation toward an engineering model where hardware-software co-design, semantic modeling, and system-level closed-loop verification progress in parallel.

A common view of multimodal fusion inputs looks like this

```python
sensors = {
    "lidar": lidar_points,   # Provide 3D structural information
    "camera": image_frame,   # Provide texture and semantic information
    "imu": imu_signal,       # Provide attitude and motion information
    "gps": gps_fix,          # Provide an absolute localization reference
}

state = fuse_modalities(sensors)  # Build a unified state estimate
```

This code shows that the goal of multimodal fusion is to construct a unified state space, not simply stack sensors together.

Large-model integration is rewriting human-drone interfaces and mission orchestration

The value of LLMs and VLMs is not to replace flight control. Their role is to fill the gap in high-level semantic understanding and global reasoning. They are well suited for task decomposition, resource scheduling, combinatorial optimization, and semantic target search. They are not well suited for direct closed-loop motor control. As a result, the dominant architecture today is clear: large models handle the high-level layer, while conventional controllers handle the low-level layer.
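The layered split can be sketched as follows. A high-level planner turns an instruction into waypoints, a validation step rejects plans that leave an allowed area, and a conventional proportional controller tracks each waypoint. The `high_level_plan` function is a hard-coded stand-in for a real LLM/VLM call, and the geofence limit, gain, and tolerance are hypothetical values.

```python
def high_level_plan(instruction):
    """Stand-in for an LLM/VLM call that returns structured waypoints (x, y)."""
    # A real system would query a model here and parse its structured output.
    if "inspect" in instruction:
        return [(0.0, 10.0), (10.0, 10.0), (10.0, 0.0)]
    return []


def validate(waypoints, limit=50.0):
    """Reject plans that leave the allowed area before they reach the controller."""
    return all(abs(x) <= limit and abs(y) <= limit for x, y in waypoints)


def track(position, waypoint, gain=0.5, tol=0.1, max_steps=100):
    """Proportional controller: step toward the waypoint until within tolerance."""
    x, y = position
    for _ in range(max_steps):
        ex, ey = waypoint[0] - x, waypoint[1] - y
        if (ex * ex + ey * ey) ** 0.5 <= tol:
            break
        x, y = x + gain * ex, y + gain * ey
    return (x, y)


plan = high_level_plan("inspect the north fence line")
position = (0.0, 0.0)
if validate(plan):
    for waypoint in plan:
        position = track(position, waypoint)

print(position)
```

Keeping validation between the two layers means that a hallucinated or out-of-bounds plan is discarded before it can influence the flight controller, while the low-level loop stays deterministic and fast.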

This integration delivers three practical benefits. First, operators can describe complex tasks in natural language. Second, large-scale scheduling problems can gain better search directions through prompt engineering. Third, neuro-symbolic systems create a more interpretable closed loop across semantic search, probabilistic modeling, and route planning.

Dual-use validation shows that drones have entered an era of system-level competition

Application validation in 2025 shows that competition has shifted from single-platform capability to system capability. On the military side, the focus is on manned-unmanned teaming, stealth penetration, low-cost swarms, and node-based air combat. On the civilian side, the focus is on cross-sea logistics, mountain inspection, smart agriculture, and instant delivery.

The industry is converging on a shared view: a general-purpose platform plus modular payloads. This model balances mission extensibility, cost control, and rapid deployment. At the same time, competition platforms and real-world adversarial testing continue to push algorithms to their limits, accelerating the maturity of SLAM, grasping, formation control, and anti-jamming capabilities.

AI Visual Insight: The image reflects the diversity of UAV application validation in 2025, including manned-unmanned collaborative formations, airborne tool exchange, large modular platforms, and cross-sea transport demonstrations. The key insight is that UAV evaluation has expanded from flight performance to payload capability, collaboration accuracy, adaptability in complex environments, and operational viability at industrial scale.

Counter-UAV technology is shifting toward intelligent sensing and agile response

Counter-UAV systems are no longer defined by single-point detection and one-time interception. They are becoming system-level responses to slow, low, and small targets as well as swarm attacks. Multi-point collaborative sensing, cross-sensor fusion, rapid identification, and low-cost interception will become the mainstream direction.

For developers, this means future UAV system design cannot focus only on mission success rate. It must also account for detectability, anti-jamming resilience, communication exposure, and swarm robustness.

FAQ

Q1: Which technical tracks matter most for drones in 2025?

A: Prioritize five tracks: task-oriented route planning, multi-agent reinforcement learning, 5G-A/6G communication networking, multimodal perception fusion, and high-level task orchestration with LLMs/VLMs. Together, these determine whether a system can scale in real-world deployment.

Q2: Can large models directly control drone flight?

A: That is not recommended. Large models are suitable for task understanding, strategy decomposition, and combinatorial optimization, but they still suffer from hallucination and latency issues. In production systems, use a layered architecture: LLMs for high-level planning and traditional controllers for low-level execution.

Q3: What is the real bottleneck in scaling the low-altitude economy?

A: The bottleneck is not single-drone flight capability, but system coordination. The key constraints include airspace management, communication reliability, robust state estimation, multi-UAV coordination efficiency, regulatory compliance, and counter-UAV security requirements.

Core Summary: This article reconstructs the 2025 UAV technology landscape from the underlying survey literature. It focuses on route planning, intelligent decision-making, communication networking, multi-UAV collaboration, multimodal perception, and large-model integration, while also distilling dual-use validation trends and counter-UAV developments to help developers quickly understand the core technical stack of the low-altitude economy era.