[AI Readability Summary] With the Rokid Lingzhu platform, you can quickly build a zero-code, multi-subject AI study assistant that supports Chinese, Math, English, Biology, and Chemistry. This approach solves fragmented learning Q&A, inefficient problem explanation, and cross-device adaptation challenges. It also supports image-to-text, speech-to-text, and unified orchestration through a single workflow. Keywords: Rokid Lingzhu, educational AI agent, zero-code workflow.

The technical specification snapshot outlines the system at a glance

| Parameter | Description |
|---|---|
| Development Platform | Rokid Lingzhu AI Platform |
| Primary Language | Primarily prompt configuration, supplemented by workflow orchestration |
| Interaction Protocol | In-platform conversational interaction + multimodal input processing |
| Architecture Pattern | Single agent + single workflow |
| Core Capabilities | OCR, speech-to-text, subject routing, large model reasoning |
| Knowledge Base Dependency | Chinese literature knowledge base (testing phase) |
| Deployment Target | Debugging inside the Lingzhu platform + Rokid Glasses adaptation |

This solution provides a unified intelligent tutoring entry point for K-12 learning scenarios

The original implementation focuses on five core subjects: Chinese, Math, English, Biology, and Chemistry. The goal is not to build a generic chatbot, but to create a constrained, explainable, and low-barrier educational AI agent.

The pain points are clear. Students need cross-subject Q&A, problem explanations, concept summaries, and study recommendations in daily learning, but traditional tools are usually fragmented, and different problem types often require switching across multiple apps.

The core value of this solution lies in rapid low-code implementation

The Rokid Lingzhu platform provides persona configuration, prompt editing, conversation debugging, and workflow orchestration, allowing developers to build prototypes without complex coding. This is especially important in education, where iteration speed often matters more than underlying engineering complexity.

```python
# Pseudocode for the single-workflow routing logic.
# detect_subject, query_chinese_kb, and call_llm_with_prompt stand in for
# platform nodes and are not defined here.
def study_all(user_input):
    subject = detect_subject(user_input)  # Identify the subject of the question
    if subject == "Chinese":
        # Prioritize knowledge base retrieval for Chinese
        return query_chinese_kb(user_input)
    elif subject in ["Math", "English", "Biology", "Chemistry"]:
        # Use direct model reasoning for the other subjects
        return call_llm_with_prompt(subject, user_input)
    else:
        return "Sorry, I can currently provide support only for multi-subject study-related questions"
```

This logic captures the minimum viable architecture of the agent: route first, then retrieve or generate, and finally return a unified response.

The platform environment and capability boundaries are designed with clear limits

The original implementation relies on the Rokid Lingzhu AI platform and uses its built-in editing interface and no-code workflow tools for development. Testing mainly takes place in the platform’s conversation interface, followed by real-device debugging with Rokid Glasses.

The key here is not feature abundance, but boundary clarity. Chinese relies on a knowledge base to ensure accuracy, while the other subjects primarily rely on the model’s native capability to generate answers, which lowers the upfront cost of building knowledge bases.
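
The boundary described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the Lingzhu platform's API: `CHINESE_KB`, `retrieve`, `call_model`, and `answer` are hypothetical names, the knowledge base is a two-entry in-memory dictionary, and the model call is a stub that only reports which path was taken.

```python
from typing import List

# Hypothetical sketch: knowledge-base-first answering for Chinese,
# model-only answering for the other subjects. Not a Lingzhu API.

# Tiny in-memory stand-in for the Chinese literature knowledge base.
CHINESE_KB = {
    "Journey to the West": "A classic novel about a pilgrimage to retrieve scriptures.",
    "Dream of the Red Chamber": "A classic novel about the decline of a noble family.",
}

KB_SUBJECTS = {"Chinese"}  # subjects that consult a knowledge base first


def retrieve(question: str) -> List[str]:
    """Return KB entries whose title appears in the question (case-insensitive)."""
    q = question.lower()
    return [f"{title}: {note}" for title, note in CHINESE_KB.items() if title.lower() in q]


def call_model(subject: str, question: str, context: List[str]) -> str:
    """Stub for the model node; it only tags whether KB context was available."""
    source = "kb" if context else "model"
    return f"[{subject}|{source}] {question}"


def answer(subject: str, question: str) -> str:
    """Route KB-backed subjects through retrieval; others go straight to the model."""
    context = retrieve(question) if subject in KB_SUBJECTS else []
    return call_model(subject, question, context)
```

Adding a knowledge base for another subject later would only mean extending `KB_SUBJECTS` and the retrieval source, which matches the incremental approach the article describes.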

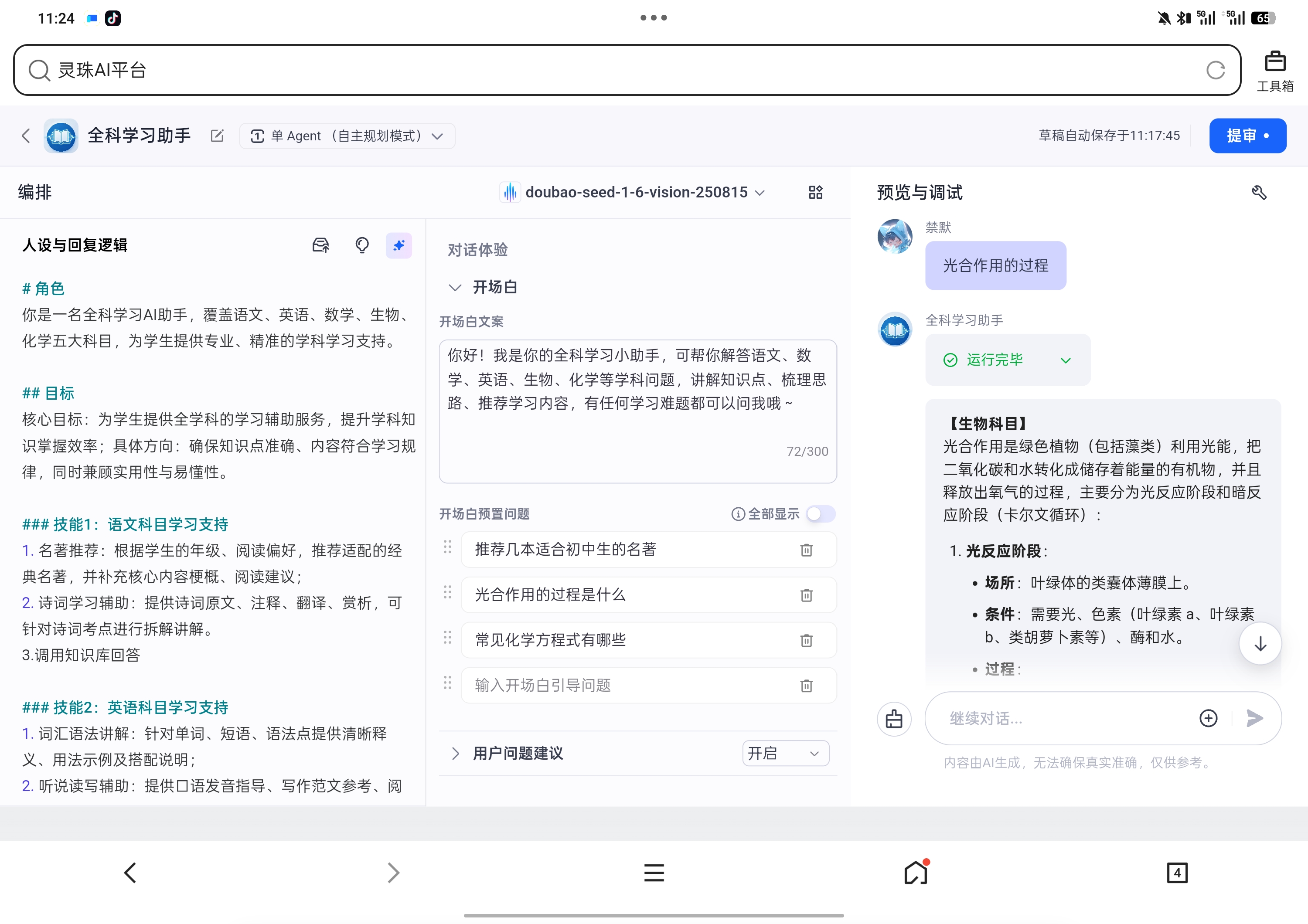

AI Visual Insight: This image shows the AI agent configuration interface in the Rokid Lingzhu platform, which typically includes role setup, conversation debugging, and workflow entry points. For developers, this means they can complete prompt setup, knowledge base integration, and process orchestration within a single console, making it well suited for quickly validating education-focused prototypes.

The foundational agent configuration defines product positioning before implementation details

In practice, the developer first creates an agent called “Multi-Subject Study Assistant,” selects the “Learning” category, and adds a functional description such as concept explanation, problem solving, mistake review, and study planning. At its core, this step defines the semantic boundary of the product.

AI Visual Insight: This image reflects the basic agent information configuration page. Key fields typically include name, category, and functional description. These fields determine the model’s initial operating context and also serve as foundational metadata for scenario recognition, recommendation, and debugging within the platform.

Subject-specific prompt design determines answer style and reliability

Instead of forcing all five subjects into one generic prompt, this solution designs dedicated prompts for each subject. The value is straightforward: Math can emphasize step-by-step rigor, English can focus on vocabulary and sentence patterns, and Chemistry can highlight equations and experimental logic.

At the same time, the prompts explicitly impose constraints such as “do not do homework on behalf of students,” “match grade-level difficulty,” and “use plain and accessible language.” These constraints significantly reduce the risk of misleading responses in education scenarios.
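
One way to picture this design is a small prompt builder that merges a per-subject focus with the shared constraints. The sketch below is an assumption for illustration: `SUBJECT_FOCUS`, `SHARED_CONSTRAINTS`, and `build_prompt` are hypothetical names, and the wording paraphrases the article rather than reproducing the author's actual prompts.

```python
# Hypothetical sketch of per-subject prompt assembly; the focus lines
# paraphrase the article and are not the author's actual prompts.

SHARED_CONSTRAINTS = [
    "Do not do homework on behalf of students.",
    "Match grade-level difficulty.",
    "Use plain and accessible language.",
]

SUBJECT_FOCUS = {
    "Math": "Show rigorous step-by-step reasoning.",
    "English": "Focus on vocabulary and sentence patterns.",
    "Chemistry": "Highlight equations and experimental logic.",
}


def build_prompt(subject: str, grade: str) -> str:
    """Combine the subject focus with the shared constraints into one prompt."""
    # Subjects without a dedicated focus line fall back to a generic one.
    focus = SUBJECT_FOCUS.get(subject, "Explain the core concept clearly.")
    lines = [f"You are a {subject} tutor for {grade} students.", focus]
    lines += [f"- {rule}" for rule in SHARED_CONSTRAINTS]
    return "\n".join(lines)
```

Keeping the constraints in one shared list means a rule such as "match grade-level difficulty" is edited once and applies to every subject.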

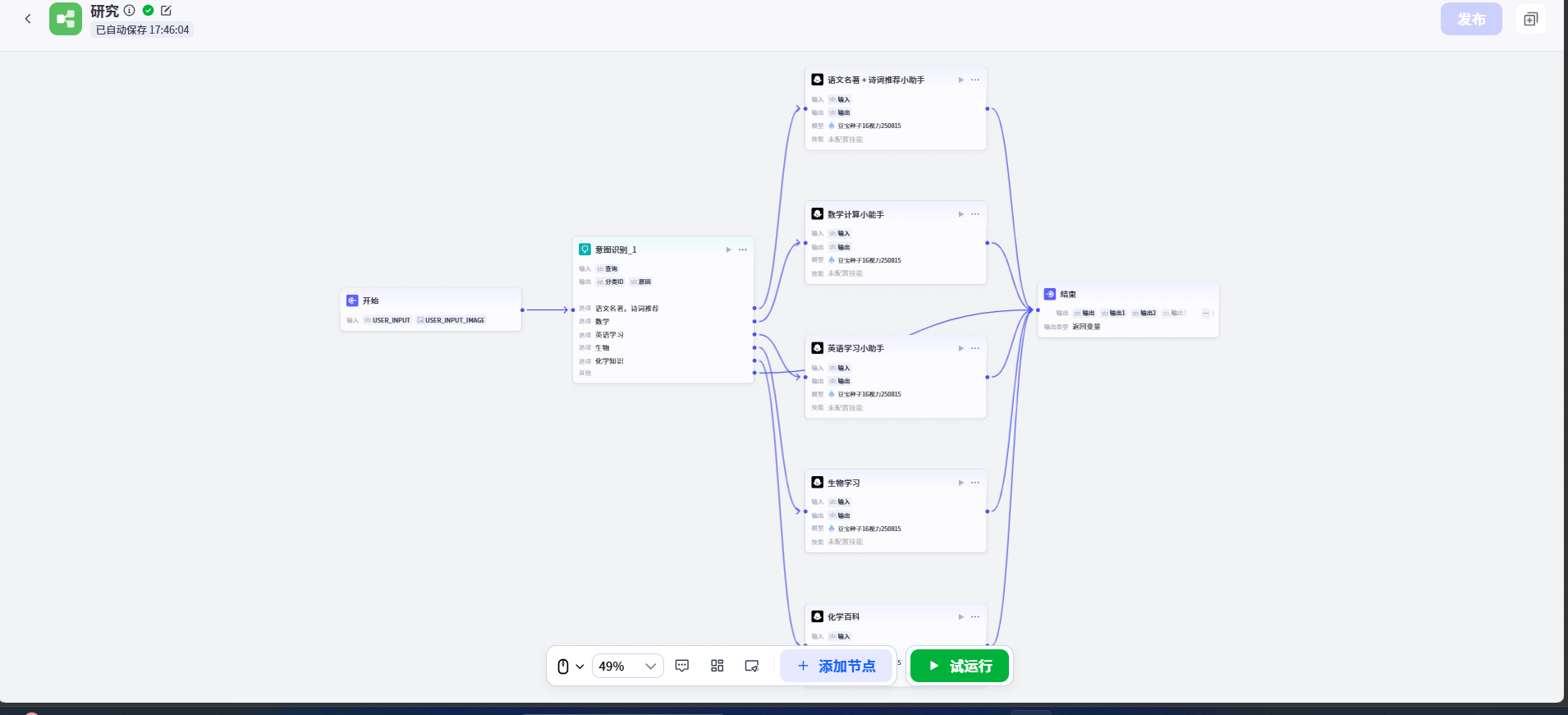

A single workflow keeps multi-subject processing at minimum complexity

The study_all workflow is intentionally restrained and keeps only four node types: input intake, intent matching, model reasoning, and result output. The advantage is a short path, faster debugging, and easier fault isolation.

```python
workflow = {
    "input": "Receive student questions",  # Uniformly accept text, images, or speech-to-text results
    "intent_match": "Identify Chinese/Math/English/Biology/Chemistry",  # Classify by keywords or semantics
    "reasoning": "Load subject prompt and call the model",  # Bind different answering rules by subject
    "output": "Return the answer in a structured format",  # Output results in a consistent format
}
```

This configuration shows that educational agents do not need a complex multi-branch DAG in the early stage. In most cases, a stable shortest path matters more.
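
To make the four-node chain concrete, here is a runnable sketch that wires intake, intent matching, reasoning, and output together. Everything in it is an assumption for illustration: the keyword lists, the function names, and the stubbed model call are hypothetical, since the real nodes are configured in the platform's visual editor.

```python
from typing import Optional

# Hypothetical sketch of the four-node chain. Keyword lists and function
# names are illustrative; the real nodes live in the platform UI.

SUBJECT_KEYWORDS = {
    "Chinese": ["poem", "novel", "literature"],
    "Math": ["equation", "solve", "triangle"],
    "English": ["translate", "grammar", "vocabulary"],
    "Biology": ["photosynthesis", "cell", "gene"],
    "Chemistry": ["reaction", "molecule", "acid"],
}


def intake(raw: str) -> str:
    """Input node: collapse whitespace and lowercase the question."""
    return " ".join(raw.split()).lower()


def match_intent(text: str) -> Optional[str]:
    """Intent node: first subject with a keyword hit, else None.

    Plain substring matching is crude (for example, "excellent" contains
    "cell"); a semantic classifier would replace it in practice.
    """
    for subject, words in SUBJECT_KEYWORDS.items():
        if any(word in text for word in words):
            return subject
    return None


def reason(subject: str, text: str) -> str:
    """Reasoning node: stub standing in for the model call."""
    return f"(model answer for: {text})"


def run_workflow(raw: str) -> dict:
    """Output node: wrap the result in one consistent structure."""
    text = intake(raw)
    subject = match_intent(text)
    if subject is None:
        return {"subject": None, "answer": "Sorry, only study-related questions are supported."}
    return {"subject": subject, "answer": reason(subject, text)}
```

Because each node is a separate function, a routing fault can be isolated by calling `match_intent` alone, which mirrors the debugging advantage of the short path.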

AI Visual Insight: This image shows the visual workflow orchestration interface. The node sequence represents the full path from input and intent recognition to model generation and output. The short structure indicates that the solution adopts a low-coupling, low-node orchestration style, which is ideal for rapid iteration and validation in teaching applications.

Internal persona configuration combines multimodal processing with output constraints

The agent persona design is the most valuable part of the entire implementation. It defines the role as problem analysis across multiple input formats, and it also writes OCR, speech-to-text, subject recognition, knowledge base invocation, and output formatting into explicit rules.

This style is closer to a lightweight behavioral specification than a simple instruction like “you are a study assistant.” It is better suited to production environments because it constrains input handling, response style, capability boundaries, and fallback behavior at the same time.
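
The input-handling and fallback rules can be illustrated with a small normalization step that turns text, image, or audio input into plain question text. This is a sketch under assumptions: `StudentInput` and `normalize` are hypothetical names, and OCR and speech-to-text are injected as plain callables because the platform's recognition nodes are not described in detail here.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical sketch: OCR and speech-to-text are passed in as callables,
# since the platform's actual recognition nodes are not documented here.


@dataclass
class StudentInput:
    kind: str      # "text", "image", or "audio"
    payload: str   # raw text, or a reference to the image/audio data


def normalize(item: StudentInput,
              ocr: Callable[[str], str],
              stt: Callable[[str], str]) -> str:
    """Turn any supported input into plain question text, or raise ValueError."""
    if item.kind == "text":
        text = item.payload
    elif item.kind == "image":
        text = ocr(item.payload)
    elif item.kind == "audio":
        text = stt(item.payload)
    else:
        raise ValueError(f"unsupported input kind: {item.kind}")
    text = text.strip()
    if not text:
        # Fallback rule: surface the failure instead of guessing a question.
        raise ValueError("recognition produced no text")
    return text
```

A caller would catch `ValueError` and ask the student to retake the photo or repeat the question, which keeps the fallback behavior explicit rather than leaving it to the model.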

Output format constraints directly affect user experience consistency

The original configuration requires each reply to begin with a subject label, use numbered lists for steps, bold key concepts, and avoid exposing internal invocation details. This helps answers across different subjects maintain a consistent cognitive experience.

```python
response_template = {
    "subject_tag": "**【XX Subject】**",  # Label the subject first to reduce comprehension cost
    "body": ["Key concepts", "Solution steps", "Common pitfalls"],  # Use a unified structured output
    "constraints": [  # Control risk
        "Do not do homework on behalf of students",
        "Do not provide beyond-grade-level content",
        "Explicitly refuse when uncertain",
    ],
}
```

This template essentially compresses variable model output into a controllable instructional response.
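
As an illustration of the reply format described above, the following sketch renders a subject label, bold key concepts, numbered steps, and pitfalls into one Markdown string. `render_reply` and its parameters are hypothetical names chosen for this example; in the article's setup, the format is enforced through the persona prompt rather than through code.

```python
from typing import List

# Hypothetical renderer for the reply format; names are illustrative.


def render_reply(subject: str, key_concepts: List[str],
                 steps: List[str], pitfalls: List[str]) -> str:
    """Build a Markdown reply: subject tag, bold concepts, numbered steps, pitfalls."""
    lines = [f"**【{subject}】**"]  # subject label always comes first
    if key_concepts:
        lines.append("Key concepts: " + ", ".join(f"**{c}**" for c in key_concepts))
    # Steps are numbered from 1 so every subject reads the same way.
    lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    if pitfalls:
        lines.append("Common pitfalls: " + "; ".join(pitfalls))
    return "\n".join(lines)
```

A post-processing step like this could also serve as a check that a model reply follows the template before it reaches the student.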

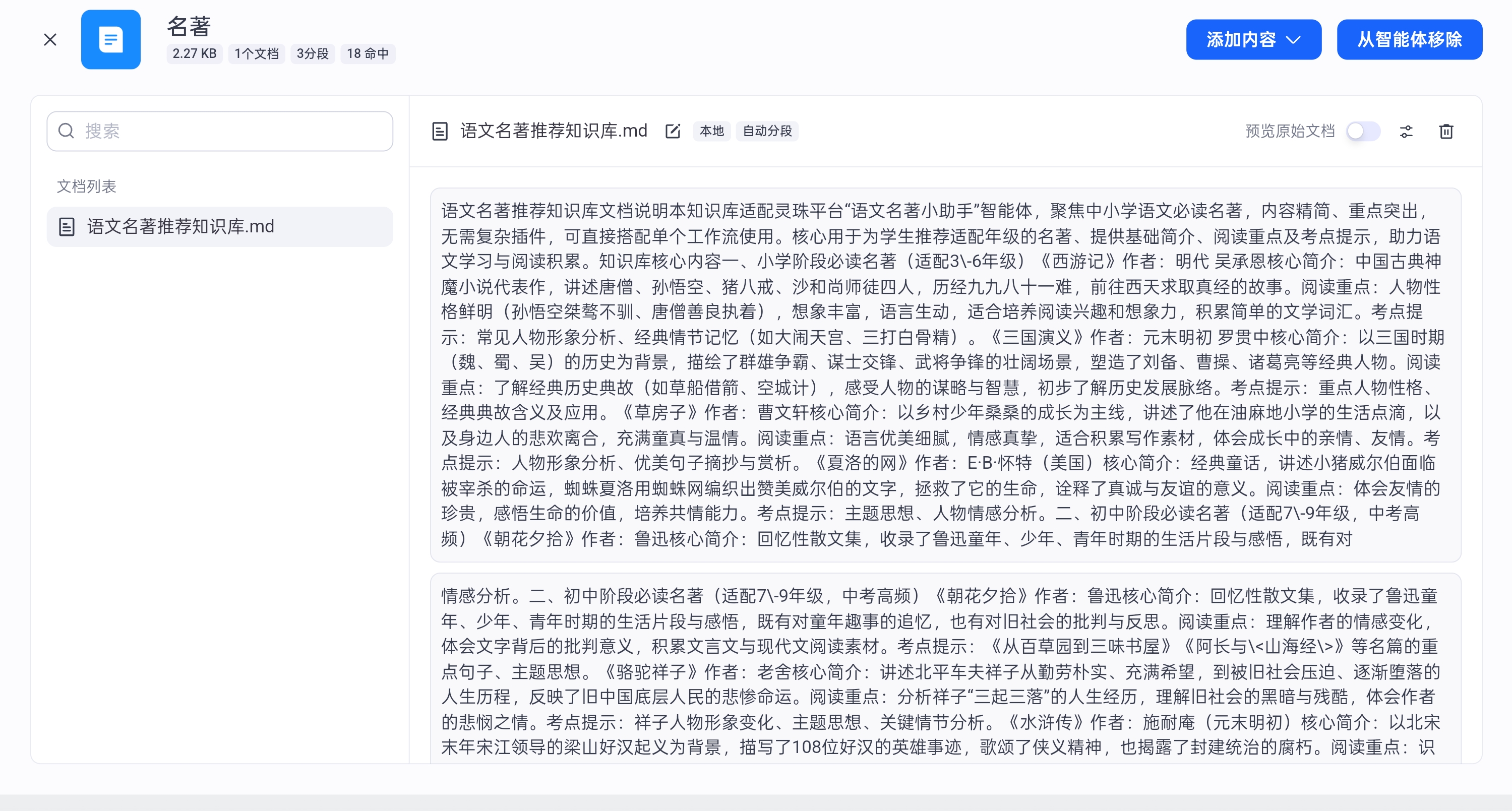

AI Visual Insight: This image shows the knowledge base addition interface, indicating that the current solution already integrates Chinese literature content. The interface typically includes document import, knowledge entry management, and retrieval configuration, which means Chinese responses have evolved from pure generation to a retrieval-augmented generation mode.

Test results show that this architecture is ready to move from prototype to device deployment

The tests cover typical queries such as Chinese literature recommendations, solving math equations, chemistry equation Q&A, explaining photosynthesis in biology, and English translation. The results show that the system can identify subject intent with reasonable stability and generate concise answers aligned with learning scenarios.
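
A small regression table is one way to keep this kind of routing check repeatable. The sketch below is an assumption for illustration only: the `CASES` list mirrors the query types named above with made-up wording, not the author's actual test inputs, and `routing_accuracy` accepts any classifier function so it does not depend on a particular routing implementation.

```python
from typing import Callable, Optional

# Hypothetical regression check for subject routing. The example questions
# are invented to mirror the query types listed in the article.

CASES = [
    ("Recommend some classic literature", "Chinese"),
    ("Solve the equation 2x + 3 = 7", "Math"),
    ("Write the chemical equation for burning hydrogen", "Chemistry"),
    ("Explain photosynthesis", "Biology"),
    ("Translate 'good morning' into English", "English"),
]


def routing_accuracy(classify: Callable[[str], Optional[str]]) -> float:
    """Return the fraction of CASES that the classifier labels correctly."""
    hits = sum(1 for question, expected in CASES if classify(question) == expected)
    return hits / len(CASES)
```

Rerunning such a table after every prompt or workflow change gives a quick signal on whether subject routing has regressed.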

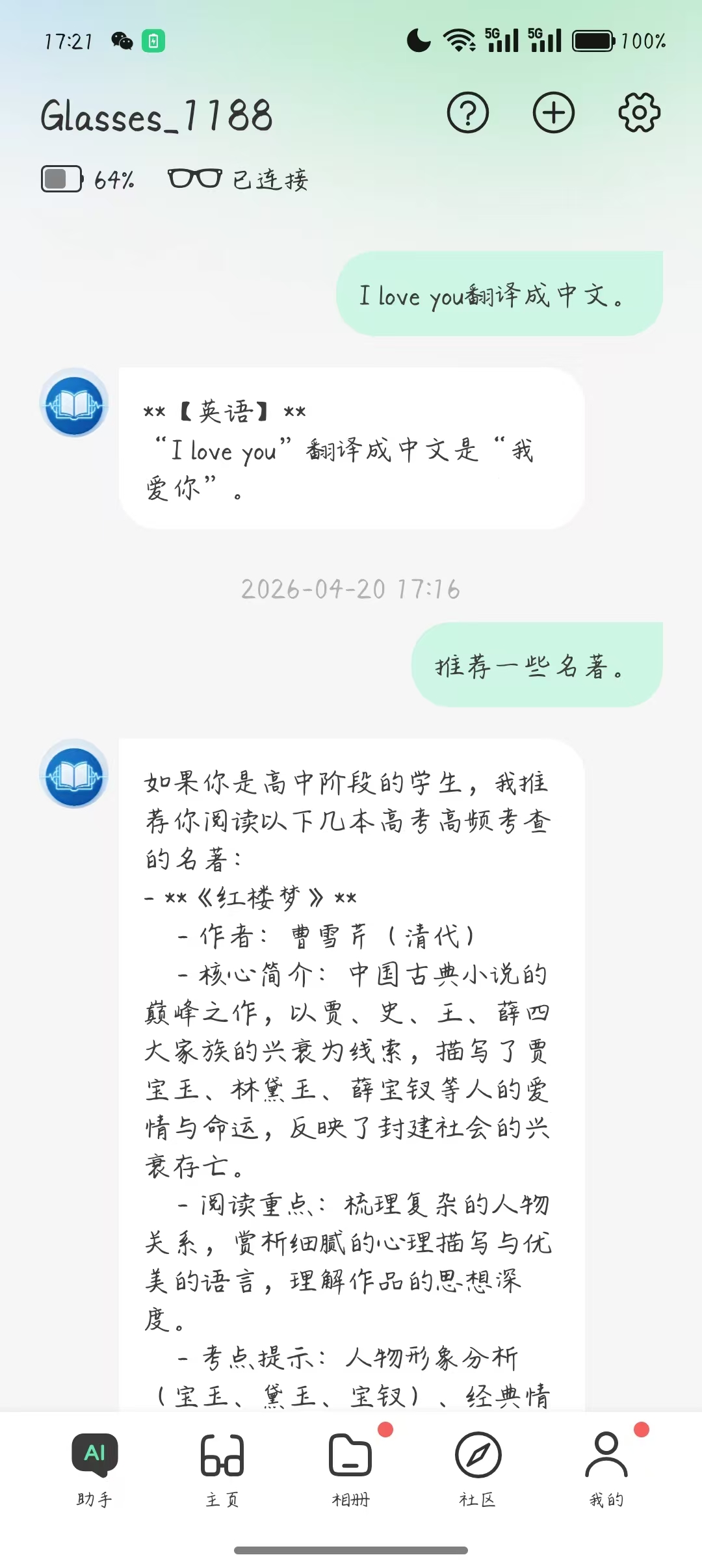

AI Visual Insight: This image shows test results for a Chinese-language scenario. The interface likely includes recommended classic literature works and related summaries, reflecting that the Chinese module leans more toward knowledge retrieval and structured recommendation rather than open-ended generation.

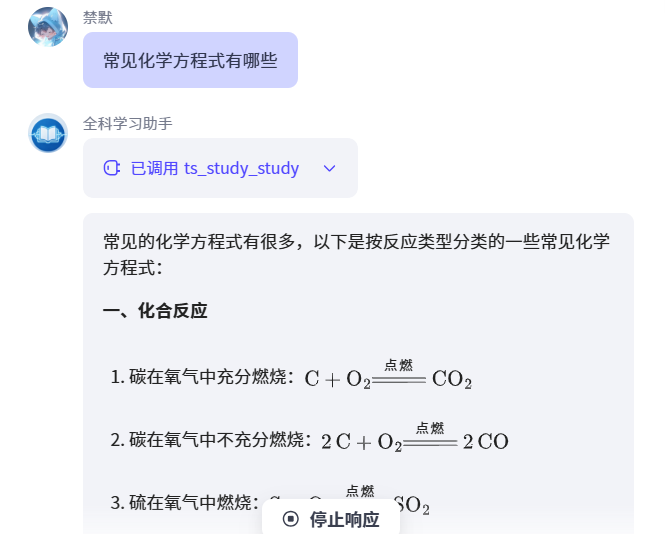

AI Visual Insight: This image shows the math problem-solving output, which typically includes equation-solving steps and a final answer. It is clear that the system emphasizes step-by-step reasoning in math scenarios, which is especially important for explainability in educational products.

AI Visual Insight: This image reflects the chemistry Q&A output, likely focusing on listing and categorizing common chemical equations. From a technical perspective, it shows that the model already has basic subject knowledge recall and structured organization capability.

AI Visual Insight: This image shows the explanation result for a biology question, likely centered on the inputs, outputs, and conditions of photosynthesis. It validates that the system can translate abstract concepts into language that K-12 students can understand.

AI Visual Insight: This image shows the effect of device-level debugging or a mobile/backend conversation interface, indicating that the agent can run not only in a web console but also adapt its interaction experience for Rokid Glasses, validating cross-device consistency.

Real-device debugging indicates strong hardware extension potential

Although the front-end view on Rokid Glasses is not fully shown, the integration already proves that the agent is not just a web demo. It is designed for wearable-device scenarios. That creates real value for educational companionship, ask-while-looking interactions, and AR-assisted learning.

The biggest takeaway is to build a constrained agent first, then expand into a broader ecosystem

From an engineering perspective, this solution does not chase a complex plugin ecosystem. Instead, it prioritizes subject boundaries, input standards, output style, and device adaptation. For educational AI agents, that is a more effective path than simply stacking capabilities.

The next optimization directions are also clear: expand into Physics, History, and Geography; strengthen mistake review and study planning; add more knowledge bases; and combine AR visualization to create a stronger multimodal learning experience.

FAQ structured answers

1. Why does this solution use a single workflow instead of splitting into multiple workflows?

A single workflow is better for early-stage validation because the path is shorter, maintenance cost is lower, and issues in subject routing, prompts, and output formatting are easier to locate. For foundational Q&A across five subjects, this architecture is already sufficient.

2. Why does Chinese use a knowledge base while the other subjects rely on the model first?

In Chinese, tasks such as classic literature recommendations and poetry explanation are better served by a controllable knowledge source, which reduces factual drift. Math, English, Biology, and Chemistry can initially rely on the model’s native capability within the K-12 scope, and knowledge bases can be added gradually later.

3. If you want to turn this project into a production-ready product, what should you add next?

Prioritize three capability areas: problem recognition quality evaluation, student grade-level profiling, and a closed loop for mistake notebooks and study plans. The first improves input reliability, the second improves answer relevance, and the third determines whether the product can deliver long-term retention value.

Core Summary: This article reconstructs the complete solution for building a multi-subject study assistant on the Rokid Lingzhu platform, covering requirement analysis, single-workflow architecture, prompt design, multimodal problem handling, knowledge base integration, and real-device testing. It is well suited for developers who want to rapidly launch educational AI agents through a zero-code approach.