This article breaks down a high-concurrency flash sale optimization pattern: use Redis + Lua to complete inventory validation and user deduplication in milliseconds, then hand off order creation to an asynchronous queue so a dedicated worker thread can persist orders at a controlled pace. This design prevents the database from being overwhelmed by burst writes, reduces response latency, and decouples core business workflows. Keywords: Redis, Lua, asynchronous flash sale.

Technical specifications at a glance

| Parameter | Description |
|---|---|
| Primary languages | Java, Lua |
| Runtime framework | Spring Boot |
| Core protocols | HTTP, Redis RESP |
| Core middleware | Redis, JVM BlockingQueue |
| Concurrency model | Tomcat threads + dedicated consumer thread |
| Typical goals | Prevent overselling, enforce one order per user, smooth traffic spikes |
| Star count | Not provided in the original article |
| Core dependencies | StringRedisTemplate, DefaultRedisScript, BlockingQueue |

Traditional synchronous flash sale designs amplify database bottlenecks under high concurrency

Many flash sale systems already use Lua scripts to move inventory checks, stock deduction, and user deduplication into Redis for atomic execution. This step prevents overselling and blocks duplicate orders, but it does not solve the final write amplification problem when persisting data to the database.

Once Redis validation succeeds, the application thread still has to synchronously create the order, deduct database stock, write activity records, and sometimes call points or notification services. If thousands of requests succeed within a single second, the database quickly becomes the system bottleneck.

The root problem in the synchronous path is that slow operations occupy Web threads

    User request -> Redis validation -> Create order -> Write database -> Call other services -> Return result

The issue in this chain is not Redis. The real issue is that Tomcat threads remain blocked on database I/O for too long. Once the thread pool is exhausted, subsequent requests can only queue, time out, or be rejected.

The core goal of asynchronous flash sale design is to split the fast path from the slow path

The approach is to decouple eligibility validation from order persistence: Tomcat threads should handle only lightweight, millisecond-level work, while slower operations are delegated to background consumer threads. This split is what lets the system deliver both fast responses and high traffic tolerance.

The synchronous model emphasizes completing the order immediately. The asynchronous model emphasizes confirming eligibility immediately. Users first receive a response such as “queued” or “processing,” and the backend consumes order messages later at a controlled rate.

The asynchronous execution path is better aligned with flash sale traffic patterns

    User request -> Lua script validation -> Enqueue async task -> Return immediately
                                          -> Consumer thread persists order -> Notify user of result

This effectively adds a buffer layer in front of the database. Redis and the queue absorb frontend traffic bursts, while the database processes requests at a stable throughput, which smooths peak load.
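As a rough illustration of the smoothing effect, the sketch below estimates how long a backlog of validated orders takes to drain at a steady persistence rate. The class name `BufferEstimate` and any numbers passed to it are hypothetical, chosen only to make the arithmetic concrete; they are not measurements from this article.

```java
/** Back-of-the-envelope helper; the name and inputs are illustrative assumptions. */
class BufferEstimate {

    /**
     * Seconds needed to drain a backlog when the consumer persists
     * ordersPerSecond orders each second (rounded up to whole seconds).
     */
    static long drainSeconds(long backlog, long ordersPerSecond) {
        if (backlog < 0 || ordersPerSecond <= 0) {
            throw new IllegalArgumentException("backlog must be >= 0 and rate must be > 0");
        }
        // Ceiling division: a partial final second still counts as a second.
        return (backlog + ordersPerSecond - 1) / ordersPerSecond;
    }
}
```

For example, if 5,000 requests pass validation within one second and the consumer persists 500 orders per second, `BufferEstimate.drainSeconds(5000, 500)` gives 10: the database sees a steady ten-second stream instead of a one-second spike. The same numbers also suggest how much queue capacity such a burst would need.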

Redis and Lua should handle eligibility validation rather than the entire transaction lifecycle

The value of Lua in flash sale systems is atomicity. It combines the checks for sufficient stock and whether the user has already placed an order into a single Redis execution, which prevents overselling and duplicate purchases under concurrency.

Compared with Redis transactions (MULTI/EXEC), where queued commands cannot see each other's results until EXEC runs, a Lua script can branch on the values it reads. Compared with distributed locks, it needs no lock acquisition round trips and leaves no application threads waiting on a lock, which makes it better suited to high-frequency, short-lived operations.

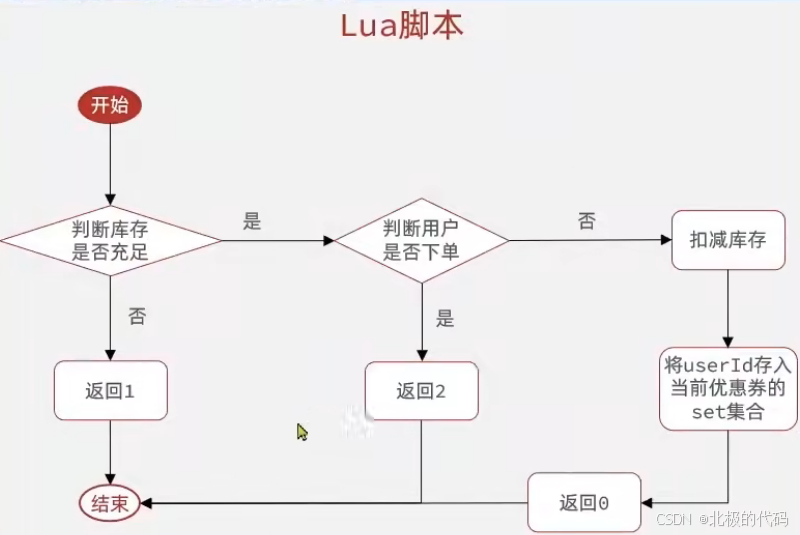

AI Visual Insight: The image illustrates the critical flash sale eligibility validation path. A Lua script reads both the inventory key and the order set key, then uses Redis’s single-threaded execution model to complete conditional checks, stock decrement, and user order recording in one atomic operation. This clearly shows how “prevent overselling + prevent duplicate orders” can be enforced within the same technical workflow.

The Lua script compresses three steps into one atomic execution

    -- 1. Get parameters: voucher ID and user ID
    local voucherId = ARGV[1]
    local userId = ARGV[2]

    -- 2. Build the inventory key and order record key
    local stockKey = 'seckill:stock:' .. voucherId
    local orderKey = 'seckill:order:' .. voucherId

    -- 3. Check whether stock is sufficient
    -- GET yields false for a missing key, so tonumber() gives nil; treat that as out of stock
    local stock = tonumber(redis.call('get', stockKey))
    if (stock == nil or stock <= 0) then
        return 1 -- Out of stock, fail immediately
    end

    -- 4. Check whether the user has already placed an order
    if (redis.call('sismember', orderKey, userId) == 1) then
        return 2 -- Users who already ordered cannot purchase again
    end

    -- 5. Decrement Redis stock and record the purchasing user
    redis.call('incrby', stockKey, -1) -- Atomically decrement stock
    redis.call('sadd', orderKey, userId) -- Write to the user deduplication set
    return 0 -- Flash sale eligibility validation succeeded

This script completes inventory checks, deduplication validation, and state updates inside Redis in a single operation. It is the core implementation for preventing overselling in a flash sale.
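To make the script's return-code contract easier to reason about, here is a minimal in-memory model of the same three steps in plain Java. It is a stand-in for illustration only, not a Redis client: the class `EligibilityModel` and its method names are assumptions, and `synchronized` plays the role that Redis's one-script-at-a-time execution plays for the Lua script.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** In-memory stand-in for the Lua script's logic (hypothetical; no Redis involved). */
class EligibilityModel {
    private final Map<String, Integer> stock = new HashMap<>();
    private final Map<String, Set<String>> buyers = new HashMap<>();

    /** Counterpart of preloading the stock key for a voucher. */
    public synchronized void preload(String voucherId, int units) {
        stock.put(voucherId, units);
    }

    /**
     * Same codes as the script: 0 = eligible, 1 = out of stock, 2 = duplicate order.
     * The whole method is one critical section, so no other call can interleave
     * between the checks and the updates.
     */
    public synchronized int tryReserve(String voucherId, String userId) {
        Integer left = stock.get(voucherId);
        if (left == null || left <= 0) {
            return 1; // missing or exhausted stock
        }
        Set<String> voucherBuyers = buyers.computeIfAbsent(voucherId, k -> new HashSet<>());
        if (voucherBuyers.contains(userId)) {
            return 2; // this user already holds a reservation
        }
        stock.put(voucherId, left - 1);
        voucherBuyers.add(userId);
        return 0;
    }

    public synchronized int remaining(String voucherId) {
        return stock.getOrDefault(voucherId, 0);
    }
}
```

With two units preloaded for voucher `v1`, the calls `tryReserve("v1", "u1")`, `tryReserve("v1", "u1")`, `tryReserve("v1", "u2")`, and `tryReserve("v1", "u3")` return 0, 2, 0, and 1 in that order. Because the stock check comes first, a repeat buyer sees code 1 rather than 2 once stock runs out, which matches the order of checks in the script.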

Redis preloading moves hot inventory reads and writes ahead of the database

When a flash sale campaign is created, the inventory should be synchronized into Redis in advance. That way, high-concurrency traffic does not hit the database first. Instead, it reaches the in-memory layer and completes stock validation there.

This step is straightforward, but critical. Without inventory preloading, the subsequent Lua validation loses its purpose because hot reads and writes would still fall back to the database.

The Java service preloads inventory when a flash sale voucher is created

    @Override
    @Transactional
    public void addSeckillVoucher(Voucher voucher) {
        save(voucher); // Save base voucher information

        SeckillVoucher seckillVoucher = new SeckillVoucher();
        seckillVoucher.setVoucherId(voucher.getId());
        seckillVoucher.setStock(voucher.getStock());
        seckillVoucher.setBeginTime(voucher.getBeginTime());
        seckillVoucher.setEndTime(voucher.getEndTime());
        seckillVoucherService.save(seckillVoucher); // Save flash sale business data

        // Preload inventory into Redis
        stringRedisTemplate.opsForValue().set(
            "seckill:stock:" + voucher.getId(),
            voucher.getStock().toString()
        );
    }

This code writes flash sale inventory into Redis ahead of time, providing the data foundation for subsequent millisecond-level eligibility validation.

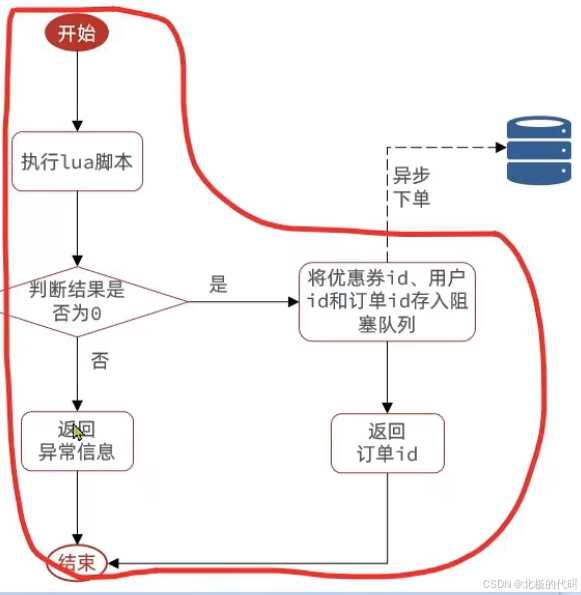

A blocking queue lets requests return quickly while orders are processed asynchronously

After Lua returns success, the system should not write to the database synchronously. Instead, it should publish minimal order data such as user ID and voucher ID into a queue. The producer thread can then end the request immediately, while the consumer thread processes the database transaction later.

The original example uses a JVM BlockingQueue. It fits monolithic applications or learning scenarios that demonstrate asynchronous flash sale design. It does not depend on an external message queue and is simple to implement, but it also comes with limited memory capacity and the risk of message loss if the service crashes.

AI Visual Insight: The image shows the asynchronous ordering flow after Lua validation succeeds and the request enters a blocking queue. It highlights the separation of responsibilities between Tomcat threads and background consumer threads: the former handles only fast validation and task submission, while the latter handles database writes and follow-up notifications. This reflects a system design centered on load smoothing and decoupling.

The producer thread is responsible only for validation and enqueueing

    private static final DefaultRedisScript<Long> SECKILL_SCRIPT;

    static {
        SECKILL_SCRIPT = new DefaultRedisScript<>();
        SECKILL_SCRIPT.setLocation(new ClassPathResource("seckill.lua"));
        SECKILL_SCRIPT.setResultType(Long.class);
    }

    public Result seckillVoucher(Long voucherId) {
        Long userId = UserHolder.getUser().getId();

        // Execute the Lua script to complete eligibility validation
        Long result = stringRedisTemplate.execute(
            SECKILL_SCRIPT,
            Collections.emptyList(),
            voucherId.toString(), userId.toString()
        );

        // The result is nullable; guard before unboxing to avoid a NullPointerException
        if (result == null) {
            return Result.fail("Flash sale is temporarily unavailable");
        }

        int r = result.intValue();
        if (r != 0) {
            return Result.fail(r == 1 ? "Out of stock" : "Duplicate orders are not allowed");
        }

        // In a real project, this should write to a blocking queue or MQ
        return Result.ok("Purchase successful, now processing");
    }

This code reduces the flash sale entry point to a single Lua execution, ensuring that the Web thread completes request handling in a very short time. The enqueue step itself is left as a comment in this example.
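The enqueue step that the producer leaves as a comment could look roughly like the sketch below. It is a plain-JDK illustration under stated assumptions: the class `OrderHandoff`, the `submit` method, and the shape of `SeckillOrder` are hypothetical names, and the bounded `ArrayBlockingQueue` with a non-blocking `offer` is one possible choice rather than the article's confirmed implementation.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

/** Hypothetical hand-off point between request threads and the consumer thread. */
class OrderHandoff {

    /** Minimal order payload: only what the consumer needs to persist the order. */
    static final class SeckillOrder {
        private final long userId;
        private final long voucherId;

        SeckillOrder(long userId, long voucherId) {
            this.userId = userId;
            this.voucherId = voucherId;
        }

        long getUserId() { return userId; }
        long getVoucherId() { return voucherId; }
    }

    private final BlockingQueue<SeckillOrder> orderQueue;

    OrderHandoff(int capacity) {
        // Bounded on purpose: an unbounded queue would hide overload until memory runs out.
        this.orderQueue = new ArrayBlockingQueue<>(capacity);
    }

    /**
     * Non-blocking enqueue. Returns false when the queue is full instead of
     * stalling the request thread, so the caller can fail fast.
     */
    boolean submit(long userId, long voucherId) {
        return orderQueue.offer(new SeckillOrder(userId, voucherId));
    }

    BlockingQueue<SeckillOrder> getOrderQueue() {
        return orderQueue;
    }
}
```

Using `offer` rather than `put` keeps the request thread from blocking when the queue is saturated. The trade-off is that a rejected `submit` happens after the Lua script has already decremented stock and recorded the user, so the caller would need to undo that reservation in Redis before returning a failure; that compensation step is an assumption about what a complete implementation needs and is not shown here.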

A dedicated consumer thread handles database persistence and compensation logic

The consumer thread does not belong to the Tomcat thread pool, so it does not consume Web request resources. It can block continuously on the queue and wake up only when an order arrives.

This model is essentially the producer-consumer pattern: producers optimize for fast responses, while consumers optimize for stable processing. Thread isolation between the two is a key reason why high-concurrency flash sale systems can run reliably.

The background consumer thread creates orders and persists stock updates

    @Component
    @Slf4j
    public class SeckillConsumer {

        @Autowired
        private OrderService orderService;

        @Autowired
        private SeckillService seckillService;

        @PostConstruct
        public void startConsumer() {
            Thread consumerThread = new Thread(() -> {
                while (true) {
                    try {
                        // Block and wait when no task is available
                        SeckillService.SeckillOrder order =
                            seckillService.getOrderQueue().take();
                        processOrder(order);
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                        break;
                    } catch (Exception e) {
                        // Failed orders should be logged and compensated
                        log.error("Failed to process order", e);
                    }
                }
            });
            consumerThread.setDaemon(true);
            consumerThread.start();
        }

        private void processOrder(SeckillService.SeckillOrder order) {
            orderService.createOrder(order.getUserId(), order.getVoucherId()); // Create the order
            boolean success = orderService.deductStock(order.getVoucherId()); // Deduct database stock
            if (!success) {
                log.error("Failed to deduct database stock, voucherId={}", order.getVoucherId());
            }
        }
    }

This code demonstrates the minimum viable implementation of asynchronous persistence: pull a task from the queue, execute the transaction, and record failures, forming a consumption loop independent of the Web layer.
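The consumer above only logs failures. One way the "logged and compensated" idea could be taken a step further is bounded retry with a dead-letter list, sketched below in plain Java. The class `RetryingConsumer`, its `process` method, and the in-memory dead-letter list are all hypothetical; a real system would also add backoff between attempts and store failed tasks durably.

```java
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.function.Consumer;

/** Hypothetical consumer loop with bounded retries and an in-memory dead-letter list. */
class RetryingConsumer<T> implements Runnable {
    private final BlockingQueue<T> queue;
    private final Consumer<T> handler;
    private final int maxAttempts;
    private final List<T> deadLetters = new CopyOnWriteArrayList<>();

    RetryingConsumer(BlockingQueue<T> queue, Consumer<T> handler, int maxAttempts) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        this.queue = queue;
        this.handler = handler;
        this.maxAttempts = maxAttempts;
    }

    /** Runs the handler up to maxAttempts times; parks the task if every attempt fails. */
    public boolean process(T task) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                handler.accept(task);
                return true;
            } catch (RuntimeException e) {
                // A real system would log the failure and back off before retrying.
            }
        }
        deadLetters.add(task);
        return false;
    }

    @Override
    public void run() {
        try {
            while (!Thread.currentThread().isInterrupted()) {
                process(queue.take()); // blocks while the queue is empty
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt and exit the loop
        }
    }

    public List<T> deadLetters() {
        return deadLetters;
    }
}
```

Retrying is only safe when the handler is idempotent: if an attempt fails after the order row was written, a second attempt must not create a second order. Tasks that end up in `deadLetters` are the ones that need manual or scheduled compensation.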

This design improves throughput but still has reliability limits

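The BlockingQueue approach works well for teaching and single-node experiments, but it is not suitable as a final production architecture. Its biggest weakness is that messages are not durable. Application restarts, unexpected process exits, or queue saturation can all cause order loss. Because Redis has already decremented stock and recorded the user by the time a message is enqueued, a lost message leaves that user marked as having purchased with no corresponding order in the database.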

If this business flow moves into a real production environment, the next step should be to upgrade to Redis Streams, RabbitMQ, RocketMQ, or Kafka, combined with idempotent consumption, retry logic, dead-letter queues, and observability alerts.

You can think about the architectural boundary like this

Learning version: Redis + Lua + BlockingQueue

Production version: Redis + Lua + Durable MQ + Idempotent persistence + Compensation mechanisms

The first solves the high-concurrency design problem. The second solves the high-reliability delivery problem. They are not alternatives; they are stages in an architectural evolution.

FAQ

Why is Redis + Lua alone not enough?

Lua solves only the atomicity of eligibility validation. It does not eliminate the instantaneous write pressure on the database. If successful requests still persist synchronously, Tomcat threads will remain occupied by slow I/O, and system throughput will still be limited.

Can BlockingQueue be used directly in production?

Not recommended. It does not provide built-in persistence, so service crashes can cause message loss. It is better suited as a teaching example or a lightweight asynchronous option for a single-node deployment. Production systems should replace it with a message queue that supports acknowledgments and retries.

After switching to asynchronous ordering, how do you still guarantee one order per user and prevent overselling?

The one-order-per-user rule and Redis-side stock deduction are both enforced first by the Lua script. The order consumer processes only requests that have already passed eligibility validation. The database layer should still add idempotency checks and optimistic stock deduction as a second line of defense.
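The two database-side guards mentioned above can be modeled in a few lines of plain Java. This is an in-memory sketch, not real data access code: the class `OrderStore` is hypothetical, the compare-and-set loop stands in for a conditional update such as `UPDATE ... SET stock = stock - 1 WHERE stock > 0`, and the key set stands in for a unique index on the user and voucher pair. The table and column names implied by those comparisons are assumptions.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

/** In-memory stand-in for the database layer's second line of defense (hypothetical). */
class OrderStore {
    private final AtomicInteger stock;
    private final Set<String> orderKeys = ConcurrentHashMap.newKeySet();

    OrderStore(int initialStock) {
        this.stock = new AtomicInteger(initialStock);
    }

    /** Conditional decrement: succeeds only while stock is still positive. */
    boolean deductStock() {
        while (true) {
            int current = stock.get();
            if (current <= 0) {
                return false; // nothing left; never goes negative
            }
            if (stock.compareAndSet(current, current - 1)) {
                return true;
            }
            // Another thread changed the value first; re-read and try again.
        }
    }

    /**
     * Idempotent order creation: a replayed message for the same user and
     * voucher is rejected by the uniqueness check and changes nothing.
     */
    boolean createOrder(long userId, long voucherId) {
        String key = userId + ":" + voucherId;
        if (!orderKeys.add(key)) {
            return false; // duplicate delivery or duplicate purchase
        }
        if (!deductStock()) {
            orderKeys.remove(key); // roll back the reservation of the key
            return false;
        }
        return true;
    }

    int remainingStock() {
        return stock.get();
    }
}
```

With one unit of stock, `createOrder(1, 10)` succeeds, a replay of `createOrder(1, 10)` is rejected as a duplicate, and `createOrder(2, 10)` is rejected because stock is exhausted. These guards matter most once the queue is replaced by a message broker that may deliver the same message more than once.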

Core summary

This article reconstructs an optimization approach for flash sale systems around three layers: Redis atomic validation, Lua-based oversell prevention and one-order-per-user enforcement, and asynchronous order processing through a blocking queue. It explains how this architecture reduces peak database write pressure, shortens user response time, and provides key Java and Lua implementation patterns.