This article connects three foundational works (InstructGPT, Chain of Thought, and Llama 3.1) to explain how large language models achieve instruction alignment, activate reasoning, and scale open model architectures. Together, they address three persistent problems: models that can generate text but do not follow instructions, can answer questions but reason unreliably, and can be trained but remain difficult to scale. Keywords: RLHF, CoT, Llama 3.1.

Technical specifications are easy to compare at a glance

| Parameter | Details |
|---|---|
| Primary language and framework | Python, PyTorch |
| Core protocols/paradigms | RLHF, PPO, DPO, Few-shot Prompting |
| Representative models | InstructGPT, PaLM, Llama 3.1 |
| Context capacity | Llama 3.1 supports 128K tokens |
| Reference scale | GPT-3 175B, PaLM 540B, Llama 3.1 405B |
| Core dependencies | openai SDK, torch, Transformer attention mechanisms |
| Key signals | Human preferences, chains of thought, multi-stage post-training |
| Original popularity | The source article was a CSDN paper note with 100+ views and inclusion in a column |

InstructGPT redefined the alignment pipeline for large language models

The value of InstructGPT does not come from model scale alone. Its real contribution is establishing the most important engineering paradigm in modern LLMs: perform large-scale pretraining first, then align model behavior through human feedback. It solves a fundamental problem: a model may be good at continuation, but that does not mean it reliably follows instructions.

Its core pipeline has three stages. Supervised fine-tuning (SFT) teaches the model a basic response format with high-quality instruction data. Reward modeling (RM) trains a reward model on human preference comparisons. Proximal policy optimization (PPO) then optimizes the policy against the reward model's scores. The result is output that is more helpful, honest, and harmless, the well-known 3H objective.

The three-stage training skeleton in InstructGPT is straightforward

```python
stages = ["SFT", "Reward Model", "PPO"]

for stage in stages:
    # Execute supervised fine-tuning, reward modeling, and reinforcement learning optimization in sequence
    print(f"Current stage: {stage}")
```

This snippet shows the three-stage training order in InstructGPT in its simplest form.
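The reward-modeling stage can be sketched as a pairwise ranking loss: the reward model should score the human-preferred response above the rejected one. The following is a minimal sketch, assuming scalar reward scores are already available. The function name `pairwise_rm_loss` and the example scores are hypothetical; a real reward model would produce these scores with a neural network rather than take them as plain floats.

```python
import math

def pairwise_rm_loss(score_chosen: float, score_rejected: float) -> float:
    """Pairwise ranking loss: -log(sigmoid(score_chosen - score_rejected))."""
    margin = score_chosen - score_rejected
    # softplus(-margin) equals -log(sigmoid(margin)); branch for numerical stability
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical scores: the loss is small when the preferred response scores higher
print(pairwise_rm_loss(2.0, 0.5))
print(pairwise_rm_loss(0.5, 2.0))
```

The loss shrinks as the gap between the preferred and rejected scores grows, which is what pushes the reward model to rank responses the way annotators did.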

RLHF also introduces clear trade-offs. First, annotation is expensive: preference data is costly to collect and inherently subjective. Second, there is an “alignment tax”: after becoming safer and more compliant, the model may lose some raw benchmark performance. The InstructGPT paper reports such regressions on several public NLP datasets.

Chain of Thought showed that prompting can activate reasoning

The key conclusion of CoT is that large models are not inherently incapable of reasoning. Instead, they often need the right prompt structure to explicitly unfold intermediate steps. When the prompt includes examples in a “question → reasoning process → answer” format, models improve significantly on complex arithmetic, symbolic, and commonsense tasks.

Few-shot CoT depends on demonstrations. Zero-shot CoT is lighter weight and often requires only one additional phrase after the question: “Let’s think step by step.” However, CoT is not a universal solution. It depends heavily on model scale. Smaller models often benefit less and can even be distracted by overly long reasoning traces.
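The difference between the two prompting styles is easiest to see in the prompt text itself. The sketch below builds both variants as plain strings. The helper names, the `Q:`/`A:` layout, and the demonstration are illustrative assumptions, not a format prescribed by the papers.

```python
def build_few_shot_cot_prompt(demos, question):
    """Format (question, reasoning, answer) demonstrations ahead of the new question."""
    parts = []
    for demo_question, reasoning, answer in demos:
        parts.append(f"Q: {demo_question}\nA: {reasoning} The answer is {answer}.")
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)

def build_zero_shot_cot_prompt(question):
    """Append the trigger phrase instead of supplying demonstrations."""
    return f"Q: {question}\nA: Let's think step by step."

# Hypothetical demonstration, written for illustration only
demos = [(
    "Tom has 3 apples and buys 2 more. How many apples does he have?",
    "Tom starts with 3 apples. Buying 2 more gives 3 + 2 = 5.",
    "5",
)]
question = "A shelf holds 4 books and 3 more are added. How many books are on it?"
print(build_few_shot_cot_prompt(demos, question))
print(build_zero_shot_cot_prompt(question))
```

Ending each demonstration with a fixed phrase such as "The answer is ..." is a design choice that makes the final answer easy to extract from the model output later.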

Self-Consistency is a common way to improve CoT stability

```python
from collections import Counter

def vote_answers(candidates):
    # Apply majority voting over the final answers from multiple reasoning branches
    final = Counter(candidates).most_common(1)[0][0]
    return final

answers = ["42", "41", "42"]
print(vote_answers(answers))
```

This code captures the core idea behind self-consistency: do not trust a single reasoning path; trust the shared conclusion across the majority of paths.

The implementation pattern in the original note is also typical: raise the sampling temperature to generate multiple reasoning trajectories, extract the final answer from each trajectory, and vote across them. This reduces the risk that a single CoT sample produces an answer that looks plausible but is actually wrong, which is why it has become a widely used technique in reasoning enhancement.
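The extract-then-vote pattern can be sketched end to end without calling a model. In the sketch below, the sampled trajectories are hard-coded, hypothetical strings standing in for model outputs generated at a temperature above zero, and the "The answer is ..." convention is an assumption about how the prompt asks the model to finish.

```python
import re
from collections import Counter

def extract_final_answer(trajectory):
    """Return the number after 'The answer is', or None if the pattern is absent."""
    match = re.search(r"The answer is\s*(-?\d+(?:\.\d+)?)", trajectory)
    return match.group(1) if match else None

def self_consistency(trajectories):
    """Majority-vote over the final answers extracted from sampled trajectories."""
    answers = [extract_final_answer(t) for t in trajectories]
    answers = [a for a in answers if a is not None]  # drop unparseable samples
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]

# Hypothetical sampled outputs; in practice they would come from a model
# called several times with temperature > 0
sampled = [
    "6 * 7 = 42. The answer is 42.",
    "6 * 7 = 41. The answer is 41.",
    "Seven sixes are 42. The answer is 42.",
    "I am not sure.",
]
print(self_consistency(sampled))
```

Trajectories without a parseable answer are discarded before voting, so one malformed sample cannot win the vote. When two answers tie, `Counter.most_common` returns the one seen first, which is worth handling explicitly in a production setting.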

Llama 3.1 demonstrated that the standard Transformer still has significant headroom

The significance of Llama 3.1 is that it does not rely on a radically new architecture. Instead, it pushes the standard dense Transformer much further through improvements in data, training, and post-training. Its training corpus reaches roughly 15T tokens, and combined with grouped-query attention (GQA), rotary position embeddings (RoPE), and multi-stage alignment, it forms a complete industrial-grade open model pipeline.

Compared with earlier open models, Llama 3.1 places more emphasis on long context, multilingual capability, tool use, and alignment stability. The 405B version is already competitive with leading closed-source models on multiple benchmarks, which meaningfully challenges the old assumption that open models can only follow behind.
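One reason GQA matters for long context is the size of the key-value cache at inference time. The back-of-the-envelope sketch below compares standard multi-head attention with GQA. The layer count, head counts, and head dimension are assumed values that roughly match the figures reported for the 405B model, and the cache is assumed to store 2-byte (16-bit) values; treat all of them as illustrative rather than authoritative.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Size of the key and value caches for one sequence."""
    # The factor 2 covers keys plus values
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value

# Assumed configuration, roughly matching the figures reported for the 405B model
N_LAYERS, N_QUERY_HEADS, N_KV_HEADS, HEAD_DIM = 126, 128, 8, 128
SEQ_LEN = 128 * 1024  # 128K-token context

mha = kv_cache_bytes(N_LAYERS, N_QUERY_HEADS, HEAD_DIM, SEQ_LEN)  # one KV head per query head
gqa = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, SEQ_LEN)     # KV heads shared by groups
print(f"MHA: {mha / 2**30:.0f} GiB, GQA: {gqa / 2**30:.0f} GiB, ratio: {mha // gqa}x")
```

Under these assumed numbers, sharing each key-value head across 16 query heads cuts the per-sequence cache from about 1008 GiB to about 63 GiB, which is the kind of saving that makes a 128K context practical to serve.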

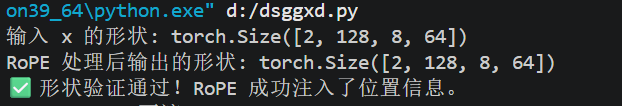

RoPE is a key foundation for context extension in Llama 3.1

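```python
import torch

def precompute_theta_pos_frequencies(dim, seq_len, theta=10000.0):
    # Even dimension indices 0, 2, 4, ...: one frequency per rotated pair
    idx = torch.arange(0, dim, 2).float()
    # Compute the rotation frequency associated with each pair of dimensions
    freqs = 1.0 / (theta ** (idx / dim))
    # Positions as floats so the outer product stays in floating point
    pos = torch.arange(seq_len).float()
    # Entry [p, i] is the rotation angle for position p and frequency i
    return torch.outer(pos, freqs)
```

This code shows the precomputation logic for the RoPE frequency matrix, which is a critical prerequisite for injecting positional information into attention heads.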

The advantage of RoPE is that it encodes absolute position as a rotation of the query and key vectors, so their dot product depends only on the relative offset between the two positions. Relative positional information therefore enters the attention computation naturally, which makes RoPE better suited for long-sequence scaling. This is one of the key reasons Llama 3.1 can stably support a 128K context window.
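The relative-position property can be checked numerically. The sketch below is a pure-Python illustration with no batching or heads: it rotates adjacent pairs of dimensions and compares attention scores for two pairs of positions with the same offset. The vectors and the helper names `rope_rotate` and `attention_score` are hypothetical, and the pairing of adjacent dimensions is one common convention, assumed here.

```python
import math

def rope_rotate(vec, pos, theta=10000.0):
    """Rotate each adjacent pair of dimensions by an angle of pos * frequency."""
    dim = len(vec)
    out = []
    for i in range(0, dim, 2):
        angle = pos * theta ** (-i / dim)  # same frequencies as 1 / theta ** (idx / dim)
        cos_a, sin_a = math.cos(angle), math.sin(angle)
        x, y = vec[i], vec[i + 1]
        out.extend([x * cos_a - y * sin_a, x * sin_a + y * cos_a])
    return out

def attention_score(q, k, q_pos, k_pos):
    """Dot product of a rotated query and a rotated key."""
    q_rot, k_rot = rope_rotate(q, q_pos), rope_rotate(k, k_pos)
    return sum(a * b for a, b in zip(q_rot, k_rot))

# Hypothetical 4-dimensional query and key vectors
q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.9]

# Both pairs of positions are 2 apart, so the scores should agree up to rounding
print(attention_score(q, k, q_pos=3, k_pos=1))
print(attention_score(q, k, q_pos=10, k_pos=8))
```

Because shifting both positions by the same amount leaves the score unchanged, the attention pattern depends on how far apart two tokens are rather than on where they sit in the sequence.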

AI Visual Insight: This diagram illustrates the technical stack behind Llama 3.1, highlighting how the base Transformer, positional encoding, long-context support, and post-training alignment pipeline fit together. It serves as a useful overview for understanding how RoPE, GQA, and post-training modules work in concert.


DPO is replacing complex RLHF as a lighter alignment method

In the post-training pipeline of Llama 3.1, DPO is a critical step. Unlike PPO, it does not explicitly build an online reinforcement learning loop. Instead, it directly optimizes the model using preference pair data, which makes training more stable, lowers implementation cost, and improves engineering reproducibility.

The core data structure in DPO is very simple: for the same prompt, provide a better response as chosen and a worse response as rejected. The model does not learn an “absolutely correct answer.” It learns a preference ranking relation, which makes it a natural fit for human-feedback scenarios.

A minimal DPO sample explains the idea clearly

```python
dpo_sample = {
    "instruction": "Please explain what a stack overflow is",
    # The preferred answer is more accurate and more complete
    "chosen": "A stack overflow occurs when a program's call stack grows beyond its allocated size, typically because of unbounded recursion.",
    "rejected": "It just means the memory ran out.",
}

print(dpo_sample)
```

This snippet shows the most important training relation in DPO: a preferred and dispreferred answer pair under the same instruction.
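How a preference pair turns into a training signal can be sketched with the DPO loss for a single pair. The version below works on precomputed sequence log-probabilities from the policy and from a frozen reference model. The numbers are hypothetical, and `beta=0.1` is an assumed value chosen for illustration, not a setting taken from Llama 3.1.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one preference pair: -log(sigmoid(beta * margin))."""
    # How much more the policy favors each response than the frozen reference does
    chosen_gain = policy_chosen_logp - ref_chosen_logp
    rejected_gain = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_gain - rejected_gain)
    # Numerically stable -log(sigmoid(margin))
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical sequence log-probabilities, for illustration only
print(dpo_loss(-12.0, -15.0, -13.0, -14.0))  # policy prefers "chosen" more than the reference
print(dpo_loss(-13.0, -14.0, -13.0, -14.0))  # policy equals the reference: loss is log(2)
```

The loss falls only when the policy raises the chosen response relative to the rejected one by more than the reference model does, which is how DPO learns a ranking without an explicit reward model or an online reinforcement learning loop.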

These three papers together form the backbone of modern LLM engineering

Viewed in the same coordinate system, InstructGPT solves “how to make models more obedient,” CoT solves “how to make models think better,” and Llama 3.1 solves “how to make models stronger and reproducible in open-source form.” These are not parallel concepts. They are a continuous chain of evolution.

Most of today's LLM systems are built along this path: start with high-quality pretraining, apply preference alignment, then enhance reasoning through prompt engineering, self-consistency, retrieval, or tool use. If you understand these three papers, you have essentially grasped the foundational map of modern LLM engineering.

FAQ answers the most common implementation questions

Q1: What is the essential difference between the alignment methods in InstructGPT and Llama 3.1?

A: InstructGPT represents the classic RLHF pipeline, which depends on SFT, a reward model, and PPO. Llama 3.1 places more emphasis on lighter preference-optimization methods such as DPO, resulting in a shorter training pipeline and lower engineering cost.

Q2: Why does CoT sometimes make answers longer without making them more accurate?

A: Because a chain of thought is not the same as genuine reasoning. A model may generate steps that look structurally reasonable while still making logical errors in the middle. That is why Self-Consistency, tool use, or external verification mechanisms are often needed.

Q3: If Llama 3.1 is already strong, why is RAG still necessary?

A: No matter how strong a model is, it is still limited by its knowledge cutoff. RAG supplements real-time, private, or domain-specific knowledge and addresses the fact that parametric memory cannot be updated quickly enough. This is especially valuable for enterprise knowledge bases and question answering over the latest information.

Core summary captures the modern LLM pattern

This article reconstructs three critical technical threads (InstructGPT, Chain of Thought, and Llama 3.1) and focuses on RLHF, Self-Consistency, RoPE, and DPO to help developers quickly understand the core modern LLM paradigm of “pretraining → alignment optimization → reasoning activation.”