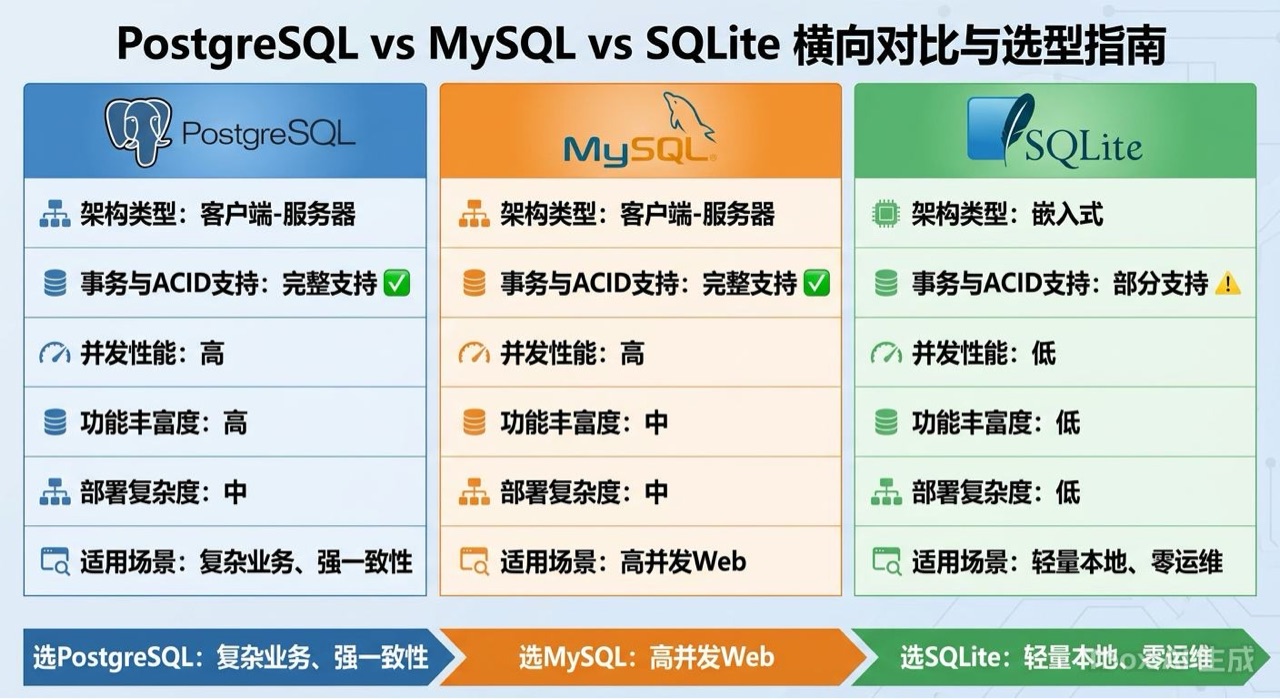

This article focuses on how to choose between PostgreSQL, MySQL, and SQLite. It breaks down the key differences in complex queries, JSON support, concurrent writes, and operational overhead to help developers make scenario-based decisions. Keywords: database selection, PostgreSQL, SQLite.

The technical specification snapshot highlights the trade-offs

| Dimension | PostgreSQL | MySQL | SQLite |

|---|---|---|---|

| Implementation Language | C | C/C++ | C |

| Access Protocol | TCP/IP | TCP/IP | Local file / embedded |

| GitHub Stars | N/A | N/A | N/A |

| Core Strengths in the Stack | JSONB, GIN, extension ecosystem | InnoDB, replication pipeline, cloud services | Single-file storage, WAL, sqlite3 |

| Typical Advantages | Strong complex queries, rich data types | Mature operations, strong cloud support | Zero configuration, minimal deployment |

| Typical Limitations | Slightly steeper learning curve | Average expressiveness for advanced queries | Weak high-concurrency write performance |

The core difference between these three databases is not popularity but workload model

The easiest mistake in database selection is treating “most common” as “most suitable.” SQLite works well for single-machine tools, local caches, and test environments. MySQL fits mature enterprise systems and standardized operations. PostgreSQL is better suited for complex analytics, JSON-heavy workloads, and rapidly evolving business models.

AI Visual Insight: The image presents a visual cover for comparing three database selection themes, emphasizing the side-by-side relationship between PostgreSQL, MySQL, and SQLite. It works well as a conceptual lead-in for relational database selection discussions rather than as a concrete architecture diagram.

AI Visual Insight: The image presents a visual cover for comparing three database selection themes, emphasizing the side-by-side relationship between PostgreSQL, MySQL, and SQLite. It works well as a conceptual lead-in for relational database selection discussions rather than as a concrete architecture diagram.

You can start with this decision table

| Scenario | Recommended Database | Core Reason |

|---|---|---|

| Local scripts, desktop tools, test environments | SQLite | Zero configuration, embedded, easy migration |

| Small to medium-sized web applications, fast-changing data models | PostgreSQL | Strong SQL capabilities, excellent JSONB, rich extensions |

| Traditional business systems, read/write splitting, managed cloud deployments | MySQL | Mature ecosystem, stable replication, abundant operations resources |

-- PostgreSQL: Suitable for complex aggregation and conditional statistics

SELECT category_id,

COUNT(DISTINCT user_id) AS unique_users,

AVG(view_count) AS avg_views_before_purchase

FROM (

SELECT user_id,

category_id,

COUNT(*) FILTER (WHERE action = 'view') AS view_count, -- Count page views

COUNT(*) FILTER (WHERE action = 'purchase') AS purchase_count -- Count purchases

FROM user_behavior_logs

WHERE created_at >= NOW() - INTERVAL '30 days' -- Limit to the last 30 days

GROUP BY user_id, category_id

HAVING COUNT(*) FILTER (WHERE action = 'purchase') > 0 -- Keep only users with purchases

) AS user_stats

GROUP BY category_id;This SQL example shows PostgreSQL’s expressive advantage in complex aggregations and conditional filtering.

PostgreSQL usually performs better for complex queries and semi-structured data

Real-world cases show that on behavior logs with 80 million rows, aggregation queries in MySQL can take several minutes. PostgreSQL, by contrast, can reduce similar queries to seconds or tens of seconds by using FILTER, parallel query execution, and more aggressive aggregation strategies.

The key advantage is not just cleaner syntax. PostgreSQL’s optimizer, execution plans, and indexing system are generally better suited for hybrid analytical and OLTP workloads. When your application involves log analysis, reporting, and multi-field filtering, PostgreSQL is more likely to deliver stable performance.

JSONB is one of PostgreSQL’s most decisive advantages

When schemas change frequently—such as user profiles, behavioral metadata, or fund holding details—JSONB provides much more practical engineering value than plain text-based JSON. It supports GIN indexes, containment queries, and path operations, combining flexible schema design with indexable queries.

-- PostgreSQL: JSONB queries combined with indexing

CREATE INDEX idx_extra_data_gin

ON user_behavior_logs USING GIN (extra_data); -- Create a GIN index for JSONB

SELECT *

FROM user_behavior_logs

WHERE extra_data @> '{"source": "mobile_app"}'::jsonb -- Query rows where source is mobile_app

AND (extra_data->>'version')::float > 2.0; -- Filter after casting the version to floatThis example shows that PostgreSQL can efficiently filter JSONB data directly while allowing indexes to participate in execution.

SQLite delivers value through extremely low operational cost, not high concurrency

SQLite is often misunderstood as a “toy database,” but it is extremely powerful in single-machine applications. The file itself is the database. You do not need a server process, an account system, or deployment prerequisites. It is highly practical for Python tools, Electron desktop apps, and mobile offline storage.

If your application matches these conditions—single machine, read-heavy, write-light, limited data volume, and complex deployment constraints—SQLite is often the lowest-cost correct choice.

SQLite fits a local data layer that you can ship quickly

import sqlite3

conn = sqlite3.connect('my_tool.db') # Connect to the local file database

conn.execute('CREATE TABLE IF NOT EXISTS tasks (id INTEGER PRIMARY KEY, title TEXT)') # Initialize the table

conn.execute('INSERT INTO tasks (title) VALUES (?)', ('完成报告',)) # Insert one task

rows = conn.execute('SELECT * FROM tasks').fetchall() # Query the task list

print(rows)

conn.commit() # Commit the transaction

conn.close() # Close the connectionThis code demonstrates the minimum viable practice of using SQLite as the database for a local tool.

But its boundaries are also very clear. Write locks apply at the database file level. Although WAL mode can improve the read/write concurrency experience, SQLite is still not suitable for high-frequency concurrent writes. If more than 10 threads keep writing continuously, database is locked is often only the beginning.

-- SQLite: Mitigate concurrency issues with WAL

PRAGMA journal_mode=WAL; -- Enable WAL journal mode

PRAGMA synchronous=NORMAL; -- Reduce sync level to improve performance

PRAGMA busy_timeout=5000; -- Wait 5 seconds when a lock conflict occursThis configuration can reduce lock contention in SQLite, but it does not change the upper limit of its write concurrency.

MySQL’s strength comes from its mature ecosystem, not from leading every category

MySQL remains a long-standing choice in enterprise systems. The reason is straightforward: it has the broadest cloud vendor support, the most documentation for primary-replica replication, mature tooling for backup, monitoring, and failover, and lower hiring and handoff costs for teams.

If your workload is centered on standard CRUD, orders, users, permissions, and admin backends—and you also care about read/write splitting, disaster recovery, and managed services—MySQL is still a stable solution.

MySQL looks more like a standardized component with a complete engineering system

CREATE TABLE users (

id BIGINT UNSIGNED AUTO_INCREMENT PRIMARY KEY,

username VARCHAR(50) NOT NULL,

email VARCHAR(100) NOT NULL,

status TINYINT DEFAULT 1,

created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP,

UNIQUE KEY uk_username (username), -- Unique constraint for username

UNIQUE KEY uk_email (email), -- Unique constraint for email

INDEX idx_status_created (status, created_at) -- Composite index for filtering and sorting

) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4;This CREATE TABLE statement reflects MySQL’s stable design pattern for typical business tables.

But MySQL also has clear limitations. It is weaker than PostgreSQL in complex aggregations, array types, native full-text capabilities, and custom data types. In older projects, teams also frequently run into legacy issues such as utf8mb3, online DDL locks on large tables, and awkward JSON index expressions.

A practical database selection framework should revolve around four questions

First, determine whether the data model is stable

If your schema changes frequently, or you need to combine structured and semi-structured data, prioritize PostgreSQL. If your model is stable and based mostly on standard transactional tables, MySQL is easier to integrate into enterprise systems. If you only need lightweight local storage, SQLite is enough.

Second, determine whether concurrent writes will keep growing

If write concurrency is very low, SQLite works directly. For standard web applications, both PostgreSQL and MySQL can handle the workload. If you need mature primary-replica replication, read/write splitting, and managed cloud hosting, MySQL usually has less implementation friction.

# Common MySQL operations commands

mysqldump -u root -p mydb > backup.sql # Create a logical backup of the database

pt-query-digest /var/log/mysql/slow.log # Analyze the slow query log

mysqltuner # Quickly inspect instance configurationThese commands show MySQL’s clear advantage in the operations tooling ecosystem.

Third, determine whether queries include complex aggregation and analysis

As soon as your application starts to require reports, behavioral analysis, conditional statistics, JSON search, and array filtering, PostgreSQL offers a higher ceiling. If your workload remains focused on CRUD and index-friendly lookups, MySQL is usually enough.

Fourth, determine whether your team is stronger in development or operations

If your team is technically strong and willing to use more advanced SQL and extensions, choose PostgreSQL. If your team values standard processes, stable replication, and cloud-platform compatibility, choose MySQL. If your priority is standalone distribution, embedded deployment, or testing, choose SQLite.

The final recommendation should be based on scenarios, not ideology

For personal projects and local tools, prefer SQLite. For new products and small to medium teams, PostgreSQL is the default recommendation. For traditional enterprise systems, evaluate MySQL first. If you need complex analytics, JSONB, partitioning, and extension support, lean toward PostgreSQL.

In one sentence: SQLite wins on simplicity, MySQL wins on stability, and PostgreSQL wins on capability.

FAQ

Q1: Which database should I choose by default for a new project?

A: If you do not have strong operations constraints, PostgreSQL should be your default choice. It is less likely to become a regret later because it performs well in complex queries, JSONB support, extensibility, and long-term evolution.

Q2: Can SQLite be used in production?

A: Yes, but only when the workload is single-machine, read-heavy, write-light, and low-concurrency. It is a strong fit for desktop tools, mobile offline data, local caches, and test systems.

Q3: How should I choose between MySQL and PostgreSQL?

A: If you care more about mature operations, primary-replica replication, managed cloud hosting, and broad team familiarity, choose MySQL. If you care more about query power, data types, JSONB, and analytical performance, choose PostgreSQL.

[AI Readability Summary] Based on real project experience, this article systematically compares PostgreSQL, MySQL, and SQLite across query performance, JSON support, concurrency, operations ecosystem, and ideal use cases. It also provides a practical database selection framework and working code examples.