Trae is an AI-native IDE built for collaborative programming with AI. Through a four-layer system architecture and three core software engines, it integrates code understanding, task planning, and tool invocation into a closed-loop development workflow, addressing the fragmented context and execution gaps common in traditional AI coding tools. Keywords: AI IDE, MCP, project context.

Technical Specifications Snapshot

| Parameter | Description |
|---|---|
| Project Name | Trae |
| Product Type | AI-Native Integrated Development Environment (AI IDE) |
| Core Foundation | Deeply customized VS Code |
| Core Protocol | MCP (Model Context Protocol) |
| Typical Models | Doubao, DeepSeek, Claude |
| Multimodal Input | Natural language, images, voice |
| Core Dependencies | Code indexing, AST semantic analysis, tool invocation, terminal execution |
| GitHub Stars | Not provided in the source |
| Use Cases | Code generation, refactoring, debugging, cross-tool collaborative development |

Trae Rebuilds the IDE for AI Instead of Simply Adding AI to an Editor

Trae’s key difference is not that it merely connects to a large language model. Its real innovation is that it places AI directly into the IDE’s primary execution path. Traditional AI plugins usually offer code completion or answer isolated questions, while Trae aims to understand the entire project, break down tasks, and directly operate on the codebase.

This design targets two core pain points: the lack of project-level context and the inability of AI to complete the full loop from suggestion to execution. By separating system layers from the execution layer, Trae elevates AI from an “assistant” to a collaborative development actor.

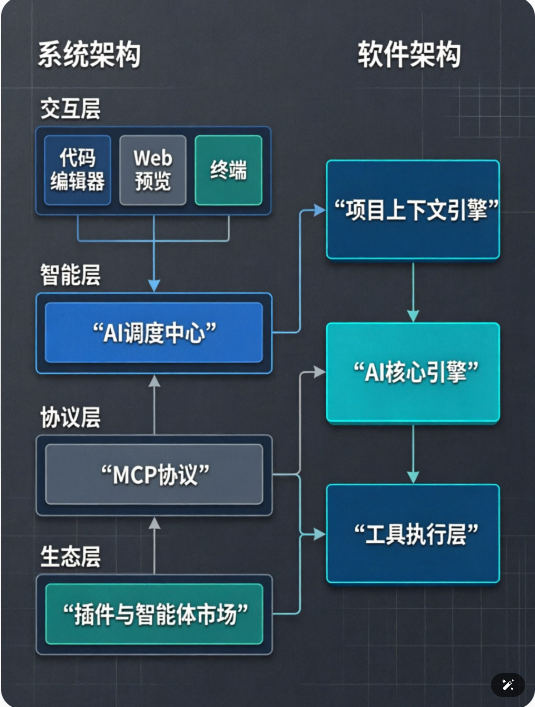

AI Visual Insight: The diagram illustrates Trae’s overall architectural layering, typically presented from top to bottom to show how interaction, intelligence, protocol, and ecosystem capabilities flow through the system. It highlights how multimodal inputs enter the model orchestration center, connect to external tools and data sources through MCP, and ultimately extend into the plugin and agent ecosystem, forming a closed-loop path of understanding, decision-making, execution, and extensibility.

The Four-Layer System Architecture Forms a Progressive Capability Chain

Trae’s high-level system architecture can be summarized in four layers: the interaction layer, the intelligence layer, the protocol layer, and the ecosystem layer. Each layer has a clearly defined role, with the goal of making AI capabilities both composable and extensible.

The interaction layer faces developers directly. It accepts natural language, screenshots, voice, and other inputs, then returns results through the code editor, terminal, and web preview. The focus here is not the UI itself, but reducing the barrier to expression so that requirements can be directly understood by machines.

The Intelligence Layer Handles Unified Understanding and Orchestration

The intelligence layer is Trae’s brain. It performs three jobs: parsing intent, planning steps, and selecting the right model. In other words, when a user says, “Help me refactor the login module into a plugin-based structure,” the system does not simply generate a code snippet. It first evaluates task scope, then decides which model to call, which files to read, and whether it needs to run commands.

```python
class TaskPlanner:
    def plan(self, user_request, project_context):
        intent = self.parse_intent(user_request)    # Parse the user's actual development intent
        scope = self.scan_context(project_context)  # Scan project scope and dependencies
        steps = self.build_steps(intent, scope)     # Generate an executable task tree
        return steps
```

This code shows how the intelligence layer converts a natural-language request into a structured execution plan. The helper methods `parse_intent`, `scan_context`, and `build_steps` are not defined here, so the snippet is illustrative rather than runnable.
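The planning flow can also be written as a small runnable sketch. Everything here is an illustrative assumption rather than Trae's actual implementation: the `Step` dataclass, the keyword-based intent matching (a stand-in for a model call), the path-matching scope scan, and the step type names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One unit of work in a plan (hypothetical structure)."""
    type: str    # e.g. "file_read", "file_write", "command"
    target: str  # file path or command to run


class SimpleTaskPlanner:
    """Toy planner: keyword matching stands in for model-based intent parsing."""

    INTENT_KEYWORDS = {"refactor": "refactor", "fix": "debug", "add": "generate"}

    def parse_intent(self, user_request: str) -> str:
        lowered = user_request.lower()
        for keyword, intent in self.INTENT_KEYWORDS.items():
            if keyword in lowered:
                return intent
        return "generate"

    def scan_context(self, project_files: list[str], user_request: str) -> list[str]:
        # Keep only files whose path mentions a longer word from the request.
        words = {w.strip(".,").lower() for w in user_request.split() if len(w) > 3}
        return [f for f in project_files if any(w in f.lower() for w in words)]

    def build_steps(self, intent: str, scope: list[str]) -> list[Step]:
        steps = [Step("file_read", path) for path in scope]
        if intent in ("refactor", "debug"):
            steps += [Step("file_write", path) for path in scope]
            steps.append(Step("command", "pytest"))  # re-run tests after edits
        return steps

    def plan(self, user_request: str, project_files: list[str]) -> list[Step]:
        intent = self.parse_intent(user_request)
        scope = self.scan_context(project_files, user_request)
        return self.build_steps(intent, scope)


planner = SimpleTaskPlanner()
plan = planner.plan(
    "Refactor the login module into a plugin-based structure",
    ["src/login.py", "src/billing.py", "README.md"],
)
for step in plan:
    print(step.type, step.target)
```

With the sample inputs, the plan reads the login file, rewrites it, and then schedules a test run. A production planner would presumably replace the keyword table and path matching with model calls and a semantic index.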

The Protocol Layer Defines Trae’s External Extension Ceiling

The protocol layer centers on MCP. You can think of it as a universal connection protocol for the AI world, standardizing interactions between models, tools, data, and services. Databases, Figma, API documentation, and scripting environments can all expose capabilities through MCP.

This means Trae does not need hardcoded integration logic for every tool. Instead, it enables pluggable capabilities through protocol abstraction. From an AI engineering perspective, this is one of the most reusable parts of Trae’s architecture: it separates model capabilities from tool capabilities.
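To illustrate what protocol abstraction looks like on the wire, the sketch below builds and dispatches MCP-style messages. MCP uses JSON-RPC 2.0 as its message envelope, and `tools/list` and `tools/call` are method names from the MCP specification. The tool names (`query_db`, `read_doc`) and the in-process `handle_request` function are hypothetical. A real MCP server runs over a transport such as stdio or HTTP, performs an initialization handshake, and returns richer tool metadata than shown here.

```python
import json
from typing import Any, Callable

# Hypothetical tools; a real MCP server would wrap a database, a design tool, etc.
TOOLS: dict[str, Callable[..., str]] = {
    "query_db": lambda sql: f"rows for: {sql}",
    "read_doc": lambda path: f"contents of {path}",
}


def make_request(request_id: int, method: str, params: dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}
    )


def handle_request(raw: str) -> str:
    """Toy in-process server that dispatches tools/list and tools/call."""
    request = json.loads(raw)
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": [{"name": name} for name in TOOLS]}
    elif method == "tools/call":
        output = TOOLS[params["name"]](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": output}]}
    else:
        # -32601 is the standard JSON-RPC "Method not found" error code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


listing = json.loads(handle_request(make_request(1, "tools/list", {})))
call = json.loads(
    handle_request(
        make_request(2, "tools/call", {"name": "query_db", "arguments": {"sql": "SELECT 1"}})
    )
)
print(listing["result"]["tools"])
print(call["result"]["content"][0]["text"])
```

The point of the design is that the client side only needs `make_request`. Adding a new capability means registering another tool on the server, with no change to the caller.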

The Ecosystem Layer Turns Experience Into Reusable Capabilities

The ecosystem layer captures long-term value. The plugin marketplace supports functional extension, while the Agent/Skill marketplace supports scenario reuse. Teams can package high-frequency development workflows into intelligent agents, such as a frontend page generator, a database migration assistant, or an API integration agent.

As a result, development experience no longer lives only in documentation or personal memory. It becomes callable, reusable, and shareable AI capability units.

The Three Core Software Engines Give Trae Engineering-Grade Execution

If the system architecture explains Trae’s layered design, the software architecture explains why it can be implemented effectively in real engineering workflows. Its core consists of the project context engine, the AI core engine, and the tool execution layer.

The Project Context Engine Builds a Full-Project Cognitive Model

This is the foundation that sets Trae apart from single-turn conversational coding tools. It continuously scans the repository and maintains file nodes, function symbols, class relationships, dependency chains, and type information to form a project knowledge graph.

It does not just index text; it also analyzes AST structures and semantic relationships. As a result, the AI can identify which code calls a given function and which modules a class change will affect, which improves the accuracy of code completion, refactoring, and Q&A.

```python
class ContextIndexer:
    def build_graph(self, files):
        graph = {}
        for file in files:
            ast_info = parse_ast(file)           # Parse the code syntax tree
            symbols = extract_symbols(ast_info)  # Extract functions, classes, variables, and other symbols
            graph[file] = symbols
        return graph
```

This code shows how the context engine builds a project-level semantic index from source files. The helpers `parse_ast` and `extract_symbols` are placeholders that are not defined here.
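The "who calls this function" question can be answered for Python sources with the standard-library `ast` module. The sketch below is a minimal, assumed approach, not Trae's indexer: the sample files in `SOURCES` are invented, and only direct calls by simple name are tracked (no methods, imports, or aliasing).

```python
import ast
from collections import defaultdict

# Invented sample project: file name -> source text.
SOURCES = {
    "auth.py": (
        "def login(user):\n"
        "    return validate(user)\n"
        "\n"
        "def validate(user):\n"
        "    return bool(user)\n"
    ),
    "api.py": (
        "def handle(req):\n"
        "    return login(req)\n"
    ),
}


def build_call_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each called function name to the set of 'file:function' callers."""
    callers: dict[str, set[str]] = defaultdict(set)
    for filename, code in sources.items():
        tree = ast.parse(code, filename=filename)
        for func in ast.walk(tree):
            if not isinstance(func, ast.FunctionDef):
                continue
            for node in ast.walk(func):
                # Track only calls of the form name(...), not obj.method(...).
                if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                    callers[node.func.id].add(f"{filename}:{func.name}")
    return callers


graph = build_call_graph(SOURCES)
print(sorted(graph["login"]))     # callers of login
print(sorted(graph["validate"]))  # callers of validate
```

Inverting the edges this way (callee to callers) is what makes impact analysis cheap: looking up a function name immediately yields the code that a change to it could affect.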

The AI Core Engine Orchestrates the Path From Understanding to Execution

The AI core engine is the actual decision-making hub. It receives project information from the context engine, combines it with user goals, generates an execution plan, and triggers file reads and writes, command execution, or network requests.

The most important point here is not code generation itself, but action orchestration. When a task involves dependency installation, test execution, or error fixing, Trae can chain these actions into an automated workflow instead of stopping at the level of chat-based suggestions.
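A chained workflow of this kind can be sketched as an ordered list of commands that stops at the first failure. This is an assumed, simplified shape: the `StepResult` type and `run_workflow` function are hypothetical, and the commands are harmless stand-ins for real install and test invocations.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str
    ok: bool
    output: str


def run_workflow(steps: list[tuple[str, list[str]]]) -> list[StepResult]:
    """Run commands in order and stop at the first failing step."""
    results = []
    for name, argv in steps:
        proc = subprocess.run(argv, capture_output=True, text=True)
        ok = proc.returncode == 0
        results.append(StepResult(name, ok, (proc.stdout + proc.stderr).strip()))
        if not ok:
            break  # An agent could pass this output back to the model for a fix.
    return results


# Stand-in commands; a real workflow might run "pip install" and "pytest" instead.
py = sys.executable
workflow = [
    ("install", [py, "-c", "print('deps installed')"]),
    ("test", [py, "-c", "import sys; print('1 test failed'); sys.exit(1)"]),
    ("deploy", [py, "-c", "print('should not run')"]),
]
results = run_workflow(workflow)
for r in results:
    print(r.name, "ok" if r.ok else "failed", "-", r.output)
```

Here the simulated test step fails, so the deploy step never runs. The captured output of the failing step is the input an error-fixing loop would need.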

The Tool Execution Layer Lets AI Take Real Action

The tool execution layer is Trae’s hands and feet. It creates files, modifies code, runs terminal commands, and accesses online documentation or APIs. Without this layer, AI can only make suggestions. With it, AI can complete tasks in a true closed loop.

```python
def execute_plan(step):
    if step["type"] == "file_write":
        write_file(step["path"], step["content"])  # Write generated code to the target file
    elif step["type"] == "command":
        run_terminal(step["cmd"])  # Run install, test, or startup commands
    elif step["type"] == "fetch_api":
        call_api(step["url"])  # Access an external API or documentation source
    else:
        raise ValueError(f"Unknown step type: {step['type']}")  # Fail loudly on unsupported steps
```

This code demonstrates how the tool execution layer maps a task plan to concrete system operations. The helpers `write_file`, `run_terminal`, and `call_api` are placeholders that are not defined here.
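A runnable variant of this dispatch idea is sketched below using a handler table instead of an `if`/`elif` chain. It is an assumption-laden illustration: the `HANDLERS` table, the `argv` field, and the step shapes are hypothetical, and the network step is omitted so the example runs offline.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def write_file(step: dict) -> str:
    path = Path(step["path"])
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(step["content"], encoding="utf-8")
    return f"wrote {path.name}"


def run_command(step: dict) -> str:
    # An argv list (not a shell string) avoids shell-injection issues.
    proc = subprocess.run(step["argv"], capture_output=True, text=True, check=True)
    return proc.stdout.strip()


# Step type -> handler; supporting a new step type means adding one entry.
HANDLERS = {"file_write": write_file, "command": run_command}


def execute_step(step: dict) -> str:
    handler = HANDLERS.get(step["type"])
    if handler is None:
        raise ValueError(f"unknown step type: {step['type']}")
    return handler(step)


with tempfile.TemporaryDirectory() as workdir:
    script = Path(workdir) / "hello.py"
    plan = [
        {"type": "file_write", "path": str(script), "content": "print('hello from plan')\n"},
        {"type": "command", "argv": [sys.executable, str(script)]},
    ]
    outputs = [execute_step(step) for step in plan]

print(outputs)
```

The two-step plan writes a script and then runs it, which is the smallest version of the suggest-then-execute loop described above. Unknown step types raise an error rather than being silently skipped.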

Trae’s Architectural Value Lies in Creating a Complete Closed Loop

Trae’s real advancement is not that any single model is stronger. It is that the architecture connects input, understanding, planning, execution, and extension into one unified chain. The interaction layer handles expression, the intelligence layer handles decisions, the protocol layer handles connectivity, and the ecosystem layer handles capability accumulation. The three core engines then translate these capabilities into engineering execution.

For development teams, this architecture means AI is no longer limited to code completion. It begins to participate in project-level understanding, cross-tool collaboration, and automated delivery. It represents a broader shift in AI IDEs from plugin-style add-ons to native operating environments.

FAQ

What is the fundamental difference between Trae and traditional AI coding plugins?

Traditional plugins mainly provide local code completion or question answering, but they lack global project context and a closed execution loop. Trae rebuilds the IDE from the ground up, giving it project understanding, task planning, and tool invocation capabilities.

Why is MCP important in Trae’s architecture?

MCP is the standard protocol that connects models with external tools and data sources. It reduces integration cost and allows capabilities such as databases, design tools, and API services to be connected and reused through a unified interface.

What problems do the three core engines solve?

The project context engine solves the problem of incomplete project visibility. The AI core engine solves the problem of poor task planning. The tool execution layer solves the problem of AI being able to suggest actions but not carry them out.

Core Summary

This article reconstructs and analyzes Trae’s system and software architecture, focusing on its four-layer design, the MCP-based extension model, and how the three core engines (project context, AI core, and tool execution) create a closed development loop from understanding and planning to execution.